Why Securing Secrets in Cloud and Container Environments Is Important – and How to Do It

In containerized environments, secrets auditing tools make it possible to

recognize the presence of secrets within source code repositories, container

images, across CI/CD pipelines, and beyond. Deploying container services will

activate platform and orchestrator security measures that distribute, encrypt

and properly manage secrets. By default, secrets are secured in system

containers or services — and this protection suffices in most use cases.

However, for especially sensitive workloads — and Uber’s customer database

backend service is a strong example, as are any data encryption or standard

image scanning use cases — it’s not adequate to simply rely on conventional

secret store security and secret distribution. These sensitive use cases call

for more robust defense-in-depth protections. Within container environments,

defense-in-depth implementations leverage deep packet inspection (DPI) and

data leakage prevention (DLP) to enable secrets monitoring while they’re being

used. Any transmission of a secret via network packets can be recognized,

flagged and blocked if inappropriate. In this way, the most sensitive data can

be effectively secured throughout the full container lifecycle, and attacks

that could otherwise result in breach incidents can be thwarted due to this

additional layer of safeguards.
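
As a rough illustration of what a secrets auditing pass over source text might look like, here is a minimal Python sketch. The three patterns and the `find_secrets` helper are assumptions made for this example, not the rules of any particular tool; real scanners ship much larger rule sets and usually add entropy checks.

```python
import re

# Illustrative patterns only (assumed for this sketch, not taken from a real tool).
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "hardcoded_password": re.compile(r"(?i)\bpassword\s*[:=]\s*['\"][^'\"]{4,}['\"]"),
}


def find_secrets(text):
    """Return (line_number, rule_name) pairs for every line that matches a rule."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for rule_name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, rule_name))
    return findings


# Made-up sample input; the key below is a dummy value in the right shape.
sample = 'region = "us-east-1"\naws_key = "AKIAABCDEFGHIJKLMNOP"\npassword = "hunter2!"\n'
print(find_secrets(sample))
```

The same matching function could in principle be pointed at a decoded network payload instead of a file, which is roughly the idea behind flagging secrets in transit, although production DPI/DLP systems do considerably more than line-by-line pattern matching.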

Large-Scale Multilingual AI Models from Google, Facebook, and Microsoft

While Google's and Microsoft's models are designed to be fine-tuned for NLP

tasks such as question-answering, Facebook has focused on the problem of

neural machine translation (NMT). Again, these models are often trained on

publicly available data, consisting of "parallel" texts in two different

languages, and again the problem of low-resource languages is common. Most

models therefore train on data where one of the languages is English, and

although the resulting models can do a "zero-shot" translation between two

non-English languages, often the quality of such translations is sub-par. To

address this problem, Facebook's researchers first collected a dataset of

parallel texts by mining Common Crawl data for "sentences that could be

potential translations," mapping sentences into an embedding space using an

existing deep-learning model called LASER and finding pairs of sentences from

different languages with similar embedding values. The team trained a

Transformer model of 15.4B parameters on this data. The resulting model can

translate between 100 languages without "pivoting" through English, with

performance comparable to dedicated bilingual models.
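
The mining step described above, pairing sentences whose embeddings are close, can be sketched in a few lines of Python. This is only an assumption-laden toy: the three-dimensional vectors stand in for real sentence embeddings, plain cosine similarity with a fixed `threshold` is used as the closeness measure, and `mine_pairs` is a hypothetical helper rather than anything from Facebook's pipeline.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def mine_pairs(source, target, threshold=0.9):
    """For each source sentence, keep its best target match if similar enough."""
    pairs = []
    for src_text, src_vec in source.items():
        best_text, best_score = None, -1.0
        for tgt_text, tgt_vec in target.items():
            score = cosine_similarity(src_vec, tgt_vec)
            if score > best_score:
                best_text, best_score = tgt_text, score
        if best_score >= threshold:
            pairs.append((src_text, best_text))
    return pairs


# Toy vectors standing in for real multilingual sentence embeddings.
french = {"Bonjour le monde": [0.9, 0.1, 0.0], "Il pleut": [0.0, 0.2, 0.9]}
german = {"Hallo Welt": [0.88, 0.12, 0.05], "Guten Appetit": [0.1, 0.9, 0.1]}
print(mine_pairs(french, german))
```

Only the French and German greetings end up paired; "Il pleut" has no sufficiently close neighbour, so it is dropped rather than matched to a poor candidate.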

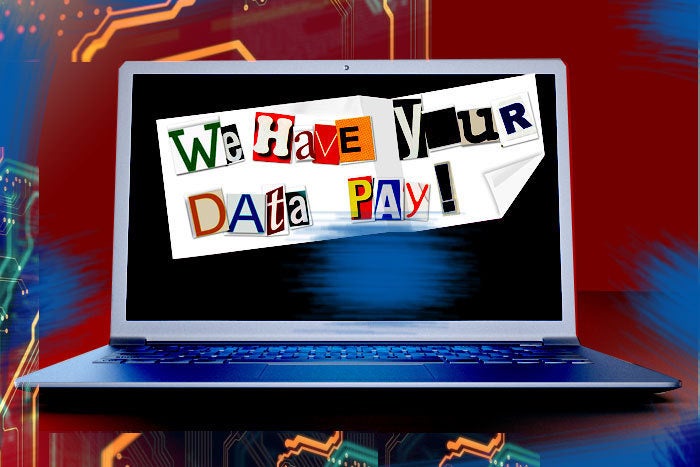

How to Prevent Pwned and Reused Passwords in Your Active Directory

There are many different types of dangerous passwords that can expose your

organization to tremendous risk. One way that cybercriminals compromise

environments is by making use of breached password data. This allows them to launch

password spraying attacks on your environment. Password spraying involves

trying only a few passwords against a large number of end-users. In a password

spraying attack, cybercriminals will often use databases of breached

passwords, a.k.a. pwned passwords, to effectively try these passwords against

user accounts in your environment. The philosophy here is that across many

different organizations, users tend to think in very similar ways when it

comes to creating passwords they can remember. Often passwords exposed in

other breaches will be passwords that other users are using in totally

different environments. This, of course, increases risk since any compromise

of the password will expose not a single account but multiple accounts if used

across different systems. Pwned passwords are dangerous and can expose your

organization to the risks of compromise, ransomware, and data breach threats.

What types of tools are available to help discover and mitigate these types of

password risks in your environment?
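
To make the idea of checking for pwned passwords concrete, the following Python sketch tests a candidate password against a set of breached-password hashes. It assumes the breached list is available locally as SHA-1 hashes; the three sample passwords and the helper names are invented for illustration, and nothing here talks to Active Directory.

```python
import hashlib


def sha1_hex(password):
    """Uppercase hex SHA-1 digest of a password string."""
    return hashlib.sha1(password.encode("utf-8")).hexdigest().upper()


def load_breached_hashes(passwords):
    """Build a lookup set of hashes (a stand-in for loading a real breach corpus)."""
    return {sha1_hex(p) for p in passwords}


def is_pwned(candidate, breached_hashes):
    """True if the candidate's hash appears in the breached set."""
    return sha1_hex(candidate) in breached_hashes


# Hypothetical breach list; a real one would contain many millions of entries.
breached = load_breached_hashes(["password", "123456", "Summer2020!"])
print(is_pwned("Summer2020!", breached))
print(is_pwned("correct horse battery staple", breached))
```

A check like this would typically run when a user sets or changes a password, so that a known-breached choice can be rejected before it becomes a target for spraying.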

The practice of DevOps

Continuous integration (CI) is the process that aligns the code and build

phases in the DevOps pipeline. This is the process where new code is merged

with the existing structure, and engineers ensure that everything is working

fine. Developers who make frequent changes to the code push their updates to

the shared central code repository. The repository starts with a master

branch, which is a long-term stable branch. For every new feature, a new

branch is created, and the developer regularly (daily) commits their code to

this branch. After the development for a feature is complete, a pull request

is created to the release branch. Similarly, a pull request is created to the

master branch and the code is merged. We have seen slight variations to these

practices across organisations. Sometimes the developers maintain a fork, or

copy, of the central repository. This limits the merge issues to their own

fork and isolates the central repository from the risk of corruption.

Sometimes, the new branches don’t branch out from the feature branch but from

the release or master branch. Small and mid-size companies often use open

sites like GitHub for their code repository, while larger firms use Bitbucket

as their code repository, which is not free.
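
The feature-to-release-to-master flow described above can be modelled with a small Python toy. This is not how Git stores history; the `Repository` class, the branch names, and the commit messages are all hypothetical, and `merge` simply stands in for an approved pull request.

```python
class Repository:
    """Toy model of branches as ordered lists of commit messages."""

    def __init__(self):
        self.branches = {"master": ["initial commit"]}

    def create_branch(self, name, source):
        # A new branch starts with a copy of its source branch's history.
        self.branches[name] = list(self.branches[source])

    def commit(self, branch, message):
        self.branches[branch].append(message)

    def merge(self, source, target):
        """Stand-in for an approved pull request: copy missing commits to target."""
        for message in self.branches[source]:
            if message not in self.branches[target]:
                self.branches[target].append(message)


repo = Repository()
repo.create_branch("release", source="master")
repo.create_branch("feature/login", source="release")
repo.commit("feature/login", "add login form")
repo.commit("feature/login", "validate login input")
repo.merge("feature/login", "release")  # pull request into the release branch
repo.merge("release", "master")         # pull request into master
print(repo.branches["master"])
```

The variation in which feature branches are cut directly from master would only change the `source` argument of the second `create_branch` call.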

The 5 Biggest Cloud Computing Trends In 2021

Currently, the big public cloud providers – Amazon, Microsoft, Google, and so

on – take something of a walled garden approach to the services they provide.

And why not? Their business model has involved promoting their platforms as

one-stop-shops, covering all of an organization's cloud, data, and compute

requirements. In practice, however, industry is increasingly turning to hybrid

or multi-cloud environments (see below), with requirements for infrastructure

to be deployed across multiple models. ... As far as cloud goes, AI is a key

enabler of several ways in which we can expect technology to adapt to our

needs throughout 2021. Cloud-based as-a-service platforms enable users on just

about any budget and with any level of skill to access machine learning

functions such as image recognition tools, language processing, and

recommendation engines. Cloud will continue to allow these revolutionary

toolsets to become more widely deployed by enterprises of all sizes and in all

fields, leading to increased productivity and efficiency. ... Amazon most

recently joined the ranks of tech giants and startups offering their own

platform for cloud gaming. Just as with music and video streaming before it,

cloud gaming promises to revolutionize the way we consume entertainment media

by offering instant access to vast libraries of games that can be played for a

monthly subscription.

Quantum computers are coming. Get ready for them to change everything

The challenge lies in building quantum computers that contain enough qubits

for useful calculations to be carried out. Qubits are temperamental: they are

error-prone, hard to control, and always on the verge of falling out of their

quantum state. Typically, scientists have to encase quantum computers in

extremely cold, large-scale refrigerators, just to make sure that qubits

remain stable. That's impractical, to say the least. This is, in essence, why

quantum computing is still in its infancy. Most quantum computers currently

work with fewer than 100 qubits, and tech giants such as IBM and Google are

racing to increase that number in order to build a meaningful quantum computer

as early as possible. Recently, IBM ambitiously unveiled a roadmap to a

million-qubit system, and said that it expects a fault-tolerant quantum

computer to be an achievable goal during the next ten years. Although it's

early days for quantum computing, there is still plenty of interest from

businesses willing to experiment with what could prove to be a significant

development. "Multiple companies are conducting learning experiments to help

quantum computing move from the experimentation phase to commercial use at

scale," Ivan Ostojic, partner at consultant McKinsey, tells ZDNet.

How enterprise architects and software architects can better collaborate

After the EAs have developed the enterprise architecture map, they should

share these plans with software architects across each solution, application,

or system. After all, it’s important that the software architect who works

most closely with the solution shares their own insight and clarity with the

enterprise architect, who is more concerned with high-level architecture.

Software architects and EAs can collaborate and suggest changes or

improvements based on the existing architecture. Software architects can then

go in and map out the new architecture based on the business requirements.

Non-technical leaders can gain a better understanding and this can lead to

quicker alignment. Software architects can evaluate the quality of

architecture and share their learnings with the enterprise architect, who can

then incorporate findings into the enterprise architecture. Software

architects are also largely interested in standardization and they can help

enterprise architects scale that standardization across the business. Once the

EA has developed a full model or map, it’s easier to see where assets can be

reused. Software architects can recommend standardization and innovation and

weigh in on the EA’s suggestions of optimizing enterprise resources.

Dealing with Psychopaths and Narcissists during Agile Change

Many of the techniques or practices you use with healthy people do not work

well with psychopaths or narcissists. For example, if you are using the Scrum

framework, it is very risky to include a toxic person as part of a

retrospective meeting. Countless consultants also believe that coaching

works with most folks. However, the psychopathic person normally ends up

learning the coach’s tools and manipulating him or her for their own purpose.

This obviously aggravates the problem. ... From an organizational point of

view, these toxic people are excellent professionals, because they look like

they perform almost any task successfully. This helps a company to “tick off”

necessary accomplishments in the short-term to increase agile maturity,

managers to get their bonuses, and the psychopath to obtain greater prestige.

Obviously, these things are not sustainable, and what seems to be agility is

transformed in the medium term into fragility and loss of resilience. Agile

also requires—apart from good organizational health—execution with purpose and

visions and goals that involve feelings and inspire people to move forward.

Responsible technology: how can the tech industry become more ethical?

Three priorities right now should be crafting smart regulations, increasing the

diversity of thought in the tech industry (i.e. adding more social scientists),

and bridging the gulf between those that develop the technology and those that

are most impacted by its deployment. If we truly want to impact behavior, smart

regulations can be an effective tool. Right now, there is often a tension

between “being ethical” and being successful from a business perspective. In

particular, social media platforms typically rely on an ad-based business model

where the interests of advertisers can run counter to the interests of users.

Adding diverse thinkers to the tech industry is important because “tech” should

not be confined to technologists. What we are developing and deploying is

impacting people, which heightens the need to incorporate disciplines that

naturally understand human behavior. By bridging the gulf between the

individuals developing and deploying technology and those impacted by it, we can

better align our technology with our individual and societal needs. Facial

recognition is a prime example of a technology that is being deployed faster

than our ability to understand its impact on communities.

Enterprise architecture has only begun to tap the power of digital platforms

The problem that enterprises have been encountering, Ross says, is getting hung

up at stage three. “We observed massive failures in business transformations

that frankly were lasting six, eight, 10 years. It’s so hard because it’s an

exercise in reductionism, in tight focus,” she said. “We recommend that

companies zero in on their single most important data. This is the packaged data

you keep – the customer data, the supply chain data… this is the thing that

matters most. If they get this right, then things will take off.” The challenge

is now moving past this stage, as in the fourth stage, “we actually understand

now that what’s starting to happen is we can start to componentize our

business,” says Ross. She estimates that only about seven percent of companies

have reached this stage. “This is not just about plugging modules into this

platform, this is about recognizing that any product or process can be

decomposed into people, process and technology bundles. And we can assign

individual teams or even individuals accountability for one manageable piece

that that team can keep up to date, improve with new technology, and respond to

customer demand.”

Quote for the day:

"Let him who would be moved to convince others, be first moved to convince himself." -- Thomas Carlyle