WFH model disrupting network security business practices

“Social distancing measures that call for employees to work from home when possible have dramatically changed patterns of connection to enterprise networks,” said Rodney Joffe, chairman of NISC, and senior vice-president and fellow at Neustar. “More than 90% of an organisation’s employees typically connect to the network locally, with a slim minority relying on remote connectivity via a VPN, but that dynamic has flipped. The dramatic increase in VPN use has led to frequent connectivity issues, and, especially considering the disruption to usual security practices, it also creates significant risk, as it multiplies the potential impact of a distributed denial-of-service (DDoS) attack. VPNs are an easy vector for a DDoS attack.” An increase in the size of volumetric attacks on networks has been detected, with Neustar recently mitigating an attack measured at 1.17 terabits per second (Tbps) that required unique and diverse tactics to fend off. “In times like these,” continued Joffe, “an always-on managed DDoS protection service is critical.”

Third-party compliance risk could become a bigger problem

“Remote working has been hastily adopted by suppliers to keep their business running, so it’s unlikely every organization or employee is following best practices,” said Vidhya Balasubramanian, managing vice president in the Gartner Legal and Compliance practice. “Legal and compliance leaders are concerned about the new risks this highly disruptive environment has created for their organizations.” Bribery and corruption, privacy, fraud, and ethical conduct were each cited by 10% of respondents as the third-party risk that had increased the most. “Legal and compliance leaders need to act now to mitigate third-party risk while still enabling their supply chain partners to flex to the current pressures on the system,” said Ms. Balasubramanian. “This will likely mean managing the contractual risks and opportunities of current relationships, mitigating emerging issues, and streamlining due diligence for new third parties. ...”

To meet head-on the scale of the challenge organizations face in putting that response in place fast and at scale, there’s now an unanswerable case for standing up virtual assistants. They can help with two vital tasks. The first is providing automated answers to customers’ basic questions. That frees human staff to focus on more complex, higher-priority issues, which matters because Accenture research shows that, in a time of crisis, most customers prefer to turn to contact centers for answers to urgent and complex issues. The second essential role virtual assistants can play is giving call center workers faster, better access to the information they need to support customers. So what should organizations focus on to get virtual assistants up and running as fast as possible? There are two critical areas. The first is speed of implementation, which requires the relevant infrastructure, management systems and processes to be set up quickly. The second is making sure that virtual assistants are trained on accurate and relevant content.
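As a rough illustration of the first task, here is a deliberately simple triage sketch: questions that match a known FAQ entry get an automated answer, and everything else is routed to a human agent. The FAQ entries, the `triage` function and the keyword-matching approach are all hypothetical; a production virtual assistant would more likely rely on trained intent classification.

```python
import re
from typing import List, NamedTuple, Set, Tuple


class Reply(NamedTuple):
    handled_by: str  # "assistant" or "agent"
    text: str


# Hypothetical FAQ entries: a question counts as "basic" if it contains
# every keyword in one of these sets.
FAQ: List[Tuple[Set[str], str]] = [
    ({"reset", "password"}, "You can reset your password from the sign-in page."),
    ({"opening", "hours"}, "Our current opening hours are listed on our website."),
]


def tokenize(text: str) -> Set[str]:
    """Lower-case the text and return the set of alphanumeric words in it."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def triage(question: str) -> Reply:
    """Answer basic questions automatically; route everything else to a human agent."""
    words = tokenize(question)
    for keywords, answer in FAQ:
        if keywords <= words:  # all required keywords appear in the question
            return Reply("assistant", answer)
    # Anything the assistant can't match is treated as complex and escalated.
    return Reply("agent", question)


if __name__ == "__main__":
    print(triage("How do I reset my password?"))
    print(triage("My payment was taken twice during the outage"))
```

Escalating on a failed match, rather than guessing, is the design choice that keeps urgent and complex issues with people.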

How IoT changes banks and fintech companies

Advantages of IoT in finance: Before exploring and adopting IoT in fintech and banking, company managers need to recognize the benefits the technology offers. Here are the main reasons for IoT adoption in fintech. Customized client service: banks can use IoT to collect more information about their clients. After gathering real-time insights into customers’ needs and interests, organizations can deliver customized content and personalized experiences. As a result, companies can connect with their target audience in more ways and gain more benefits. Improved decision-making: IoT helps businesses obtain data for credit risk assessment. With D2D (device-to-device) communication protocols and sensor deployments, asset management firms can obtain relevant data from other sectors such as retail, agriculture, etc.

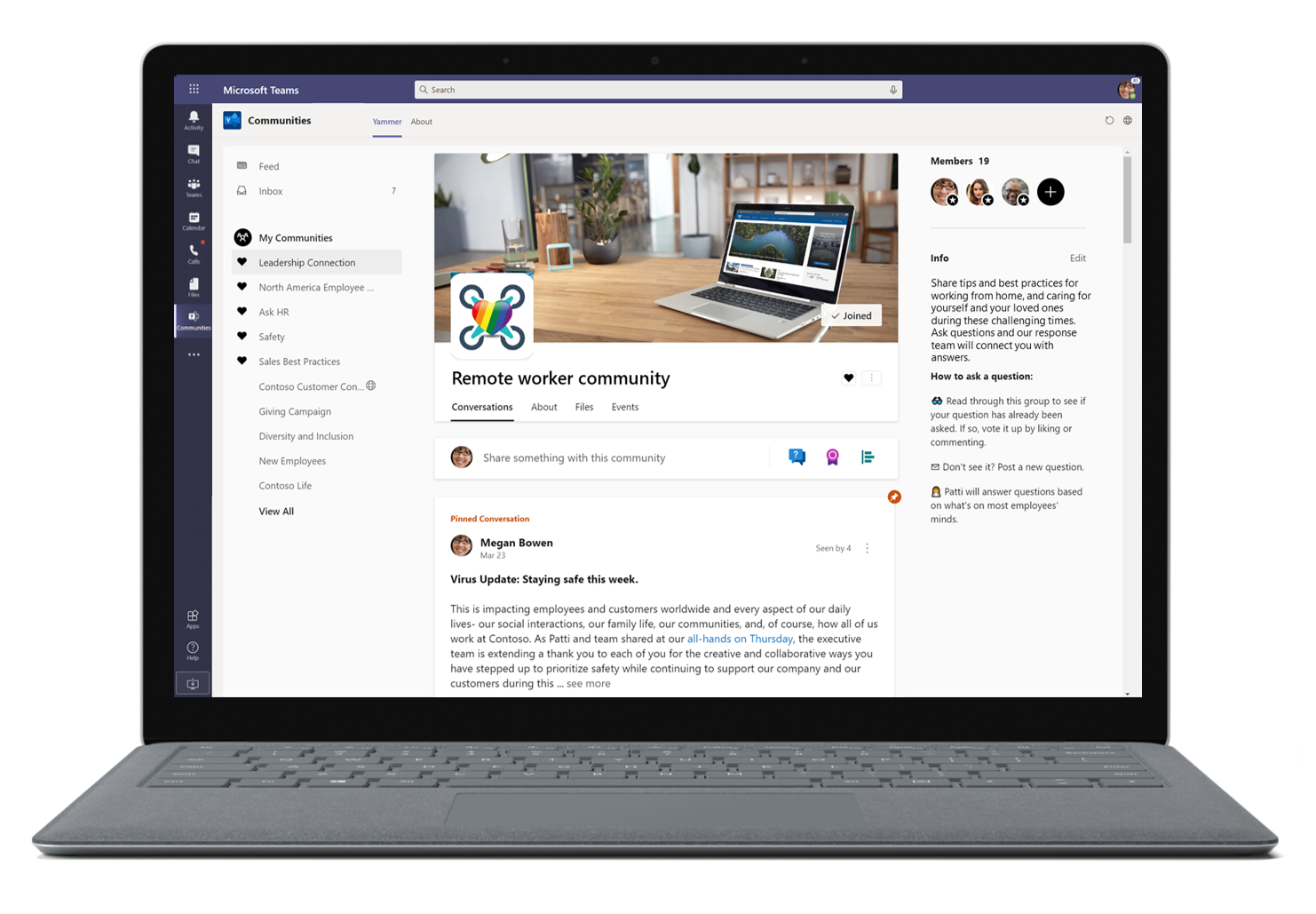

A GIF Image Could Have Let Hackers Hijack Microsoft Teams at Your Firm

Unfortunately, as the threat researchers at CyberArk explain, a flaw in both the desktop and web browser editions of Microsoft Teams could have been exploited by malicious hackers to read users' messages, send messages pretending to be from users, create groups, and control Teams accounts in a variety of ways. In fact, a single .GIF image sent to a Microsoft Teams user could have been enough to hijack multiple business accounts, traversing through an organisation like a worm. Users wouldn't even have to share the dangerous .GIF to be impacted. All that was needed was for other users to view the .GIF in Microsoft Teams; each view would steal another authentication token, dramatically increasing the attack's ability to spread through an organisation. ... Many businesses are currently struggling enough without the additional nightmare of cybercriminals stealing their sensitive corporate secrets and compromising their network. Fortunately, for the attack to succeed, hackers would first have needed to compromise a subdomain belonging to the targeted organisation on which to host the malicious image.

7 Habits of Highly Effective (Remote) SOCs

We're doing everything we can to make our shift to a remote SOC seamless for the team, but we're also being super cognizant of the quality of our work output. We use a quality control (QC) standard, Acceptable Quality Limits (AQL), to tell us how many alerts and incidents we should review each day. We then randomly select that number of alerts, investigations and incidents and review them using a check sheet. We send the results to the team using a Slack workflow. Reviewing the results with the team lets us know how we're doing and how we can adjust and improve. And no, we never expect perfection. This next one is a bit obvious, but it's worth stating: since we're no longer working alongside each other, effective communication is crucial. Working in an all-remote setup may also mean more distractions for some folks, not fewer. We're emphasizing empathy and constantly listening to learn what these distractions are for the team, and we've landed on the need to over-communicate.
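The daily sampling routine described above might look something like the following sketch. It assumes the sample size and acceptance number have already been looked up from an AQL sampling table (such as those in ANSI/ASQ Z1.4); the values in the example are placeholders, and the check-sheet fields and function names are hypothetical. The sketch only builds the summary text; posting it through a Slack workflow is not shown.

```python
import random
from typing import Callable, List, Optional, Sequence, TypeVar

T = TypeVar("T")


def draw_qc_sample(items: Sequence[T], sample_size: int,
                   seed: Optional[int] = None) -> List[T]:
    """Randomly choose items for check-sheet review, without replacement."""
    rng = random.Random(seed)
    return rng.sample(list(items), min(sample_size, len(items)))


def daily_qc_report(items: Sequence[T], sample_size: int, acceptance_number: int,
                    passes_check_sheet: Callable[[T], bool],
                    seed: Optional[int] = None) -> str:
    """Review a random sample and return a one-line summary for the team.

    sample_size and acceptance_number are assumed to come from an AQL
    sampling table; they are inputs here, not computed.
    """
    sample = draw_qc_sample(items, sample_size, seed)
    defects = sum(1 for item in sample if not passes_check_sheet(item))
    verdict = "within limit" if defects <= acceptance_number else "over limit"
    return (f"Reviewed {len(sample)} of {len(items)} items: "
            f"{defects} defect(s), {verdict} (accept up to {acceptance_number})")


if __name__ == "__main__":
    # Hypothetical alerts: every tenth one has incomplete investigation notes.
    alerts = [{"id": i, "notes_complete": i % 10 != 0, "disposition_correct": True}
              for i in range(200)]

    def check(alert: dict) -> bool:
        # Hypothetical check sheet: notes complete and disposition correct.
        return alert["notes_complete"] and alert["disposition_correct"]

    # Placeholder plan values, not taken from a real AQL table.
    print(daily_qc_report(alerts, sample_size=32, acceptance_number=2,
                          passes_check_sheet=check, seed=7))
```

Random selection matters here: reviewing only the alerts someone chose to look at would bias the quality estimate.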

CISOs: Quantifying cybersecurity for the board of directors

CISOs must reconsider their communication approach and perspective before a board or C-suite discussion. It’s crucial that they report cyber-risk in a language that the board and the rest of the C-suite can comprehend. It can be quite frustrating to explain advanced malware or technical controls to an audience that isn't versed in the technical details of cybersecurity. From a board member’s perspective, cyber-risk posture is viewed as a set of risk items with corresponding business impact and associated expense. The board wants to know where the enterprise is on the cyber risk spectrum, where it should be, and, if there’s a gap, how it’s going to close it. CISOs should focus on shifting the conversation from cybersecurity to cyber risk and provide concise, quantitative responses to the board’s questions without the use of overly technical terms or concepts. ... A CISO’s plan needs to be converted into an easily digestible, high-level list of small steps or initiatives, each with corresponding time frames, required resources and a dollar cost. Furthermore, given that the board will expect the CISO to drive and execute a plan, he or she must quantify all the responsible constituents involved.
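The article doesn't prescribe a quantification method, so the following is only a sketch under one common assumption: each risk item is expressed as an expected annual loss (estimated likelihood times business impact), and each initiative carries the time frame, resources and dollar cost the board expects to see. The class names, fields and figures are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class RiskItem:
    name: str
    annual_likelihood: float  # estimated chance the event happens in a year (0 to 1)
    business_impact: float    # estimated dollar loss if it does happen

    @property
    def expected_annual_loss(self) -> float:
        # Likelihood x impact: one common way to express a risk in dollars.
        return self.annual_likelihood * self.business_impact


@dataclass
class Initiative:
    name: str
    months: int      # time frame
    headcount: int   # required resources
    cost: float      # dollar cost


def board_summary(risks: List[RiskItem], target_exposure: float,
                  plan: List[Initiative]) -> Dict[str, float]:
    """Where we are, where we should be, the gap, and what closing it costs."""
    current = sum(r.expected_annual_loss for r in risks)
    return {
        "current_exposure": current,
        "target_exposure": target_exposure,
        "gap": max(0.0, current - target_exposure),
        "plan_cost": sum(i.cost for i in plan),
        # Assumes initiatives run in parallel, so the longest one sets the timeline.
        "plan_months": float(max((i.months for i in plan), default=0)),
    }


if __name__ == "__main__":
    # Illustrative figures only.
    risks = [
        RiskItem("Ransomware outage", 0.10, 5_000_000),
        RiskItem("Customer data breach", 0.05, 8_000_000),
    ]
    plan = [
        Initiative("Offline backups", months=3, headcount=2, cost=250_000),
        Initiative("MFA rollout", months=6, headcount=3, cost=400_000),
    ]
    for key, value in board_summary(risks, target_exposure=400_000, plan=plan).items():
        print(f"{key}: {value:,.0f}")
```

The output is a handful of dollar and month figures rather than technical detail, which is the register the board is asking for.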

AI startup: We've removed humans from business negotiations

Pactum's AI-based negotiation tool starts the process by interviewing the customer, recording all the required information surrounding the negotiation, and determining the value for each possible tradeoff in the contract for the customer. Pactum's team then builds the negotiation flows. When conducting the chat-based negotiation, the system gets to know the partner or supplier. "Besides the best-practice negotiation strategies, the system uses what it learned and all the available information to strike a win-win deal," explains Korjus, adding that although the system can operate in a fully autonomous mode, it can also be configured to loop in a human, depending on the customer's needs. By improving the way that suppliers are managed without human involvement, companies should see financial benefits, he argues: "Fortune Global 2000 companies have immense long tails of suppliers that go unmanaged because there are so many of them." The idea for an AI-based business negotiation tool was conceived by Pactum's second co-founder, Martin Rand.
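Pactum's internal model isn't described here, but the idea of determining the value of each possible tradeoff can be illustrated with a simple linear, additive scoring model. In this hypothetical sketch, the customer's interview answers become per-unit values for each contract term, and candidate offers are ranked by total value. The term names and weights are invented for illustration.

```python
from typing import Dict, Iterable

Offer = Dict[str, float]

# Hypothetical per-unit values captured when interviewing the customer.
# The ratio between two weights is the tradeoff rate between those terms.
TERM_VALUES: Dict[str, float] = {
    "discount_pct": 10_000.0,   # each 1% price discount is worth $10,000
    "payment_days": 500.0,      # each extra day of payment terms is worth $500
    "contract_months": -200.0,  # each extra month of lock-in costs $200
}


def offer_value(offer: Offer, values: Dict[str, float] = TERM_VALUES) -> float:
    """Score an offer as the weighted sum of its terms (a linear, additive model)."""
    return sum(values[term] * amount for term, amount in offer.items())


def best_offer(offers: Iterable[Offer], values: Dict[str, float] = TERM_VALUES) -> Offer:
    """Pick the candidate offer with the highest value to the customer."""
    return max(offers, key=lambda offer: offer_value(offer, values))


if __name__ == "__main__":
    a = {"discount_pct": 2, "payment_days": 30, "contract_months": 12}
    b = {"discount_pct": 3, "payment_days": 15, "contract_months": 24}
    print(offer_value(a), offer_value(b), best_offer([a, b]))
```

With these weights, the customer is indifferent between one extra percentage point of discount and 20 extra days of payment terms (10,000 / 500), which is the kind of tradeoff rate an interview step would need to pin down. In the example, offer `b` scores 32,700 against 32,600 for `a`.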

One Size Doesn’t Fit All for AI Regulation

As we accelerate into this brave new world, it is essential that the leaders of the various regulatory agencies have a strong conceptual understanding of both the AI methods and the underlying ethical and societal implications of these emerging use cases. Regulators will need deep industry expertise as they collaborate with companies and citizens to shape this inevitable future. The most fruitful approach to AI regulation is for industry and federal government working groups to collaborate on use-case-specific regulation. Broader, international mandates, by contrast, will likely be less effective at this early juncture. Every country and almost every industry is thinking about its AI use cases strategically and, more often than not, from a geopolitical perspective. We are entering a period in which the commanding heights of geopolitics will be defined not by nuclear proliferation but by AI proliferation. In these uncertain times, businesses are being hit by unprecedented, exogenous forces, including the current COVID-19 global pandemic. It’s in moments like these that society most needs technological innovation to progress.

To Microservices and Back Again - Why Segment Went Back to a Monolith

Noonan pointed out the limitations of a one-size-fits-all approach to their microservices. Because so much effort was required just to add new services, the implementations were not customized: one auto-scaling rule was applied to all services, despite each having vastly different load and CPU resource needs. Also, a proper solution for true fault isolation would have been one microservice per queue per customer, but that would have required over 10,000 microservices. The decision in 2017 to move back to a monolith weighed all the trade-offs, including accepting the loss of the benefits of microservices. The resulting architecture, named Centrifuge, handles billions of messages per day sent to dozens of public APIs. There is now a single code repository, and all destination workers use the same version of the shared library. The larger worker is better able to handle spikes in load. Adding new destinations no longer adds operational overhead, and deployments take only minutes. Most important for the business, the team was able to start building new products again.
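The contrast Noonan draws, between many separately deployed, uniformly configured services and one worker in which new destinations add no operational overhead, can be sketched in a few lines. This is not Centrifuge's design, which the excerpt doesn't describe in detail; the `destination` registry, the `Worker` class and the in-memory per-destination queues are hypothetical illustrations.

```python
from collections import defaultdict, deque
from typing import Callable, Deque, Dict

Event = dict
Handler = Callable[[Event], None]

# Registry of destination handlers: adding a destination means adding a
# function to the shared code, not deploying a new service.
DESTINATIONS: Dict[str, Handler] = {}


def destination(name: str) -> Callable[[Handler], Handler]:
    """Decorator that registers a handler for one destination."""
    def register(fn: Handler) -> Handler:
        DESTINATIONS[name] = fn
        return fn
    return register


class Worker:
    """A single worker process that keeps a separate queue per destination."""

    def __init__(self) -> None:
        self.queues: Dict[str, Deque[Event]] = defaultdict(deque)
        self.failures: Dict[str, int] = defaultdict(int)

    def enqueue(self, dest: str, event: Event) -> None:
        if dest not in DESTINATIONS:
            raise KeyError(f"unknown destination: {dest}")
        self.queues[dest].append(event)

    def drain(self) -> int:
        """Deliver every queued event; return how many were delivered."""
        delivered = 0
        for dest, queue in self.queues.items():
            handler = DESTINATIONS[dest]
            while queue:
                event = queue.popleft()
                try:
                    handler(event)
                    delivered += 1
                except Exception:
                    # A failing destination is counted and skipped so it does not
                    # block delivery to the others (a real system would retry).
                    self.failures[dest] += 1
        return delivered


@destination("webhook")
def send_webhook(event: Event) -> None:
    print("webhook <-", event)


@destination("flaky_api")
def send_flaky(event: Event) -> None:
    raise ConnectionError("destination unavailable")


if __name__ == "__main__":
    worker = Worker()
    worker.enqueue("webhook", {"type": "track", "event": "Signed Up"})
    worker.enqueue("flaky_api", {"type": "identify"})
    print("delivered:", worker.drain(), "failures:", dict(worker.failures))
```

Because every destination shares one code path and one deployment, a change to the shared library reaches all of them at once, which mirrors the single-repository, single-version outcome described above.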

Quote for the day:

"The world is moved not only by the mighty shoves of the heroes, but also by the aggregate of the tiny pushes of each honest worker." -- Frank C. Ross