Understanding which disciplines and skills are up-and-coming and which are fading can help both companies and developers ensure they have the right skills and knowledge to succeed. And what better way to find that out than to mine developer job postings? Indeed.com analyzed job postings using a list of 500 key technology skill terms to see which ones employers are looking for more these days and which are falling out of favor. Such research has helped identify cutting-edge skills over the past five years, with some previous years’ risers now well established, thanks to explosive growth. Docker, for one, has risen more than 4,000 percent in the past five years and was listed in more than 5 percent of all U.S. tech jobs in 2019. IoT as well has shot up nearly 2,000 percent in the past half-decade, with Ansible (an IT automation, configuration management, and deployment tool) and Kafka (a tool for building real-time data pipelines and streaming apps) showing similarly strong growth. And, of course, the rise of data science has also since cemented high demand for a range of skills, including artificial intelligence, machine learning, and data analysis.

Simply put, an anytime algorithm is just an algorithm that gradually improves a solution over time and can be interrupted at any time for that solution. For example, if we’re trying to come up with a route from the grocery store to the hospital, the anytime algorithm would continually produce routes that get better and better with more and more time. Basically, when we say a robot is thinking, what we really mean is that the robot is executing an anytime algorithm that produces solutions that improve over time. An anytime algorithm usually has a couple of nice properties. First, an anytime algorithm exhibits monotonicity: it guarantees that solution quality increases or stays the same but never gets worse over time. Next, an anytime algorithm exhibits diminishing returns: the improvement in solution quality is high at the early stages of the computation and low at later stages. To illustrate the behavior of an anytime algorithm, take a look at this photo. In this photo, as computation time increases, solution quality increases as well. It turns out that this behavior is pretty typical of an anytime algorithm.
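To make the idea concrete, here is a minimal sketch of an anytime route improver in Python. It is not taken from the article: the function names (`tour_length`, `anytime_tour`), the random segment-reversal move, and the use of a closed tour over 2D points are all assumptions chosen for illustration. The generator always holds a complete answer and only replaces it with a shorter one, so the caller can stop at any step and the quality never gets worse.

```python
import itertools
import math
import random


def tour_length(points, order):
    """Total length of the closed tour that visits points in the given order."""
    n = len(order)
    return sum(
        math.dist(points[order[i]], points[order[(i + 1) % n]])
        for i in range(n)
    )


def anytime_tour(points, seed=0):
    """Yield (best_order, best_length) forever; stop consuming at any time.

    Hypothetical illustration: each step tries one random segment reversal
    and keeps it only if the tour gets shorter (monotonic improvement).
    """
    rng = random.Random(seed)
    best = list(range(len(points)))
    best_len = tour_length(points, best)
    yield best, best_len
    while True:
        i, j = sorted(rng.sample(range(len(points)), 2))
        candidate = best[:i] + best[i:j + 1][::-1] + best[j + 1:]
        cand_len = tour_length(points, candidate)
        if cand_len < best_len:
            best, best_len = candidate, cand_len
        yield best, best_len


if __name__ == "__main__":
    rng = random.Random(1)
    stops = [(rng.random(), rng.random()) for _ in range(12)]
    # "Interrupt" the algorithm after 500 steps and use whatever it has.
    for step, (order, length) in enumerate(itertools.islice(anytime_tour(stops), 500)):
        if step % 100 == 0:
            print(step, round(length, 3))
```

The time budget lives entirely with the caller: `itertools.islice` stands in for the interruption, and a wall-clock deadline would work the same way. This sketch guarantees monotonicity only; diminishing returns is a typical pattern for this kind of search, not something the code enforces.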

The pros and cons of AI and ML in DevOps

A criticism that has been made of AI in DevOps is that it can distract engineering teams from the end goal, and from more human elements of processes that are just as vital to success. “When it comes to tech and DevOps, we’re not talking about ‘strong AI’ or ‘Artificial General Intelligence’ that mimics the breadth of human understanding, but ‘soft’, or ‘weak’ AI, and specifically narrow, task-specific ‘intelligence’,” said Nigel Kersten, field CTO of Puppet. “We’re not talking about systems that think, but really just referring to statistics paired with computational power being applied to a specific task. “Now that sounds practical and useful, but is it useful to DevOps? Sure, but I strongly believe that focusing on this is dangerous and distracts from the actual benefits of a DevOps approach, which should always keep humans front and centre. “I see far too many enterprise leaders looking to ‘AI’ and Robotic Process Automation as a way of dealing with the complexity and fragility of their IT environments instead of doing the work of applying systems thinking, streamlining processes, creating autonomous teams, adopting agile and lean methodologies, and creating an environment of incremental progress and continuous improvement.”

Raspberry Pi sales are rocketing in the middle of the coronavirus outbreak

When the Raspberry Pi Foundation asked for stories about how people are using their Raspberry Pi devices to address COVID-19, one of the most common uses it saw was people showing their 3D-printed face shields, driven by a Raspberry Pi. "And that's just been individuals, that's what's inspiring - making face shields seems to be a community effort. You have people with a home printer, printing these things once a week and then going to a post office and sending them," he said. "Then you'll have some people sat in a hack space receiving the parcels, cutting the acetate and the elastic, assembling them into face shields then sending them to the hospital. It's amazing." Upton suggested this effort could eventually be ramped up to a "massively distributed scale", with the benefit of open source being that, once you have a good design that works, it can be rapidly iterated. In the long term, this could even include the ventilators themselves, he said. "One thing we're seeing with this is people finding a niche within which open hardware really works," he said.

Embracing the Journey to Public Cloud

Whatever the sector, digital disruptors have one characteristic in common: they leverage the capabilities of the cloud to the maximum extent possible. A shift in power toward digital-first companies is underway, and the cloud plays a major role in helping these companies establish themselves. Many incumbents are just now taking their first steps on the journey to the cloud, and are still working on the challenges involved in migrating their existing legacy applications to more scalable and agile public cloud environments. While they’re engaged in this process, digital insurgents are already exploiting the full potential of the cloud to deliver solutions that meet emerging customer needs associated with today’s evolving lifestyles. In highly regulated industries, such as financial services and healthcare, major brands will not necessarily see an immediate decline in their customer base or profits. Nonetheless, digital-first companies are capturing millions of customers annually, and this growth poses a considerable challenge over time. Embracing the journey to public cloud is crucial for legacy enterprises.

jQuery 3.5 Released, Fixes XSS Vulnerability

Timmy Willison recently released a new version of jQuery. jQuery 3.5 fixes a cross-site scripting (XSS) vulnerability found in jQuery’s HTML parser. The Snyk open source security platform estimates that 84% of all websites may be impacted by jQuery XSS vulnerabilities. jQuery 3.5 also adds missing methods for the positional selectors :even and :odd in preparation for the complete removal of positional selectors in the next major jQuery release (jQuery 4). Masato Kinugawa found a cross-site scripting (XSS) vulnerability in the htmlPrefilter method of jQuery, and published an example showing a popup alert window in the form of a challenge. Kinugawa explains that jQuery’s html() function calls the htmlPrefilter() method, which uses a regexp replacing XHTML-like tags with versions that work in HTML ... While jQuery is a mature library, it is also pervasive across websites. The Snyk open source security platform estimated in its State of JavaScript frameworks security report 2019 that 84% of all websites may be impacted by jQuery XSS vulnerabilities. jQuery can be found in 79% of the top 5,000 URLs from Alexa.
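The rewriting step described above can be illustrated with a small Python sketch. This is not jQuery's code: the pattern, the `VOID_TAGS` set, and the `expand_self_closing` helper are simplified assumptions that only mimic the idea of turning XHTML-style self-closing tags into open/close pairs. The sample input is a hypothetical payload in the style of the reported issue. It shows why a purely textual rewrite can be risky: the inserted closing tag can end a raw-text element early, so markup that was inert text becomes a live element.

```python
import re

# Simplified stand-in (an assumption, not jQuery's actual regexp):
# matches self-closing tags such as <div/> or <style />.
SELF_CLOSING = re.compile(r"<([a-z][^\s/>]*)([^>]*)/>", re.IGNORECASE)

# Tags that have no closing tag in HTML, so they are left untouched.
VOID_TAGS = {"area", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "param"}


def expand_self_closing(html):
    """Rewrite <tag ... /> as <tag ... ></tag> for non-void tags."""
    def replace(match):
        name, attrs = match.group(1), match.group(2)
        if name.lower() in VOID_TAGS:
            return match.group(0)
        return f"<{name}{attrs}></{name}>"

    return SELF_CLOSING.sub(replace, html)


if __name__ == "__main__":
    print(expand_self_closing("<div/>"))
    # Hypothetical payload: before the rewrite, everything after the first
    # <style> is raw text, because no </style> ever appears.
    payload = "<style><style /><img src=x onerror=alert(1)>"
    print(expand_self_closing(payload))
```

After the rewrite, the payload contains a `</style>` that was never in the input. An HTML parser then treats the text after it as markup, so the `img` element and its `onerror` handler become active.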

What Chrome OS needs to conquer next

When you look at what types of tablets people are actually buying these days, what do you see? Specific data can be somewhat tough to come by, but we can pretty easily assemble a broad overview of what's happening. The big trend, not surprisingly, is that Apple tends to be the most prominent player in tablet sales — with around 36% of the worldwide market, according to IDC's latest stats. But it's what comes next that's particularly interesting for our current purposes. The second-place tablet-seller, again in no huge surprise, is almost always Samsung. But despite all the breathless coverage given to the company's high-priced tablets, IDC's past data indicates the "majority of its shipments" have been "comprised of the lower-end E and A series" devices. Hmmm. The next especially-significant-to-the-U.S. player in the list is Amazon, which uses Android as the base for its own custom tablet operating system. And guess what? ... So why are traditional Android tablets still hanging on and Chromebooks as tablets failing to catch, erm, fire? The answer is right in front of our eyes: When it comes to the non-Apple-associated tablet experience, people seem to be looking for cheap and often thus small-sized options.

Security Lapse Exposed Clearview Source Code

The repository contained Clearview’s source code, which could be used to compile and run the apps from scratch. The repository also stored some of the company’s secret keys and credentials, which granted access to Clearview’s cloud storage buckets. Inside those buckets, Clearview stored copies of its finished Windows, Mac and Android apps, as well as its iOS app, which Apple recently blocked for violating its rules. The storage buckets also contained early, pre-release developer app versions that are typically only for testing, Hussein said. The repository also exposed Clearview’s Slack tokens, according to Hussein, which, if used, could have allowed password-less access to the company’s private messages and communications. Clearview has been dogged by privacy concerns since it was forced out of stealth following a profile in The New York Times, but its technology has gone largely untested and the accuracy of its facial recognition tech unproven. Clearview claims it only allows law enforcement to use its technology, but reports show that the startup courted users from private businesses like Macy’s, Walmart and the NBA.

Zoom in crisis: How to respond and manage product security incidents

Cybersecurity is a discipline in managing the risks to security, privacy, and safety. It does not eliminate them, but rather seeks to find an optimal balance between the risks, costs, and usability. That means there will always be a chance for undesired impacts. If managed properly from the outset, the minimization of those residual risks can also be handled in ways that reduce the negative effects. Crisis response is a specialty that benefits from forethought, experience, leadership, and skills. I have led crisis response teams over the years and been fortunate to be part of strong teams that handled events with speed, efficiency, and professionalism. I have also witnessed complete train-wrecks where the wrong people were attempting to lead, focus was misplaced, valuable time and resources were squandered, legal instruments were applied to hide the truth, communication was confusing, and feeble attempts leveraging marketing to “spin messages” were preferred over actually addressing issues head-on. Poor leadership is caustic and can result in more problems and a prolonged recovery. Crisis response is a complex dance. It requires a clearly defined objective to pursue and an understanding of the opposition, obstacles, and resources.

Blockchain Is a Key Technology for the Development of Internet of Things (IoT) Solutions

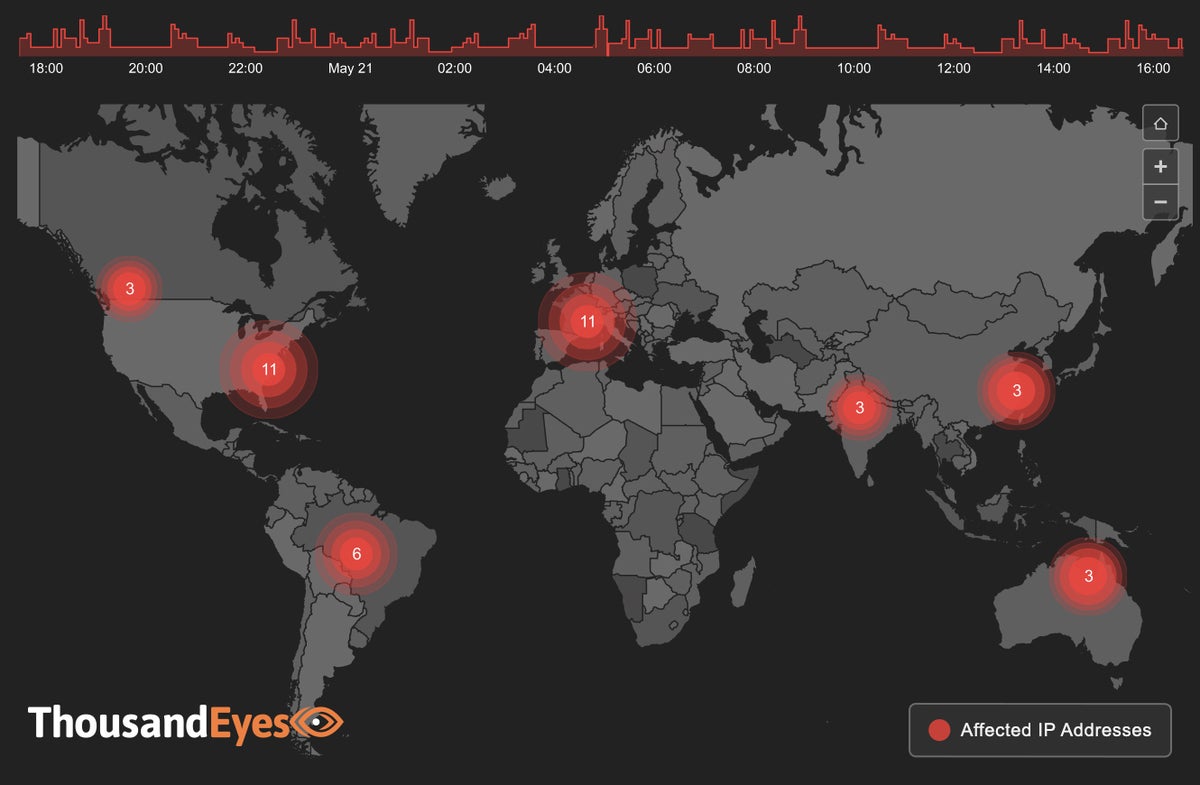

The main problem with the IoT is the danger it poses to users’ internet safety. Any device connected to the IoT is open to exploitation by hackers, and there have been multiple news reports in recent years ranging from hackers exploiting baby monitors to an IoT botnet taking down portions of the Internet. By opening ourselves up even more to the digital world, we are putting ourselves more and more at risk of cyberattack and hacking. And there’s no chance we’re taking a step back anytime soon - modern internet uptake statistics say it all. We aren’t moving away from technology as the years go by; we are simply surrounding ourselves with more and more of it, putting us at more and more risk of being compromised or hacked. But rest easy, because apparently blockchain could help make the IoT a whole lot safer for us in the coming years. Given that IoT applications are, by very definition, ‘distributed’, it makes sense that distributed ledger technology like blockchain could play a vital role in allowing devices to communicate with each other. Blockchain is, at its core, a cryptographically secured, distributed ledger that allows for the secure transfer of data between parties.
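As a rough illustration of the "cryptographically secured ledger" part of that definition, the Python sketch below chains records together with SHA-256 hashes so that any later tampering is detectable. It is a toy under stated assumptions, not a real blockchain: the function names (`block_hash`, `append_block`, `is_valid`) and the sensor-reading payloads are hypothetical, and it leaves out everything that makes a ledger distributed, such as consensus between nodes, signatures, and networking.

```python
import hashlib
import json

GENESIS_PREV_HASH = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(index, prev_hash, payload):
    """SHA-256 over a canonical JSON encoding of the block contents."""
    body = json.dumps(
        {"index": index, "prev_hash": prev_hash, "payload": payload},
        sort_keys=True,
    )
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_block(chain, payload):
    """Append a block whose hash commits to the previous block's hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_PREV_HASH
    index = len(chain)
    chain.append({
        "index": index,
        "prev_hash": prev_hash,
        "payload": payload,
        "hash": block_hash(index, prev_hash, payload),
    })


def is_valid(chain):
    """Recompute every hash and check each link to the previous block."""
    prev_hash = GENESIS_PREV_HASH
    for i, block in enumerate(chain):
        if block["index"] != i or block["prev_hash"] != prev_hash:
            return False
        if block["hash"] != block_hash(i, prev_hash, block["payload"]):
            return False
        prev_hash = block["hash"]
    return True


if __name__ == "__main__":
    ledger = []
    append_block(ledger, {"device": "thermostat-1", "reading": 21.5})
    append_block(ledger, {"device": "door-sensor-2", "reading": "closed"})
    print(is_valid(ledger))            # chain as written
    ledger[0]["payload"]["reading"] = 30.0
    print(is_valid(ledger))            # after tampering with an old record
```

Because each block's hash covers the previous block's hash, changing an old record invalidates that block and breaks the link to every block after it. That is the property that would let devices detect altered history.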

Quote for the day:

"Increasingly, management's role is not to organize work, but to direct passion and purpose." -- Greg Satell