You need to methodically identify the possible risks that could face your start-up. You might want to think outside the box for this one, because anything can, and will, happen, so you had better have an answer for every risk you identify. Next, assess the likelihood of each risk and decide how you would respond. You might rank them from most likely to almost impossible. Unless you live in Tornado Alley, you may consider a natural disaster a long shot. Arlene Dickinson, the Canadian Dragons' Den maven, may disagree. She went to work one day after a brutal storm to find that the basement of her tony Toronto office had been flooded. This wasn't a leaky roof: her entire basement, where she stored client records, computers, tax files and more, was filled to the ceiling with smelly, dirty water. Everything was ruined, but she did have insurance. Once you see the types of risks you face, you will have to come up with your own ways to mitigate them.

No single central entity stores and processes payments or controls the admission of new units into the database, which ensures freedom of access. This is the key difference between a DAG (directed acyclic graph) and its predecessors. In centralized systems, only one party could add transactions to the ledger; in blockchains, only a select few, the miners, can do it. In a DAG, everybody is allowed to write to the ledger. A DAG also improves speed and throughput: instead of a single chain of blocks, data can be added to any number of parallel, interconnected "lanes." You might expect it to be hard to hold this structure together while everybody writes to the ledger at the same time, and you would be right. That is the job of consensus algorithms, which remain an area of active research that draws some of the brightest minds in the field. In simplified terms, the intuition behind Obyte's consensus algorithm is as follows: when a user adds a new transaction, it is placed on the ledger together with the addresses of twelve witnesses.
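Here is a minimal Python sketch of a DAG ledger that makes the structure concrete. It assumes a simplified model: each unit (transaction) references one or more existing parent units and carries exactly twelve witness addresses, the number given above. The class names, the hashing scheme and the tip-selection rule are hypothetical illustrations, not Obyte's actual data format. The sketch deliberately leaves out the consensus step, that is, how witnesses are used to agree on an order.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Optional

# From the text: each new transaction is posted with twelve witness addresses.
WITNESS_COUNT = 12


@dataclass(frozen=True)
class Unit:
    """Hypothetical, simplified DAG unit; not Obyte's actual unit format."""
    payload: str
    parents: tuple
    witnesses: tuple

    @property
    def unit_id(self) -> str:
        # The id is a hash over payload, parents and witnesses, so it commits to its parents.
        body = json.dumps([self.payload, list(self.parents), list(self.witnesses)])
        return hashlib.sha256(body.encode("utf-8")).hexdigest()


@dataclass
class DagLedger:
    units: dict = field(default_factory=dict)     # unit_id -> Unit
    children: dict = field(default_factory=dict)  # unit_id -> set of child ids

    def tips(self) -> list:
        """Units that no other unit references yet, in sorted order."""
        return sorted(uid for uid in self.units if not self.children.get(uid))

    def add(self, payload: str, witnesses: list, parents: Optional[list] = None) -> str:
        """Anyone may append a unit; by default it references every current tip."""
        if len(witnesses) != WITNESS_COUNT or len(set(witnesses)) != WITNESS_COUNT:
            raise ValueError(f"need exactly {WITNESS_COUNT} distinct witness addresses")
        chosen = self.tips() if parents is None else sorted(set(parents))
        # Parents must already exist, so a new unit can never become its own ancestor.
        missing = [p for p in chosen if p not in self.units]
        if missing:
            raise ValueError(f"unknown parent units: {missing}")
        unit = Unit(payload, tuple(chosen), tuple(witnesses))
        uid = unit.unit_id
        if uid in self.units:
            raise ValueError("duplicate unit")
        self.units[uid] = unit
        for p in chosen:
            self.children.setdefault(p, set()).add(uid)
        return uid


witnesses = [f"WITNESS_ADDRESS_{i}" for i in range(WITNESS_COUNT)]
ledger = DagLedger()
genesis = ledger.add("genesis", witnesses)
# Two writers attach to the same parent at once, creating two parallel "lanes".
a = ledger.add("alice pays bob 5", witnesses, parents=[genesis])
b = ledger.add("carol pays dave 3", witnesses, parents=[genesis])
print(len(ledger.tips()))  # 2
# The next unit references both tips and ties the lanes back together.
merge = ledger.add("erin pays frank 1", witnesses)
print(ledger.tips() == [merge])  # True
```

Because `add` lets two writers attach to the same parent, the graph can grow in parallel lanes. A later unit that references every tip merges them again. Deciding which of two conflicting lanes wins is exactly the problem a consensus algorithm has to solve, and this sketch does not model it.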

The weaknesses, collectively called Thunderclap, highlight a new class of threats posed by malicious peripherals. The research has been under way since 2016, and Apple is one of several vendors that have issued software updates as a result. The work focused on the Thunderbolt 3 data transfer standard, which runs over USB Type-C connectors. Operating systems are supposed to use the input-output memory management unit (IOMMU) to give a peripheral direct memory access (DMA) only to the memory it needs. The researchers found, however, that this defense is not implemented effectively enough to prevent data theft. The research also covered PCI Express (PCIe), an older set of device connection and data transfer protocols. Stealing data this way would require physical access to a device. "The combination of power, video and peripheral-device DMA over Thunderbolt 3 ports facilitates the creation of malicious charging stations or displays that function correctly but simultaneously take control of connected machines," the researchers write in a blog post.
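The toy Python model below shows one general way DMA protection can leak more than intended. It is not the researchers' code and does not reconstruct their specific findings. In the model, access is granted per memory page (assumed here to be 4 KiB), so a device given access to a small buffer can also read anything else that happens to share that page. `ToyIommu` and its methods are hypothetical, and real IOMMUs and operating-system drivers are far more involved.

```python
PAGE_SIZE = 4096  # assumed page size; actual IOMMU granularity varies by platform


class ToyIommu:
    """Toy model of IOMMU-mediated DMA: access is granted per page, not per byte."""

    def __init__(self, num_pages: int):
        self.memory = bytearray(num_pages * PAGE_SIZE)
        self.mapped = {}  # device name -> set of page numbers it may access

    @staticmethod
    def _pages(addr: int, length: int) -> range:
        # All page numbers touched by the byte range [addr, addr + length).
        return range(addr // PAGE_SIZE, (addr + length - 1) // PAGE_SIZE + 1)

    def map_buffer(self, device: str, addr: int, length: int) -> None:
        """The OS grants `device` access to every page covering its buffer."""
        self.mapped.setdefault(device, set()).update(self._pages(addr, length))

    def dma_read(self, device: str, addr: int, length: int) -> bytes:
        """A DMA read succeeds only if every page it touches is mapped for the device."""
        allowed = self.mapped.get(device, set())
        for page in self._pages(addr, length):
            if page not in allowed:
                raise PermissionError(f"{device}: page {page} is not mapped")
        return bytes(self.memory[addr:addr + length])


iommu = ToyIommu(num_pages=2)
iommu.memory[0:5] = b"frame"       # start of a 100-byte device buffer at offset 0
iommu.memory[512:518] = b"SECRET"  # unrelated kernel data that shares the same page
iommu.map_buffer("evil-peripheral", addr=0, length=100)

# The device asks for the whole page, not just its 100 bytes, and gets the secret too.
leak = iommu.dma_read("evil-peripheral", 0, PAGE_SIZE)
print(b"SECRET" in leak)  # True
```

In this model, keeping device-visible buffers on pages that hold nothing else closes the gap. Reads of unmapped pages are still refused.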

The approach has the potential not just to diversify tech but to help "techify" everything else, said Megan Smith, former US chief technology officer in the Obama administration: "We could really work on ... the hardest problems together in this collaborative way." Faculty at the new college will work with other MIT departments to cross-pollinate ideas. Classes will also be designed so that technical skills, social sciences, and the humanities are bound up together within each course rather than learned separately. "It's not just thinking about how you learn computation," Melissa Nobles, the dean of MIT's School of Humanities, Arts, and Social Sciences, told MIT Technology Review after the main-stage event, "but it's also students having an awareness of the larger political, social context in which we're all living." This has also been my driving mission with MIT Technology Review's AI newsletter, The Algorithm: to dismantle our outdated notions that technology is for the tech people and social problems are for the humanities people, and that there is such a thing as a "math person," who is certainly not the same as a "people person."

Psychologists and researchers have developed a systematic approach for finding sustainable solutions to problems. This technique, commonly referred to as the problem-solving cycle, starts with identifying the problem. After all, a single situation can contain multiple issues, and you could be focusing on the wrong one. Separate the symptoms from the cause. Once you have defined the problem, form a strategy. The strategy will vary with the situation and your preferences, but develop wide-ranging ideas while taking your resources into account. Are the solutions feasible? Come up with multiple ideas so you have options. Organize your information: what do you know, and not know, about the problem? The more information you collect, the better your chances of a positive outcome. Once you settle on a solution, monitor its progress. The solution should be measurable so you can assess whether it is reaching its goal. If it isn't, you may need to implement an alternative strategy.

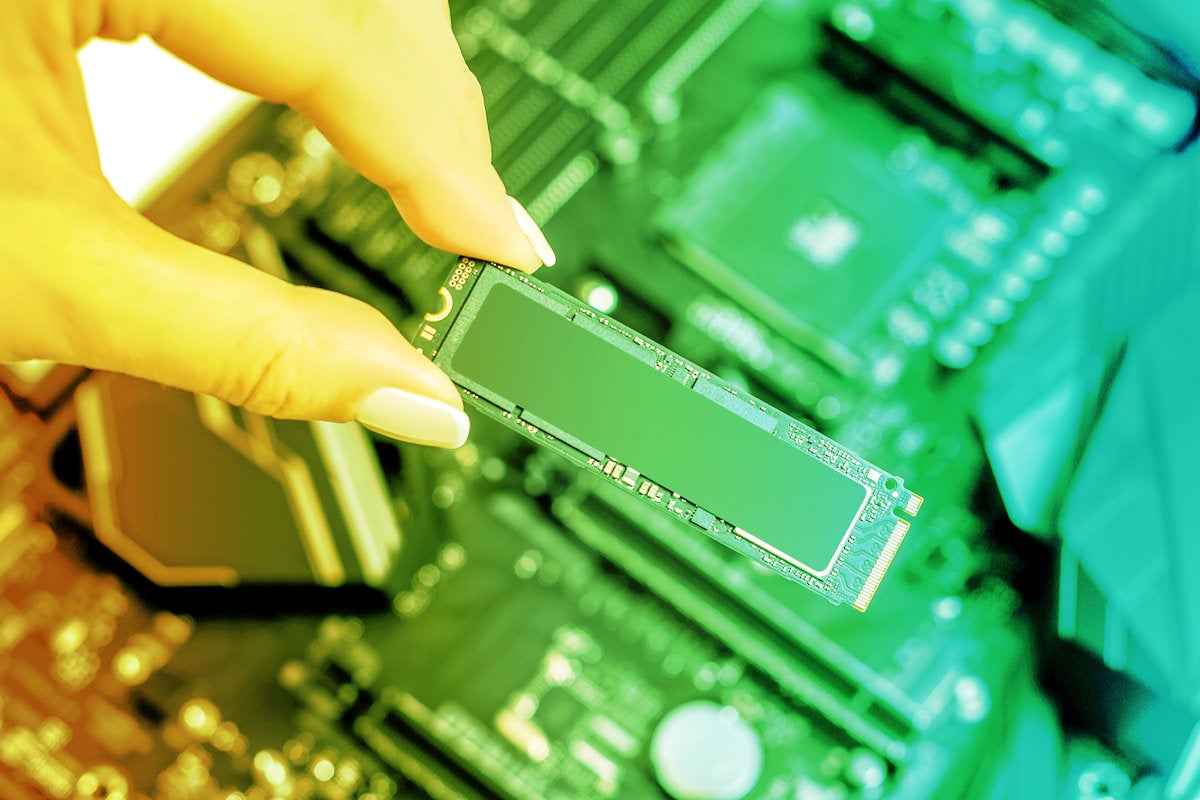

Cockroach Labs CEO Spencer Kimball noted that when they started the company, they weren't yet sure where CockroachDB would fit into the ecosystem, or what kinds of companies would be willing and able to move to a new RDBMS. He added, however, that in 2018 they began to answer those questions, and the company closed out an impressive first year of revenue: "It turns out that much of the Fortune 2000 is struggling with often board-level mandates to embrace the benefits of the public cloud. That modernization process opens the door to consideration of alternatives to Oracle, especially databases better suited to exploiting the opportunities inherent in the cloud. Where CockroachDB has a big strategic advantage over the likes of AWS Aurora or Google Cloud Spanner is that we offer a bridge from the reality of existing on-premise deployments to the desired outcome of using the public cloud wherever it makes sense. CockroachDB can be run on-premise, hybrid, and across arbitrary cloud vendors."

“First, data is a rich source of insights and discovery about any domain, to understand deeper the things that we already know about the domain and to discover new things that we did not know about it. Second, data is the fuel (the essential input) for interesting algorithms and models that can be used to help predict the future, to optimize outcomes, to reveal emerging trends, and to detect anomalies, sometimes before they happen. Third, data is sensory input to our natural human activities of pattern detection and pattern recognition that become the basis for nearly all human decisions and actions as we move forward through our world. Fourth, data are measurements that encode knowledge – as such, data delivers a wonderful very human challenge to us to decode that knowledge and consequently to become smarter and wiser about people, processes, events, and all things. Finally, data ignites innovation, transformation, and value creation in all organizations and businesses through pattern exploration and pattern exploitation within the digital signals that flow all around us.”

Bob Diachenko, an independent security researcher, discovered that an Elasticsearch database hosted on Amazon Web Services exposed the records, TechCrunch first reported. The exposed data, which has since been secured, comes from Dow Jones' Watchlist database, which companies use as part of their risk and compliance efforts. In a statement, Dow Jones blamed "an authorized third party" for the exposure but did not name the company, and it declined to provide further details on the incident. Security researchers say the incident highlights the need for adequate vendor risk management. A recent Verizon report found that one in every two data breaches stems from third-party risks. Too many organizations focus on protecting their own IT infrastructure while ignoring the security of data handed over to third parties, security experts say. "This becomes a major issue because you are as vulnerable as your vendor managing your data," says Edwin Lim, director of professional services, APJ, at Trustwave, a Singtel company.

By creating a seemingly innocent application that contains a malicious exploit script, attackers can dupe users when the app asks for permission to access external storage. A typical user is likely to approve the request, which lets the attacker manipulate data that is written to that shared storage and used by multiple applications. App development guidelines urge developers not to store sensitive code in external storage, yet our researchers found that many apps, including Google Translate, did not heed this advice. Security guidelines are valuable, but frankly, it's naïve to expect every developer in the world to keep security top of mind when writing code, let alone to have enough expertise to get it right. Google patched its affected applications, as responsible companies do, but the episode shows that a single entry point is enough to keep attackers in business.
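The text does not prescribe a fix. A common defensive pattern is to treat anything read back from shared external storage as untrusted and check its integrity before using it. The Python sketch below illustrates that idea in a platform-neutral way; it is not Android API code. It records a SHA-256 digest when a file is written and refuses to load the file if the bytes no longer match. The function names are hypothetical, and the sketch assumes the digest itself is kept somewhere the attacker's app cannot write, such as app-private storage.

```python
import hashlib
import hmac
from pathlib import Path


def write_and_record(path: Path, data: bytes) -> str:
    """Write `data` to shared storage and return its SHA-256 digest.

    Assumption: the caller stores the digest where other apps cannot modify it.
    """
    path.write_bytes(data)
    return hashlib.sha256(data).hexdigest()


def load_verified(path: Path, expected_sha256: str) -> bytes:
    """Read the file once and return it only if it matches the recorded digest."""
    data = path.read_bytes()  # hash the exact bytes we return, not a second read
    actual = hashlib.sha256(data).hexdigest()
    # Constant-time comparison of the two hex digests.
    if not hmac.compare_digest(actual, expected_sha256):
        raise ValueError(f"{path.name} changed since it was written; refusing to load it")
    return data
```

Verification detects tampering but does not prevent it. Keeping sensitive code out of external storage entirely, as the guidelines recommend, remains the stronger option.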

The only thing constant is change. Over the last few decades, businesses of every size across the world have seen tremendous changes in the way they operate. One of the major drivers of this change is, undoubtedly, the explosion of technology into all aspects of our lives. From the gigantic early machines with heavy knobs and loud motors to sleek tablets and microchips with tremendous computing and processing power, the application of science and technology has transformed business, mostly for the better. Since technology entered the workplace, the market has been flooded with software, applications, and interfaces designed specifically to help businesses collaborate across teams, manage data, and derive insights from their sales. Today, a new class of companies, known as ‘digital businesses,’ relies heavily on the Internet for everyday operations.

Quote for the day:

"A leader must have the courage to act against an expert's advice." -- James Callaghan