Quote for the day:

"Power should be reserved for weightlifting and boats, and leadership really involves responsibility." -- Herb Kelleher

The quiet data breach hiding in AI workflows

Prompt leaks happen when sensitive data, such as proprietary information,
personal records, or internal communications, is unintentionally exposed through
interactions with LLMs. These leaks can occur through both user inputs and model
outputs.

On the input side, the most common risk comes from employees. A developer might
paste proprietary code into an AI tool to get debugging help. A salesperson might
upload a contract to have it rewritten in plain language. These prompts can
contain names, details of internal systems, financials, or even credentials.
Once entered into a public LLM, that data is often logged, cached, or retained
outside the organization’s control. Even when companies adopt enterprise-grade
LLMs, the risk doesn’t go away: researchers found that many inputs posed some
level of data leakage risk, including personal identifiers, financial data, and
business-sensitive information.

Output-based prompt leaks are even harder to detect. If an LLM is fine-tuned on
confidential documents such as HR records or customer service transcripts, it
might reproduce specific phrases, names, or private information when queried.
This is known as data cross-contamination, and it can occur even in
well-designed systems if access controls are loose or the training data was not
properly scrubbed.
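
To make the input-side risk concrete, here is a minimal sketch of a pre-submission check that scans a prompt for a few obvious kinds of sensitive data before it reaches an LLM. The pattern set, the labels, and the `find_sensitive` and `redact` helpers are hypothetical illustrations, not a product or library API. The regexes are deliberately simple and will miss many real leaks, such as names, proprietary code, or contract terms. Treat this as a sketch of the idea rather than a data-loss-prevention tool.

```python
import re

# Illustrative patterns only: an assumed, deliberately small set, not an
# exhaustive or vetted detector list.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    # "password = value" or "password: value"; stops at whitespace, comma, or semicolon
    "password_assignment": re.compile(r"(?i)\bpassword\s*[:=]\s*[^\s,;]+"),
}


def find_sensitive(prompt: str) -> list[tuple[str, str]]:
    """Return (label, matched_text) pairs for every pattern hit in the prompt."""
    hits = []
    for label, pattern in PATTERNS.items():
        for match in pattern.finditer(prompt):
            hits.append((label, match.group(0)))
    return hits


def redact(prompt: str) -> str:
    """Replace each hit with a placeholder such as [REDACTED:email]."""
    for label, pattern in PATTERNS.items():
        prompt = pattern.sub(f"[REDACTED:{label}]", prompt)
    return prompt
```

For example, `redact("key AKIAIOSFODNN7EXAMPLE ssn 123-45-6789")` returns `"key [REDACTED:aws_access_key_id] ssn [REDACTED:us_ssn]"`. Pattern matching like this only addresses input-side leaks. Output-side leaks from fine-tuning have to be handled by scrubbing training data and tightening access controls.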

The Rise of Security Debt: Your Security IOUs Are Due

Despite measurable improvements, security debt, defined as flaws that remain
unfixed for more than a year after discovery, continues to put enterprises at
risk. Security debt affects almost three-quarters (74.2%) of organizations, up
from 71% in previous measurements. More alarmingly, half of all organizations
suffer from critical security debt: a dangerous combination of high-severity,
long-unresolved flaws. There is a reason it is called critical debt: the longer
a security flaw survives within an enterprise, the less likely it is to be
resolved. Today, more than a quarter (28%) of flaws remain open two years after
discovery, and even after five years, 9% of flaws still linger in applications.
... Applications are only as secure as the code they are built from, and
security flaws are a fact of life in every code base in the world. That said,
the origin of the code matters. Leveraging third-party code has become standard
practice across the industry, and it introduces added risks. ... Organizations
need the ability to correlate and contextualize findings in a single view so
they can prioritize their backlog based on context. This allows companies to
reduce the most risk with the least effort. Because the average time to fix
flaws has increased dramatically, programs seeking to improve their security
posture must focus on the findings that matter most in their specific context.
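
The definitions above translate directly into code. The sketch below assumes a flaw record with a severity label and discovery and fix dates. The `Flaw` type, the four-level severity scale, and the choice to treat "high" and "critical" as high severity are assumptions, not part of the source. The code flags security debt (open for more than a year), flags critical debt (security debt that is also high severity), and orders the open backlog with critical debt first. A real prioritization would also weigh context such as exposure and exploitability, which this sketch ignores.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

DEBT_THRESHOLD = timedelta(days=365)  # "unfixed for more than a year after discovery"
HIGH_SEVERITY = {"high", "critical"}  # assumed labels counted as high severity
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}  # assumed scale


@dataclass
class Flaw:
    flaw_id: str
    severity: str                 # one of the assumed SEVERITY_RANK labels
    discovered: date
    fixed: Optional[date] = None  # None means the flaw is still open


def is_security_debt(flaw: Flaw, today: date) -> bool:
    """Open and unfixed for more than a year after discovery."""
    return flaw.fixed is None and (today - flaw.discovered) > DEBT_THRESHOLD


def is_critical_debt(flaw: Flaw, today: date) -> bool:
    """Security debt that is also high severity."""
    return is_security_debt(flaw, today) and flaw.severity in HIGH_SEVERITY


def prioritize(flaws: list[Flaw], today: date) -> list[Flaw]:
    """Open flaws only: critical debt first, then by severity, then oldest first."""
    open_flaws = [f for f in flaws if f.fixed is None]
    return sorted(
        open_flaws,
        key=lambda f: (
            not is_critical_debt(f, today),   # False sorts before True
            SEVERITY_RANK.get(f.severity, len(SEVERITY_RANK)),
            f.discovered,
        ),
    )
```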

How to Cut the Hidden Costs of IT Downtime

"Workers struggling with these problems waste productive time waiting for
fixes," said Ryan MacDonald, CTO at Liquid Web. Businesses can reduce these
costs by investing in proactive IT support, automating troubleshooting
processes, and training workers on best practices to prevent repeat problems, he
said. MacDonald explained that while tech failures are inevitable, companies
often take a reactive rather than proactive approach to IT. Instead of
addressing persistent issues at their root, organizations frequently apply
short-term fixes, resulting in continuous inefficiencies and mounting expenses.
... Companies that fail to modernize their systems will continue to experience
recurring IT problems that hinder productivity and increase operational costs.
In addition to upgrading infrastructure, organizations must conduct regular IT
audits to proactively identify inefficiencies before they escalate into major
disruptions. MacDonald stressed the importance of continuous evaluation.
"Regularly scheduled IT audits allow companies to find recurring inefficiencies
and invest money into fixing them before they become costly disruption points,"
he said. Rather than waiting for systems to break, businesses should implement
proactive IT strategies, which can save time, reduce financial losses, and
improve overall system reliability.

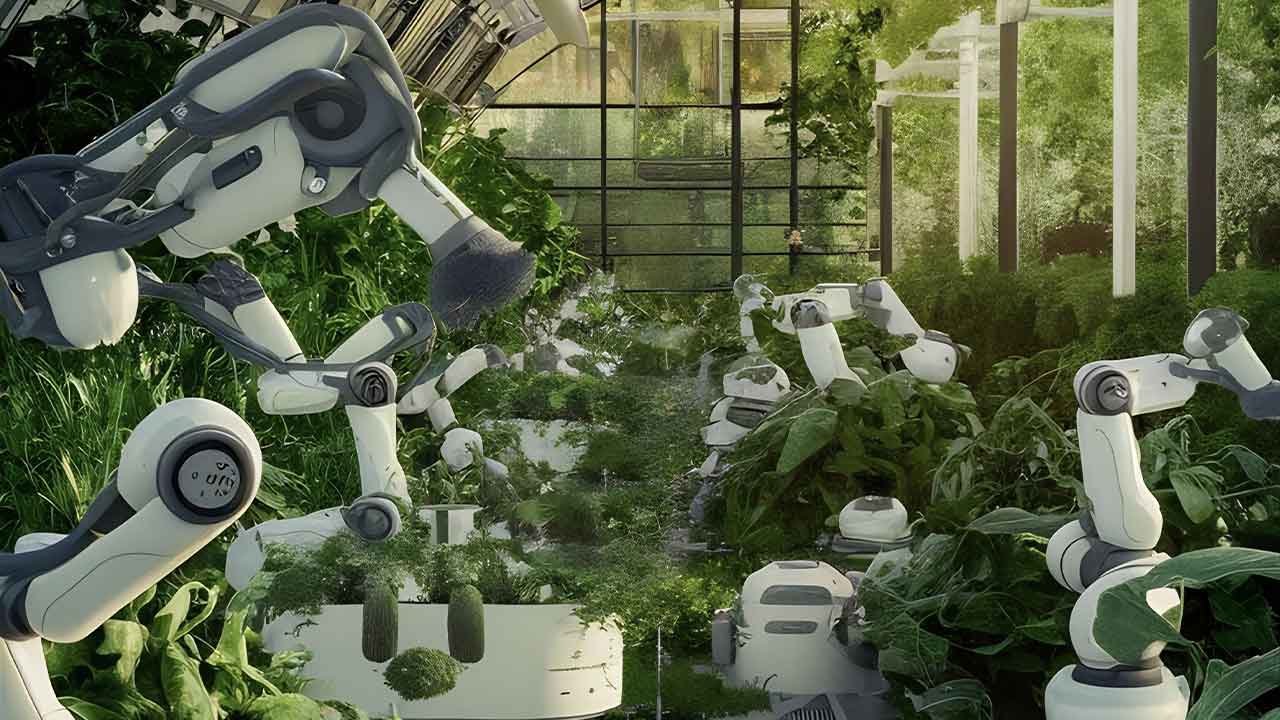

A multicloud experiment in agentic AI: Lessons learned

At its core, an agentic AI system is a self-governing decision-making system.
It uses AI to assign and execute tasks autonomously, responding to changing
conditions while balancing cost, performance, resource availability, and other
factors. I wanted to use multiple public cloud platforms in harmony, so the
architecture had to be flexible enough to take advantage of cloud-specific
features while maintaining platform-agnostic consistency. ... Challenges with
interoperability, platform-specific nuances, and cost optimization remain, and
more work is needed to improve the viability of multicloud architectures. The
big gotcha is that the cost was surprisingly high. Resource usage charges on
public cloud providers, egress fees, and other expenses seemed to spring up
unannounced. Using public clouds for agentic AI deployments may be too
expensive for many organizations and push them toward cheaper on-prem
alternatives, including private clouds, managed service providers, and
colocation providers. I can tell you firsthand that those platforms are more
affordable in today’s market and provide many of the same services and tools.
This experiment was a small but meaningful step toward a future in which cloud
environments serve as dynamic, self-managing ecosystems.
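
The balancing act described above (cost, performance, availability) can be pictured as a scoring function that an agent evaluates before placing a task. The author does not describe the actual decision logic, so this is a hypothetical sketch. The `CloudOption` fields, the weights, and the linear score are all assumptions. It does show why egress fees matter: an option with cheaper compute can lose once its data-transfer-out cost is included.

```python
from dataclasses import dataclass


@dataclass
class CloudOption:
    name: str              # hypothetical provider/region label
    cost_per_hour: float   # compute price for this task's resources (assumed input)
    egress_cost: float     # estimated data-transfer-out charge for the task
    latency_ms: float      # measured latency; lower is better
    available: bool        # whether capacity is currently available


def choose_cloud(
    options: list[CloudOption],
    est_hours: float,
    w_cost: float = 1.0,
    w_latency: float = 0.01,
) -> CloudOption:
    """Return the available option with the lowest weighted score.

    score = w_cost * (cost_per_hour * est_hours + egress_cost) + w_latency * latency_ms
    """
    candidates = [o for o in options if o.available]
    if not candidates:
        raise RuntimeError("no cloud option currently has capacity")

    def score(o: CloudOption) -> float:
        total_cost = o.cost_per_hour * est_hours + o.egress_cost  # egress included
        return w_cost * total_cost + w_latency * o.latency_ms

    return min(candidates, key=score)
```

Consider option A at 2.0 per hour plus 5.0 egress and option B at 1.5 per hour plus 20.0 egress. A 10-hour task goes to A. A 100-hour task goes to B, because B's cheaper compute eventually outweighs its higher egress charge.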

What boards want and don’t want to hear from cybersecurity leaders

A lack of clarity can lead CISOs either to overshare technical details or to
provide too little strategic context. Paul Connelly, a former CISO turned board
advisor, independent director, and mentor, finds that many CISOs focus too
heavily on metrics while the board is looking for strategic insights. The board
doesn’t need to know the results of your phishing test, says Connelly. Boards
are focused on the risks the organization faces, the strategies to address
those risks, progress updates, obstacles to success, and whether they’re
tackling the right things. “I coach CISOs to study their board: read their
bios, understand their background, and understand the fiduciary responsibility
of a board,” he says. The goal is for CISOs to understand the board’s make-up
and priorities and to channel their own metrics into risk and threat analysis
for the business. Using this information, CISOs can develop a story about their
program that is aligned with the business. “That high-level story, supported by
measurements, is what boards want to hear, not a bunch of metrics on malicious
emails and critical patches or scary Chicken Little-type of threats,” Connelly
tells CSO. However, the conversation is not one-way, and many CISOs are
engaging with boards that lack the skills and understanding needed to foster
meaningful discussions about cyber threats. “Very few boards have any directors
with true expertise in technology or cyber,” says Connelly.

The future of insurance is digital, intelligent, and customer-first

The Indian insurance sector is undergoing transformative change, driven by
insurtech innovations, personalised policies, and efficient claim settlements.
Reliance General Insurance leads this evolution by integrating AI, data
science, and automation to enhance customer experiences. According to Deloitte,
70% of Central European insurers have recently partnered with insurtech firms,
with 74% expressing satisfaction, highlighting the global trend toward
technological collaboration. By emphasising innovation, speed, and
customer-centric measures, the industry aims to demystify insurance, boost its
adoption, and eliminate service hindrances, steering towards a
technology-oriented future. ... Protecting our customers’ data is essential at
Reliance General Insurance. To prevent misuse of customer information, the
company employs a strong multi-layered security framework involving encryption,
threat intelligence services, and real-time monitoring. To help mitigate these
risks, we also offer cyber insurance products. ... While self-regulatory
innovation marks progressive strides, risk management becomes paramount in the
adoption of insurtech solutions. Seamlessly integrating new technologies is the
objective, and Reliance General employs constant feedback monitoring to ensure
new technologies meet security and regulatory standards.

Examining the business case for multi-million token LLMs

As enterprises weigh the costs of scaling infrastructure against potential gains
in productivity and accuracy, the question remains: are we unlocking new
frontiers in AI reasoning, or simply stretching the limits of token memory
without meaningful improvement? This article examines the technical and
economic trade-offs, benchmarking challenges, and evolving enterprise workflows
shaping the future of large-context LLMs. ... Increasing the context window also
helps the model reference relevant details and reduces the likelihood of
generating incorrect or fabricated information. A 2024 Stanford study found that
128K-token models reduced hallucination rates by 18% compared to RAG systems
when analyzing merger agreements. However, early adopters have reported some
challenges: JPMorgan Chase’s research shows that models perform poorly on
approximately 75% of their context, with performance on complex financial tasks
collapsing to near zero beyond 32K tokens. Models still broadly struggle with
long-range recall, often prioritizing recent data over deeper insights. This
raises questions: does a 4-million-token window truly enhance reasoning, or is
it just a costly expansion of memory? How much of this vast input does the
model actually use? And do the benefits outweigh the rising computational costs?
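
One common way to probe how much of a long input a model actually uses is a needle-in-a-haystack test. It plants a single fact at different depths in long filler text and checks whether the model can retrieve it. The sketch below is a generic version of that idea, not the methodology of the Stanford or JPMorgan Chase studies. `ask_model` is a placeholder for whatever function sends a prompt to the model under test and returns its reply, and the helper names are hypothetical.

```python
from typing import Callable


def build_needle_prompt(filler: list[str], needle: str, depth: float) -> str:
    """Insert `needle` at fractional `depth` of the filler (0.0 = start, 1.0 = end)."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    idx = round(depth * len(filler))
    return " ".join(filler[:idx] + [needle] + filler[idx:])


def recall_by_depth(
    ask_model: Callable[[str], str],  # placeholder for the model client under test
    filler: list[str],
    needle: str,
    question: str,
    answer: str,
    depths: list[float],
) -> dict[float, bool]:
    """Map each depth to whether the model's reply contains the expected answer."""
    results = {}
    for depth in depths:
        context = build_needle_prompt(filler, needle, depth)
        reply = ask_model(f"{context}\n\nQuestion: {question}")
        results[depth] = answer.lower() in reply.lower()
    return results
```

As a sanity check, a stand-in "model" that only reads the last 200 characters of its prompt retrieves the needle at depth 1.0 but not at 0.0 or 0.5. That mirrors the recency bias described above. A real evaluation would sweep many depths and context lengths against an actual model.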

IT compensation satisfaction at an all-time low

“We’re going through a leveling of the economy right now,” Sutton said, adding
that during difficult business periods employees crave consistency and
reliability. “There is a little bit of satisfaction and contentment with what is
seen as a stable role.” Industry observers also said that although money is a
critical factor in how appreciated employees feel, unhappiness with one’s IT
role is often a result of other factors, such as changing job descriptions and a
general lack of job security. “Compensation is not the only tool enterprises
have to improve employee experience and satisfaction. Enterprises can make sure
that their employees are focused on work that excites them and they can see the
value of,” Forrester’s Mark said. “Provide ample opportunities for upskilling in
line not just with the technology strategy, but also with employees’ career
aspirations. Ensure that employees feel empowered and have autonomy over
decisions which impact them, and of course manage work-life balance,
demonstrating that organizations do not simply value the work outputs, but the
employees themselves as unique individuals.” Matt Kimball, VP and principal
analyst for Moor Insights and Strategy, agreed that employee sentiment goes well
beyond salary and bonuses.

Amazon Gift Card Email Hooks Microsoft Credentials

The Cofense Phishing Defense Center (PDC) has recently identified a new
credential phishing campaign that uses an email disguised as an Amazon e-gift
card from the recipient’s employer. While the email appears to offer a
substantial reward, its true purpose is to harvest Microsoft credentials from
unsuspecting recipients. The combination of a large monetary value and an email
that appears to come from the recipient’s employer lulls them into a false sense
of security, leaving them unaware of the dangers ahead. ... Once the recipient
submits their email address, they are redirected to a phishing page, as shown
in Figure 3. The phishing page is well disguised as a legitimate Microsoft login
site, once again prompting the victim to enter their credentials. Legitimate
Microsoft Outlook login pages should be hosted on domains belonging to Microsoft
(such as live.com or outlook.com), but as you can see in Figure 3, the domain
for this site is officefilecenter[.]com, which was created less than a month
before the time of analysis. Credential phishing emails such as these are a
perfect example of the various ways threat actors can exploit the emotions of
recipients. Whether it is the theme of the phish, the content within, or the
time of year, threat actors will use anything they can to make sure you do not
catch on until it’s too late.
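
The domain check described above is easy to automate. This minimal sketch assumes a small, hand-maintained allowlist: live.com and outlook.com from the text, plus microsoftonline.com and microsoft.com. A real deployment would need Microsoft's full published domain list. The `is_trusted_login_host` helper is hypothetical. It accepts a URL only if its host is an allowed domain or a subdomain of one, so lookalikes such as `outlook.com.example.net` fail, and so does a freshly registered domain like the one in this campaign. Passing this check is necessary, not sufficient, evidence that a page is legitimate.

```python
from urllib.parse import urlsplit

# Assumed, deliberately incomplete allowlist of Microsoft login domains.
MICROSOFT_LOGIN_DOMAINS = {"live.com", "outlook.com", "microsoftonline.com", "microsoft.com"}


def is_trusted_login_host(url: str, allowed: set[str] = MICROSOFT_LOGIN_DOMAINS) -> bool:
    """True if the URL's host equals an allowed domain or is a subdomain of one."""
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    # Require an exact match or a "." boundary so "notlive.com" does not match "live.com".
    return any(host == d or host.endswith("." + d) for d in allowed)
```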

Driving Sustainability Forward with IIoT: Smarter Processes for a Greener Future

AI-driven IIoT systems are transforming how industries manage raw materials,
inventory, and human resources. In smart factories, AI forecasts demand,
streamlines production schedules, and optimizes supply chains to reduce waste
and emissions. For instance, AI calculates the exact quantity of materials
needed for production, preventing overstocking and minimizing excess. It also
enhances SIOP (sales, inventory, and operations planning) and logistics by
consolidating shipments and selecting eco-friendly transportation routes,
reducing the carbon footprint of global supply chains. AI-powered predictive
maintenance also contributes by detecting equipment issues early, preventing
breakdowns, extending equipment lifespan and uptime, and reducing defective
output. ... IIoT is a key enabler of the circular economy, which focuses on
recycling, reusing, and reducing waste. Automated systems allow manufacturers to
recycle heat, water, and materials within their facilities, creating
closed-loop processes. For example, excess heat from industrial ovens can be
captured and repurposed for heating water or other facility needs. Sensors
monitor production processes to optimize material usage and reduce scrap, and
product take-back programs are another cornerstone of the circular economy.
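
Calculating the exact quantity of materials rests on ordinary netting arithmetic, and an AI demand forecast would supply its planned production volume. The sketch below shows only that deterministic step, a simplified MRP-style net requirement, and does not cover the AI forecasting itself. The function name, the scrap-rate adjustment, and the pack-size rounding are illustrative assumptions.

```python
import math


def net_material_requirement(
    planned_units: float,
    qty_per_unit: float,
    on_hand: float,
    scrap_rate: float = 0.0,
    pack_size: int = 1,
) -> int:
    """Material to order for a production run (simplified MRP-style netting).

    gross = planned_units * qty_per_unit / (1 - scrap_rate)
    net   = max(0, gross - on_hand), rounded up to a whole number of packs
    """
    if not 0.0 <= scrap_rate < 1.0:
        raise ValueError("scrap_rate must be in [0, 1)")
    gross = planned_units * qty_per_unit / (1.0 - scrap_rate)  # allow for expected scrap
    net = max(0.0, gross - on_hand)                            # use existing stock first
    return math.ceil(net / pack_size) * pack_size              # whole packs only
```

For example, 1,000 planned units at 2.0 kg each, 450 kg on hand, a 20% scrap allowance, and 100 kg packs give a gross need of about 2,500 kg and a net need of about 2,050 kg. That becomes an order of 2,100 kg.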
