Mergers and Acquisitions in Healthcare: The Security Risks

Incidents such as the CommonSpirit ransomware attack highlight the critical

importance for entities to carefully assess and address potential IT security

risks involving a potential merger or acquisition, experts say. "We are seeing

that well-established health systems or entities that have very mature

cybersecurity programs take on an entity which is less secure," says John Riggi,

national adviser for cybersecurity and risk at the American Hospital

Association. The association advises hospitals to give cyber risk the same

priority as financial analysis in a merger. But determining and

identifying the array of systems and myriad of devices used by another

healthcare entity that's being acquired is not easy. "When you buy an

organization, you typically don't know everything you're buying," says Kathy

Hughes, CISO of New York-based Northwell Health. Northwell has 21 hospitals and

over 550 outpatient facilities, many of which it acquired, and is itself the

result of a 1997 merger between North Shore Health System and Long Island

Jewish Medical Center.

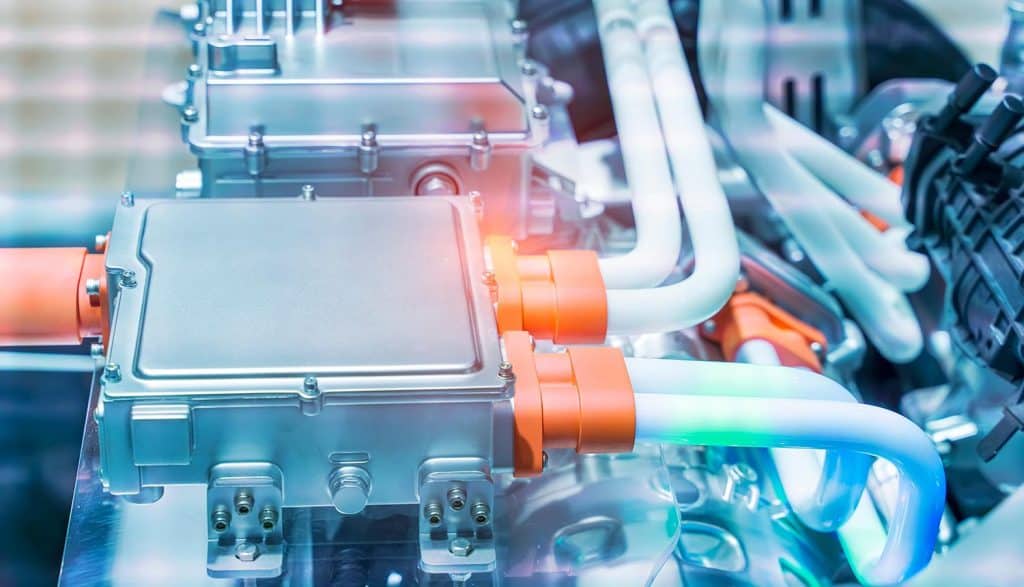

Forget ChatGPT vs Bard, The Real Battle is GPUs vs TPUs

Solving for efficient matrix multiplication can cut down on the amount of

compute resources required for training and inference tasks. While other

methods like quantisation and model shrinking have also been shown to cut down

on compute, they sacrifice accuracy. A tech giant creating a state-of-the-art

model would rather spend the $5 million if there’s no way to

cut costs. ... NVIDIA’s GPUs were well-suited to matrix multiplication

tasks due to their hardware architecture, as they were able to effectively

parallelise across multiple CUDA cores. Training models on GPUs became the

status quo for deep learning in 2012, and the industry has never looked back.

Building on this, Google also launched the first version of the tensor

processing unit (TPU) in 2016, which contains custom ASICs (application-specific

integrated circuits) optimised for tensor calculations. In addition to this

optimisation, TPUs also work extremely well with Google’s TensorFlow framework,

the tool of choice for machine learning engineers at the company.
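
The accuracy trade-off of quantisation mentioned above can be illustrated with a minimal sketch. It assumes a simple symmetric 8-bit scheme with one scale factor per matrix, which is only one of several possible schemes; the function names and the sample matrices are hypothetical and do not come from the article. It runs in plain Python on the CPU and says nothing about GPU or TPU behaviour.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    cols_b = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols_b] for row in a]


def quantise(m, bits=8):
    """Map floats to signed integers using a single scale factor (assumed scheme)."""
    max_abs = max(abs(v) for row in m for v in row)
    levels = 2 ** (bits - 1) - 1  # 127 for 8 bits
    scale = max_abs / levels if max_abs else 1.0
    return [[round(v / scale) for v in row] for row in m], scale


def quantised_matmul(a, b, bits=8):
    """Multiply in integer space, then rescale the result back to floats."""
    qa, scale_a = quantise(a, bits)
    qb, scale_b = quantise(b, bits)
    product = matmul(qa, qb)  # integer arithmetic only
    return [[v * scale_a * scale_b for v in row] for row in product]


# Hypothetical sample data, chosen only for illustration.
a = [[0.12, -0.87, 0.45], [0.93, 0.08, -0.31]]
b = [[0.5, -0.2], [0.77, 0.64], [-0.15, 0.99]]

exact = matmul(a, b)
approx = quantised_matmul(a, b)
max_error = max(
    abs(e - q) for exact_row, approx_row in zip(exact, approx)
    for e, q in zip(exact_row, approx_row)
)
print(f"largest absolute error: {max_error:.5f}")
```

The integer product is cheaper to compute and store, but the result no longer matches the floating-point product exactly, which is the kind of accuracy loss the excerpt refers to.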

As Digital Trade Expands, Data Governance Fragments

The upshot is that we are still far from any more global efforts. Even

preliminary convergence on national laws about data protection and privacy

between the United States and the European Union is difficult to achieve.

Instead, Aaronson advocated for the establishment of a new international

organization that could provide proper incentives to, and pay, global firms to

share data. Overall, the panellists urged that technical discussions of data

flows, data governance and rules for digital trade be contextualized within

fundamental concerns about the nature of data and the role of human rights.

These concerns equally require attention and governance. The discussion on

effective digital governance requires a fundamental rethink of the nature of

data. As emphasized by panellist Kyung Sin Park, data embeds fundamental human

freedoms and human information. It is closely linked to human rights. Data is

much more than an economic asset used in training artificial intelligence (AI)

algorithms.

Fall in Love with the Problem, Not the Solution: A Handbook for Entrepreneurs

Think of a problem—a big problem, something worth solving, something that

would make the world a better place. Ask yourself, who has this problem? If

you happen to be the only person on the planet with this problem, then go to a

shrink. It’s much cheaper and easier than building a startup. But if a lot of

people have this problem, go and speak with those people to understand their

perception of the problem. Know the reality, and only then start building the

solution. If you follow this path and your solution works, it’s guaranteed to

create value. But there is a more important part to this. Imagine speaking

with people and their feedback is, yeah, go ahead and solve that for me—this

is a big problem. All of a sudden you feel committed to this journey. You

essentially fall in love with the problem. Falling in love with the problem

dramatically increases your likelihood of being successful because the problem

becomes the north star of your journey, keeping you focused.

Data Mobility Framework: Expert Offers Four Keys to Know

It’s common for hybrid work teams to schedule when employees will be in the

office and when they’ll work remotely. But while remote workers don’t always

work from the same home office, they do expect similar access to business

data and applications regardless of the network or device they’re using—and

all of this remote connectivity has a material impact on data storage

demands. Organizations try to balance data storage initiatives to address

this without causing downtime to mission-critical applications and data. The

faster organizations can add new storage or move data non-disruptively to

another location, the better services they can deliver to end-users.

Thankfully, the right data migration partner can perform these critical

services non-disruptively in a matter of hours. This enables the

organization and its partners to access a range of capabilities to minimize

data migration efforts, including being able to migrate “hot data” to a new,

more powerful array without downtime. Hot data is any data that is in

constant demand, such as a database or application that’s essential for your

business to operate.

Stop Suffocating Success! 7 Ways Established Businesses Can Start Thinking Like a Startup.

Startups aren't trapped by old rules—they're in the process of inventing

themselves. Obviously, established companies can't just completely throw out

the rulebook. But remember, rules should exist to help, not just because

they've always been there. Otherwise, people wind up blindly following often

annoying processes without thinking about the end goal. For example, if

multiple clients ask for a product feature that hasn't been included, but

there isn't a feature review meeting until the next quarter, does it make

sense to follow the rules and wait? Or should staff be empowered to add the

feature (or, at least, fast-track a product review)? Beware of any policy

that exists because "We've-always-done-things-this-way." ... Incompetent

workers can take a terrible toll. To start, everything's harder when the

people around you don't carry their weight. It's also demoralizing—you're

working so hard and hitting all your goals, while the person next to you

fails spectacularly and apparently isn't penalized for it. Over time, you're

likely to grow bitter or just stop trying so hard since results clearly

don't matter.

The Stubborn Immaturity of Edge Computing

Of course, they don’t even think of it as “the edge”. To them, it’s where

real work takes place. So when IT vendors and cloud providers and carriers

talk about the “far edge” (where real customers and real factories and real

work takes place), that makes no sense to people outside of IT vendors’

data-center-centric bubble. The real world doesn’t revolve around the data

center, or the cloud. What’s really far in the real world? The cloud. The

data center. Edge computing is a technology style that’s part of a digital

transformation trend. Digital transformation has been on a march for

decades, well before we called it that. It’s accelerated because of cloud

computing, and global connectivity. A lot of the technology transformation

has been taking place at the back-end. In data centers, in business models.

And there’s a lot left to be done. But the true green field in digital

transformation is where people and things and factories actually exist. (OK,

we’ll call that the “edge”, but that’s such an old IT-centric way of

talking!)

How the Future of Work Will Be Shaped by No Code AI

No-code, like other breakthroughs, is a thrilling disruption and improvement

in the software development process, particularly for small firms. Among its

various applications, no-code has enabled users with little technical

experience to create applications using pre-built frameworks and templates,

which will undoubtedly lead to further invention, design and development

in the digital town square. It also cuts down on software development time,

allowing for faster implementation of business solutions. Aside from the

time saved, no-code can free up computing and human resources by transferring

these duties to software suppliers. ... No-code is also a game changer for

many AI technology developers and non-technical people since it focuses on

something we never imagined possible in the difficult field of artificial

intelligence: simplicity. Anyone will be able to swiftly build AI apps using

no-code development platforms, which provide a visual, code-free, and

easy-to-use interface for deploying AI and machine learning models.

Code Readability vs Performance: Here is The Verdict

Code performance is critical, especially when working on projects that

require high-speed computation and real-time processing. Slow code can result

in sluggish user experiences. But focusing on the performance of code that is

not readable is useless. Moreover, unreadable code can also be prone to bugs

and errors. Performance is a quirky thing. Starting to write code with

performance as the first priority is not a path that any developer would

take, or even recommend. In a Reddit thread, a developer gives an example of

one piece of code that compiles in 1 millisecond and another that compiles in

0.1 millisecond. No one can really notice the difference between the two

as long as the code is “fast enough”. So improving performance and

focusing on it while sacrificing the readability of the code can be

counterproductive. Moreover, in the same Reddit thread, another developer

pointed out that writing faster algorithms often requires you to write

harder-to-follow code, which again sacrifices readability.
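
As a small illustration of that trade-off, here is a hedged sketch with two hypothetical functions (neither comes from the article or the Reddit thread) that answer the same question. The first spells out its intent step by step. The second relies on a bit trick that avoids the loop but is harder to understand at a glance.

```python
def is_power_of_two_readable(n: int) -> bool:
    """Halve n while it is even; a power of two ends at exactly 1."""
    if n < 1:
        return False
    while n % 2 == 0:
        n //= 2
    return n == 1


def is_power_of_two_fast(n: int) -> bool:
    """A power of two has a single set bit, so n & (n - 1) clears it to 0."""
    return n > 0 and (n & (n - 1)) == 0


# Both versions agree on every input in this range.
for value in range(-5, 1025):
    assert is_power_of_two_readable(value) == is_power_of_two_fast(value)
```

Whether the second version is worth its extra obscurity depends on whether the first is already "fast enough" for the job at hand.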

LockBit Group Goes From Denial to Bargaining Over Royal Mail

LockBit's about-face - "it wasn't us" to "it was us" - is a reminder that

ransomware groups will continue to lie, cheat and steal, so long as they can

profit at a victim's expense. Isn't hitting a piece of Britain's critical

national infrastructure - as in, the national postal service - risky? After

DarkSide hit Colonial Pipeline in the United States in May 2021, for

example, the group first blamed an affiliate before shutting down its

operations and later rebooting under a different name. While hitting CNI

might seem like playing with fire, the consensus among many security experts is that

ransomware groups' target selection remains opportunistic. Both operators

and any affiliates who use their malware, as well as the initial access

brokers from whom they often buy ready-made access to victims' networks,

seem to snare whoever they can catch and then perhaps prioritize victims

based on size and industry. What's notable isn't necessarily that LockBit -

or one of its affiliates - hit Royal Mail, but that it decided to press the

attack.

Quote for the day:

“None of us can afford to play small anymore. The time to step up and

lead is now.” -- Claudio Toyama