What you need to know about site reliability engineering

What is site reliability engineering? The creator of the first site reliability

engineering (SRE) program, Benjamin Treynor Sloss at Google, described it this

way: Site reliability engineering is what happens when you ask a software

engineer to design an operations team. What does that mean? Unlike traditional

system administrators, site reliability engineers (SREs) apply solid software

engineering principles to their day-to-day work. For laypeople, a clearer

definition might be: Site reliability engineering is the discipline of building

and supporting modern production systems at scale. SREs are responsible for

maximizing reliability, performance, availability, latency, efficiency,

monitoring, emergency response, change management, release planning, and

capacity planning for both infrastructure and software. ... SREs should be

spending more time designing solutions than applying band-aids. A general

guideline is for SREs to spend 50% of their time in engineering work, such as

writing code and automating tasks. When an SRE is on call, time should be split

into about 25% managing incidents and 25% on operations duty.

Are blockchains decentralized?

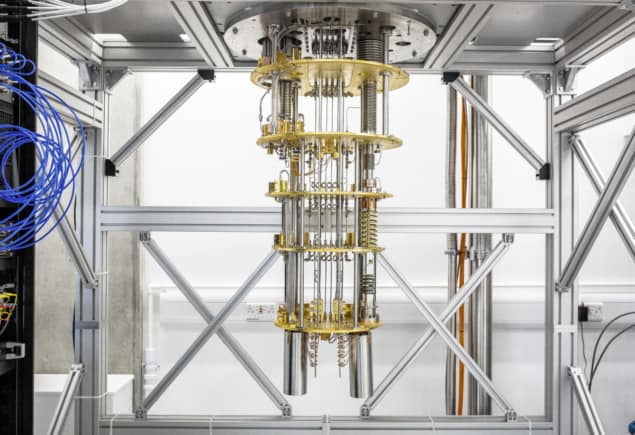

Over the past year, Trail of Bits was engaged by the Defense Advanced Research

Projects Agency (DARPA) to examine the fundamental properties of blockchains and

the cybersecurity risks associated with them. DARPA wanted to understand those

security assumptions and determine to what degree blockchains are actually

decentralized. To answer DARPA’s question, Trail of Bits researchers performed

analyses and meta-analyses of prior academic work and of real-world findings

that had never before been aggregated, updating prior research with new data in

some cases. They also did novel work, building new tools and pursuing original

research. The resulting report is a 30-thousand-foot view of what’s currently

known about blockchain technology. Whether these findings affect financial

markets is out of the scope of the report: our work at Trail of Bits is entirely

about understanding and mitigating security risk. The report also contains links

to the substantial supporting and analytical materials. Our findings are

reproducible, and our research is open-source and freely distributable. So you

can dig in for yourself.

Why The Castle & Moat Approach To Security Is Obsolete

At first, the shift in security strategy went from protecting a single castle

to a “multiple castle” approach. In this scenario, you’d treat each

salesperson’s laptop as a sort of satellite castle. SaaS vendors and cloud

providers played into this idea, trying to convince potential customers not that

they needed an entirely different way to think about security, but rather that,

by using a SaaS product, they were renting a spot in the vendor’s castle. The

problem is that once you have so many castles, the interconnections become

increasingly difficult to protect. And it’s harder to say exactly what is

“inside” your network versus what is hostile wilderness. Zero trust assumes that

the castle system has broken down completely, so that each individual asset is a

fortress of one. Everything is always hostile wilderness, and you operate under

the assumption that you can implicitly trust no one. It’s not an attractive

vision for society, which is why we should probably retire the castle and moat

metaphor, because it does make sense to eliminate the human concept of trust

in our approach to cybersecurity and treat every user as potentially hostile.

Improving AI-based defenses to disrupt human-operated ransomware

Disrupting attacks in their early stages is critical for all sophisticated

attacks but especially human-operated ransomware, where human threat actors seek

to gain privileged access to an organization’s network, move laterally, and

deploy the ransomware payload on as many devices in the network as possible. For

example, with its enhanced AI-driven detection capabilities, Defender for

Endpoint managed to detect and incriminate a ransomware attack early in its

encryption stage, when the attackers had encrypted files on fewer than four

percent (4%) of the organization’s devices, demonstrating improved ability to

disrupt an attack and protect the remaining devices in the organization. This

instance illustrates the importance of the rapid incrimination of suspicious

entities and the prompt disruption of a human-operated ransomware attack. ... A

human-operated ransomware attack generates a lot of noise in the system. During

this phase, solutions like Defender for Endpoint raise many alerts upon

detecting multiple malicious artifacts and behavior on many devices, resulting

in an alert spike.

Reexamining the “5 Laws of Cybersecurity”

The first rule of cybersecurity is to treat everything as if it’s vulnerable

because, of course, everything is vulnerable. Every risk management course,

security certification exam, and audit mindset always emphasizes that there is

no such thing as a 100% secure system. Arguably, the entire cybersecurity

field is founded on this principle. ... The third law of cybersecurity,

originally popularized as one of Brian Krebs’ 3 Rules for Online Safety, aims

to minimize attack surfaces and maximize visibility. While Krebs was referring

only to installed software, the ideology supporting this rule has expanded.

For example, many businesses retain data, systems, and devices they don’t use

or need anymore, especially as they scale, upgrade, or expand. This is like

that old, beloved pair of worn-out running shoes that sits in a closet. This

excess can present unnecessary vulnerabilities, such as a decades-old exploit

discovered in some open source software. ... The final law of cybersecurity

states that organizations should prepare for the worst. This is perhaps truer

than ever, given how rapidly cybercrime is evolving. The risks of a zero-day

exploit are too high for businesses to assume they’ll never become the victims

of a breach.

How to Adopt an SRE Practice (When You’re not Google)

At a very high level, Google defines the core of SRE principles and practices

as an ability to ‘embrace risk.’ Site reliability engineers balance the

organizational need for constant innovation and delivery of new software with

the reliability and performance of production environments. The practice of

SRE grows as the adoption of DevOps grows because they both help balance the

sometimes opposing needs of the development and operations teams. Site

reliability engineers inject processes into the CI/CD and software delivery

workflows to improve performance and reliability, but they also know when to

sacrifice stability for speed. By working closely with DevOps teams to

understand critical components of their applications and infrastructure, SREs

can also learn the non-critical components. Creating transparency across all

teams about the health of their applications and systems can help site

reliability engineers determine a level of risk they can feel comfortable

with. The level of desired service availability and acceptable performance

issues that you can reasonably allow will depend on the type of service you

support as well.
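
To make the idea of a "level of desired service availability" concrete, here is a minimal sketch that converts an availability target into the downtime it permits over a window. The function name, the 30-day window, and the sample targets are illustrative assumptions, not figures from the article.

```python
# Hypothetical helper: the name, default window, and sample targets
# are assumptions for illustration, not taken from the article.
MINUTES_PER_DAY = 24 * 60


def allowed_downtime_minutes(availability_target: float, window_days: int = 30) -> float:
    """Return the minutes of downtime a target permits over the window."""
    if not 0.0 < availability_target <= 1.0:
        raise ValueError("availability_target must be in (0, 1]")
    # The unavailable fraction of the window is simply 1 - target.
    return (1.0 - availability_target) * window_days * MINUTES_PER_DAY


if __name__ == "__main__":
    for target in (0.99, 0.999, 0.9999):
        minutes = allowed_downtime_minutes(target)
        print(f"{target:.2%} over 30 days -> {minutes:.1f} minutes of downtime")
```

Under these assumptions, a 99% target leaves roughly 432 minutes of downtime in a 30-day window, while 99.9% leaves about 43. That gap is one way a team might weigh how much risk a given service can tolerate.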

Are Snowflake and MongoDB on a collision course?

At first blush, it looks like Snowflake is seeking to get the love from the

crowd that put MongoDB on the map. But a closer look shows that Snowflake is

appealing not to the typical JavaScript developer who works with a variable

schema in a document database, but to developers who may write in various

languages, but are accustomed to running their code as user-defined functions,

user-defined table functions or stored procedures in a relational database.

There’s a similar issue with data scientists and data engineers working in

Snowpark, but with one notable exception: They have the option to execute

their code through external functions. That, of course, prompts the debate

over whether it’s more performant to run everything inside the Snowflake

environment or bring in an external server – one that we’ll explore in another

post. While document-oriented developers working with JSON might perceive SQL

UDFs as foreign territory, Snowflake is making one message quite clear with

the Native Application Framework: As long as developers want to run their code

in UDFs, they will be just as welcome to profit off their work as the data

folks.

Fermyon wants to reinvent the way programmers develop microservices

If you’re thinking the solution sounds a lot like serverless, you’re not

wrong, but Matt Butcher, co-founder and CEO at Fermyon, says that instead of

forcing a function-based programming paradigm, the startup decided to use

WebAssembly, a much more robust programming environment, originally created

for the browser. Using WebAssembly solved a bunch of problems for the company

including security, speed and efficiency in terms of resources. “All those

things that made it good for the browser were actually really good for the

cloud. The whole isolation model that keeps WebAssembly from being able to

attack the hosts through the browser was the same kind of [security] model we

wanted on the cloud side,” Butcher explained. What’s more, a WebAssembly

module could download really quickly and execute instantly to solve any

performance questions, and finally instead of having a bunch of servers that

are just sitting around waiting in case there’s peak traffic, Fermyon can

start them up nearly instantly and run them on demand.

Metaverse Standards Forum Launches to Solve Interoperability

According to Trevett, the new forum will not concern itself with philosophical

debates about what the metaverse will be in 10-20 years’ time. However, he

thinks the metaverse is “going to be a mixture of the connectivity of the web,

some kind of evolution of the web, mixed in with spatial computing.” He added

that spatial computing is a broad term, but here refers to “3D modeling of the

real world, especially in interaction through augmented and virtual reality.”

“No one really knows how it’s all going to come together,” said Trevett. “But

that’s okay. For the purposes of the forum, we don’t really need to know. What

we are concerned with is that there are clear, short-term interoperability

problems to be solved.” Trevett noted that there are already multiple

standards organizations for the internet, including of course the W3C for web

standards. What MSF is trying to do is help coordinate them, when it comes to

the evolving metaverse. “We are bringing together the standards organizations

in one place, where we can coordinate between each other but also have good

close relationships with the industry that [is] trying to use our standards,”

he said.

What We Now Know: Digital Transformation Reaches a Point of Clarity

Technology adoption, as part of a digital transformation initiative, is

generally of a greater scale and impact than what most are accustomed to,

primarily because we are looking not only to revamp parts of our IT

enterprise, but to also introduce brand new technology architecture

environments comprised of a combination of heavy-duty systems. In addition to

the due diligence that comes with planning for and incorporating new

technology innovations, with digital transformation initiatives we need to be

extra careful not to be lured into over-automation. The reengineering and

optimization of our business processes in support of enhancing productivity

and customer-centricity need to be balanced with practical considerations and

the opportunity to first prove that a given enhancement is actually effective

with our customers before building enhancements upon it. If we automate too

much too soon, it will be painful to roll back, both financially and

organizationally. Laying out a phased approach will avoid this.

Quote for the day:

"Real leadership is being the person

others will gladly and confidently follow." -- John C. Maxwell