Yann LeCun: AI Doesn’t Need Our Supervision

Self-supervised learning (SSL) allows us to train a system to learn good representations of the inputs in a task-independent way. Because SSL training uses unlabeled data, we can use very large training sets and get the system to learn more robust and more complete representations of the inputs. It then takes only a small amount of labeled data to get good performance on any supervised task. This greatly reduces the amount of labeled data [endemic to] pure supervised learning, and makes the system more robust and better able to handle inputs that differ from the labeled training samples. It also sometimes reduces the system’s sensitivity to bias in the data, an improvement about which we’ll share more of our insights in research to be made public in the coming weeks. What’s happening now in practical AI systems is that we are moving toward larger architectures that are pretrained with SSL on large amounts of unlabeled data. These can be used for a wide variety of tasks. For example, Meta AI now has language-translation systems that can handle a couple hundred languages.
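The key claim above is that SSL gets its training signal from unlabeled data. The excerpt does not say which SSL objective Meta uses; masked prediction, the pretext task popularised by BERT-style language models, is one common choice. The minimal sketch below, assuming simple whitespace tokens and a hypothetical helper `make_masked_examples`, shows how an unlabeled sequence can be turned into input/target pairs with no human annotation.

```python
import random
from typing import List, Tuple

MASK = "[MASK]"  # placeholder token; the name is an illustrative assumption


def make_masked_examples(
    tokens: List[str], mask_prob: float = 0.15, seed: int = 0
) -> Tuple[List[str], List[Tuple[int, str]]]:
    """Turn an unlabeled token sequence into (masked input, targets).

    The targets are the original tokens at the masked positions, so the
    data supplies its own labels: no annotator is involved.
    """
    rng = random.Random(seed)  # seeded for reproducible masking
    masked = list(tokens)      # copy so the caller's list is not mutated
    targets: List[Tuple[int, str]] = []
    for i, tok in enumerate(tokens):
        if rng.random() < mask_prob:
            masked[i] = MASK
            targets.append((i, tok))
    return masked, targets


if __name__ == "__main__":
    sentence = "the cat sat on the mat".split()
    inp, tgt = make_masked_examples(sentence, mask_prob=0.3, seed=1)
    print(inp)  # model input with some tokens hidden
    print(tgt)  # (position, original token) pairs the model must predict
```

A model pretrained to predict `tgt` from `inp` over a large corpus learns general representations; the small labeled set mentioned above is then used only for fine-tuning.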

Leading from the top to create a resilient organisation

In the rush to keep operations going, many businesses made quick decisions and often adopted the wrong services for their organisation. Our own research found that over half (53%) of UK IT decision makers believe they made unnecessary tech investments during the Covid-19 pandemic and, by speeding up or ignoring their original strategy, have hindered their long-term resilience. One thing almost all businesses have recognised throughout the pandemic is that their people are the most critical and limiting factor in their business. Employee time is valuable, and without technology that supports employees in their roles, productivity will drop and employees may become an internal cyber security threat. If businesses acknowledge that hybrid is the new normal and that their people should be the priority, they can go some way towards understanding how IT moves from an expense to a source of value. Although most of this has stemmed from a pandemic no one could have predicted, businesses and their leaders must now make sure they haven’t created the perfect storm of a distributed, disconnected workforce that is at risk of service outages.

Details of NSA-linked Bvp47 Linux backdoor shared by researchers

The attacks employing the Bvp47 backdoor have been dubbed 'Operation Telescreen' by Pangu Lab. A telescreen was a device envisioned by George Orwell in his novel 1984 that enabled the state to remotely monitor people in order to control them. According to Pangu Lab researchers, the malicious code of Bvp47 was developed to give operators long-term control over compromised machines. 'The tool is well-designed, powerful, and widely adapted. Its network attack capability equipped by 0-day vulnerabilities was unstoppable, and its data acquisition under covert control was with little effort,' they said. Complex code, Linux multi-version platform adaptation, segment encryption and decryption, and extensive rootkit anti-tracking mechanisms are all part of Bvp47's implementation. It also features an advanced BPF engine, which is employed in advanced covert channels, as well as a communication encryption and decryption procedure. The researchers say the attribution to the Equation Group is based on the fact that the sample code shows similarities with exploits contained in the encrypted archive file 'eqgrp-auction-file.tar.xz.gpg', which was posted by the Shadow Brokers after their failed auction in 2016.

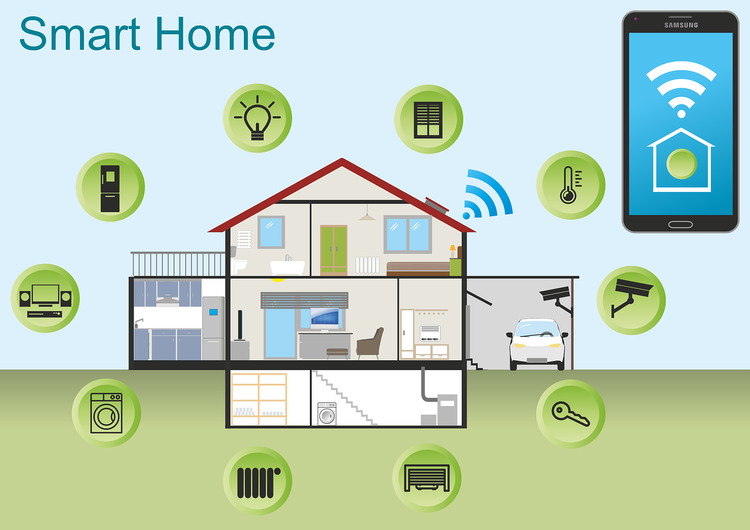

Cloud computing vs fog computing vs edge computing: The future of IoT

Cloud computing is the delivery of on-demand services or resources over the internet, giving users seamless access to resources from remote locations without the time, cost or staff needed to provision them in-house. Switching from building in-house data centres to cloud computing helps a company reduce its investment and maintenance costs considerably. ... Fog computing is a type of computing architecture that uses a series of nodes to receive and process data from IoT devices in real time. It is a decentralised infrastructure that provides access to the entry points of various service providers to compute, store, transmit and process data over a network area. This approach significantly improves efficiency, because less time is spent transmitting and processing data. In addition, the use of protocol gateways helps keep the data secure. ... Cloud or fog processing can prove unreliable for applications that require instantaneous responses with tightly managed latency. Edge computing deals with processing persistent data situated near the data source, in a region considered the ‘edge’ of the apparatus.
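The trade-off described above (cloud is cheaper to run, edge is faster to reach, fog sits in between) can be sketched as a simple placement rule. This is a minimal illustration, not a standard algorithm: the `Tier` type, the `place_workload` helper and especially the round-trip latencies are hypothetical placeholders, not measurements, and the policy of preferring the most centralised tier that still meets the latency budget is an assumption based on the cost argument in the text.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Tier:
    name: str
    round_trip_ms: float  # ASSUMED typical round trip from the device to this tier


# Illustrative placeholder figures only; real latencies depend on the network.
TIERS: List[Tier] = [
    Tier("edge", 5.0),
    Tier("fog", 20.0),
    Tier("cloud", 100.0),
]


def place_workload(latency_budget_ms: float, tiers: List[Tier] = TIERS) -> Optional[str]:
    """Pick the most centralised tier whose round trip fits the latency budget.

    Centralised tiers are assumed to be cheaper to run, so among the tiers
    that meet the deadline we choose the one with the largest round trip.
    Returns None when no tier is fast enough.
    """
    fitting = [t for t in tiers if t.round_trip_ms <= latency_budget_ms]
    if not fitting:
        return None
    return max(fitting, key=lambda t: t.round_trip_ms).name


if __name__ == "__main__":
    for budget in (500.0, 50.0, 10.0, 1.0):
        print(budget, place_workload(budget))
```

A batch analytics job with a generous budget lands in the cloud, while a control loop that needs a response within a few milliseconds can only be served at the edge, which is the situation the text describes for latency-critical applications.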

Data Unions Offer a New Model for User Data

One of the promises of a decentralized Web3 is the notion that we, as users, can all own our data. This is in contrast to Web 2.0, where the prevailing view is that we the users, and our data, are the product being exploited for financial gain by large centralized organizations. A data union is a scalable way to collect real-time data from individuals and package that data for sale in a way that is mutually agreeable to both the data source and the packaging application. Much like workers joining a union in real life to rally around a common set of goals, individuals join data unions to aggregate their data in a controlled way, complete with the ability to vote on how and where the data is used through DAO (decentralized autonomous organization) governance. For users, one challenge to the idea of controlling your data is finding an interested buyer: few data consumers want to go through the hassle of acquiring data from one individual at a time. Data unions solve this by aggregating data from a set of users who opt in.
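The two mechanisms above, pooling data only from members who opt in and letting members vote on its use, can be sketched in plain Python. This is a minimal illustration under stated assumptions: real data unions run on smart contracts, and DAO votes are often token-weighted, whereas this sketch uses a simple one-member-one-vote majority. `Member`, `pooled_dataset` and `proposal_passes` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Member:
    member_id: str
    opted_in: bool
    records: List[dict] = field(default_factory=list)


def pooled_dataset(members: List[Member]) -> List[dict]:
    """Aggregate records only from members who have opted in."""
    return [rec for m in members if m.opted_in for rec in m.records]


def proposal_passes(votes: Dict[str, bool], members: List[Member]) -> bool:
    """ASSUMED rule: one member, one vote; a proposal on how the pooled data
    is used passes if a strict majority of opted-in members vote yes.
    Votes from members who have not opted in are ignored."""
    eligible = {m.member_id for m in members if m.opted_in}
    yes = sum(1 for mid, v in votes.items() if v and mid in eligible)
    return yes * 2 > len(eligible)


if __name__ == "__main__":
    members = [
        Member("alice", True, [{"steps": 8000}, {"steps": 9500}]),
        Member("bob", True, [{"steps": 4000}]),
        Member("carol", False, [{"steps": 12000}]),
    ]
    print(len(pooled_dataset(members)))  # carol's record is excluded
    print(proposal_passes({"alice": True, "bob": True}, members))
```

The buyer sees one pooled dataset rather than negotiating with each individual, which is the problem the excerpt says data unions address.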

How to protect your Kubernetes infrastructure from the Argo CD vulnerability

In terms of the impact of this vulnerability, Apiiro has determined the following (so far). Note that this information was taken from Apiiro’s website at the time of the announcement and may be subject to change; please refer to Apiiro’s website for the latest information. Here’s what we know about the vulnerability and what it could enable an attacker to do: the attacker can read and exfiltrate secrets, tokens, and other sensitive information residing in other applications, and the attacker can “move laterally” from their own application to another application’s data. The vulnerability was given a high severity rating because a malicious Helm chart could potentially expose sensitive information stored in a Git repository and also “roam” through applications, allowing attackers to read secrets, tokens, and sensitive data that reside within them. The team behind Argo CD quickly provided a patch, which impacted organizations should apply as soon as possible because the vulnerability affects all previously released versions of the tool. The patch is available via Argo CD’s GitHub repository.
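Acting on the patch advice starts with knowing whether a given installation already runs a fixed release. The minimal sketch below assumes you have the installed version string (for example from the output of `argocd version`) and copy the list of fixed releases, one per supported minor line, from the official Argo CD security advisory; no version numbers are asserted here. `parse_version` and `is_patched` are hypothetical helpers, and the sketch deliberately rejects pre-release tags such as `-rc1` instead of guessing how they sort.

```python
from typing import List, Tuple


def parse_version(v: str) -> Tuple[int, int, int]:
    """Parse 'v2.2.3' or '2.2.3' into (2, 2, 3).

    Anything other than plain MAJOR.MINOR.PATCH raises ValueError;
    int() fails on parts like '0-rc1', so pre-releases are rejected.
    """
    parts = v.strip().lstrip("v").split(".")
    if len(parts) != 3:
        raise ValueError(f"expected MAJOR.MINOR.PATCH, got {v!r}")
    major, minor, patch = (int(p) for p in parts)
    return major, minor, patch


def is_patched(installed: str, fixed_releases: List[str]) -> bool:
    """Return True if `installed` is at or above the fix for its minor line.

    If the advisory lists no fix for that minor line, the installation is
    treated as patched only when it is newer than every listed fix.
    """
    if not fixed_releases:
        raise ValueError("fixed_releases must come from the official advisory")
    inst = parse_version(installed)
    fixes = [parse_version(f) for f in fixed_releases]
    for fx in fixes:
        if fx[:2] == inst[:2]:  # same MAJOR.MINOR release line
            return inst >= fx
    return all(inst > fx for fx in fixes)
```

Comparing tuples keeps the ordering numeric, so `2.10.0` correctly sorts after `2.9.0`, which a plain string comparison would get wrong.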

Understanding your automation journey

To achieve shorter-term automation goals, businesses need to evaluate their existing automation needs and ask a few key questions. Are they seeking to automate mundane tasks to increase personal productivity, such as processing emails, setting up notifications or organising files? Personal productivity automation is employee-driven and used to tackle multiple tasks for productivity gains at the individual level. Are they seeking to streamline business processes, such as processing a high volume of invoices or moving data from one system to another? Business process automation (BPA) is also employee-driven, but it streamlines business processes to deliver efficiencies and productivity gains across users and departments. Automation might also be an ongoing programme, often run through an automation Centre of Excellence (CoE) that focuses on intricate, enterprise-wide automation and orchestration. CoE-driven automation is fairly complex and has a significant influence on automating connected processes.

Going Digital in the Middle of a Pandemic


Independent work-streams allowed them to work in parallel. Does that mean we did not have any dependencies? Not really. We held a daily stand-up, which we called the Scrum of Scrums, with participation from each development team and a focus on dependencies and impediment resolution during the iteration. Given the nature of the program and its diverse set of stakeholders, we decided to conduct consolidated program iteration planning and showcase events. Development teams would conduct their planning meetings individually and then join this program meeting to share a summary of the key features taken up in the iteration and the sprint goal. Lastly, to give stakeholders a view of how we were progressing against defined release milestones, we tracked progress against iteration goals vis-à-vis release objectives. A release was defined as the set of features required to onboard users from a specific geography. We provided a one-page weekly or fortnightly program summary to senior CIO leadership and program stakeholders, with data from the ALM tool, along with any blockers and issues that needed executive leadership support.

Cyber Insurance's Battle With Cyberwarfare: An IW Special Report

While the clauses were issued in a Lloyd’s Market Association bulletin and allowed individual underwriters flexibility in applying them to individual policies, they were widely interpreted as signifying a shift toward non-coverage. All of Lloyd’s cyber policies are expected to include some variation of these clauses going forward. Lloyd's of London's definition of cyberwar broadly includes “cyber operations between states which are not excluded by the definition of war, cyber war or cyber operations which have a major detrimental impact on a state.” Formal attribution is not necessary for exclusion, an important caveat that allows broad latitude in determining whether a given event is actually cyberwar. “I think you're going to see a lot more of that, unless there is legislation that comes out that more specifically defines cyberwar. I don't think we're really seeing it at this point,” notes Adrian Mak, CEO of AdvisorSmith. The language in the individual contracts is “what is driving the coverage at this point. And also, interpretation of that [language].”

Digital transformation: Do's and don'ts for IT leaders to succeed

Fear is a natural reaction when we enter uncharted territory. The digital transformation journey also requires skill, patience, and a huge financial investment, which adds an extra level of anxiety. Many leaders hesitate to invest resources in an initiative they are unsure of, even when plenty of statistics back it up. If you are feeling uncomfortable, try to focus your energy on embracing your digital transformation initiative and giving it everything it needs to succeed. Remind yourself that in time, you will see the positive results of your efforts and may even grow your business’s revenue. Every enterprise and organization must eventually make digitalization a strategic cornerstone to remain competitive and better serve its constituents. If convenience, scalability, and security are among your business priorities, implementing a thoughtful digital transformation initiative is essential.

Quote for the day:

"Absolute identity with one's cause is the first and great condition of successful leadership." -- Woodrow Wilson