The Metaverse Is Coming; We May Already Be in It

The metaverse has moved beyond science fiction to become a “technosocial
imaginary,” a collective vision of the future held by those with the power to
turn that vision into reality. Facebook recently changed its name to Meta and
committed $10 billion to build out metaverse-related technology. Microsoft just
announced that it was spending a record-breaking $69 billion to buy Activision
Blizzard, the maker of some of the most popular massively multiplayer online
games in the world, including World of Warcraft. This current vision of the
metaverse goes well beyond the simple VR of my ping-pong game to eventually
include augmented reality (AR, where smart glasses project objects onto the
physical world), portable digital goods and currency in the form of nonfungible
tokens (NFTs) and cryptocurrency, realistic AI characters that can pass the
Turing test, and brain-computer interface (BCI) technology. BCIs will
eventually allow us not only to control our avatars via brain waves but also
to beam signals from the metaverse directly into our brains, further muddying
the waters of what is real and what is virtual.

Using Machine Learning for Fast Test Feedback to Developers and Test Suite Optimization

The necessary step of integrating source control and test result data opens up
an “incidental” use case: routing defects to the correct team in multi-team
environments. Sometimes it is not clear which team a defect should be assigned
to. Once you have more than two teams, finding the right team to take care of a
fix can be cumbersome. This can lead to a kind of defect ping-pong between the
teams, because no one feels responsible until the defect is finally assigned to
the correct team. Since the Healthineers data also contains change management
logs, it holds information about defects and their fixes, e.g. which team
performed a fix or which files were changed. In many cases, test cases are
connected to a defect: either existing ones, when a problem is found in a test
run before release, or new tests added because a test gap was identified. This
makes it possible to tackle the problem of the “defect hot potato”. Defects can
be related to test cases in several ways, for example, if a test case is
mentioned in the defect’s description or if the defect management system allows
explicit links between defects and test cases.
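A minimal Python sketch of how such defect-to-test links might feed team routing is shown below. It is an illustration under stated assumptions, not the Healthineers implementation: test cases are assumed to carry IDs of the hypothetical form `TC-1234` that can be found in defect descriptions, and the change management log is assumed to be already reduced to (fixing team, linked test cases) records for past defects. The suggested team is a simple vote over past fixes that share a test case with the new defect; `extract_test_ids` and `suggest_team` are hypothetical names.

```python
import re
from collections import Counter
from typing import Optional

# Hypothetical test-case ID format (assumption), e.g. "TC-1042".
TEST_ID_PATTERN = re.compile(r"\bTC-\d+\b")


def extract_test_ids(description: str) -> set[str]:
    """Find test cases mentioned in a defect's free-text description."""
    return set(TEST_ID_PATTERN.findall(description))


def suggest_team(
    description: str,
    explicit_links: set[str],
    fix_history: list[tuple[str, set[str]]],
) -> Optional[str]:
    """Suggest a team for a new defect.

    description: the new defect's text.
    explicit_links: test cases linked to the defect in the defect tracker.
    fix_history: (team that fixed a past defect, tests linked to that defect).
    Returns the team with the most test-case overlap, or None if nothing overlaps.
    """
    tests = extract_test_ids(description) | explicit_links
    votes: Counter = Counter()
    for team, past_tests in fix_history:
        # One vote per test case shared between the new and the past defect.
        votes[team] += len(tests & past_tests)
    best = votes.most_common(1)
    if not best or best[0][1] == 0:
        return None  # no shared tests: fall back to manual triage
    return best[0][0]
```

A real system would likely weight recent fixes more heavily or combine this signal with the changed files; a plain overlap count just keeps the idea visible.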

Curious about quantum computing

As technologists, it’s our responsibility to keep an eye on these advancements
as well: to learn where they’re headed, to steer our business partners toward
the right use cases for them, and even to help shape what they become. Quantum
computing is one such technology. I find the very idea of quantum computing
fascinating. It takes computer science (the hardware and software that we
created in the computer industry) and blends in the fundamentals of nature,
physics, and other observed sciences. I believe quantum computing is an area
that will fundamentally change the world around us… eventually. But I also find
that there’s a lot of hype and misinformation around quantum computing, with
only a handful of experts truly in a position to discuss its current state (did
you catch what I did there?). I wanted to cut through the hype and go straight
to one of these experts myself to get a better understanding of where quantum
computing is today and where it’s headed. Introducing Dr. John Preskill, a
pioneer in the field of quantum computing. He is the Richard P. Feynman
Professor of Theoretical Physics at the California Institute of Technology,
where he is also the Director of the Institute for Quantum Information and
Matter.

Is Serverless Just a Stopover for Event-Driven Architecture?

Serverless does illustrate many desirable traits. It is easy to scale up and
scale down. It’s triggered by events that are pushed rather than via a polling
mechanism. Functions consume only the resources their job needs, then exit and
free up those resources for other workloads. Developers benefit from the
abstraction of infrastructure and can deploy code easily via their CI/CD
pipelines without having to think about how to provision resources. However,
the point that Aniszczyk alludes to is that serverless isn’t designed for many
situations, including long-running applications. Serverless functions can
actually be more expensive for the end user than running a dedicated
application in containers, in a VM or on bare metal. As an opinionated
solution, it forces developers into the model facilitated by the vendor. In
addition, serverless doesn’t have an easy way to handle state. Finally, though
serverless workloads are largely deployed in the cloud, they aren’t easily
moved across cloud providers: the tooling and mechanisms for managing
serverless are very much specific to each cloud. Perhaps, with the donation of
Knative to the CNCF, a serverless platform could emerge that is developed and
deployed with the support of the industry, much like Kubernetes has been.

Why Big Tech is losing talent to crypto, web3 projects

Another example of a high-profile person leaving big tech for crypto is John
deVadoss, former Managing Director (MD) at Microsoft, where he spent about 16
years of his career in a variety of roles, for example General Manager (GM)
overseeing the developer platform Microsoft .NET and, most recently, building
Microsoft Digital from zero to half a billion dollars of business worldwide.
“I built and led Architecture strategy for .NET at Microsoft; I built the
first enterprise frameworks and tools for Visual Studio .NET; I led
Microsoft’s first application platform product line and strategy, and I also
worked on the Azure developer experience, long before it was called Microsoft
Azure,” says deVadoss in an interview with CryptoSlate. After all these years
at Microsoft, deVadoss joined Neo, the “Chinese Ethereum” blockchain with high
ambitions indeed. ... “I have worked on developer platforms and tools for
over 25 years, and it was a natural move to build the blockchain industry’s
best developer tools and experience for Neo N3, the first polyglot blockchain
platform in the industry and the most developer-friendly,” deVadoss says.

Blockchain: The game-changing technology that’s about to disrupt almost every industry

Blockchain technology can offer effective solutions to banks and non-banking
financial companies (NBFCs) to improve their payment clearing and credit
information systems. It can also enhance the security of online banking
transactions. With blockchain, banks could combine their payment protocols
with smart contracts, which would allow them to establish multiple data points
on each transaction. These data points would in turn enable banks to monitor
their loans, track transactions, and easily manage their invoicing and
financing-related activities. In a blockchain-based banking system, each user
can be given a private key with which to sign every transaction on the ledger,
producing a unique digital signature. So if a banking record is altered at any
point, its digital signature is rendered invalid, and the whole banking network
is notified of the anomaly. ... Cryptocurrencies provide an alternative to
traditional banking for people who remain unbanked for various reasons. Their
use has also been suggested as a way to decouple currencies from traditional
monetary systems. For example, the hyperinflation that began in Venezuela in
2016 resulted in a steep devaluation of the nation’s currency.
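The tamper-evidence idea described above (an altered record no longer matches its signature) can be sketched in Python as a toy ledger. This is not how any particular bank or blockchain works. It swaps in an HMAC keyed with a per-user secret as a stand-in for a real private-key signature, since Python’s standard library has no public-key signing, and it chains each entry to the SHA-256 hash of the previous one. `Ledger`, `entry_hash`, and `sign` are hypothetical names.

```python
import hashlib
import hmac
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(user: str, payload: dict, prev_hash: str) -> str:
    """SHA-256 over the entry contents, chained to the previous entry's hash."""
    data = json.dumps({"user": user, "payload": payload, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(data.encode()).hexdigest()


def sign(key: bytes, digest: str) -> str:
    """HMAC-SHA256: a stand-in (assumption) for a real private-key signature."""
    return hmac.new(key, digest.encode(), hashlib.sha256).hexdigest()


class Ledger:
    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, user: str, payload: dict, key: bytes) -> None:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        digest = entry_hash(user, payload, prev)
        self.entries.append({
            "user": user,
            "payload": dict(payload),  # copy so later edits by the caller don't leak in
            "prev": prev,
            "hash": digest,
            "sig": sign(key, digest),
        })

    def verify(self, keys: dict[str, bytes]) -> list[int]:
        """Return the indexes of entries that no longer match their hash or signature."""
        tampered = []
        prev = GENESIS
        for i, e in enumerate(self.entries):
            digest = entry_hash(e["user"], e["payload"], prev)
            ok = (
                e["prev"] == prev
                and e["hash"] == digest
                and hmac.compare_digest(e["sig"], sign(keys[e["user"]], digest))
            )
            if not ok:
                tampered.append(i)
            prev = e["hash"]  # follow the stored chain link
        return tampered


keys = {"alice": b"alice-secret", "bob": b"bob-secret"}
ledger = Ledger()
ledger.append("alice", {"to": "bob", "amount": 100}, keys["alice"])
ledger.append("bob", {"to": "carol", "amount": 40}, keys["bob"])
print(ledger.verify(keys))  # → []
ledger.entries[0]["payload"]["amount"] = 999  # alter a recorded transaction
print(ledger.verify(keys))  # → [0]
```

Here `verify` only reports which entry was altered; in the scenario the text describes, it would be the other nodes on the network running the same check that flag the anomaly.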

Behind the stalkerware network spilling the private phone data of hundreds of thousands

TechCrunch first discovered the vulnerability as part of a wider exploration
of consumer-grade spyware. The vulnerability is simple, which is what makes it
so damaging: it allows near-unfettered remote access to a device’s data. But
efforts to privately disclose the security flaw to prevent it from being
misused by nefarious actors have been met with silence both from those behind
the operation and from Codero, the web company that hosts the spyware
operation’s back-end server infrastructure. The nature of spyware means those
targeted likely have no idea that their phone is compromised. With no
expectation that the vulnerability will be fixed any time soon, TechCrunch is
now revealing more about the spyware apps and the operation so that owners of
compromised devices can uninstall the spyware themselves, if it’s safe to do
so. Given the complexities in notifying victims, CERT/CC, the vulnerability
disclosure center at Carnegie Mellon University’s Software Engineering
Institute, has also published a note about the spyware.

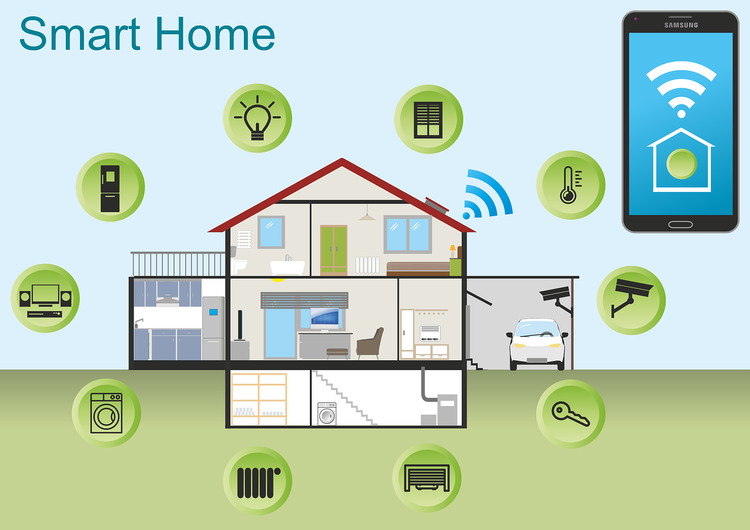

Matter, explained: What is the next-gen smart home standard?

Matter is built on Internet Protocol (IP), the networking protocol behind the
addresses your Wi-Fi router assigns to your connected devices. By natively
integrating an IP-based protocol for smart home devices, Matter leaves no
awkward handoffs or other wireless technologies to deal with. It paves the way
to a future where all Matter-certified devices work alongside each other in
harmony. Bringing our smart home devices together like this not only makes
setup a breeze; it's absolutely essential for designing a single, universal
smart home environment that just works. The ultimate goal is a "set it and
forget it" situation in which these devices fade into the background rather
than sit in the foreground. Thankfully, Matter sounds like what we need to
finally bridge that gap and fix the smart home situation once and for all,
with some of the biggest tech giants working together to make Matter a unified
protocol for the smart homes of the future.

Mitigating Risks in Cloud Native Applications

As the work-from-anywhere model becomes mainstream and cloud applications
continue to surge, new developments are emerging: “security and observability
is converging,” said Tipirneni. While DevOps and IT security have traditionally
been treated as separate disciplines, their roles and responsibilities are
increasingly converging under the DevSecOps trend. “Solving the security
problem and observability problem is your ability to instrument everything that
is happening in the system at a very fine-grained level: from gathering the data
and really making sense of the data,” said Tipirneni. “Developers try to work
around security controls that are complex, but bringing those two together puts
the power in the developers’ hands,” he added. Information security and
development teams have traditionally managed tools such as Tigera’s Calico and
Envoy, but for cloud-first companies that do not have legacy applications,
“DevOps, Cloud Ops engineers are pretty much responsible end to end,” said
Tipirneni. From deploying applications to troubleshooting and managing
compliance and security, “the challenge they have is that there’s just way too
much on their plate to do,” Tipirneni added.

NFT use cases for businesses

NFTs have also shown that they can reveal customers’ interests to
organisations without marketing teams needing to scour Internet usage data. In
time, NFTs could be utilised to learn more about what customers need before a
product is purchased.

Conor Svensson, founder and CEO of Web3 Labs, said: “I believe the true
inflection point of adoption will be when the majority of smartphone users hold
them. Whilst the technology is there to do this currently, only a minority of
people keep NFTs on them. This will be key for true mass adoption.

“An NFT can represent any real-world or virtual good; as it stands, the
greatest value outside of financial for them is the communities that are
forming around holders of them. This is a marketeer’s dream, as prior to NFTs
it wasn’t easy to learn a person was interested in a product or brand unless
they purchased it or engaged with it by signing up for email updates, liking
Twitter posts, etc.

“The NFTs a person holds in a wallet can be viewed as an expression of their
interests, and the fact that this is public information is a powerful tool for
targeting individuals and communities.”
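To make the targeting idea in the quote concrete, the Python sketch below turns wallet holdings into interest profiles and groups wallets into interest communities. It assumes the holdings have already been read from public chain data (fetching them is out of scope), and the collection names, wallet labels, and collection-to-interest mapping are all invented for illustration; `wallet_interests` and `interest_communities` are hypothetical names.

```python
from collections import Counter, defaultdict

# Hypothetical mapping (assumption) from NFT collection names to interest categories.
COLLECTION_INTERESTS = {
    "PixelRacers": "motorsport",
    "TrackDayPass": "motorsport",
    "IndieBeats": "music",
    "GalleryDrops": "art",
}


def wallet_interests(holdings: dict[str, list[str]]) -> dict[str, Counter]:
    """Turn each wallet's public NFT holdings into a count of interest categories.

    Collections missing from the mapping are ignored.
    """
    return {
        wallet: Counter(
            COLLECTION_INTERESTS[c] for c in collections if c in COLLECTION_INTERESTS
        )
        for wallet, collections in holdings.items()
    }


def interest_communities(holdings: dict[str, list[str]]) -> dict[str, set[str]]:
    """Group wallets that share an interest, i.e. the communities around holders."""
    groups: dict[str, set[str]] = defaultdict(set)
    for wallet, profile in wallet_interests(holdings).items():
        for interest in profile:
            groups[interest].add(wallet)
    return dict(groups)
```

With holdings such as `{"0xA": ["PixelRacers", "TrackDayPass"], "0xB": ["IndieBeats", "PixelRacers"]}`, both wallets land in a "motorsport" community and only `0xB` in "music"; real profiling would need far richer signals than a hand-written mapping.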

Quote for the day:

"Leadership is familiar, but not well understood." -- Gerald Weinberg
