How to answer the age-old question: Could this meeting have been an email?

Companies want their people to be productive and their processes and systems to be efficient. “From an efficiency perspective, meetings to discuss a challenging issue or to make a decision are a good investment of time,” says Christie. “It is a better use of the team’s time to get them in a room together for 30 minutes to debate an issue or make a decision, than it is to send multiple emails seeking input, to read all of the input, to synthesize the input, and then arrive at a decision which then needs to be disseminated.” ... Before you send out a calendar invite, consider that a meeting’s cost multiplies with the number of attendees, says Burns. “If a bad six-minute meeting has 10 attendees, that’s an hour of wasted productivity,” he says. “Now imagine a bad 30-minute meeting with three people or more.” Meetings are requests for someone’s time, so carefully weigh their costs and benefits, says Janardan. ... Resist the urge to default to a meeting, says Janardan: “Remind yourself of all the things that got resolved when you had those casual run-ins at work and try to recreate them—whether you are in-person or not. Sometimes the fastest way to come to a solution is to just pick up the phone or physically seek out a colleague if you are spending more time in a physical office.”
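Burns’s point is simple multiplication. A meeting’s cost in lost working time is its length multiplied by the number of people attending. Here are his two examples worked through:

```latex
\begin{aligned}
\text{person-minutes} &= \text{duration (min)} \times \text{attendees} \\
6 \times 10 &= 60\ \text{min} = 1\ \text{hour} \\
30 \times 3 &= 90\ \text{min} = 1.5\ \text{hours}
\end{aligned}
```

Because the second case is “three people or more,” 90 person-minutes is the minimum, and each extra attendee adds another 30.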

Researchers Create New Approach to Detect Brand Impersonation

These attacks, in which adversaries craft content to mimic known brands and trick victims into sharing information, have grown harder to detect as technology and techniques improve, says Justin Grana, applied researcher at Microsoft. While business-related applications are most often spoofed in these types of attacks, criminals can forge brand logos for any organization. "Brand impersonation has increased in its fidelity, in the sense that, at least from a visual [perspective], something that is malicious brand impersonation can look identical to the actual, legitimate content," Grana explains. "There's no more copy-and-paste, or jagged logos." In today's attacks, visual components of brand impersonation almost exactly mimic true content. ... While too many types of content can present a detection challenge, too few can do the same. Many brands, such as regional banks and other small organizations, aren't often seen in brand impersonation, so there might only be a handful of training examples for a system to learn from.

The 10 temptations you should not fall into as a leader

The path of leadership is full of complex situations that call for unpleasant decisions: a layoff, sanctions of various kinds, dropping a tactic, or abandoning a long-standing customer or an established supplier. A frequently observed error is that leaders, out of a lack of courage or fear of losing their collaborators’ admiration, avoid taking this kind of action, naively assuming that “everything passes.” On the contrary, each of these unresolved situations grows in emotional weight as the days go by and leaves an increasingly bitter taste. ... Leaders who fall into this temptation show clear insecurity: they feel that if they do not know everything that happens, they could be in danger. Leaders who adopt mistrust as their standard will then install a hand-picked collaborator from their inner circle whose main responsibility is to report everything colleagues say, do, or even think. The effects are lethal. The leader’s credibility is undermined and collective mistrust is strengthened, seriously eroding the transparency that the team culture needs as the oxygen for its operation.

The path of leadership is plagued with complex situations, which merit making

unpleasant decisions. A layoff, sanctions of different kinds, tackling a tactic,

abandoning a long-standing customer or an established supplier. In this sense, a

frequent error of being observed happens because, due to lack of courage or fear

of losing the admiration of collaborators, one avoids taking this type of

action, with the naive idea of assuming that “everything happens”. On the

contrary, each and every one of these situations that are not resolved tend to

increase their emotional volume as the days go by and constantly acquire an

increasingly bitter taste. ... Leaders who fall into this temptation have a

clear component of insecurity, feeling that if they do not know everything that

happens, they could be in danger. Then, people who use the logic of mistrust as

a standard, will place a collaborator "of his kidney" whose main responsibility

will be to tell him everything that his colleagues say, do or even think. The

effects of this are lethal. The credibility of the leader is undermined and

collective mistrust is strengthened, seriously affecting the transparency that

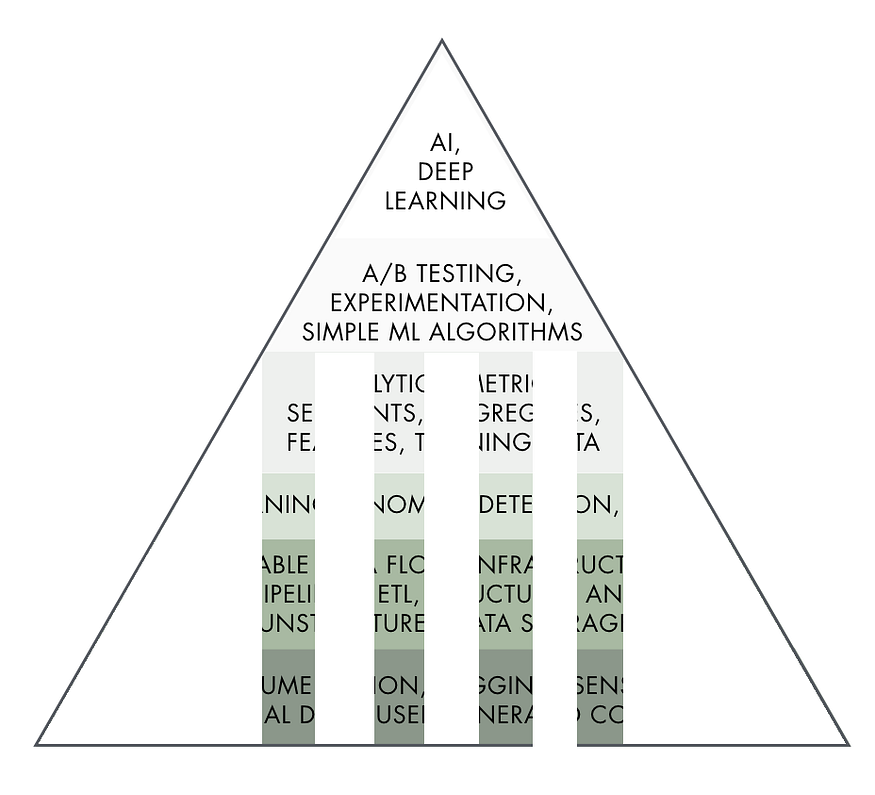

the team culture requires as the oxygen for its operation.Beginner’s Guide To Machine Learning With Apache Spark

Spark is known as a fast, easy-to-use, general-purpose engine for big data processing. Like Hadoop MapReduce, it is a distributed computing engine used to process and analyse large amounts of data, and it is often considerably faster than other processing engines at handling data from various platforms. There is strong demand in the industry for engines that can handle such tasks. Sooner or later, your company or client will ask you to develop sophisticated models that uncover new opportunities or the risks associated with them, and all of this can be done with PySpark. Python and SQL are not hard to learn, which makes PySpark easy to get started with. PySpark is the Python API for Spark, created by the Apache Spark community so that you can use Spark from Python. It lets you work with Resilient Distributed Datasets (RDDs) and DataFrames in Python. PySpark has numerous features that make it easy to use, and it comes with MLlib, Spark’s machine learning library. For huge amounts of data, PySpark provides fast and real-time processing, flexibility, in-memory computation, and various other features.

Facebook AI Releases ‘BlenderBot 2.0’: An Open Source Chatbot

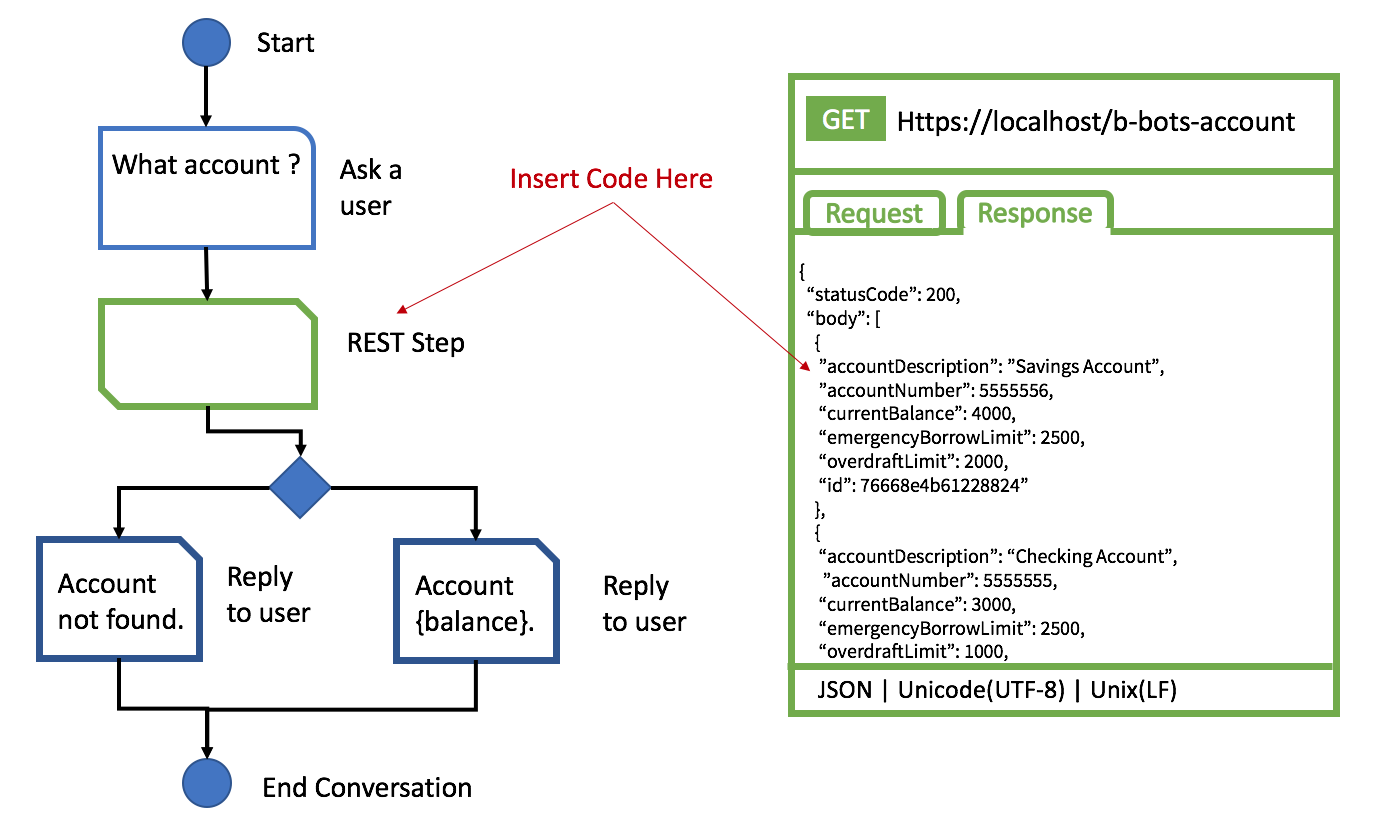

BlenderBot 2.0 is better than the existing state-of-the-art chatbot at holding longer, more knowledgeable, and factually consistent conversations over multiple sessions. Its improved conversational abilities make it a serious contender in conversational AI research. The model takes the information it gathers from conversations and stores it in long-term memory. That knowledge is stored separately for each person the bot speaks with, which ensures that information learned in one conversation cannot be used in another. The model can also read and respond in real time: it can search the internet for new information, which lets it hold more up-to-date conversations and keep up with current events. Facebook AI Research is releasing the complete model, code, and evaluation setup to help advance conversational AI research. To build the training data, the Facebook team combined human conversations with internet searches.
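The Python sketch below makes the idea of per-person memory concrete. The store keeps each user’s facts in a separate bucket, so a lookup for one user can never return another user’s facts. It is hypothetical: `PerUserMemory` and its naive word-overlap ranking are for illustration only, and this is not how BlenderBot 2.0 implements its memory.

```python
from collections import defaultdict


class PerUserMemory:
    """Hypothetical long-term memory that keeps a separate fact list per user."""

    def __init__(self) -> None:
        # user_id -> list of remembered facts (plain strings in this sketch)
        self._facts = defaultdict(list)

    def remember(self, user_id: str, fact: str) -> None:
        self._facts[user_id].append(fact)

    def recall(self, user_id: str, query: str, k: int = 3) -> list[str]:
        """Return up to k of this user's facts ranked by words shared with query."""
        query_words = set(query.lower().split())
        scored = []
        # .get() avoids creating an empty bucket for users we have never seen
        for fact in self._facts.get(user_id, []):
            overlap = len(query_words & set(fact.lower().split()))
            if overlap:
                scored.append((overlap, fact))
        # Highest overlap first; the sort is stable, so ties keep insertion order
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [fact for _, fact in scored[:k]]


if __name__ == "__main__":
    memory = PerUserMemory()
    memory.remember("alice", "Alice has a dog named Rex")
    memory.remember("bob", "Bob is training for a marathon")
    print(memory.recall("alice", "any news about the dog"))  # ['Alice has a dog named Rex']
    print(memory.recall("bob", "any news about the dog"))    # []
```

The important design choice is the partitioning by `user_id`. The ranking method could be swapped for something far better without weakening the guarantee that one user’s memories stay out of another user’s conversation.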

The Evolving Role of the CISO

In the era of the digital workplace, CISOs must not only focus on preventing threats but also create systems that work for the business while keeping everyone protected. Constant innovation and the creation and implementation of unique strategies are already part of the CISO’s job description. ... Decision-making that ties business strategy and security processes into a firm knot is the only way to stand straight amid the fast-paced, ever-changing storm of digital services. The role of the CISO is evolving faster than ever, turning the CISO into a jack of all security and business trades. On Monday, they’re the superheroes keeping cybercriminals out. On Tuesday, they’re improving the organization’s security posture. By the end of the week, they’re C-suite ambassadors reinventing the concept of security, all while delivering positive business value. As the role continues to evolve and the CISO’s depth and breadth of knowledge about the business, its underlying technology, and its core risks keep growing, the role will continue to rise beyond IT, and the CISO will be seen as a peer of the CIO.

Is DeFi the future of financial infrastructure and money?

DeFi apps could benefit from borrowing some legacy concepts, particularly in compliance and consumer experience. For example, they could offer end users a much better front-end experience. The DeFi space also lacks a real concept of customer relationship management and typically collects no consumer data. That is great from a privacy perspective, but there is real value in understanding customers better. DeFi products do undergo security audits, but they offer none of the security guarantees most consumers are accustomed to in traditional finance. Notifications and alerts are also largely absent from the DeFi space. On the product side, there are tools to measure blockchain activity, but none to measure engagement within DeFi applications. Most developers in the crypto space build directly on top of the layer-one protocol itself; developer platforms and middleware don’t exist as concepts yet. In traditional finance, if you make a mistake, a financial institution can roll back the transaction; this doesn’t exist in DeFi yet.

How Blockchain and Cryptocurrency Can Revolutionize Businesses

Unlike traditional card payments, which can be reversed through chargebacks, Bitcoin and other cryptocurrency payments cannot be reversed. Because each transaction is securely recorded, there is a long-term audit trail that can be used to trace transactions and verify their authenticity. As a result, each transaction has greater auditability and accountability, which greatly reduces the likelihood of fraudulent transactions. This auditability can also be used to track other assets, allowing businesses to keep an up-to-date database of various types of information about those assets.

Increased traceability of the supply chain

Blockchain-based applications make it easier to track products and goods as they move through the different stages of the supply chain. The ability to monitor suppliers in real time, eliminate human errors in data updates, and use smart contracts for payments is expected to transform the global supply chain industry. With a more efficient supply chain, organizations can shift their focus to cutting other costs and streamlining other processes, including production.
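The audit-trail property described in this article comes from chaining records together with cryptographic hashes. The Python sketch below is a deliberately simplified, single-machine model of that idea. The `HashChainLedger` class and the sample supplier payments are hypothetical. Each entry stores the hash of the previous entry, so editing any past record breaks verification. Real blockchains add digital signatures and consensus across many nodes, plus proof-of-work in Bitcoin’s case. Those layers are what stop an attacker from simply recomputing every hash after tampering.

```python
import hashlib
import json


def entry_hash(prev_hash: str, tx: dict) -> str:
    """SHA-256 over the previous hash plus a canonical JSON encoding of the transaction."""
    payload = json.dumps({"prev": prev_hash, "tx": tx}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class HashChainLedger:
    """Append-only log in which every entry commits to the one before it (hypothetical)."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, tx: dict) -> None:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        record = dict(tx)  # shallow copy so later edits by the caller don't leak in
        self.entries.append({"tx": record, "prev": prev, "hash": entry_hash(prev, record)})

    def verify(self) -> bool:
        """Recompute the chain from the start; any edited or reordered entry fails."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["tx"]):
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    ledger = HashChainLedger()
    ledger.append({"from": "acme", "to": "supplier-42", "amount": 1000})
    ledger.append({"from": "acme", "to": "carrier-7", "amount": 250})
    print(ledger.verify())                  # True
    ledger.entries[0]["tx"]["amount"] = 10  # rewrite history
    print(ledger.verify())                  # False
```

The same structure serves the supply-chain case. If each shipment handoff is appended as a transaction, the trail of a product can be traced back step by step, and any after-the-fact edit is detectable.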

The lighthouse signals a digital disruption storm

In BFSI (banking, financial services and insurance), with the shift to remote working and collaboration and the growth of digital transactions, cybersecurity became one of the main focus areas during the COVID-19 pandemic, adds Rajdeep Saha, Managing Director, Financial Services – Technology Consulting Practice, Protiviti India. “In this context, secure access service edge (SASE) and Zero Trust model can help create a single cloud-native security service, coupled with other enablers.” Tare recommends a host of measures underscoring the new role that artificial intelligence (AI) and analytics will play. “Cyberattack insurance is now available from several organisations. A bundle like this is a must for all financial institutions. Security analytics, machine learning, and artificial intelligence are some of the cutting-edge technologies that are helping strengthen the cyber defense mechanism. Before threats assault your infrastructure, the finest protection mechanisms detect and neutralise risks.” Implementing PCI-DSS compliance, card payment security, and other measures has reduced the impact of cyber threats on financial institutions, he adds.
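The Zero Trust model Saha mentions rests on one core idea: no request is trusted because of where it comes from. Instead, every request is checked against identity, device health, and a least-privilege policy. The Python sketch below illustrates only that decision logic. The `AccessRequest` fields, the `POLICY` table, and the role and resource names are hypothetical, and real SASE and Zero Trust products evaluate far richer signals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user: str
    mfa_passed: bool            # identity verified for this session
    device_compliant: bool      # e.g. managed and fully patched
    roles: frozenset            # roles assigned to the user
    resource: str               # what the request wants to reach
    on_corporate_network: bool  # recorded, but deliberately never used below


# Hypothetical least-privilege policy: resource -> roles allowed to access it
POLICY = {
    "payments-db": frozenset({"payments-engineer"}),
    "hr-portal": frozenset({"hr", "manager"}),
}


def authorize(req: AccessRequest) -> bool:
    """Verify every request explicitly; network location grants no trust."""
    if not req.mfa_passed or not req.device_compliant:
        return False
    # Unknown resources map to an empty role set, so they are denied by default
    allowed = POLICY.get(req.resource, frozenset())
    return bool(req.roles & allowed)


if __name__ == "__main__":
    in_office_unpatched = AccessRequest(
        "dana", True, False, frozenset({"payments-engineer"}), "payments-db", True)
    remote_compliant = AccessRequest(
        "dana", True, True, frozenset({"payments-engineer"}), "payments-db", False)
    print(authorize(in_office_unpatched))  # False
    print(authorize(remote_compliant))     # True
```

The unpatched laptop is refused even though it sits on the corporate network, while the compliant remote device is allowed. Location plays no part in the decision.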

Is Cryptocurrency-Mining Malware Due for a Comeback?

Cryptocurrency mining refers to solving computationally intensive mathematical tasks. In the case of bitcoin, such tasks are used to verify the blockchain, or public ledger, of transactions. As an incentive, anyone who mines for cryptocurrency has a chance of getting some cryptocurrency back as a reward. But for bitcoin and some other types of cryptocurrency, the reward decreases as more blocks get added. Mining can consume copious amounts of electricity, so much so that some studies have found it would be cheaper to buy gold outright rather than obtain cryptocurrency via mining. Such calculations are always in flux, with the rise and fall in cryptocurrency value. But for attackers, the easiest approach is to have someone else pay for the power while they walk away with the cryptocurrency. ... The takeaway for security teams, as ever, is vigilance, because if attackers can sneak cryptominers onto an organization's systems, eating up processing power and racking up sky-high electricity bills, they might put something nastier there too.

Quote for the day:

"The level of morale is a good barometer of how each of your people is experiencing your leadership." -- Danny Cox