Why Developers Should Learn Kubernetes

Along with DevOps and SRE adoption, there is also a lot of discussion about

“shifting left” in the software development world. At its core, shifting left

means focusing on moving problem detection and prevention earlier in the

software development lifecycle (SDLC) to improve overall quality. More robust,

automated continuous integration/continuous delivery (CI/CD) pipelines and

testing practices are prime examples of how this works. Shifting left applies to

operational best practices as well. Once upon a time, developers would code

their applications and then hand them off to operations to deploy into

production. Things have changed dramatically since that time, and old models

don’t work the way they once did. Knowing about the platform that the

application lives on is critical. Successful engineering organizations work hard

to ensure development and operations teams avoid working in silos. Instead, they

aim to collaborate earlier in the software development lifecycle so that coding,

building, testing and deployments are all well understood by all teams involved

in the process.

Top 5 Programming Languages for Automation Testing

JavaScript is widely used for test automation and performs well when it comes

to validating client-side behaviour in front-end development.

Unavoidably, there are many web applications like Instagram, Accenture, Slack,

and Airbnb which support libraries written through JavaScript automation, such

as instauto, ATOM (Accenture Test Automation Open source Modular Libraries),

Botkit, and Mavericks. Besides, there are various testing frameworks like Jest,

Jasmine, and Nightwatch JS which refine multiple processes of unit testing as

well as end-to-end testing. The reason for using them is that programmers or

developers may build strong web applications primarily focusing on the core

logic of businesses and quickly resolving security-related issues that may occur

anywhere and anytime. With such advantages, teams working for automation testing

won’t feel pressured because the debugging time and other code glitches are

reduced and the productivity is promisingly increased with the shift-left

testing approach.

Trickbot Malware Rebounds with Virtual-Desktop Espionage Module

The latest version of the spy module makes use of virtual network computing

(VNC): hence its name, vncDll. It essentially sets up a virtual desktop that

mirrors the desktop of a victim machine and sets about using it to steal

information. It’s been circulating since late May, researchers said. When first

installed, vncDll uses a custom communications protocol to transmit information

to and from one of the up to nine C2 servers that are defined in its

configuration file. The module will use the first one to which it can connect.

“The port used to communicate with the servers is 443, to avoid arousing the

suspicion of anyone observing the traffic,” according to the Bitdefender

analysis. “Although traffic on this port normally uses SSL or TLS, the data is

sent unencrypted.” The first order of business is to announce to the C2 server

that it’s been installed, and it then waits to receive a set of commands. The C2

connects to an attacker-controlled client, which is a software application that

the attackers use to interact with the victims through the C2 servers.
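
The detail that vncDll sends unencrypted data over port 443 suggests a simple defensive check: flag port-443 flows whose first bytes do not look like a TLS record header. The sketch below is an illustration only, not Bitdefender's detection logic; the function names and the idea of inspecting the first payload bytes of a flow are assumptions made for the example.

```python
# Illustrative heuristic: cleartext on port 443 is unusual and worth a look.
# Function names are hypothetical; this is not taken from the Bitdefender analysis.

TLS_CONTENT_TYPES = {20, 21, 22, 23}  # change_cipher_spec, alert, handshake, application_data


def looks_like_tls_record(first_bytes: bytes) -> bool:
    """Return True if the payload starts with a plausible TLS record header."""
    if len(first_bytes) < 3:
        return False
    content_type, major, minor = first_bytes[0], first_bytes[1], first_bytes[2]
    # Record-layer versions run from 0x0300 (SSL 3.0) to 0x0304.
    return content_type in TLS_CONTENT_TYPES and major == 3 and minor <= 4


def flag_cleartext_on_443(dst_port: int, first_bytes: bytes) -> bool:
    """Flag flows to port 443 that do not begin with a TLS record."""
    return dst_port == 443 and not looks_like_tls_record(first_bytes)
```

A real sensor would also need to handle fragmented or reassembled streams and other protocols that legitimately share the port, so treat a hit as a prompt for investigation rather than a verdict.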

Four common biases in boardroom culture

Boards can be effective only if they can come to a consensus. Let’s say a

company is considering the launch of a significant new product, but five of the

12 directors have concerns going into a meeting on the topic. Some have

discussed the issue among themselves before the meeting. Many are worried about

how the full board discussion will go. In the meeting, one director starts to

share his concerns, but the CEO quickly moves on. Over the course of the

meeting, more and more heads start to nod along. No parts of the strategy for

this new product have changed. But now the entire board appears supportive,

including the director whose concerns were dismissed. Though consensus-building

is important, boards may be too inclined to seek harmony or conformity. This can

lead to groupthink, a much-written-about challenge facing companies in which

dissenting views are not welcomed or even entertained. In fact, though most

boards work to solicit a range of views and come to a consensus on key issues,

the 2020 edition of PwC’s Annual Corporate Directors Survey found that 36% of

directors have difficulty voicing a dissenting view on at least one topic in the

boardroom.

Moving Data is Expensive

Data created at the edge must be accessed and processed by the applications in

the datacenter. The necessity to move data to the application incurs a

productivity penalty. Take media and entertainment: editors, colorists, and

special effects artists in multiple locations may sit idle waiting for data to

become accessible. A 30-minute delay across 200 animators may result in roughly

$400K of unintended cost. Data may have to be moved multiple times, each time incurring

the productivity penalty. Every time data is moved or copied, storage resources

must be made available to store it. Whether it is persistent storage or a

caching device, disk drives are deployed to catch data being sent. Moving 10TB

requires 10TB of storage to be available in every location requiring data

access. The cost of storage varies from $120/TB/yr for archiving tier to

$720/TB/yr for high-performance tier. Every copy created incurs an added storage

cost. These estimates are marginally accurate; procuring small amounts of

storage may be even more costly since economies of scale kick in at over

40TB.
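
The storage arithmetic above can be worked through in a few lines. This is a rough sketch using the per-terabyte prices quoted in the excerpt; the three-location scenario and the function name are assumptions chosen for illustration.

```python
# Yearly storage cost of keeping a full copy of a dataset in every location.
# Prices come from the excerpt; the scenario and names are hypothetical.

ARCHIVE_USD_PER_TB_YEAR = 120
HIGH_PERF_USD_PER_TB_YEAR = 720


def yearly_copy_cost(dataset_tb: float, locations: int, usd_per_tb_year: float) -> float:
    """Each location needs capacity for the whole dataset, so cost scales with both."""
    return dataset_tb * locations * usd_per_tb_year


if __name__ == "__main__":
    for label, price in [("archive", ARCHIVE_USD_PER_TB_YEAR),
                         ("high-performance", HIGH_PERF_USD_PER_TB_YEAR)]:
        cost = yearly_copy_cost(dataset_tb=10, locations=3, usd_per_tb_year=price)
        print(f"10 TB x 3 locations, {label} tier: ${cost:,.0f} per year")
```

Under these assumptions, 10TB copied to three locations costs $3,600 a year on the archiving tier and $21,600 a year on the high-performance tier, before any productivity penalty is counted.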

How to Best Assess Your Security Posture

Risk assessment can help an organization figure out what assets it has, the

ownership of those assets and everything down to patch management. "It involves

figuring out what you want to measure risk around because there are a bunch of

different frameworks out there [such as] NIST and the Cyber Security Maturity

Model (C2M2)," said Bill Lawrence, CISO at risk management platform provider

SecurityGate.io. "Then, in an iterative fashion, you want to take that initial

baseline or snapshot to figure out how well or how poorly they're measuring up

to certain criteria so you can make incremental or sometimes large improvements

to systems to reduce risk." ... "Looking at your own scorecard is a good way to

get started and thinking about assessments because ultimately you're going to be

assigning the same types of weights and risk factors to your vendors," said Mike

Wilkes, CISO at cybersecurity ratings company SecurityScorecard. "We need to get

beyond thinking that you're going to send out an Excel spreadsheet

[questionnaire] once a year to your core vendors."

Leveraging data: what retailers can learn from Netflix

For bricks and mortar retail, collecting data on customers is obviously more

difficult – they don’t need to ‘log in’ in order to enter a shop. Retailers,

however, can track credit cards to group transactions back to a specific

customer, and use this data to link both online and offline sales. AI, when used

smartly in store, can also help retailers to get to know their customers better.

Connected devices and IoT, along with Computer Vision technology (CV), allow

businesses to collect data from sensors, cameras and mobile devices on consumer

behaviours. This can include the items that are picked up or put back down, the

directions visitors move in, whether the shoppers are regulars, or which areas

of the store are most visited. Analysing the data gathered by this suite of

technologies can, in turn, help drive brand loyalty with a tailored in-store

experience. Loyalty programmes continue to have a key role to play in supporting

retailers with data capture (such as behavioural and transactional information),

and analytics are helping loyalty schemes to become more powerful in driving

sales than ever before.
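
The idea of grouping transactions by payment card to link online and offline sales can be sketched as below. It assumes each transaction already carries an opaque `card_token` supplied by the payment processor (raw card numbers should never be stored), and the field names `card_token`, `channel` and `amount` are hypothetical.

```python
# Group sales by an opaque card token so one customer's online and
# in-store purchases end up side by side. Field names are assumptions.
from collections import defaultdict


def link_sales_by_card_token(transactions):
    """Return {card_token: {"online": [amounts], "offline": [amounts]}}."""
    by_customer = defaultdict(lambda: {"online": [], "offline": []})
    for tx in transactions:
        by_customer[tx["card_token"]][tx["channel"]].append(tx["amount"])
    return dict(by_customer)


if __name__ == "__main__":
    sample = [
        {"card_token": "tok_a", "channel": "online", "amount": 25.0},
        {"card_token": "tok_a", "channel": "offline", "amount": 40.0},
        {"card_token": "tok_b", "channel": "offline", "amount": 12.5},
    ]
    print(link_sales_by_card_token(sample))
```

Customers who pay with several cards or with cash will not be linked by this approach, which is one reason loyalty programmes remain useful for data capture.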

The real cost of MSSPs not implementing new tech

Taking on a complex cybersecurity landscape without the right tools can result in serious weaknesses that threaten an organization’s networks and data. Among the potential problem areas:

The comprehension gap - The lack of a translation layer between tactical and strategic stakeholders (i.e., those making reactive decisions and those who plan for the future) can result in separate tools and systems within an organization. This leads to failures in making crucial, time-sensitive decisions, in fully understanding the threat landscape, and in allocating resources effectively.

A regulatory disconnect - Organizations need to balance collaborative cybersecurity efforts with compliance. Various regulations, such as the Federal Information Security Management Act, the General Data Protection Regulation (GDPR) or the California Privacy Rights Act (CPRA), tend to restrict the ability of security platforms to collect and share threat intelligence.

Loss of time and momentum - Without the right tools, security teams can find themselves besieged by a steady onslaught of low-impact events and security control system alerts

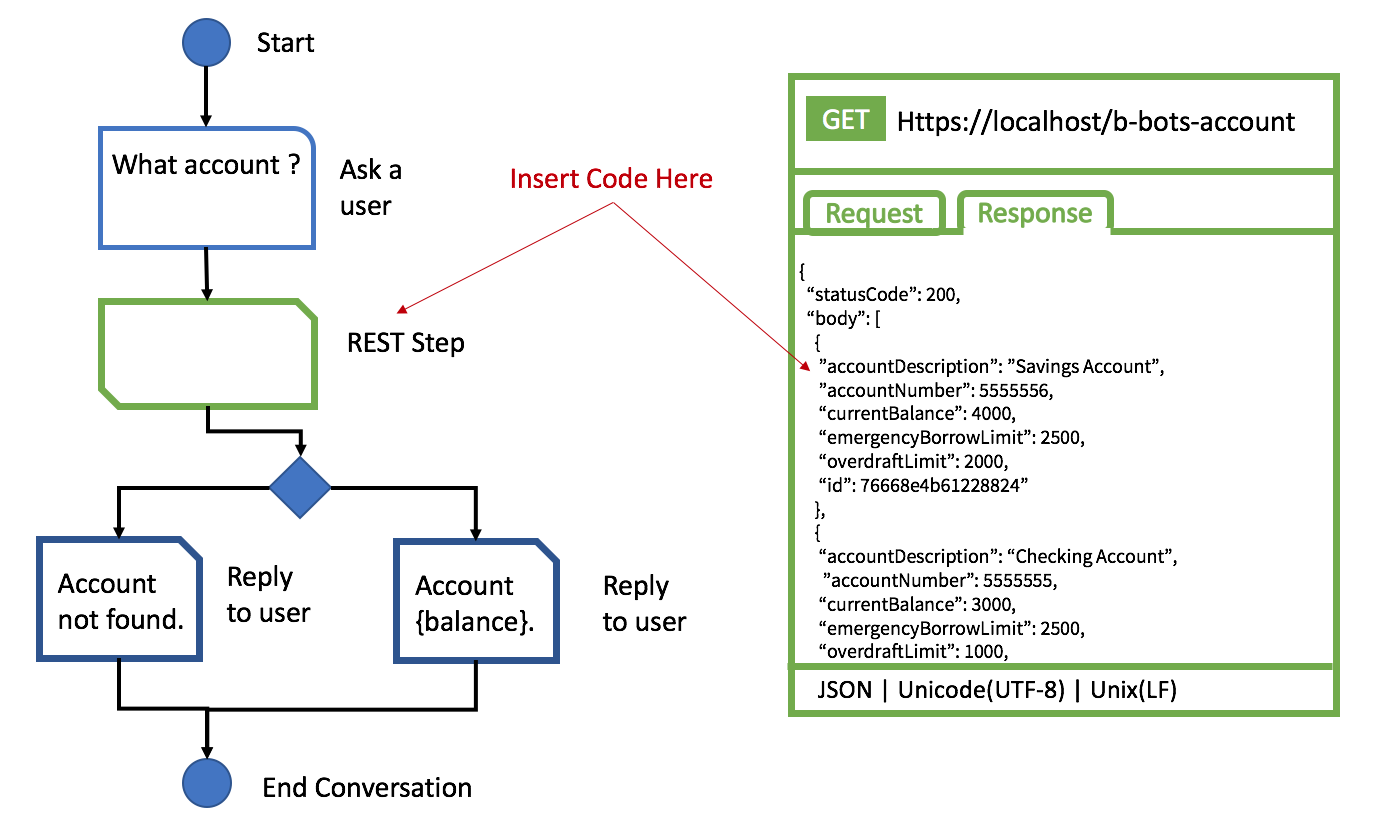

Training NLP Engines Without All of the Answers

Natural Language Processing (NLP) or Natural Language Understanding (NLU) is a

subset of Artificial Intelligence (AI). There are many benefits when using the

technology, and I am surprised at the pushback from technical people when

talking about deploying it. I guess there is a difference between learning

about technology in academia and the complexity of actually deploying it. ...

Another common over-promising statement is that it is easy to build the

conversation and responses. In some cases, you build a simple decision matrix

via the UI. After a while, you find out all of the variables in the

conversation have created a mess. The other option is to create a machine

learning (ML) model to look at data and provide observations and predictions.

You might as well pull out your calculus textbooks and remember how all of

this complex math works to build an ML algorithm. Building ML models is a

specialized discipline in applied mathematics. Just because you can take a

distance learning course does not mean you have the mathematics to build them.

When asking a mathematician how long it will take to observe, hypothesize, and

build an algorithm to try, they will tell you it takes time.

7 Key Insights of Product Management

There are inputs everywhere: feedback from customers, the team, leadership teams; quant data will tell us something and qual data will give us another insight. But are they all equal? Is the "customer always right?" Noooooooo, not necessarily. Using customers as an example: co-designing solutions can be dangerous, but they are good at helping you discover problems, so get them involved here. Good decisions come from proper weighting and attention to the inputs: the data, customer feedback, the market, your experience built from your track record, the team’s competence and so on. Again, it depends on what company, which product, what market. I’ve been a PM carrying almost everything from articulating and validating the initial idea through to writing FAQs and call scripts for the Customer Service Team. I’ve sometimes looked more like an Executive Producer, focused on the vision and strategy, galvanising multiple teams, suppliers and partners and engaging with a multitude of stakeholders. Perhaps you’re a Product Manager as well as a Product Marketer with your emphasis on positioning, pricing and Go-To-Market.

Quote for the day:

"Leaders think and talk about the solutions. Followers think and talk about the problems." -- Brian Tracy
