The future of deep learning, according to its pioneers

“Humans and animals seem to be able to learn massive amounts of background

knowledge about the world, largely by observation, in a task-independent

manner,” Bengio, Hinton, and LeCun write in their paper. “This knowledge

underpins common sense and allows humans to learn complex tasks, such as

driving, with just a few hours of practice.” Elsewhere in the paper, the

scientists note, “[H]umans can generalize in a way that is different and more

powerful than ordinary iid generalization: we can correctly interpret novel

combinations of existing concepts, even if those combinations are extremely

unlikely under our training distribution, so long as they respect high-level

syntactic and semantic patterns we have already learned.” Scientists provide

various solutions to close the gap between AI and human intelligence. One

approach that has been widely discussed in the past few years is hybrid

artificial intelligence that combines neural networks with classical symbolic

systems. Symbol manipulation is a very important part of humans’ ability to

reason about the world. It is also one of the great challenges of deep learning

systems. Bengio, Hinton, and LeCun do not believe in mixing neural networks and

symbolic AI.

Machine Learning for Performance Management

Like whether they are likely to finish on time, or be asked to do overtime.

However, again as humans, we can only process a handful of variables at any one

time and we base our predictions on our past experiences. As none of us can work

24/7, the predictions of one person will likely differ from those of another.

When you consider other factors such as people, differing operating procedures,

machine health, raw material variability, storage and movement conditions and

environmental changes such as weather, the number of variables grows and

human-predictability begins to drop off. This is where the reliance on gut

decisions begins to increase. Gut decisions are those where we cannot easily

explain the rationale. Gut decisions are still based on experience and in fact,

may be the result of combining a lot of inputs and experiences subconsciously

and creating a best guess. They are not the same as a lucky guess. Therefore,

you will likely find that a really experienced operator’s gut decisions

are actually pretty good. Unfortunately, experienced workers are becoming

scarce and the ones we do have are far too useful to be staring at trends all

day!

How Business Leaders Can Foster an Innovative Work Culture

To cultivate a culture of innovation, you must encourage action on creative

ideas. Let your employees feel valued, like they have some autonomy in the idea

creation process. They should be able to feel safe to share bold or crazy ideas

that come to their mind. Trust your team to find new ways to solve problems. If

you’ve never failed, you’ve never taken chances. Taking risks is a big part of

innovation. You have to remind your employees that failure is inevitable and

every idea has a degree of uncertainty, and you can do this by creating a safe

environment where you encourage your team to test their innovative ideas and

even make mistakes that do not cost the company a huge fortune. The important

thing is to learn from your mistakes to ensure that you don’t fail the same way

twice. If you hold back on ideas because of the fear of failing, you’ll stay

confined to the monotony of the status quo and your business will never make any

significant leaps. The important thing to remember is to recover and try again.

You can hold pitching contests in which your employees develop new ideas

and present them in front of management.

An Introduction to Machine Learning Engineering for Production/MLOps — Phases in MLOps

It is common knowledge that data rules the AI world, pretty much. Our models, at

least in the case of supervised learning, are only as good as our data. It is

important, especially when working in a team, to be on the same page with

regards to the data you have. Consider the same handwriting recognition task

that we defined earlier. Suppose you and your team decide to discard poorly

clicked images for the time being. Now, what is a poorly clicked image? It might

be different for your teammate and it might be different for you. In such cases,

it is important to establish a set of rules to define what a poorly clicked

image is. Maybe if you struggle to read more than 5 words on the page, you

decide to discard it. Something of that sort. This is an extremely important

step even in research as having ambiguity in data and labels will only lead to

more confusion for the model. Another important thing to be taken into

consideration is the type of data you are dealing with, i.e., structured or

unstructured. How you work with the data you have largely depends on this

aspect. Unstructured data includes images, audio signals, etc., and you can carry

out data augmentation in these cases to increase the size of your dataset.
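
As a rough illustration of the two points above, the sketch below writes the team's discard rule down as code so that everyone applies the same criterion, and shows one very simple augmentation for image data. The names `PageReview`, `should_discard`, and `shift_right` are hypothetical, the threshold of 5 unreadable words comes from the example in the text, and the pixel shift stands in for whatever augmentation suits your dataset.

```python
from dataclasses import dataclass

# Threshold from the example in the text: discard a page when a reviewer
# struggles to read more than 5 words. Adjust to whatever your team agrees on.
MAX_UNREADABLE_WORDS = 5


@dataclass
class PageReview:
    """One reviewer's assessment of a page image (illustrative structure)."""
    image_id: str
    unreadable_words: int


def should_discard(review: PageReview, max_unreadable: int = MAX_UNREADABLE_WORDS) -> bool:
    """Apply the shared rule instead of each person's own judgement."""
    return review.unreadable_words > max_unreadable


def shift_right(image: list[list[int]], pixels: int, fill: int = 0) -> list[list[int]]:
    """Toy augmentation: shift a grayscale image right, padding with `fill`."""
    if pixels <= 0:
        return [row[:] for row in image]
    return [([fill] * pixels + row)[: len(row)] for row in image]


reviews = [PageReview("page_001", 2), PageReview("page_002", 9)]
kept = [r.image_id for r in reviews if not should_discard(r)]
print(kept)  # only pages that pass the shared rule

tiny_image = [[1, 2, 3], [4, 5, 6]]
print(shift_right(tiny_image, 1))  # a slightly shifted copy for the training set
```

Keeping the rule in one function means a change to the threshold is made once and applies to every teammate's labels.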

Data Scientists and ML Engineers Are Luxury Employees

Apart from the interest in the field, another main reason is a bit more

practical. I have spent so much time and energy learning the necessary topics

(think probability, statistics, calculus, linear algebra, distributed computing,

machine learning, deep learning…) that I want this knowledge to stick. And we

are all humans. Even if you are a genius, if you don’t practice what you learn,

the knowledge goes away. So when your boss asks you (for the tenth time in a

row) to create a piece of software or an analysis that has nothing to do with

machine learning, what is it that you think? Are you happy? Another important

factor is that the field is moving at lightning speed. It was already the case

when I was in software engineering, but now it is not even comparable. Not a day

goes by without hearing about the latest breakthrough, the newest shiny deep

learning architecture, this great new book that every ML practitioner should

read, etc. When you are not practicing ML in your day job, you are left with

practicing it during your free time. It is OK for a little while, but it is not

sustainable in the long run. We are all humans. We need time off to relax and be

with our loved ones. Don’t get me wrong. I love learning new things.

Neo’s Governance Model Projected to Transform Blockchain Space

From an architectural perspective, Neo N3 has also been optimized to deliver a more

streamlined user experience, including switching from a UTXO to a pure account

model, reconfiguring the virtual machine, adding a state root service,

upgrading block synchronization mechanisms, and introducing new data

compression mechanisms. Since the release of the Neo N3 TestNet, performance

is already up by approximately 50 times, and the MainNet is set to launch in

the near future. ... Under POW consensus governance models, arithmetic

power is the right, and all the newly generated revenue is owned by nodes who

maintain a monopoly over arithmetic power. Meanwhile, POS consensus models

primarily distribute tokens to those who hold the most money — thus,

distribution of benefits under both systems is far from equitable. In

addition, POW and POS models require users to pay high processing fees for

transferring transactions and using on-chain applications. As a result,

platforms such as Ethereum and EOS have been plagued by high fees, resulting in

transaction congestion along with gas fees worth hundreds of dollars on Ethereum.

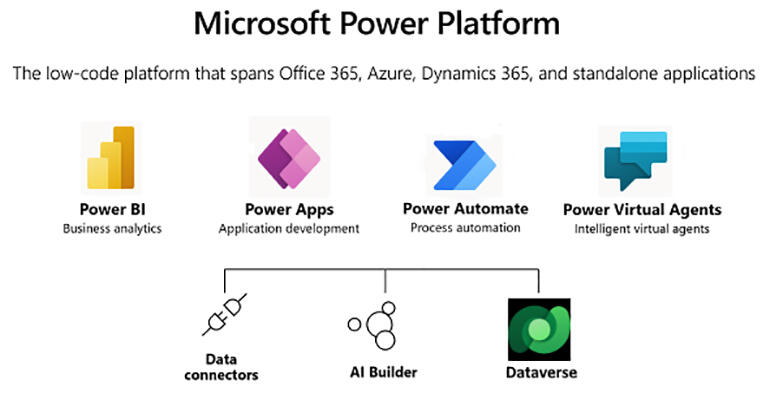

Microsoft Power Platform and low code/no code development: Getting the most out of Fusion Teams

One aspect of the Fusion Teams approach is new tools for professional

developers and IT pros, including integration with both Visual Studio and Visual

Studio Code. At the heart of this side of the Teams development model is the new

Power Fx language, which builds on Excel's formula language and blends in a

SQL-like query language. Power Fx lets you export both Power Apps designs and

formulas as code, ready for use in existing code repositories, so IT teams can

manage Power Platform user interfaces alongside their line-of-business

applications. Microsoft has delivered a new Power Platform command line tool,

which can be used from the Windows Terminal or from the terminals in its

development platforms. The Power Platform CLI can be used to package apps ready

for use, as well as to extract code for testing. One advantage of this approach

is that a user building their own app in Power Apps can pass it over to a

database developer to help with query design. Code can be edited in, say, Visual

Studio Code, before being handed back with a ready-to-use query. Fusion teams

aren't about forcing everyone into using a lowest common denominator set of

tools; they're about building and sharing code in the tools you use the most.

The encouraging acceleration of cloud adoption in financial services

When regulations are constantly evolving, in multiple jurisdictions, a

cloud-based approach to CLM is much more agile and adaptable to emerging

challenges. Using a system that can be updated to always be compliant provides

risk management teams and ultimately the C-suite and board with the confidence

that they are future-proofed against evolving regulation and will avoid hefty

financial penalties from regulators. ... Transformation plans rarely, if ever,

begin and end in any one CIO’s tenure – they are a continual process to move

things forward for the organisation – but the efforts of individual leaders need

to pave the way for the next without tying their hands and forcing them down a

path that may present issues later down the line. ... Whether banks are just

looking to digitise existing processes or to use AI and ML to make more

intelligent decisions and look for fraudulent behavioural patterns, the fact

that more conversations are being had in the financial service world about

cloud, or that these conversations are going somewhere, gives me confidence that

we’re moving in the right direction and there are good days to come.

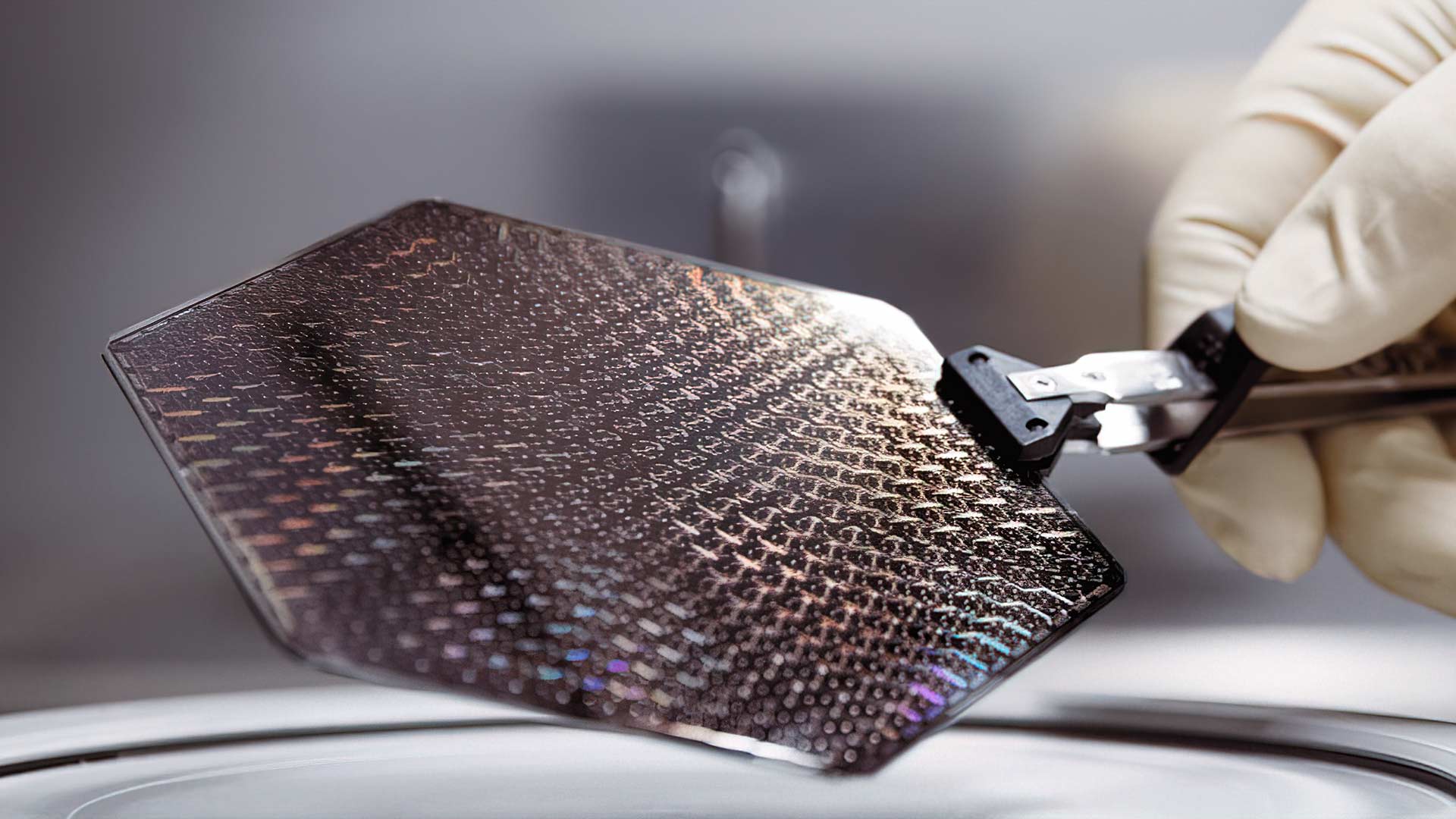

The chip shortage is real, but driven by more than COVID

The problem is that demand is so great that existing production capacity can’t

keep up. Before there was COVID, digital transformation was driving sales.

“There was a pretty large movement in the enterprise towards more digitalization

across different sectors of the markets in different verticals,” said Morales.

“I think the pandemic only accelerated that,” he said. “All of the connected

everythings--smart cities, smart roadway, smart campuses, smart airports, smart,

autonomous everything--I think this [shortage] was going to happen anyway, it

just happened faster,” said Fenn. Another problem facing chip makers is that

demand for processors was across the board, much of it for older technology that

isn’t what the vendors would prefer to sell. Intel, TSMC,

GlobalFoundries, Samsung, and other advanced chip makers are pushing into 7nm

and 5nm designs that smart refrigerators and cars don’t need. They do fine with

40nm or 28nm designs, and no one is investing in more fabs to make more. So the

existing older fabs will continue to run at full capacity for the foreseeable

future, with no room for error and no plans to build more.

Easy Guide to Remote Pair Programming

Solitary programmers who feel comfortable programming alone and are efficient shouldn’t

be forced to pair program. There are so many reasons why one would like to work

alone, and not in a pair. Think about people who are very

introverted, deep experts in a difficult domain, or people who aren’t used to

collaborating with other people. No practice should be forced on anyone, but

rather explained, slowly introduced; we need to know and accept that some people

won’t like it, and won’t use it. Another situation when (remote) pair

programming doesn’t work is when there is a strong push against collaboration in

the whole organization. Management can instill the value that we need to

work individually; everyone needs to be evaluated on their own individual

work, as otherwise evaluation will be very difficult. There can be many

situations where accountancy, evaluation and task-keeping need to be recorded

according to the particular rules of the organization. Pair programming won’t

work in this environment. There are also organizations where there are strong

silos, and you might be able to work in a pair in your own narrow

specialization, but never with other specializations.

Quote for the day:

"Don't be buffaloed by experts and elites. Experts often possess more data than judgement." -- Colin Powell
