Programming Fairness in Algorithms

Machine learning fairness is a young subfield of machine learning that has

been growing in popularity over the last few years in response to the rapid

integration of machine learning into social realms. Computer scientists,

unlike doctors, are not necessarily trained to consider the ethical

implications of their actions. It is only relatively recently (one could argue

since the advent of social media) that the designs or inventions of computer

scientists were able to take on an ethical dimension. This is demonstrated in

the fact that most computer science journals do not require ethical statements

or considerations for submitted manuscripts. If you take an image database

full of millions of images of real people, this can without a doubt have

ethical implications. By virtue of physical distance and the size of the

dataset, computer scientists are so far removed from the data subjects that

the implications for any one individual may be perceived as negligible and thus

disregarded. In contrast, if a sociologist or psychologist performs a test on

a small group of individuals, an entire ethical review board is set up to

review and approve the experiment to ensure it does not transgress any

ethical boundaries.

This Algorithm Doesn't Replace Doctors—It Makes Them Better

Operators of paint shops, warehouses, and call centers have reached the same

conclusion. Rather than replace humans, they employ machines alongside people,

to make them more efficient. The reasons stem not just from sentimentality but

also from the fact that many everyday tasks are too complex for existing technology to handle

alone. With that in mind, the dermatology researchers tested three ways

doctors could get help from an image analysis algorithm that outperformed

humans at diagnosing skin lesions. They trained the system with thousands of

images of seven types of skin lesion labeled by dermatologists, including

malignant melanomas and benign moles. One design for putting that algorithm’s

power into a doctor’s hands showed a list of diagnoses ranked by probability

when the doctor examined a new image of a skin lesion. Another displayed only

a probability that the lesion was malignant, closer to the vision of a system

that might replace a doctor. A third retrieved previously diagnosed images

that the algorithm judged to be similar, to provide the doctor some reference

points.

Redefining Leadership In The Age Of Artificial Intelligence

Intelligent behaviour has long been considered a uniquely human attribute. But

when computer science and IT networks started evolving, artificial

intelligence and the people who stood by it were in the spotlight. AI in today’s

world is both developing and under control. Without a transformation here, AI

will never fully resolve the problems and dilemmas of business with data

and algorithms alone. Wise leaders not only create and capture vital economic

value but also build a more sustainable and legitimate organisation. Leaders

in AI sectors have eyes to see AI decisions and ears to hear employees’

perspectives. A futuristic AI leader plans to work not just for now but also

for the years ahead. A company’s development in AI involves automating

business processes using robotic technologies, gaining insight through data

analysis and enhancement, cost-effective predictions based on algorithms and

engagement with employees through natural language processing chatbots,

intelligent agents and machine learning. Without a far-sighted leader,

bringing all this to reality will be nearly impossible.

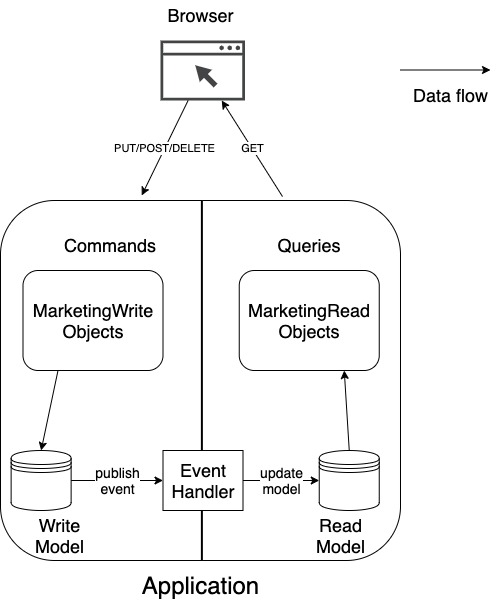

Blockchain: En Route to the Global Supply Chain

In the context of a large-scale shipping operation, for instance, there may be

thousands of containers filled with millions of packages or assets. A

system that can track every asset with full certainty eliminates any

concerns about whether the items are where they are supposed to be, or whether

anything is missing. As blockchain expands, so too will the data it records,

which in turn increases trust. Once this secured digital ledger confirms

that an asset has moved from a warehouse to a lorry on a Thursday afternoon,

more data can then be added. For example, it can show that the asset moved

from a specific shelf in a warehouse on a specific street and was moved by a

specific truck operated by a specific driver. Securing the location data with

full trust provides assurance that things are happening correctly and means

that financial transactions can be made with more confidence. Layering mapping

capabilities and rich location data onto a blockchain record also enables fraud

detection. Without blockchain, one cannot be certain that the delivery updates

provided are in fact accurate. Blockchain makes transactions transparent and

decentralised, making it possible to automatically verify their accuracy

by matching the real location of an item with the location report from a

logistics company.
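
The verification idea described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: it models the ledger as a single in-memory hash-chained list rather than a real decentralised blockchain, and the function names (`append_event`, `chain_is_valid`, `report_matches_ledger`) and event fields (`asset_id`, `location`, `handler`) are hypothetical, not taken from any particular platform.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(body: dict) -> str:
    """Hash a block body deterministically (keys sorted before encoding)."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_event(chain: list, event: dict) -> None:
    """Append a location event, linking it to the hash of the previous block."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    body = {"index": len(chain), "prev_hash": prev_hash, "event": event}
    chain.append({**body, "hash": block_hash(body)})


def chain_is_valid(chain: list) -> bool:
    """Recompute every hash and link; any edited block breaks the chain."""
    prev_hash = GENESIS_HASH
    for i, block in enumerate(chain):
        body = {
            "index": block["index"],
            "prev_hash": block["prev_hash"],
            "event": block["event"],
        }
        if (block["index"] != i
                or block["prev_hash"] != prev_hash
                or block["hash"] != block_hash(body)):
            return False
        prev_hash = block["hash"]
    return True


def report_matches_ledger(chain: list, asset_id: str, reported: str) -> bool:
    """Compare a carrier's reported location with the latest ledger entry."""
    if not chain_is_valid(chain):
        return False
    for block in reversed(chain):
        if block["event"]["asset_id"] == asset_id:
            return block["event"]["location"] == reported
    return False  # asset never recorded


# Hypothetical events: shelf -> lorry, as in the example above.
ledger = []
append_event(ledger, {"asset_id": "PKG-1", "location": "warehouse shelf 12",
                      "handler": "picker"})
append_event(ledger, {"asset_id": "PKG-1", "location": "lorry 7",
                      "handler": "driver A"})
print(report_matches_ledger(ledger, "PKG-1", "lorry 7"))
```

Because each block stores the hash of the one before it, silently changing an old location record invalidates every later block, which is what lets a mismatched delivery update be flagged automatically.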

A closer look at Microsoft Azure Arc

At Ignite, Microsoft provided its answer on how Azure Arc brings cloud control

on premises. The cornerstone of Azure Arc is Azure Resource Manager, the nerve

center that is used for creating, updating, and deleting resources in your

Azure account. That encompasses allocating compute and storage to specific

workloads and then monitoring performance, policy compliance, updates and

patches, security status, and so on. You can also fire up and access Azure

Resource Manager through several paths ranging from the Azure Portal to APIs

or command line interface (CLI). It provides a single pane of glass for

indicating when specific servers are out of compliance; specific VMs are

insecure; or certificates or specific patches are out of date – and it can

then show recommended remedial actions for IT and development teams to take.

While it requires at least some connection to the Azure Public Cloud, it can

run offline when the network drops. Microsoft has built in a lot of flexibility

as to the environments that Azure Arc governs. It can be used for controlling

bare metal environments as well as virtual machines running on any private or

public cloud, SQL Server, or Kubernetes (K8s) clusters.

Why haven’t we ‘solved’ cybersecurity?

Cybersecurity-related incentives are misaligned and often perverse. If you had

a real chance to become a millionaire or even a billionaire by ignoring

security and a much smaller chance if you slowly baked in security, which path

would you choose? We also fail to account for, and sometimes flat out ignore,

the unintended consequences and harmful effects of the innovative technology

and ideas we create. Who would have thought that a 2003 social media app,

built in a dorm room, would later help topple governments and make the creator

one of the richest people in the world? Cybersecurity companies and individual

experts face the difficult challenge of balancing personal gain versus the

greater good. If you develop a new offensive tool or discover a new

vulnerability, should you keep it secret or make a name for yourself through

disclosure? Concerns over liability and competitive advantage inhibit the

sharing of best practices and threat information that could benefit the larger

business ecosystem. Data has become the coin of the realm in the modern age.

Data collection is central to many business models, from mature multi-national

companies to new start-ups. Have a data blind spot?

Top Technologies To Achieve Security And Privacy Of Sensitive Data In AI Models

Differential privacy is a technique for sharing knowledge or analytics about a

dataset by describing the patterns of groups within the dataset and at the same

time withholding sensitive information concerning individuals in the dataset.

The concept behind differential privacy is that if the effect of producing an

arbitrary single change in the database is small enough, the query result

cannot be utilised to infer much about any single person, and hence provides

privacy. Another way to explain differential privacy is that it is a

constraint on the algorithms used to publish aggregate information about a

statistical database, which restricts the exposure of individual information

of database entries. Fundamentally, differential privacy works by adding

enough random noise to data so that there are mathematical guarantees of

individuals’ protection from reidentification. As a result, the

results of data analysis are nearly the same whether or not a particular

individual is included in the data. Facebook has utilised the technique

to protect sensitive data it made available to researchers analysing the

effect of sharing misinformation on elections. Uber employs differential

privacy to detect statistical trends in its user base without exposing

personal information.
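
To make the "adding enough random noise" idea concrete, here is a minimal sketch of the Laplace mechanism applied to a counting query. It is an illustration, not how Facebook or Uber implement it: the dataset, the `private_count` helper and the choice of `epsilon` are all assumptions made for the example. A count changes by at most 1 when one person is added or removed, so noise drawn from a Laplace distribution with scale `1 / epsilon` is the standard calibration for this kind of query.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the scaled difference of two Exp(1) draws."""
    return scale * (rng.expovariate(1.0) - rng.expovariate(1.0))


def private_count(records, predicate, epsilon: float, rng: random.Random) -> float:
    """Return a noisy count of the records that satisfy `predicate`.

    One individual changes the true count by at most 1 (sensitivity 1),
    so the noise scale is 1 / epsilon. Smaller epsilon means more noise.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for record in records if predicate(record))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical toy dataset: user ages.
ages = [23, 35, 41, 29, 52, 38, 47, 31]
rng = random.Random(0)  # seeded only so the sketch is repeatable

# The same query on the full data and on the data with one person removed.
with_person = private_count(ages, lambda a: a >= 40, epsilon=0.5, rng=rng)
without_person = private_count(ages[:-2] + ages[-1:], lambda a: a >= 40,
                               epsilon=0.5, rng=rng)
print(with_person, without_person)
```

The two printed values differ mostly because of the noise, so an observer cannot reliably tell from a single answer whether the removed person was in the data. A production system would also track the total privacy budget spent across queries, which this sketch leaves out.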

Getting Started with Mesh Shaders in Diligent Engine

Originally, hardware was only capable of performing a fixed set of operations

on input vertices. An application was only able to set different

transformation matrices (such as world, camera, projection, etc.) and instruct

the hardware how to transform input vertices with these matrices. This was

very limiting in what an application could do with vertices, so to generalize

the stage, vertex shaders were introduced. Vertex shaders were a huge

improvement over the fixed-function vertex transform stage because developers

were now free to implement any vertex processing algorithm. There was, however, a big

limitation - a vertex shader takes exactly one vertex as input and produces

exactly one vertex as output. Implementing more complex algorithms that would

require processing entire primitives or generating them entirely on the GPU was

not possible. This is why geometry shaders were introduced as an

optional stage after the vertex shader. A geometry shader takes the whole

primitive as an input and may output zero, one or more primitives.
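
The difference between the two stages can be modelled on the CPU with a short Python sketch. This is a conceptual illustration only, not shader code and not the Diligent Engine API: `run_vertex_stage`, `run_geometry_stage`, `translate_x` and `split_or_cull` are hypothetical names chosen for the example.

```python
from typing import Callable, List, Tuple

Vertex = Tuple[float, float, float]
Triangle = Tuple[Vertex, Vertex, Vertex]


def run_vertex_stage(vertices: List[Vertex],
                     shader: Callable[[Vertex], Vertex]) -> List[Vertex]:
    """Vertex stage: exactly one output vertex per input vertex."""
    return [shader(v) for v in vertices]


def run_geometry_stage(primitives: List[Triangle],
                       shader: Callable[[Triangle], List[Triangle]]) -> List[Triangle]:
    """Geometry stage: each input primitive yields zero, one or more primitives."""
    output: List[Triangle] = []
    for primitive in primitives:
        output.extend(shader(primitive))
    return output


def translate_x(v: Vertex) -> Vertex:
    """Hypothetical vertex shader: shift one vertex along the x axis."""
    x, y, z = v
    return (x + 1.0, y, z)


def split_or_cull(tri: Triangle) -> List[Triangle]:
    """Hypothetical geometry shader: drop flat triangles, split the rest in three."""
    a, b, c = tri
    # Twice the signed area of the triangle projected onto the xy plane.
    area2 = (b[0] - a[0]) * (c[1] - a[1]) - (c[0] - a[0]) * (b[1] - a[1])
    if area2 == 0.0:
        return []  # zero primitives out
    centroid = tuple((a[i] + b[i] + c[i]) / 3.0 for i in range(3))
    return [(a, b, centroid), (b, c, centroid), (c, a, centroid)]


triangle = ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))
flat = ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0))
print(len(run_vertex_stage(list(triangle), translate_x)))
print(len(run_geometry_stage([triangle, flat], split_or_cull)))
```

The vertex stage can never change the number of vertices, while the geometry stage sees all three vertices of a triangle at once and decides how many triangles to emit.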

Need for data management frameworks opens channel opportunities

Today's huge influx of data is resulting in multiple inefficiencies, according

to Mike Sprunger, senior manager of cloud and network security at Insight

Enterprises, a global technology solution provider. He cited the example of an

employee who generates a spreadsheet and shares it with half a dozen

co-workers, who then send the spreadsheet to half a dozen others. The 1 MB

file morphs into 36 MB, and when that information is backed up, data volumes

double again. As cloud and flash technologies lowered storage pricing

dramatically, many companies simply added more storage capacity as data

demands grew. While companies stored more, they purged less. Furthermore,

industry and government rules and guidelines for maintaining data have been

evolving. So it can be unclear how to meet regulatory requirements, Sprunger

noted, and decide what data can go and what must be kept. Compounding the

challenge, communication between IT and business units is often mediocre or

nonexistent. So neither group understands the business requirements or the

technical possibilities of deleting outdated data, he added.
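
The arithmetic behind the spreadsheet example earlier in this section can be checked with a short calculation. The sketch assumes that "half a dozen" means exactly six, that every recipient keeps a separate copy, and that a backup stores one extra copy of everything. The helper name `copies_per_hop` is hypothetical.

```python
def copies_per_hop(fan_out: int, hops: int) -> list:
    """Number of new copies created at each forwarding hop."""
    return [fan_out ** hop for hop in range(1, hops + 1)]


file_mb = 1
per_hop = copies_per_hop(fan_out=6, hops=2)    # [6, 36]

second_hop_mb = per_hop[-1] * file_mb          # the 36 MB quoted in the article
all_copies_mb = (1 + sum(per_hop)) * file_mb   # original + 6 + 36 copies
backed_up_mb = 2 * second_hop_mb               # volume doubles once backed up

print(per_hop, second_hop_mb, all_copies_mb, backed_up_mb)
```

Under these assumptions the quoted 36 MB corresponds to the second round of forwarding alone. Counting the original and the first six copies as well would give 43 MB before any backup.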

RASP 101: Staying Safe With Runtime Application Self-Protection

Feiman says solutions like RASP and WAF have emerged from "desperation" to

protect application data but are insufficient. The market needs a technology

that is focused on detection rather than prevention. Indeed, in an effort to

address the problems with RASP, he and his team at WhiteHat are in the process

of beta testing an application security technology that performs app testing

without instrumentation. As far as existing RASP technologies go, it's

unlikely they'll stick around in their current form. Rather than an

independent technology, Feiman believes RASP will ultimately get absorbed into

application runtime platforms like the Amazon AWS and Microsoft Azure cloud

platforms. This could happen through a combination of acquisitions and

companies like AWS building their own lightweight RASP capabilities into their

technologies. "The idea will stay, the market hardly will," says

Feiman. On that, Sqreen's Aviat disagrees, saying RASP is "indeed a

standalone technology." "I expect RASP to become a crucial element of any

application security strategy, just like WAF or SCA is today – in fact, RASP

is already referenced by NIST as critical to lowering your application

security risk," he said.

Quote for the day:

"The leader has to be practical and a realist, yet must talk the language of the visionary and the idealist." -- Eric Hoffer