State of enterprise machine learning in 2020: 7 key findings

"Machine learning has the ability to, in a lot of cases, reduce errors, which can help a company make more money and save money," Oppenheimer said. "Like in jobs where there's a lot of data entry or processing, where there might be a lot of humans involved, where it's error prone and it's slightly slow, machine learning can automate a lot of that and make it more precise. It liberates those humans who are doing basic data entry to do higher level tasks, which humans are better suited for." While medium to large companies, in particular, are primarily focused on cutting costs, small companies are more interested in improving the customer experience, the report found. Smaller companies are trying to retain customers and have steady business--a problem that larger companies may not have. When thinking about how to use machine learning, optimization is a huge use case, Oppenheimer said. ... Machine learning projects will still be in early stages at organizations in 2020: 21% of businesses said they would be evaluating use cases, and 20% identified themselves as early-stage adopters in machine learning production, the report found.

Experiences from Mob Programming at an Insurance Startup

Mob programming brings the team lots of feedback. Victoor said that being together the whole time helps a lot when making technical decisions. It also gives them a lot of courage to tackle complex issues and tough refactorings. Rouve mentioned that the main benefit of mob programming is continuous sharing and learning; plus, mob programming forces the team to be aligned on best practices and coding standards. "It daily improves our work by communicating more efficiently," he said. ... During a mob session, all ideas are discussed. This is really great for problem-solving. When you are alone and you need to solve a problem, you are biased. If you are a senior developer, you may think of a solution that you have applied in the past to a similar problem. This solution may not be the simplest or the most efficient for the current problem. When mobbing, everyone can speak up and share ideas and concerns. This is a great way to build simple designs that are shared by every single member of the team.

Cisco targets hyperscalers with silicon, high-end routers

“Moore’s law is stalling,” wrote Jonathan Davidson, senior vice president and general manager of Cisco Service Provider Networking, in a blog about Silicon One. “While the rest of the industry slows down from the physics of traditional approaches, we have unlocked new dimensions of innovation. By rethinking silicon design entirely, we can deliver industry-leading performance today and create a ‘fast lane’ to the future. In the past, multiple types of silicon have been used across a network and even within a single device. Feature development was inconsistent. Telemetry varied dramatically. Operators had to spend too much time and effort coordinating and testing parity of new features across the network. Now, a single silicon architecture can serve different market segments, different functions, and various form factors for a unified experience that dramatically reduces costs of operations and time-to-value for new services.” Another component of Silicon One is that it will be available for white-box vendors or hyperscalers developing their own networking systems – one of the few times Cisco has been a merchant silicon vendor in its own right. Its chip technology is typically used just in its own equipment.

Must Buy Smart Travel Gadgets for 2020

Monoprice is known for quality generic-brand tech products like USB cables, wall mounts, adapters, and power banks at a cheaper price point. If you are looking to add a power bank to your next travel packing list, then look out for the Monoprice holiday deals. One of the specials they have is their own-brand Select Series power banks. They are currently offering 15% off the 10,000mAh, 20,000mAh, and 27,200mAh models. When you travel with one, you are unlikely to run out of power, since you can fully charge your iPhone or Android phone three times before the power bank runs out. ... A portable hard drive can be a traveler’s best friend, especially for gamers and photographers. Western Digital has everything you need for your next trip. Although cloud storage is great and useful, don’t forget that Internet connectivity is not available everywhere in the world. You don’t want to stop taking pictures because your digital camera is running out of space. Also, it is always good to back up your pictures and other digital assets to both cloud storage and an external hard drive.

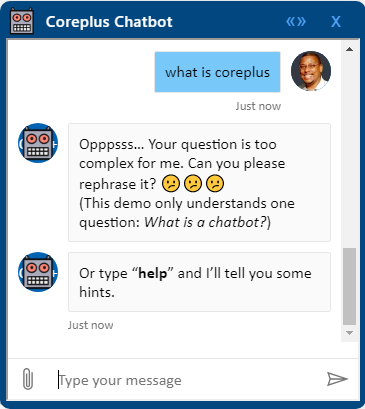

Security 101: What Is a Man-in-the-Middle Attack?

MitM attacks are attempts to "intercept" electronic communications – to snoop on transmissions in an attack on confidentiality or to alter them in an attack on integrity. "At its core, digital communication isn't all that much different from passing notes in a classroom – only there are a lot of notes," explains Brian Vecci, field CTO at Varonis. "Users communicate with servers and other users by passing these notes. A man-in-the-middle attack involves an adversary sitting between the sender and receiver and using the notes and communication to perform a cyberattack." ... "People think they are accessing a legitimate hotspot," he says, "but, in fact, they are connecting to a device that allows the hacker to log all their keystrokes and steal logins, passwords, and credit card numbers." Another popular MitM tactic is a fraudulent browser plugin installed by a user, thinking it will offer shopping discounts and coupons, Guruswamy says. "The plugin then proceeds to watch over user's browsing traffic, stealing sensitive information like passwords [and] bank accounts, and surreptitiously sends them out-of-band," he says.

For IT pros, adding blockchain skills can pad your paycheck – by a lot

Understanding how blockchain integrates with artificial intelligence, machine learning, robotics, and IoT is seen largely as a plus for technologists at the moment. But it will be a requirement in the future as these other technologies mature and adoption rates increase. Salaries for blockchain developer or "engineer" positions are high, with median salaries in the U.S. hovering around $130,000 a year; that compares to general software developers, whose annual median pay is $105,000, according to Matt Sigelman, CEO of job data analytics firm Burning Glass Technologies. People with experience with specific blockchain iterations such as Solidity and Hyperledger Composer are in even higher demand – and that demand is increasing steadily, said Eric Piscini, a principal in the technology and banking practices at Deloitte Consulting LLP. Universities are some of the best places to learn blockchain skills, though there are online courses available from vendors as well. According to a new Gartner research note, 75% of IoT technology adopters in the U.S. have already adopted blockchain or are planning to adopt it by the end of 2020.

VISA Warns of Ongoing Cyber Attacks on Gas Pump PoS Systems

PFD says that in the first incident it identified, unknown attackers were able to compromise their target using a phishing email that allowed them to infect one of the systems on the network with a Remote Access Trojan (RAT). This provided them with direct network access, making it possible to obtain credentials with enough permissions to move laterally throughout the network and compromise the company's POS system as "there was also a lack of network segmentation between the Cardholder Data Environment (CDE) and corporate network." The last stage of the attack saw the actors deploying a RAM scraper that helped them collect and exfiltrate customer payment card data. During the second and third incidents, PFD states that the threat actors used malicious tools and TTPs attributable to the financially-motivated FIN8 cybercrime group.

Implement CI/CD for Multibranch Pipeline in Jenkins

Jenkins is a continuous integration server that can fetch the latest code from the version control system (VCS), build it, test it, and notify developers. Jenkins can do many things apart from just being a Continuous Integration (CI) server. Originally known as Hudson, Jenkins is an open-source project written by Kohsuke Kawaguchi. As Jenkins is a Java-based project, you first need to install Java 8 before installing and running Jenkins on your machine. The Multibranch Pipeline allows you to automatically create a pipeline for each branch in your Source Code Management (SCM) repository with the help of a Jenkinsfile. A Jenkinsfile is a text file that defines a Jenkins pipeline: it lets you implement pipeline as code, using a domain-specific language (DSL) to write the steps needed to run the pipeline. The Multibranch Pipeline project type enables you to use a different Jenkinsfile for each branch of the same project.
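To make the idea of pipeline as code concrete, here is a minimal sketch of a declarative Jenkinsfile that a Multibranch Pipeline project could pick up from a branch. The stage names, the `echo` placeholders, and the branch name `main` are assumptions made for illustration; they do not come from the article, and a real project would replace the placeholders with its own build and test commands.

```groovy
// Jenkinsfile (declarative syntax), committed at the root of each branch.
// Illustrative sketch only: stage names and echo steps are placeholders.
pipeline {
    // Run on any available Jenkins agent.
    agent any

    stages {
        stage('Build') {
            steps {
                // BRANCH_NAME is set by Jenkins for Multibranch Pipeline builds.
                echo "Building branch ${env.BRANCH_NAME}"
            }
        }
        stage('Test') {
            steps {
                echo 'Running tests (placeholder for the real test command)'
            }
        }
        stage('Deploy') {
            // Only run this stage on the branch assumed to be the release branch.
            when { branch 'main' }
            steps {
                echo 'Deploying (placeholder for the real deploy step)'
            }
        }
    }
}
```

Because each branch carries its own copy of this file, a feature branch can add or change stages without affecting the pipeline that runs for other branches.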

What soft skills are most needed in IT? Toronto Women in IT winners share

Technology is getting to be the easy part, with so many off-the-shelf systems that can be sold to any business leader. The talent that the IT leader brings to the table is ensuring they use discipline to not jump to a solution until the problem is fully understood, articulated, and agreed upon. It is only when everyone collaborates and then agrees on exactly what problem they are trying to solve, that a technical solution can truly be sought. ... Humility and adaptability are also important soft skills for anyone working in IT. You must be willing to admit mistakes and learn from what went wrong in order to drive the best product forward. Being too focused on perfection prevents you from doing that. You also need to be adaptable to be able to respond to changes quickly and implement feedback at all stages in the development process. You can cultivate new soft skills over the course of your career if you commit to being a life-long learner and exploring new ways of getting things done. Move beyond what is known and familiar to you in order to stretch your thinking and add to your repertoire of soft skills.

Supreme Court to Have Final Say in Oracle v. Google Java API Battle

Google holds fast that APIs are not copyrightable and the reuse of software interfaces is necessary to make systems interoperable. The issue is whether copyright law prohibits reimplementing (i.e., reusing) the software interfaces that are necessary to connect dozens of platforms to millions of applications on billions of devices. Without interfaces, your contact list cannot access your email program, which cannot send a message using the operating system, which cannot access your phone in the first place. Each is an island. Countless other examples abound. The information age depends on the reuse of interfaces. In 2018, an appeals court ruled in favor of Oracle and overturned previous rulings that favored Google. Dissatisfied with the lower court’s decision, Google petitioned the Supreme Court to hear its case. Previously, the Supreme Court had refused to hear Google’s petition but finally granted it on November 15, 2019. Given that Google filed the petition, the case is now dubbed "Google v. Oracle" instead of "Oracle v. Google".

Quote for the day:

"Great leaders go forward without stopping, remain firm without tiring and remain enthusiastic while growing." -- Reed Markham