More than two-thirds of the open Redis servers contained malicious keys and three-quarters of the servers contained malicious values, suggesting that the servers are infected. Also, according to Imperva's honeypot data, the infected servers with “backup” keys were attacked from a medium-sized botnet (610 IPs) located mostly in China (86 percent of IPs). Researchers said that the firm's customers were attacked more than 75k times, by 295 IPs that run publicly available Redis servers. The attacks included SQL injection, cross-site scripting, malicious file uploads and remote code execution. Researchers said the numbers suggest that attackers are harnessing vulnerable Redis servers to mount further attacks on their behalf. Nadav Avital, security research team leader at Imperva, said that the reason why 75 percent of open Redis servers are infected with malware was most likely because they are directly exposed to the internet. “However, this is highly unrecommended and creates huge security risks. To help protect Redis servers from falling victim to these infections, they should never be connected to the internet and, because Redis does not use encryption and stores data in plain text, no sensitive data should ever be stored on the servers,” said Avital.
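The advice above about not exposing Redis to the internet can be partly checked from a server's configuration file. What follows is a minimal sketch, not Imperva's method: the function name `audit_redis_conf` is hypothetical, and it only looks at three real `redis.conf` directives (`bind`, `protected-mode`, `requirepass`). It assumes the documented defaults that Redis listens on all interfaces when no `bind` line is present and that `protected-mode` is on unless disabled. It does not inspect firewalls or ACLs, so a clean result is not proof that a server is safe.

```python
from __future__ import annotations


def audit_redis_conf(conf_text: str) -> list[str]:
    """Return warnings for settings that may leave Redis openly reachable.

    Heuristic only: checks bind, protected-mode and requirepass.
    """
    settings: dict[str, str] = {}
    for raw in conf_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        parts = line.split(None, 1)
        key = parts[0].lower()
        value = parts[1].strip() if len(parts) > 1 else ""
        settings[key] = value  # a later directive overrides an earlier one

    warnings = []

    # Newer Redis versions allow a "-" prefix on bind addresses; strip it.
    addresses = [a.lstrip("-") for a in settings.get("bind", "").split()]
    if not addresses or any(a in ("0.0.0.0", "*", "::") for a in addresses):
        warnings.append("bind: listening on all network interfaces")

    if settings.get("protected-mode", "yes").lower() == "no":
        warnings.append("protected-mode: disabled")

    if not settings.get("requirepass"):
        warnings.append("requirepass: no password set")

    return warnings


if __name__ == "__main__":
    sample = "bind 0.0.0.0\nprotected-mode no\n"
    for warning in audit_redis_conf(sample):
        print(warning)
```

Running the check against a configuration file before deployment is cheap, but network-level isolation remains the stronger control.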

Artificial intelligence will improve medical treatments

The potential benefits are great. As Tom Devlin, a stroke neurologist at Erlanger, observes, “We know we lose 2m brain cells every minute the clot is there.” Yet the two therapies that can transform outcomes—clot-busting drugs and an operation called a thrombectomy—are rarely used because, by the time a stroke is diagnosed and a surgical team assembled, too much of a patient’s brain has died. Viz.ai’s technology should improve outcomes by identifying urgent cases, alerting on-call specialists and sending them the scans directly. Another area ripe for AI’s assistance is oncology. In February 2017 Andre Esteva of Stanford University and his colleagues used a set of almost 130,000 images to train some artificial-intelligence software to classify skin lesions. So trained, and tested against the opinions of 21 qualified dermatologists, the software could identify both the most common type of skin cancer, and the deadliest type (malignant melanoma), as successfully as the professionals. That was impressive. But now, as described last month in a paper in the Annals of Oncology, there is an AI skin-cancer-detection system that can do better than most dermatologists. Holger Haenssle of the University of Heidelberg, in Germany, pitted an AI system against 58 dermatologists.

In Transforming Their Companies, CIOs Are Changing, Too

The goal of IT isn’t merely to speed operations and introduce new and shinier ways to do things; it’s also to produce qualitative improvements. When an organization generates greater value for customers, everyone wins. For example, at Alaska Airlines, which operates 1,200 daily flights and accommodates 44 million passengers, the focus is on delivering a consistent experience to passengers, employees, and others. Every technology, process, and service touches this concept, which boosts the odds that the airline delivers a “unique brand experience at every touch point, digital and otherwise,” explained Charu Jain, the airline’s vice president and CIO. She constantly works to align the business plan with the technology, she said. Jain accomplishes this by focusing on a few key areas: identifying strategy and priorities; establishing clearly defined metrics; tapping analytics for constant feedback; ensuring that groups and teams are in lockstep with one another; and taking calculated risks, failing fast, and moving forward. Her goal, she said, is to encourage ownership and accountability across the organization. She reinforces progress by “celebrating the small wins accomplished through an innovative spirit.”

Measuring DevOps Success

Speed without quality is of little value to the organization, so the next set of DevOps metrics are the failure rate (the percentage of releases that have problems) and the number of tickets (how many issues a release has). Each organization or team needs to find its appropriate balance between speed and quality. The initial focus on quality numbers should be relative trends, not absolute values, to make sure that the teams are progressing in the right direction. Drilling down into failure causes should identify which steps of the process, such as code review or test coverage, need attention. An important detail from bug reports and trouble tickets is whether they are internal or user-reported. Mature DevOps teams have failure rates less than 10 percent, according to the 2017 Puppet Report, and have an increasing percentage of issues captured by monitoring tools before being reported by users. The metrics that underperforming DevOps organizations often miss are those related to a customer’s experience with the application, their usage (or not) of new features, and the resulting impact to the business. These measurements are outside the realm of traditional development and QA tools, and mean changing mindsets and adopting new tools.
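The quality metrics described above can be sketched in a few lines of Python. This is an illustration under assumptions, not a standard tool: the `Release` record and its field names are hypothetical, and "a release that has problems" is modeled as a simple boolean flag that each team would define for itself.

```python
from dataclasses import dataclass


@dataclass
class Release:
    name: str
    failed: bool            # assumption: the team flags problem releases
    internal_tickets: int   # issues caught internally or by monitoring
    user_tickets: int       # issues reported by users


def failure_rate(releases):
    """Percentage of releases that had problems."""
    if not releases:
        return 0.0
    failed = sum(1 for r in releases if r.failed)
    return 100.0 * failed / len(releases)


def internal_share(releases):
    """Percentage of all tickets that were caught before users reported them."""
    internal = sum(r.internal_tickets for r in releases)
    total = internal + sum(r.user_tickets for r in releases)
    if total == 0:
        return 0.0
    return 100.0 * internal / total


if __name__ == "__main__":
    history = [
        Release("1.0", failed=False, internal_tickets=1, user_tickets=0),
        Release("1.1", failed=True, internal_tickets=2, user_tickets=2),
        Release("1.2", failed=False, internal_tickets=0, user_tickets=0),
        Release("1.3", failed=False, internal_tickets=3, user_tickets=0),
    ]
    print(f"failure rate: {failure_rate(history):.1f}%")
    print(f"caught internally: {internal_share(history):.1f}%")
```

Tracking both numbers per sprint or per month gives the relative trend the paragraph recommends, rather than a single absolute value.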

Honda Gets Ready For The 4th Industrial Revolution

With the tremendous amount of data that’s created from a wide variety of sources including sensors on cars, customer surveys, smartphones and social media, Honda’s research and development team uses data analytics tools to comb through data sets in order to gain insights it can incorporate into future auto designs. As the company’s big data maturity has increased, its engineers are learning to work with and leverage data that had previously been too cumbersome to find meaning in, thanks to the assistance of big data technology and analytics tools. There are more than 100 Honda R&D engineers who are now trained in big data analytics. Thanks to the sensors on Honda vehicles and feedback from customers, the team is able to make adjustments to the design of its fleet for things they would have never realized were an issue without the data insight. The analytics tools help Honda “explore big data and ultimately design better, smarter, safer automobiles,” said Kyoka Nakagawa, chief engineer TAC, Honda R&D.

AI at Google: our principles

We recognize that such powerful technology raises equally powerful questions about its use. How AI is developed and used will have a significant impact on society for many years to come. As a leader in AI, we feel a deep responsibility to get this right. So today, we’re announcing seven principles to guide our work going forward. These are not theoretical concepts; they are concrete standards that will actively govern our research and product development and will impact our business decisions. We acknowledge that this area is dynamic and evolving, and we will approach our work with humility, a commitment to internal and external engagement, and a willingness to adapt our approach as we learn over time. ... While this is how we’re choosing to approach AI, we understand there is room for many voices in this conversation. As AI technologies progress, we’ll work with a range of stakeholders to promote thoughtful leadership in this area, drawing on scientifically rigorous and multidisciplinary approaches. And we will continue to share what we’ve learned to improve AI technologies and practices.

What you need to know about the EU Google antitrust case

There's no deadline for the Commission to complete its investigation, but indications from Brussels are that it will publish a decision in the Android case before August 2018. In the Google Android case, the Commission could theoretically fine it up to $11 billion, or 10 percent of parent company Alphabet's $110 billion worldwide revenue in 2017 -- but recent antitrust fines have come nowhere near that level. There's a separate investigation ongoing into the company's AdSense online advertising service, looking at the restrictions it places on the ability of third-party websites to display search ads from its competitors. That could expose the company to a similar-size fine. And, of course, the Commission has already hit Google with one antitrust fine, for abusing the dominance of its search engine to promote its own comparison shopping services. That cost it €2.42 billion ($2.7 billion) in June 2017, around 3 percent of its prior-year revenue. Other recent fines for abuse of a dominant market position are in the same ballpark. In January 2018 the Commission fined Qualcomm €997 million ($1.2 billion), or just under 5 percent of annual revenue, while Intel's €1.06 billion ($1.3 billion) fine back in June 2014 represented about 3.8 percent of revenue.
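The fine arithmetic above is simple enough to sketch. The snippet below is illustrative only: the function name `fine_at_percent` is hypothetical, the revenue figure is the $110 billion quoted in the text, and the 3 percent case is a what-if based on the level of the earlier fines, not a prediction.

```python
def fine_at_percent(revenue_billion, percent):
    """Fine in billions for a given percentage of worldwide revenue."""
    return revenue_billion * percent / 100


if __name__ == "__main__":
    alphabet_2017_revenue = 110  # USD billions, figure quoted in the text

    # Theoretical ceiling: 10 percent of worldwide revenue.
    print(f"ceiling: ${fine_at_percent(alphabet_2017_revenue, 10):.1f}bn")

    # What-if: a fine at roughly the 3 percent level of earlier cases.
    print(f"at 3 percent: ${fine_at_percent(alphabet_2017_revenue, 3):.1f}bn")
```

The gap between the two results shows how far recent fines have stayed below the theoretical maximum.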

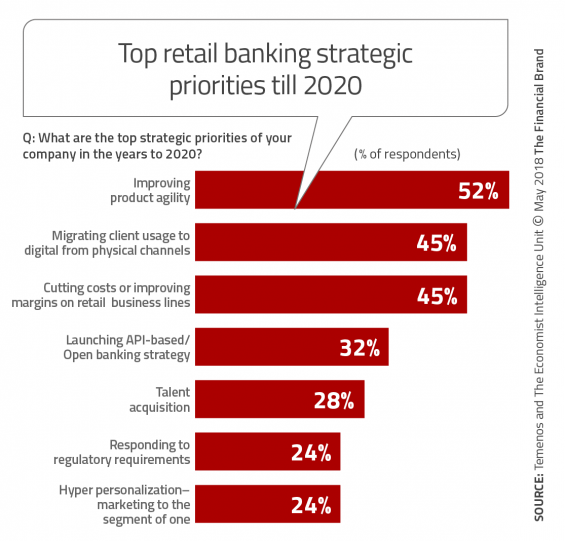

True Digital Banking Solution For Connected Customers

“The banking sector is undergoing a transformation driven by the change in people’s communication habits. The Internet availability created the need for a completely new approach in communicating with clients and in ways to satisfy the expectations of today’s “connected” client. What we want to achieve with our solutions is straightforward communication and convenient banking which is exactly what Halkbank is delivering to its clients. With the Omni-channel platform in place, Halkbank is more adaptable to change and much quicker in delivering product to the market, always ready to answer to high demands of a modern banking customer,” stated Mr. Milan Pištalo, Account Executive at NF Innova. “The process of modernization and continuous enhancements of the banking services is constantly present, being a must for the competitive advantage in acquiring new, satisfied clients since both banking and finance sector, on a global level, are particularly dynamic.”

People know what to expect from a company, and they precisely know how valuable they are for the company.

DevOps shops weigh risks of Microsoft-GitHub acquisition

Many developers at Mitchell International have wanted to use GitHub instead of TFS for a long time, Fong said, but whether that enthusiasm persists is unclear. "Microsoft has said it won't disrupt GitHub, but history has shown some influence has to be there," he said. "If it will be a feature of TFS and Visual Studio, some changes will be needed." Dolby Laboratories is accustomed to dealing with Microsoft licensing, but that familiarity has bred contempt, said Thomas Wong, senior director of enterprise applications at the sound system products company in San Francisco. Even if Microsoft doesn't change GitHub's prices or license agreements, "GitHub could become one conversation in an hour versus the whole conversation" in meetings with the vendor's sales reps, Wong said. GitHub already did a fine job of connecting with the broader ecosystem of DevOps tools such as Jenkins for CI/CD, AWS CodeBuild and CodeDeploy automated provisioning, and Atlassian's Jira issue tracker. "That ecosystem is not something I need Microsoft to build for me," Wong said. A large part of GitHub's appeal was its integration with other popular tools, which may now be at risk under Microsoft's ownership, Mitchell International's Fong said.

Network-intelligence platforms, cloud fuel a run on faster Ethernet

Of particular interest to most observers is the growing migration to 100G Ethernet. “There was on the order of about 1 million 100G Ethernet ports shipped in 2016, this year we expect somewhere near 12 million to ship,” said Boujelbene. “Hyperscalers certainly drove the market early-on but large enterprises are increasingly looking at that technology for the increased speed, price/performance it brings.” Cisco agreed with that observation. “The requirement for more high-speed ports and more data being driven from the dense edges of the network is driving the upgrade of the backbone,” said Roland Acra, senior vice president and General Manager of Cisco’s Data Center Business Group. “We see the need especially from financial and trade floor customers who need the bandwidth and speed.” While 100G is ramping up so is another level of Ethernet speed – the 25G segment, which saw revenue increase 176 percent year-over-year with port shipments growing 359 percent year over year in 1Q18, according to IDC. The push to 25G is largely due to top-of-rack requirements in dense data-center server access ports.

Quote for the day:

"Uncertainty is a permanent part of the leadership landscape. It never goes away." -- Andy Stanley