Quote for the day:

"No person can be a great leader unless he takes genuine joy in the successes of those under him." -- W. A. Nance

The agent security mess

The article "The Agent Security Mess" by Matt Asay highlights a critical vulnerability in enterprise security: the "persistent weak layer" of over-provisioned permissions. Historically, this risk stayed dormant because humans typically leave 96% of their granted access rights unused. The rise of AI agents changes this dynamic entirely. Unlike humans, who act as a natural governor on permission sprawl, autonomous agents inherit the full permission surface of the accounts they use. This turns latent permission debt into immediate operational risk, as agents can rapidly execute broad, potentially destructive actions across various systems without the hesitation or distraction characteristic of human users. To address this looming "avalanche," Asay argues for a shift in software architecture. Instead of allowing agents to inherit broad employee accounts, organizations must implement purpose-built identities with aggressively minimal, read-only permissions by default. This involves decoupling the ability to draft actions from the ability to execute them and ensuring every automated action is logged and reversible. Ultimately, AI agents are not creating a new crisis but are exposing a long-ignored authorization problem, forcing the industry to finally prioritize robust identity security and governance.
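
The separation of drafting from executing described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Asay's design: the names `AgentIdentity`, `DraftAction`, and `Executor`, and the `"read"` / `"execute"` scope strings, are hypothetical and do not come from the article.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class AgentIdentity:
    """Purpose-built identity; read-only unless a scope is granted explicitly."""
    name: str
    scopes: frozenset = frozenset({"read"})


@dataclass
class DraftAction:
    """A proposed change. Creating a draft has no side effects."""
    description: str
    apply: Callable[[], None]
    undo: Callable[[], None]


@dataclass
class Executor:
    """Runs drafts only for identities holding the hypothetical 'execute' scope."""
    audit_log: list = field(default_factory=list)
    applied: list = field(default_factory=list)

    def _log(self, identity_name, event, description):
        # Every attempt is recorded, including denied ones.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "identity": identity_name,
            "event": event,
            "action": description,
        })

    def execute(self, identity, draft):
        if "execute" not in identity.scopes:
            self._log(identity.name, "denied", draft.description)
            return False
        draft.apply()
        self.applied.append(draft)
        self._log(identity.name, "executed", draft.description)
        return True

    def rollback_last(self, identity):
        # Reversibility: undo the most recently applied draft.
        if "execute" not in identity.scopes or not self.applied:
            return False
        draft = self.applied.pop()
        draft.undo()
        self._log(identity.name, "rolled_back", draft.description)
        return True
```

An agent created as `AgentIdentity("triage-agent")` can build drafts but `Executor.execute` refuses to run them; only an identity that was explicitly given the `"execute"` scope can apply or roll back a change, and both outcomes land in `audit_log`.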

Faster attacks and ‘recovery denial’ ransomware reshape threat landscape

The CSO Online article, based on Mandiant’s M-Trends 2026 report, highlights a dramatic shift in the cybersecurity landscape where ransomware attacks are becoming both faster and more strategically focused on "recovery denial." A striking finding is the collapse of the "hand-off" window between initial access and secondary threat group activity, which plummeted from over eight hours in 2022 to a mere 22 seconds in 2025. This acceleration is coupled with a transition in tactics; voice phishing has overtaken email phishing as a primary infection vector, signaling a move toward real-time, interactive social engineering. Furthermore, attackers are increasingly targeting core infrastructure, such as backup environments, identity systems, and virtualization platforms, to systematically dismantle an organization’s ability to restore operations without paying a ransom. Despite these rapid execution phases, median dwell times have paradoxically risen to 14 days, as nation-state actors prioritize long-term persistence alongside financially motivated groups seeking immediate impact. These evolving threats necessitate a fundamental rethink of defense strategies, urging organizations to treat their recovery assets as critical control planes that require the same level of protection as the primary network itself to ensure true resilience.

Attackers are handing off access in 22 seconds, Mandiant finds

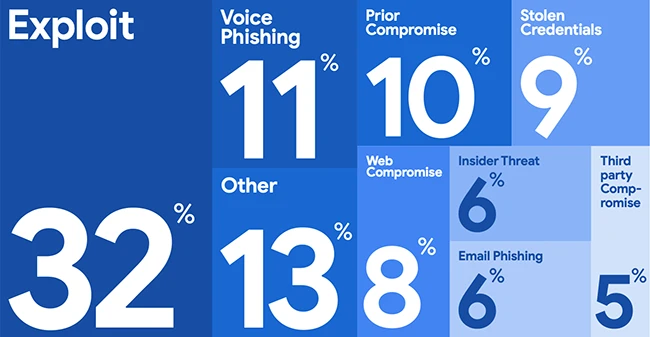

The Mandiant M-Trends 2026 report, based on over 500,000 hours of incident response data from 2025, highlights a dramatic acceleration in attacker efficiency and a significant shift in tactical focus. For the sixth consecutive year, exploits remained the primary infection vector, yet the most striking finding is the collapse of the "access hand-off" window; the median time between initial compromise and transfer to secondary threat groups plummeted from eight hours in 2022 to a mere 22 seconds in 2025. While overall global median dwell time rose to 14 days, largely due to prolonged espionage operations, adversaries are increasingly bypassing traditional defenses by targeting virtualization infrastructure and backup systems to ensure "recovery deadlock" during extortion. The report also identifies a surge in highly interactive voice phishing, which has overtaken email as the top vector for cloud-related compromises. Furthermore, while AI is being incrementally integrated into reconnaissance and social engineering, Mandiant emphasizes that the majority of breaches still result from fundamental systemic failures. These evolving threats, including persistent backdoors with dwell times exceeding a year, underscore the urgent need for organizations to modernize their log retention policies and prioritize the security of their "Tier-0" identity and virtualization assets.

From fragmentation to focus: Can one security framework simplify compliance?

In "From Fragmentation to Focus," Sam Peters explores the escalating complexities of the modern cybersecurity landscape, driven by geopolitical instability and a rapidly expanding attack surface. As digital transformation progresses, businesses face a "messy" regulatory environment characterized by overlapping requirements like GDPR, NIS 2, and DORA. This fragmentation often leads to duplicated efforts, increased costs, and significant compliance fatigue for organizations of all sizes. To combat these challenges, the article positions ISO 27001 as a unifying "gold standard" framework. By adopting this internationally recognized standard, companies can transition from reactive defense to proactive risk management. ISO 27001 offers a flexible, risk-based approach that can be seamlessly mapped to various global regulations, thereby streamlining operations and reducing overhead. The article argues that a consolidated security strategy does more than ensure compliance; it fosters a security-first culture, builds digital trust, and serves as a critical driver for competitive advantage and long-term business resilience. Ultimately, moving toward a single, structured framework allows leaders to navigate uncertainty with greater confidence, transforming security from a burdensome cost center into a strategic asset that supports sustainable growth in an increasingly volatile global market.

Microservices Without Drama: Practical Patterns That Work

The article "Microservices Without Drama: Practical Patterns That Work" offers a pragmatic roadmap for implementing microservices without succumbing to architectural complexity. It emphasizes that while microservices enable independent team movement, they should only be adopted when data boundaries are crisp to avoid the "distributed monolith" trap. A core principle is absolute data ownership, where each service manages its own dataset, accessed via stable, versioned contracts using OpenAPI or AsyncAPI. The author advocates for a balanced communication strategy, favoring synchronous calls for immediate reads and asynchronous events for decoupled integrations. Operational success relies on "boring fundamentals" like standardized Kubernetes deployments, GitOps for configuration, and robust observability through OpenTelemetry and Prometheus. Reliability is further bolstered by defensive patterns, including circuit breakers, retries, and idempotency, ensuring the system remains resilient during failures. Security is addressed through mTLS and strict secrets management, moving beyond fragile IP-based allowlists. Ultimately, the piece argues that microservices provide true freedom only when teams invest in consistent standards and treat interfaces as public infrastructure. By prioritizing data integrity and operational repeatability over architectural trends, organizations can reap the benefits of scalability without the associated drama of unmanaged complexity.
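
The three defensive patterns named above (circuit breakers, retries, and idempotency) fit together roughly as in the following Python sketch. It is a minimal illustration, not code from the article: `CircuitBreaker`, `CircuitOpenError`, `call_with_retries`, the thresholds, and the `idempotency_key` keyword are all assumptions made for the example.

```python
import time
import uuid


class CircuitOpenError(RuntimeError):
    """Raised when the breaker is open and calls are being rejected."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after_s=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                raise CircuitOpenError("circuit open; skipping call")
            # Cool-down elapsed: allow one trial call (half-open state).
            self.opened_at = None
            self.failures = self.failure_threshold - 1
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0
        return result


def call_with_retries(breaker, fn, payload, attempts=3, base_delay_s=0.1,
                      sleep=time.sleep):
    """Retry fn with exponential backoff, reusing one idempotency key."""
    # The same key is sent on every attempt so the receiving service can
    # recognize a repeat and avoid applying the operation twice.
    key = str(uuid.uuid4())
    for attempt in range(attempts):
        try:
            return breaker.call(fn, payload, idempotency_key=key)
        except CircuitOpenError:
            raise  # do not hammer a dependency the breaker has given up on
        except Exception:
            if attempt == attempts - 1:
                raise
            sleep(base_delay_s * 2 ** attempt)
```

Retries are only safe here because the key stays constant across attempts; the receiving service is assumed to deduplicate on it. The breaker sits underneath the retry loop so that a dependency that keeps failing is cut off rather than retried indefinitely.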

The end of cloud-first: What compute everywhere actually looks like

The article "The End of Cloud-First" explores a fundamental transition toward a "compute-everywhere" architecture, where centralized cloud environments are no longer the default destination for every workload. This evolution is driven by the reality that the network is not a neutral substrate; bandwidth and latency constraints, coupled with the explosion of IoT data, have made the traditional cloud-first assumption increasingly untenable. The emerging model operates across three distinct layers: a gateway layer for protocol translation, an edge layer for localized processing near data sources, and a centralized cloud layer reserved for heavy-lifting tasks like model training and global analytics. Modern machine learning advancements now allow for efficient inference on constrained devices, empowering local hardware to filter and classify data autonomously rather than merely forwarding raw telemetry. However, this decentralized approach introduces significant operational complexity. IT leaders must now manage vast fleets of devices with intermittent connectivity and navigate a landscape where partial system failures are a normal steady state. Software updates become logistical challenges rather than simple deployments. Ultimately, the focus is shifting from simple cloud migration to sophisticated orchestration, ensuring that intelligence and compute are placed precisely where they deliver value while balancing performance, cost, and reliability.
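
As a rough illustration of an edge node that filters locally instead of forwarding raw telemetry, the Python sketch below keeps a rolling window of readings, queues only statistical outliers for the cloud, and holds them while the uplink is down. Everything in it is an assumption made for the example: the `EdgeFilter` class, the z-score rule, and the window and threshold values stand in for whatever model or classifier a real deployment would run.

```python
from collections import deque
from statistics import mean, pstdev


class EdgeFilter:
    """Hypothetical edge-layer filter: forward outliers, drop routine readings."""

    def __init__(self, window=20, z_threshold=3.0, min_samples=5):
        self.history = deque(maxlen=window)  # recent readings kept on-device
        self.z_threshold = z_threshold
        self.min_samples = min_samples
        self.outbox = deque()  # readings waiting for the uplink

    def ingest(self, value):
        """Classify one reading; return True if it was queued for the cloud."""
        is_anomaly = False
        if len(self.history) >= self.min_samples:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.z_threshold:
                is_anomaly = True
        self.history.append(value)
        if is_anomaly:
            self.outbox.append(value)
        return is_anomaly

    def flush(self, send, online):
        """Send queued readings when connected; keep them otherwise."""
        if not online:
            return 0
        sent = 0
        while self.outbox:
            send(self.outbox[0])   # remove only after a successful send
            self.outbox.popleft()
            sent += 1
        return sent
```

Treating a disconnected uplink as a normal state, rather than an error, mirrors the article's point that partial failure is the steady state at the edge: `flush` simply returns 0 and the queue survives until connectivity returns.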

We’re fighting over GPUs and memory – but power manufacturing may decide who scales first

In this article, Matt Coffel argues that while the global tech industry remains fixated on GPU shortages and silicon supply chains, the true bottleneck for scaling artificial intelligence lies in electrical manufacturing capacity. As data center power demands are projected to surge from 33 GW to 176 GW by 2035, the availability of critical infrastructure, such as switchgear, transformers, and power distribution units, has become the decisive factor in operational readiness. AI-intensive workloads demand unprecedented power densities and constant uptime, yet the manufacturing sector is currently struggling to keep pace with the rapid acceleration of AI deployment. Traditional lead times of eighteen to twenty-four months clash with the immediate needs of hyperscalers, exacerbated by a shortage of skilled trades and over-customized engineering. To overcome these constraints, Coffel suggests that operators must shift toward standardization, modularization, and prefabricated power systems while engaging manufacturers much earlier in the design process. Ultimately, the ability to scale will not be determined solely by who possesses the most advanced chips, but by who can most efficiently deploy the resilient electrical infrastructure required to keep those processors running at scale.

Spec-Driven Development: The Key to Protecting AI-Generated Data Products

In "Spec-Driven Development: The Key to Protecting AI-Generated Data Products," Guy Adams explores the rising threat of semantic drift in the era of AI-accelerated data engineering. Semantic drift occurs when data metrics gradually lose their original meaning through successive updates, potentially leading to costly business errors when executives rely on inaccurate interpretations of "headcount" or other key figures. While traditional DataOps focuses on recording what was built, it often fails to document the underlying intent, a gap that AI-assisted development significantly widens. To counter this, Adams advocates for spec-driven development, a software engineering methodology that prioritizes clear, structured specifications before coding begins. By defining a data product’s purpose and constraints upfront, organizations can leverage agentic AI to audit every proposed change against the original requirements. This ensures that new implementations maintain coherence rather than undermining a product’s utility. Although maintaining manual specifications was historically cost-prohibitive, Adams argues that current AI capabilities make automated spec maintenance both feasible and essential. Ultimately, adopting this "left-shifted" documentation approach allows enterprises to build drift-proof data products that remain reliable even as AI agents accelerate the pace of development and modification across complex enterprise systems.

IT Leaders Report Massive M&A Wave While Facing AI Readiness and Security Challenges

According to a recent ShareGate survey published by CIO Influence, IT leaders are navigating an unprecedented surge in mergers and acquisitions (M&A), with 80% of respondents currently involved in or planning such events. This massive wave, fueled by a 43% increase in global deal value during 2025, has positioned M&A as a primary catalyst for IT modernization. However, this acceleration brings significant hurdles, particularly regarding cybersecurity and AI readiness. While 64% of organizations migrate to Microsoft 365 specifically to bolster security, 41% of leaders identify compliance and data protection as top concerns during these transitions. The study also highlights a shift in leadership; IT operations and security teams, rather than business executives, are the primary drivers of AI adoption, such as Microsoft Copilot. Despite 62% of organizations already deploying Copilot, they face substantial blockers including poor data quality, complex governance, and access control issues. Furthermore, 55% of teams select migration tools before fully assessing integration risks, which can jeopardize long-term stability. Ultimately, the report emphasizes that for M&A success, IT must evolve into a strategic partner that integrates robust governance and security into the foundation of every digital migration.
