Red teaming – getting prepared for the inevitable

A red teaming exercise explores areas that other assessments would overlook, with the aim of mapping the overall attack chain. Unlike a penetration test, which usually lasts a week or two, a red teaming engagement should be considerably longer: its total elapsed time will typically be several months, or even up to a year, with the team carrying out a series of different exercises and leaving gaps between them. During the engagement, the team works to identify vulnerabilities and plan how criminals could exploit the weaknesses it finds. These could lie in a business’s people, network, or company inboxes, or even in physical access to its offices. A red teaming engagement has several stages, at both a technical and a physical level. ... The red team will spend a significant portion of its time mapping out an organisation’s physical and technical access points before attempting a breach. Preparation for a red teaming exercise takes significantly longer than for other security assessments, because there is often a very specific set of targets in mind, rather than a mandate to test any and every area of the business.

Navigating Active Directory Security: Dangers and Defenses

Threat actors typically need initial access to a domain-joined system in an organization, says Natarajan, and they can gain it in multiple ways, including spear-phishing emails with malicious attachments, drive-by download attacks, and exploitation of vulnerabilities in Internet-facing systems. Once a victim runs the malicious binaries, the attacker has a better chance of gaining initial access to the system. From there, attackers could exploit other system flaws to gain administrative privileges, and AD reconnaissance tools can help them understand the directory structure and choose their targets. Various misconfigurations, which experts agree are plentiful in AD environments, can then help them escalate their privileges to domain administrator. "To me, it's almost more attractive because there's not a patch for that," Will Schroeder, technical architect at SpecterOps, says of misconfigurations from an attacker's perspective. "There are ways that people can fix it, but over time this kind of debt and misconfiguration can build up." Because AD systems are so complex, small issues can create large security holes over time.

Programming Evolution: How Coding Has Grown Easier in the Past Decade

In the past decade, APIs have played a huge role in the evolution of programming. It's easy for developers to have a love-hate relationship with APIs. APIs create additional security risks that programmers need to manage. They often limit the functionality you can implement in an API-dependent app, because you can only do what the API supports. And APIs can become single points of failure for applications that depend centrally on them. On the other hand, APIs make programmers' lives easier by making it fast and simple to integrate disparate services and data. Until about 10 years ago, if you wanted to import data from a third-party platform into your app, you would probably have had to resort to an "ugly" technique, such as scraping the data off a web interface. Today, you can import the data easily and systematically using the platform's API ... Until about a decade ago, not only were there relatively few open standards that major vendors supported, but companies often went out of their way to keep their platforms incompatible with those of external organizations.
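As a minimal sketch of importing data through an API rather than scraping it, the Python below assumes a hypothetical endpoint (`https://api.example.com/v1/items`) that returns JSON shaped like `{"items": [{"id": ..., "name": ...}]}`; a real platform's URL, authentication, and response format will differ. Parsing is kept separate from the network call so it can be tested offline.

```python
import json
import urllib.request

# Hypothetical endpoint: substitute the platform's documented API URL.
API_URL = "https://api.example.com/v1/items"


def parse_items(payload: str) -> list[dict]:
    """Turn a JSON response body into a list of records.

    Assumes the (hypothetical) response shape {"items": [{"id": ..., "name": ...}]}.
    """
    data = json.loads(payload)
    return [{"id": item["id"], "name": item["name"]} for item in data.get("items", [])]


def fetch_items(url: str = API_URL) -> list[dict]:
    """Request structured data directly instead of scraping an HTML page."""
    with urllib.request.urlopen(url, timeout=10) as resp:
        return parse_items(resp.read().decode("utf-8"))


# Offline example with a canned response body.
sample = '{"items": [{"id": 1, "name": "widget"}, {"id": 2, "name": "gadget"}]}'
print(parse_items(sample))
```

Compared with scraping, the structured response does not break when the platform redesigns its web pages, which is much of what makes API-based imports systematic.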

Understanding and stopping 5 popular cybersecurity exploitation techniques

Criminals use stack pivoting in return-oriented programming (ROP) attacks, which chain together ROP gadgets to bypass protections like Data Execution Prevention (DEP). With stack pivoting, an attack moves from the real stack to a new, fake stack, which can be an attacker-controlled buffer such as one on the heap. From then on, the flow of program execution can be controlled from the heap. While Windows provides export address filtering (EAF), a next-gen cybersecurity solution can provide an access filter that uses a special protection flag on memory areas to prevent code from reading Windows Portable Executable (PE) headers and export/import tables. An access filter should also support an allowlist so that its heuristics can be tweaked as needed. ... Many advanced, next-gen cybersecurity solutions place hooks on sensitive API functions to intercept calls and perform checks, such as antivirus scanning, before allowing the kernel to service the request. Criminals can take advantage of the fact that only sensitive functions are monitored: by calling an unmonitored, non-sensitive function at an offset, so that the call actually reaches an important kernel service instead, cybercriminals can often evade security software.
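One heuristic that exploit-mitigation tools have used against stack pivoting is to check, at sensitive API calls, whether the stack pointer still lies within the current thread's stack region. The Python below only models that check: the `ThreadStack` bounds and the addresses are made-up values, and a real implementation runs natively and reads the bounds from the thread's own data structures.

```python
from dataclasses import dataclass


@dataclass
class ThreadStack:
    """Simplified bounds of one thread's stack (illustrative values).

    On Windows the stack grows downward, so valid addresses fall in [limit, base).
    A real check must also allow for the reserved region below `limit`, into
    which the stack can still grow.
    """
    base: int   # highest address (exclusive)
    limit: int  # lowest currently committed address


def is_stack_pivoted(sp: int, stack: ThreadStack) -> bool:
    """True if the stack pointer has left the thread's own stack, e.g. because
    it now points at a heap buffer holding a ROP chain."""
    return not (stack.limit <= sp < stack.base)


stack = ThreadStack(base=0x0014_0000, limit=0x0013_0000)
print(is_stack_pivoted(0x0013_F000, stack))  # False: still inside the real stack
print(is_stack_pivoted(0x0250_0000, stack))  # True: points into some other region
```

The check is cheap because it only compares one register value against two bounds, which is why it can run on every call to a hooked, sensitive function.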

AI has become a design problem

All the best data, model, and development practices in the world cannot fully guarantee perfectly behaved AI. In the end, good user interface design has to present AI appropriately to end users. An effective user interface can, for instance, tell users the provenance of its insights, recommendations, and decisions. ... Historically, UIs presented data as matter-of-fact. Common lists of data were not suspect; they simply regurgitated what was stored. But increasingly, the data being presented is sourced, culled, and shaped by AI, and therefore carries with it the suspect nature of the AI’s curation. UI design must introduce new mechanisms that let users inspect data provenance and reasoning, along with visual cues that communicate confidence and bias to the user. As we navigate the intricacies of a technology already integrated into many of our systems, we must design those systems responsibly, mindful of transparency, privacy, and fairness. Design can frame AI-driven user experiences in a way that engenders trust and helps end users understand the scope, strengths, and weaknesses of a given system. In turn, this alleviates fear and mistrust of the mysterious black boxes.
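One way to give a UI what it needs for provenance and confidence cues is to carry that metadata alongside every AI-produced value rather than passing along the bare result. This Python sketch is illustrative only: `AIResult`, its fields, and the confidence thresholds are hypothetical choices, not part of any framework.

```python
from dataclasses import dataclass, field


@dataclass
class AIResult:
    """An AI-produced value plus the metadata a UI needs to present it honestly."""
    value: str
    confidence: float                                 # model score in [0, 1]
    sources: list[str] = field(default_factory=list)  # provenance of the underlying data


def confidence_cue(score: float) -> str:
    """Map a raw score to a coarse label a UI could show (thresholds are arbitrary)."""
    if score >= 0.8:
        return "high confidence"
    if score >= 0.5:
        return "medium confidence"
    return "low confidence, please verify"


def render(result: AIResult) -> str:
    """Build display text that exposes confidence and provenance, not just the value."""
    cited = ", ".join(result.sources) or "no sources recorded"
    return f"{result.value} [{confidence_cue(result.confidence)}; based on: {cited}]"


suggestion = AIResult("Reorder paper towels", 0.62, ["purchase history", "seasonal trends"])
print(render(suggestion))
# Reorder paper towels [medium confidence; based on: purchase history, seasonal trends]
```

Keeping provenance in the data model, rather than bolting it on in the view layer, means every screen that shows an AI result has the same information available to surface.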

4 Key Observability Metrics for Distributed Applications

Latency is the time between a user performing an action and seeing its final result. For example, if a user adds an item to their shopping cart, latency measures the time between the item being added and the moment the user sees a response indicating that it was added successfully. If the service responsible for fulfilling this action were degraded, latency would increase, and without an immediate response, the user might wonder whether the site was working at all. To properly track latency in an Impact Data context, it's necessary to follow a single event throughout its entire lifetime. ...
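Following a single event throughout its lifetime usually means tagging it with an ID when the action starts and recording the elapsed time when the final response is delivered. This minimal Python sketch keeps everything in one process and uses `time.sleep` as a stand-in for the cart service; in a distributed system the ID would be propagated between services (for example, in a request header). `LatencyTracker` and its methods are hypothetical names.

```python
import statistics
import time
import uuid


class LatencyTracker:
    """Tracks end-to-end latency per event (hypothetical helper, not a library API)."""

    def __init__(self) -> None:
        self._started: dict[str, float] = {}  # event ID -> start timestamp (seconds)
        self.latencies: list[float] = []      # completed end-to-end latencies (seconds)

    def start(self) -> str:
        """Call when the user performs the action; returns the event's ID."""
        event_id = str(uuid.uuid4())
        self._started[event_id] = time.perf_counter()
        return event_id

    def finish(self, event_id: str) -> float:
        """Call when the user sees the final result; records and returns the latency."""
        elapsed = time.perf_counter() - self._started.pop(event_id)
        self.latencies.append(elapsed)
        return elapsed

    def p95(self) -> float:
        """95th-percentile latency; call after at least two events have completed."""
        # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
        return statistics.quantiles(self.latencies, n=20, method="inclusive")[-1]


tracker = LatencyTracker()
for delay in (0.01, 0.02, 0.03):
    event_id = tracker.start()  # user clicks "add to cart"
    time.sleep(delay)           # stand-in for the cart service doing its work
    tracker.finish(event_id)    # confirmation shown to the user
print(f"p95 latency: {tracker.p95() * 1000:.1f} ms")
```

Reporting a high percentile such as p95, rather than the mean, keeps occasional slow requests visible instead of averaging them away.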

Tracking error rates is fairly straightforward. Any 5xx (or even 4xx) status code your server returns in an HTTP response should be tagged and counted. Even situations that you've accounted for, such as caught exceptions, should be monitored, because they still represent a non-ideal state. These issues can act as warnings of deeper problems, where defensive coding handles the symptom without addressing the actual cause. Kuma can capture the error codes and messages thrown by your service, but this represents only a portion of the actionable data.
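A minimal error-rate counter along the lines described above might look like the following Python sketch. It is not Kuma's API; `ErrorRateTracker` and its methods are hypothetical. It counts 5xx (and optionally 4xx) responses against total traffic and separately tallies caught exceptions, which don't fail the request but still signal a non-ideal state.

```python
from collections import Counter


class ErrorRateTracker:
    """Counts error responses and handled exceptions (hypothetical helper)."""

    def __init__(self, count_4xx: bool = True) -> None:
        self.count_4xx = count_4xx                        # whether client errors count toward the rate
        self.total = 0                                    # all responses seen
        self.by_status: Counter[str] = Counter()          # "5xx"/"4xx" -> count
        self.caught_exceptions: Counter[str] = Counter()  # exception type name -> count

    def record_response(self, status: int) -> None:
        self.total += 1
        if 500 <= status <= 599:
            self.by_status["5xx"] += 1
        elif 400 <= status <= 499 and self.count_4xx:
            self.by_status["4xx"] += 1

    def record_caught(self, exc: Exception) -> None:
        # The request may still succeed, but the non-ideal state is worth tracking.
        self.caught_exceptions[type(exc).__name__] += 1

    def error_rate(self) -> float:
        """Fraction of responses that were counted as errors."""
        return sum(self.by_status.values()) / self.total if self.total else 0.0


tracker = ErrorRateTracker()
for status in [200, 200, 404, 500, 200, 503, 201, 200]:
    tracker.record_response(status)
try:
    int("not-a-number")
except ValueError as exc:  # handled, but still counted
    tracker.record_caught(exc)
print(dict(tracker.by_status), f"error rate: {tracker.error_rate():.1%}")
```

Keeping caught exceptions in a separate counter stops them from inflating the user-facing error rate while still making a rising count of "handled" failures visible.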

How to avoid the network-as-a-service shell game

Our Rule One says that your project has to meet financial targets, meaning a target ROI. NaaS makes it easier to figure out whether a project meets CFO targets, but remember that anything sold as a service has to include a profit margin for the seller. The cloud hasn’t replaced every data center, not because of CIO intransigence but because the cloud isn’t always cheaper. NaaS won’t always be cheaper either, so a NaaS-based project will have to prove it’s a better strategy than capital purchasing would be. Your trip to the CFO’s office just got more complicated. Another issue with NaaS is cost control. With traditional networking, you pay a fixed amount for fixed capacity, so your cost is predictable. Any kind of consumption-based pricing risks generating some truly eye-popping bills if usage is greater than expected, and most such systems don’t make it easy to prevent excess usage. Serverless cloud computing customers are already complaining about multi-hundred-percent cost overruns. It seems you can either face your CFO during project approval or face your CFO when you blow your budget. The latter isn’t likely to be a great career move.
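The budgeting risk is easy to see with a little arithmetic. The figures in this Python sketch are invented for illustration: a flat $10,000 a month for fixed capacity versus $100 per usage unit under consumption pricing, over 12 months. At the planned 80 units a month, consumption pricing looks cheaper, but if usage triples for one quarter, the same plan ends up costing more than the fixed option.

```python
def fixed_cost(monthly_fee: float, months: int) -> float:
    """Traditional model: the same bill every month, regardless of usage."""
    return monthly_fee * months


def consumption_cost(unit_price: float, monthly_usage: list[float]) -> float:
    """Consumption model: pay only for the units actually used each month."""
    return unit_price * sum(monthly_usage)


# Hypothetical figures for a 12-month plan.
FIXED_MONTHLY = 10_000.0          # flat fee for fixed capacity
UNIT_PRICE = 100.0                # price per usage unit under consumption pricing
planned = [80.0] * 12             # usage the project was approved on
actual = [80.0] * 9 + [240.0] * 3 # usage triples in the last quarter

print(fixed_cost(FIXED_MONTHLY, 12))          # 120000.0
print(consumption_cost(UNIT_PRICE, planned))  # 96000.0
print(consumption_cost(UNIT_PRICE, actual))   # 144000.0
```

With these numbers the break-even point is 100 units a month (10,000 / 100): below it consumption pricing wins, above it the bill grows without limit. The comparison also assumes the fixed capacity could absorb the spike; with a fixed service, a spike hits a capacity ceiling rather than the budget.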

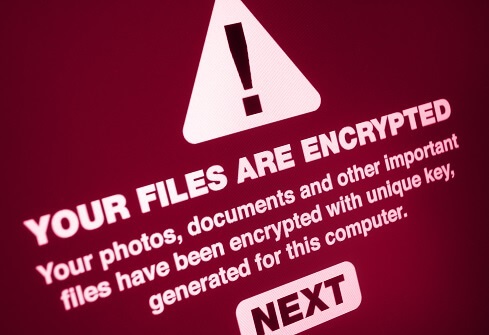

What You Need to Know About Ransomware Insurance

Ransomware insurance is like any other type of cyber insurance. "Cyber insurance is about assessing the cyber risk, determining the potential losses due to attacks, and then obtaining coverage," said Bhavani Thuraisingham, a professor at the University of Texas at Dallas and executive director of the university’s Cyber Security Research and Education Institute. The unique challenge with ransomware is that once attackers get into a system, they have access to everything in it. "[They aren't] just stealing your data but crippling your system by encrypting all of the data and files so that you can't have access unless you pay them a ransom," she explained. "It's like someone breaking into your house and stealing your jewelry, but also kidnapping your child and demanding a ransom," Thuraisingham added.

Ransomware insurance is generally sold along with, or in addition to, a general cyber insurance policy. The appropriate cyber liability insurance policy depends primarily on the applicant's industry and operations, observed Jack Dowd, an account executive at insurance provider The Dowd Agencies.

Ensuring digital maturity in the boardroom

Becoming digitally mature allows organisations to future-proof their business. One thing the pandemic made clear is that the ability to remain agile is paramount, and digital transformation enables this. Utilising cloud technologies gives enterprises the freedom and flexibility to work wherever and however they need to. From there, businesses can further foster a flexible culture, promoting a better work-life balance for employees. However, as society returns to normal, many in the boardroom will recognise that digital transformation offers benefits beyond remote working. Scalability is an essential factor. Because technology is not bound by physical restrictions, digital services and solutions can be scaled up, enhanced and altered at a moment’s notice. This not only helps to keep organisations agile, but also lays the foundations for future growth. Together, these higher levels of scalability and agility enable greater growth and profitability. Efficient and cost-effective processes allow leaders to focus on wider business opportunities, and greater access to data enables better decision-making, faster.

Ransomware Landscape: Notorious REvil Is Only One Operator

Many ransomware-wielding attackers will first attempt to contact victims directly and get them to pay a ransom, promising that if the organization pays quickly, the attackers will never leak its data or attempt to "name and shame" it. Hence the number of victims who simply pay remains unknown. Furthermore, the damage caused by a single attack from a more sophisticated ransomware operation, such as REvil, can be severe. Miami-based Kaseya's software is used by a number of managed service providers (MSPs) to manage clients' endpoints, and in a single attack abusing that software, up to 60 MSPs and 1,500 of their clients were infected with REvil (aka Sodinokibi) ransomware. REvil has also been tied to the attack against meat-processing giant JBS, which paid the attackers an $11 million ransom, and to many other attacks. Another operation, called DarkSide, claimed credit for the May attack against Colonial Pipeline Co., which supplies 45% of the fuel used along the East Coast. Shortly after the attack, DarkSide claimed it would shut down its ransomware-as-a-service operation because of unwanted publicity and attention.

Quote for the day:

"It is the responsibility of leadership to provide opportunity, and the responsibility of individuals to contribute." -- William Pollard
