Mind the gap – how close is your data to the edge?

A less-talked-about technology might be the key to unlocking the potential of

technologies like SD-WAN, 5G, edge computing, and IoT. Network Functions

Virtualisation (NFV) hosting pushes the limits of modern networks by allowing

companies to create an on-demand virtual edge to their networks, closing that

gap between an enterprise’s network and the various edge devices where

transactions and/or data from mission-critical applications are created. Having

NFV as part of your SD-WAN and cloud connectivity strategy enables traffic to

make the smallest number of hops on the public internet before it passes

through a private, secure software-defined network (SDN). In other words, you

shorten that first mile, and optimise the middle- and last-mile by having your

data travel safely and quickly over a private connection. Think about every time

you tap your card at a PoS terminal on a shopping trip or a night out. To

process this transaction, your financial details need to travel to a central

point and back again. How long do you want those details traveling across public

infrastructure? With a virtual edge, this distance can be significantly

minimised, providing predictable, secure connectivity.

Discovering Symbolic Models From Deep Learning With Inductive Biases

The Symbolic Model Framework is a general approach that leverages the advantages

of both traditional deep learning and symbolic regression. Graph Networks (GNs

or GNNs) serve as the example, because their strong, well-motivated inductive

biases are well suited to complex, explainable problems. Symbolic regression is

applied to fit the different internal parts of the learned model, which operate

on reduced-size representations. A number of symbolic expressions can also be

joined together, giving rise to an overall algebraic equation equivalent to the

trained Graph Network. The framework can be applied to problems such as

rediscovering force laws, rediscovering Hamiltonians, and a real-world

astrophysical challenge, demonstrating that generalization can be drastically

improved and that plausible analytical expressions can be distilled.

Not only can it recover the injected closed-form physical laws for the Newtonian

and Hamiltonian examples, but it can also derive a new interpretable closed-form

analytical expression that can be useful in astrophysics.
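The idea of fitting a closed-form expression to one internal part of a learned model can be sketched in a few lines. This is only an illustration under stated assumptions, not the method from the study: a synthetic inverse-square function stands in for a learned message function, and a brute-force search over a small hand-picked set of candidate formulas stands in for a real symbolic regression engine. The names `learned_message`, `CANDIDATES`, `fit_scale`, and `best_expression` are hypothetical.

```python
# Hypothetical stand-in for a learned message function. In the real setting
# this would be a trained network component; here it is an inverse-square law.
def learned_message(m1, m2, r):
    return m1 * m2 / r**2

# Small hand-picked library of candidate closed forms (an assumption; a real
# symbolic regression tool searches a much larger expression space).
CANDIDATES = {
    "m1*m2/r": lambda m1, m2, r: m1 * m2 / r,
    "m1*m2/r**2": lambda m1, m2, r: m1 * m2 / r**2,
    "m1*m2/r**3": lambda m1, m2, r: m1 * m2 / r**3,
    "(m1+m2)/r**2": lambda m1, m2, r: (m1 + m2) / r**2,
}

def fit_scale(f, samples, targets):
    """Return the least-squares scale c for c*f(x) and the resulting MSE."""
    preds = [f(*s) for s in samples]
    c = sum(p * y for p, y in zip(preds, targets)) / sum(p * p for p in preds)
    mse = sum((c * p - y) ** 2 for p, y in zip(preds, targets)) / len(targets)
    return c, mse

def best_expression(samples, targets):
    """Pick the candidate formula with the lowest mean squared error."""
    scored = []
    for name, f in CANDIDATES.items():
        c, mse = fit_scale(f, samples, targets)
        scored.append((mse, name, c))
    mse, name, c = min(scored)
    return name, c, mse

# Sample the "learned" function on a small grid of inputs.
samples = [(m1, m2, r)
           for m1 in (1.0, 2.0, 3.0)
           for m2 in (0.5, 1.5)
           for r in (1.0, 2.0, 4.0)]
targets = [learned_message(*s) for s in samples]

name, scale, mse = best_expression(samples, targets)
print(name, scale, mse)  # m1*m2/r**2 1.0 0.0
```

Because the search runs on the low-dimensional inputs and outputs of a single component, even this naive enumeration recovers the injected law; the practical appeal of the approach is that each component is small enough for symbolic search to be tractable.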

Is Security An Illusion? How A Zero-Trust Approach Can Make It A Reality

One of the first steps to implementing zero-trust measures is to find an

infrastructure and website security partner that specializes in zero trust and

can provide consultation and solutions. Using a partner like this can enable

an easy implementation across your company’s environment and systems. Look for

a partner that can identify segments and microsegments important to your

organization and ensure that security measures are implemented company-wide.

This means treating the IT environment as segments and microsegments, with data

such as PII or customer data held in separate areas that need to be

accessed independently. For example, if your organization has implemented a

third-party application or applications that support HR functions (including

payroll, employee information and more), these partners can help ensure that

individuals with access to one of the components (or segments) will not be

able to access any of the other segments without separate authorization.

Multifactor authentication (MFA) is also a core component of zero-trust

implementation.
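The segment rule described above (access to one segment never implies access to another, and MFA is required) can be expressed as a deny-by-default check. The sketch below is a simplified illustration, not any vendor's implementation; the `User` class, the `can_access` function, and the segment names are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class User:
    name: str
    # Segments this user has been explicitly authorised for (hypothetical model).
    grants: set = field(default_factory=set)
    # Whether the user has passed multifactor authentication in this session.
    mfa_verified: bool = False

def can_access(user: User, segment: str) -> bool:
    """Deny by default: require verified MFA and an explicit grant for this segment."""
    return user.mfa_verified and segment in user.grants

alice = User("alice", grants={"payroll"}, mfa_verified=True)
bob = User("bob", grants={"payroll", "employee_records"}, mfa_verified=False)

print(can_access(alice, "payroll"))           # True
print(can_access(alice, "employee_records"))  # False: no grant for this segment
print(can_access(bob, "payroll"))             # False: MFA not verified
```

The design choice worth noting is that nothing is inherited: a grant for `payroll` says nothing about `employee_records`, so each segment needs its own authorization.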

Common Linux vulnerabilities admins need to detect and fix

Perhaps, from a security perspective, the most important individual piece of

software running on a consumer PC is your web browser. Because it's the tool

you use most to connect to the internet, it's also going to be the target of

the most attacks. It's therefore important to make sure you've incorporated

the latest patches in the release version you have installed and to make sure

you're using the most recent release version. Besides not using unpatched

browsers, you should also consider the possibility that, by design, your

browser itself might be spying on you. Remember, your browser knows everywhere

on the internet you've been and everything you've done. And, through the use

of objects like cookies (data packets web hosts store on your computer so

they'll be able to restore a previous session state), complete records of your

activities can be maintained. Bear in mind that you "pay" for the right to use

most browsers -- along with many "free" mobile apps -- by being shown ads.

Consider also that ad publishers like Google make most of their money by

targeting you with ads for the kinds of products you're likely to buy and, in

some cases, by selling your private data to third parties.

Agile Methodology Finally Infiltrates the C-Suite

What started as a snowball slowly gathered mass and speed, launching a genuine

agile revolution. Today, that revolution has finally reached the executive

suite. Agility is officially a C-level concern. In fact, a recent survey of

senior business leaders, conducted by IDC and commissioned by ServiceNow,

found that 90% of European CEOs consider agility critical to their company's

success. That’s no surprise after a year in which the ability to react quickly

and effectively to new business challenges took center stage. CEOs are

increasingly aware of the success agile companies enjoy. In my experience,

these organizations are well-positioned to create great customer and employee

experiences, drive productivity, and attract and retain the best talent.

However, rather than talking about agility as a standalone C-suite priority, I

see it as a foundational enabler of these three key organizational priorities.

... When measured against five types of organizational agility, IDC found

that one third of organizations sit in the lower “static” or “disconnected”

tiers, while nearly half are categorized as the “in motion” middle tier of the

agility journey. Just one in five (21%) have attained the top levels of

“synchronized” and “agile.”

Preparing for your migration from on-premises SIEM to Azure Sentinel

Many organizations today are making do with siloed, patchwork security

solutions even as cyber threats are becoming more sophisticated and

relentless. As the industry’s first cloud-native SIEM and SOAR (security

orchestration, automation, and response) solution on a major public cloud, Azure

Sentinel uses machine learning to dramatically reduce false positives, freeing

up your security operations (SecOps) team to focus on real threats. Moving to

the cloud allows for greater flexibility—data ingestion can scale up or down

as needed, without requiring time-consuming and expensive infrastructure

changes. Because Azure Sentinel is a cloud-native SIEM, you pay for only the

resources you need. In fact, the Forrester Total Economic Impact™ (TEI) of

Microsoft Azure Sentinel found that Azure Sentinel is 48 percent less

expensive than traditional on-premises SIEMs. And Azure Sentinel’s AI and

automation capabilities provide time-saving benefits for SecOps teams,

combining low-fidelity alerts into potential high-fidelity security incidents

to reduce noise and alert fatigue.
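To illustrate what combining low-fidelity alerts into a higher-fidelity incident can look like, here is a minimal sketch that groups alerts by affected entity and time proximity. It is not Azure Sentinel's algorithm, which the text does not describe; the `correlate` function, the alert fields, and the thresholds are all assumptions chosen for illustration.

```python
from collections import defaultdict

def correlate(alerts, window_seconds=300, min_alerts=3):
    """Group alerts by entity. A run of at least `min_alerts` alerts whose
    consecutive gaps are at most `window_seconds` becomes one incident."""
    by_entity = defaultdict(list)
    for alert in alerts:
        by_entity[alert["entity"]].append(alert)

    incidents = []
    for entity, items in by_entity.items():
        items.sort(key=lambda a: a["ts"])
        cluster = [items[0]]
        for alert in items[1:]:
            if alert["ts"] - cluster[-1]["ts"] <= window_seconds:
                cluster.append(alert)
            else:
                # Gap too large: close the current cluster and start a new one.
                if len(cluster) >= min_alerts:
                    incidents.append({"entity": entity, "alerts": cluster})
                cluster = [alert]
        if len(cluster) >= min_alerts:
            incidents.append({"entity": entity, "alerts": cluster})
    return incidents

# Hypothetical alert stream: timestamps are seconds since an arbitrary start.
alerts = [
    {"entity": "host-a", "ts": 0, "rule": "failed_login"},
    {"entity": "host-a", "ts": 60, "rule": "failed_login"},
    {"entity": "host-a", "ts": 120, "rule": "new_admin_account"},
    {"entity": "host-b", "ts": 30, "rule": "failed_login"},
    {"entity": "host-a", "ts": 5000, "rule": "failed_login"},
]

incidents = correlate(alerts)
print(len(incidents), incidents[0]["entity"], len(incidents[0]["alerts"]))  # 1 host-a 3
```

Five raw alerts collapse into a single incident for `host-a`, while the isolated alerts stay below the threshold; that reduction is the noise-cutting effect the paragraph refers to.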

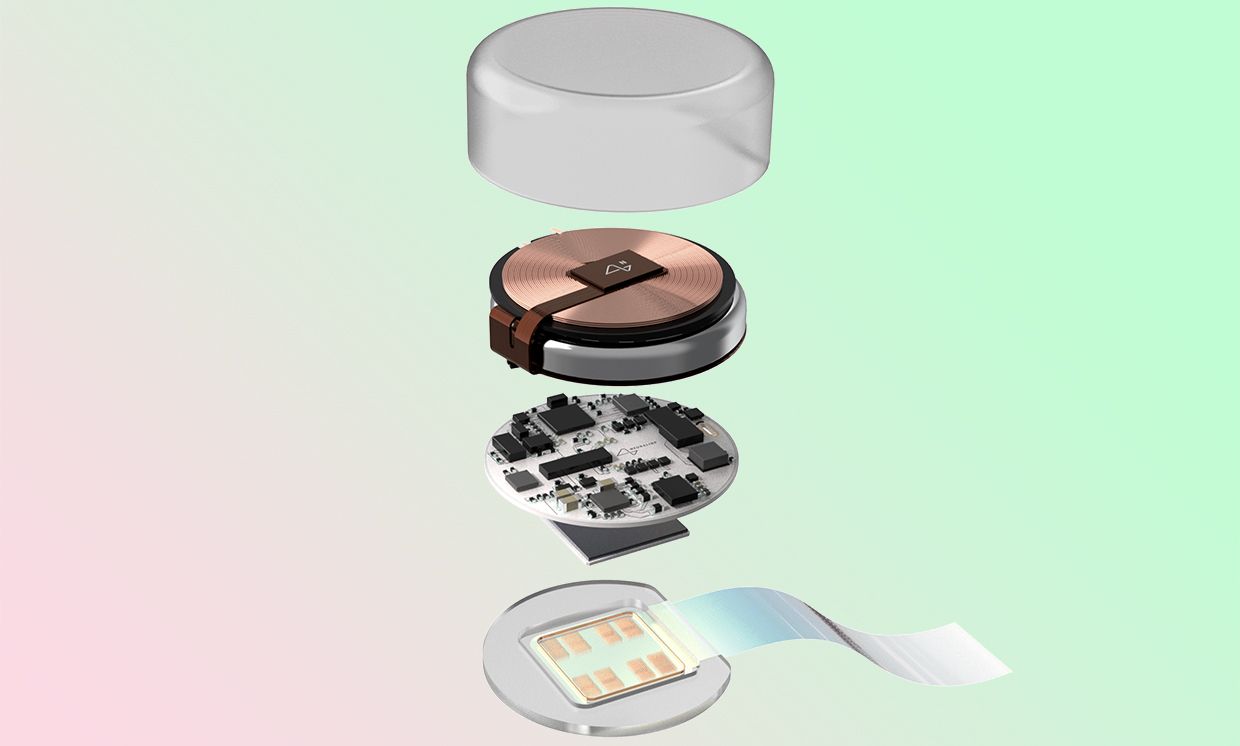

Exclusive Q&A: Neuralink’s Quest to Beat the Speed of Type

Most machine learning is open-loop. Say you have an image and you analyze it

with a model and then produce some results, like detecting the faces in a

photograph. You have some inference you want to do, but how quickly you do it

doesn't generally matter. But here the user is in the loop—the user is

thinking about moving and the decoder is, in real time, decoding those

movement intentions, and then taking some action. It has to act very quickly

because if it’s too slow, it doesn't matter. If you throw a ball to me and it

takes my BMI five seconds to infer that I want to move my arm forward—that’s

too late. I’ll miss the ball. So the user changes what they’re doing based on

visual feedback about what the decoder does: That’s what I mean by closed

loop. The user makes a motor intent; it’s decoded by the Neuralink device; the

intended motion is enacted in the world by physically doing something with a

cursor or a robot arm; the user sees the result of that action; and that

feedback influences what motor intent they produce next. I think the closest

analogy outside of BMI is the use of a virtual reality headset—if there’s a

big lag between what you do and what you see on your headset, it’s very

disorienting, because it breaks that closed-loop system.

Inside Google’s Quantum AI Campus

Google Quantum AI Lab connects the different pieces of quantum computing

together using open source software and Cloud APIs. Its products include

open-source libraries such as Cirq, OpenFermion, and TensorFlow Quantum. Cirq

is Google’s way of defining and modifying quantum circuits. It allows

programmers to design and create quantum circuits, analyse them using simulators

and then send them to hardware using Google’s quantum computing service API.
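To make the define-then-simulate workflow concrete, here is a toy state-vector simulation of a two-qubit Bell circuit in plain Python. It deliberately does not use Cirq or reproduce its API; the circuit representation and the helper names `apply_h`, `apply_cnot`, and `simulate` are hypothetical, chosen only to show what a simulator does with a circuit description.

```python
import math

def apply_h(state, target, n):
    """Apply a Hadamard gate to qubit `target` of an n-qubit state vector."""
    s = 1 / math.sqrt(2)
    bit = 1 << (n - 1 - target)  # qubit 0 is the most significant bit
    new = [0.0] * len(state)
    for i, amp in enumerate(state):
        if i & bit:              # |1> -> (|0> - |1>) / sqrt(2)
            new[i ^ bit] += s * amp
            new[i] -= s * amp
        else:                    # |0> -> (|0> + |1>) / sqrt(2)
            new[i] += s * amp
            new[i ^ bit] += s * amp
    return new

def apply_cnot(state, control, target, n):
    """Flip the target qubit in every basis state where the control is 1."""
    cbit = 1 << (n - 1 - control)
    tbit = 1 << (n - 1 - target)
    new = [0.0] * len(state)
    for i, amp in enumerate(state):
        j = i ^ tbit if i & cbit else i
        new[j] += amp
    return new

def simulate(circuit, n):
    """Run a list of gate tuples on the all-zeros state and return amplitudes."""
    state = [0.0] * (2 ** n)
    state[0] = 1.0
    for op in circuit:
        if op[0] == "H":
            state = apply_h(state, op[1], n)
        elif op[0] == "CNOT":
            state = apply_cnot(state, op[1], op[2], n)
        else:
            raise ValueError(f"unsupported gate: {op[0]}")
    return state

# Step 1: define the circuit. Step 2: analyse it in the simulator.
bell_circuit = [("H", 0), ("CNOT", 0, 1)]
state = simulate(bell_circuit, n=2)
probs = {format(i, "02b"): round(a * a, 6) for i, a in enumerate(state)}
print(probs)  # {'00': 0.5, '01': 0.0, '10': 0.0, '11': 0.5}
```

The two qubits are measured as 00 or 11 with equal probability and never as 01 or 10, which is the entanglement signature one would check in a simulator before sending the same circuit to hardware.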

OpenFermion, on the other hand, is a library for quantum simulations,

especially quantum chemistry and electronic structure calculations. The

error-corrected quantum computer will be the size of a tennis court, said Erik

Lucero, Lead Engineer, Quantum Operations and Site Lead at Google Santa

Barbara. Within the quantum computer, one million qubits will operate in

concert, directed by a surface code error correction. Marissa Giustina,

Quantum Electronics Engineer and Research Scientist at Google, is part of the

team building the cryogenic hardware that facilitates information transfer.

She said the systems inside the campus look like racks of electronics

operating at room temperature, and connected to a big cylinder, the dilution

refrigerator, as seen below.

Scrum uses an iterative approach to product development, which means that the

team delivers usable work frequently. This small change is significant because

by delivering usable work, the Scrum team is learning by doing and is not

building up technical debt, or unfinished work, which will need to be completed

later. By delivering a done, usable increment each Sprint, the Scrum team

eliminates waste and can gather feedback on what was delivered, enabling faster

learning. The graphic below by Henrik Kniberg is my favorite example of this

concept. ... In the end, the team met its goal of delivering the required

business functionality by the deadline. I’m confident this would have been

impossible without the Scrum framework. By making decisions based on what they

knew at the time and adapting to what they learned through experience, the team

also became more efficient. By focusing only on the essentials, the team was

able to eliminate waste. And, by delivering usable work frequently, the team was

able to gain more transparency about the work that was ahead of them,

eliminating the accumulation of technical debt.

Autonomous Security Is Essential if the Edge Is to Scale Properly

The emerging problem in front of us is how to deliver secure dynamic networking

at this extreme scale while meeting the economic and security requirements of

the various tiers of service. For instance, SD-WAN for large enterprises has a

price point that allows for manual life-cycle operations, but secure networking

for small and midsize businesses and the IIoT does not. Security policy operations

are manually arbitrated in the enterprise market today, but such manual

operations will never work at the future scales we're talking about. Finally,

enterprise networks are relatively static, but the fully connected,

smart-everything world ahead of us will feature highly dynamic, zero-trust

networks at extreme scale. The upshot: For secure networking to function at the

scale and price we need, it must become autonomous. When you unpack the nature

of cloud-delivered secure endpoint services, you immediately discover the common

limiting factor for cost, security, scale, and reliability: people. Right now,

secure network operations are manually arbitrated. For example, deployment of a

single secure SD-WAN endpoint takes five to nine worker-hours.
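A quick back-of-the-envelope calculation shows why that per-endpoint figure rules out manual operations at scale. The five-to-nine-hour range comes from the text above; the fleet sizes are hypothetical and chosen only to show the trend.

```python
# Worker-hours per secure SD-WAN endpoint, as quoted in the text.
HOURS_PER_ENDPOINT = (5, 9)

def deployment_hours(endpoints):
    """Return the (low, high) total worker-hours to deploy `endpoints` endpoints."""
    low, high = HOURS_PER_ENDPOINT
    return endpoints * low, endpoints * high

# Hypothetical fleet sizes, from a single enterprise to an IIoT-scale rollout.
for n in (100, 10_000, 1_000_000):
    low, high = deployment_hours(n)
    print(f"{n:>9,} endpoints: {low:,} to {high:,} worker-hours")
```

Manual effort grows linearly with the number of endpoints, so a rollout of a million endpoints would need five to nine million worker-hours for deployment alone.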

Quote for the day:

"A leadership disposition guides you

to take the path of most resistance and turn it into the path of least

resistance." -- Dov Seidman
