Financial institutions must be prepared, however, for cybercriminals to counter new defences with continually evolving methods of their own. Instead of executing cyberattacks with the intention of stealing money or making fraudulent payments, cybercriminals may target the machine learning processes, embedding fraudulent mechanics into the way the AI engines work. “One of the big concerns, especially at the regulatory level for the future, is ultimately the underlying data integrity,” Holt says. “So, if the attackers don’t do big enormous payouts immediately but attempt to alter the underlying data, how would that be spotted?” Therein lies the danger for financial services companies that are overly optimistic about the potential of AI in cybersecurity. Dries Watteyne, head of SWIFT’s cyber fusion centre, urges caution in this area. “When talking about the potential of machine learning, I think we shouldn’t forget everything we achieved to date without it.”

Windows Subsystem for Linux 2 Moving into General Availability

As discussed previously, WSL 2 is a change in architecture from WSL 1. Where WSL 1 required a translation layer between the Linux system calls and the Windows NT kernel, WSL 2 ships with a lightweight VM running a full Linux kernel. This VM runs directly on the Windows Hypervisor layer. This kernel includes full system call compatibility and allows for running apps like Docker and FUSE natively on Linux. With this new implementation, the Linux kernel has full access to the Windows file system. This new release brings large performance improvements, especially for interactions that require accessing the file system. According to Craig Loewen, Program Manager at Microsoft, this could be a 3 to 6 times performance improvement depending on how file intensive the application is. He further mentions that unzipping tarballs could see a 20 times performance increase. With the upcoming new version of Windows 10, currently known as version 2004, Microsoft has indicated that the installation and updating process of WSL 2 will be streamlined.

Zoom vs. Microsoft Teams: Video chat apps for working from home, compared

Teams has a similar feel to Slack -- you can talk to team members privately or in specific channels, and you can call attention to the whole group or just an individual with the mention feature. You can video chat with up to 250 people at once with Teams, or present live to up to 10,000 people. Share meeting agendas prior to a conference, invite external guests to join a meeting, and access past meeting recordings and notes. Meetings can be scheduled in the Teams app or through Outlook. ... The Zoom video conference app works for Android, iOS, PC and Mac. The app offers a basic free plan that hosts up to 100 participants. There are also options for small and medium business teams ($15-$20 a month per host) and large enterprises for $20 a month per host with a 50-host minimum. You can adjust meeting times, and select multiple hosts. Up to 1,000 users can participate in a single Zoom video call, and 49 videos can appear on the screen at once. The app has HD video and audio capabilities, collaboration tools like simultaneous screen-sharing and co-annotation, and the ability to record meetings and generate transcripts.

Creating a Text-to-speech engine with Google Tesseract and Arm NN on Raspberry Pi

The network’s architecture can be divided into three significant steps. The first one takes the input image and then extracts features using several convolutional layers. These layers partition the input image horizontally. For each partition, these layers determine the set of image column features. The sequence of column features is used in the second step by the recurrent layers. The recurrent neural networks (RNNs) are typically composed of long short-term memory (LSTM) layers. LSTM revolutionized many AI applications, including speech recognition, image captioning, and time-series analysis. OCR models use RNNs to create the so-called character-probability matrix. This matrix specifies the confidence that the given character is found in the specific partition of the input image. Thus, the last step uses this matrix to decode the text from the image. Usually, people use the connectionist temporal classification (CTC) algorithm. CTC aims at converting the matrix into the word or sequence of words that makes sense.
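
The decoding step described above can be illustrated with a minimal sketch of greedy CTC decoding. This is a simplification and not necessarily what Tesseract does internally; production decoders often use beam search, sometimes with a dictionary. The function name `ctc_greedy_decode`, the tiny alphabet, and the confidence values are all made up for illustration, and the sketch assumes index 0 of the alphabet is the CTC blank symbol.

```python
def ctc_greedy_decode(prob_matrix, alphabet, blank_index=0):
    """Greedy CTC decoding: best symbol per partition, merge repeats, drop blanks."""
    decoded = []
    previous = blank_index
    for column in prob_matrix:
        # Index of the most confident symbol for this image partition.
        best = max(range(len(column)), key=lambda i: column[i])
        # Emit only when the symbol is not blank and differs from the previous step.
        if best != blank_index and best != previous:
            decoded.append(alphabet[best])
        previous = best
    return "".join(decoded)


# Hypothetical alphabet; index 0 is assumed to be the CTC blank symbol.
ALPHABET = ["<blank>", "a", "c", "t"]

# Made-up confidences: one row per image partition, one column per alphabet entry.
MATRIX = [
    [0.10, 0.10, 0.70, 0.10],  # c
    [0.10, 0.10, 0.70, 0.10],  # c again (repeat, merged)
    [0.80, 0.10, 0.05, 0.05],  # blank
    [0.10, 0.70, 0.10, 0.10],  # a
    [0.10, 0.10, 0.10, 0.70],  # t
    [0.10, 0.10, 0.10, 0.70],  # t again (repeat, merged)
]

print(ctc_greedy_decode(MATRIX, ALPHABET))
```

The blank symbol is what lets a decoder keep genuinely doubled letters: two identical characters separated by a blank are emitted twice, while back-to-back repeats without a blank collapse into one.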

Collaboration answers the call

Senior Reporter Matthew Finnegan, who covers collaboration for Computerworld, addresses the question in the back of everyone's mind: "Remote working, now and forevermore?" Surveys show that the majority of people prefer to work from home — and in organizations that have had mature work-from-home policies for a while, many employees have settled into their new reality as if it's no big deal. The office won't go away overnight. But as long as productivity endures, and as collaboration tools inevitably improve, why not allow people to work wherever they like? Matthew and IDG TechTalk's Juliet Beauchamp discuss these and other possibilities on a special episode of Today in Tech. One thing's for sure: Videoconferencing is proving itself the lifeblood of remote work. But can networks handle it? By all accounts, the public internet and even cloud services have held up remarkably well. Yet as analyst Zeus Kerravala observes in "Videoconferencing quick fixes need a rethink when the pandemic is over," written by Network World contributor Sharon Gaudin, those who return to the office and want to continue Zooming or Webexing could face obstacles.

Why Industries Should Prepare For Mass Blockchain Adoption

First and foremost, the token market is likely to be significantly reduced this year, and only the most highly demanded and well-developed projects will remain as the digital assets traded on exchanges that are increasingly being forced to comply with legal requirements. Another change this year will be a gradual transition to turnkey solutions. The idea of blockchain turnkey solutions was first presented by Bitwings, an official blockchain-based solution of the leading Spanish mobile operator Wings Mobile. Its goal was to create the most secure standards for e-devices without compromising the operating system and its performance. To integrate turnkey solutions, companies need to conduct internal research: analysis of the current market and existing problems, and the potential of the blockchain in different sectors. It’s also worth studying the existing centralized and decentralized solutions and deciding how to integrate the solution into production processes without disrupting their performance. The latter is the most important point; it is one that all executive officers should pay attention to. They must consider the most efficient options for integrating blockchain into their working processes.

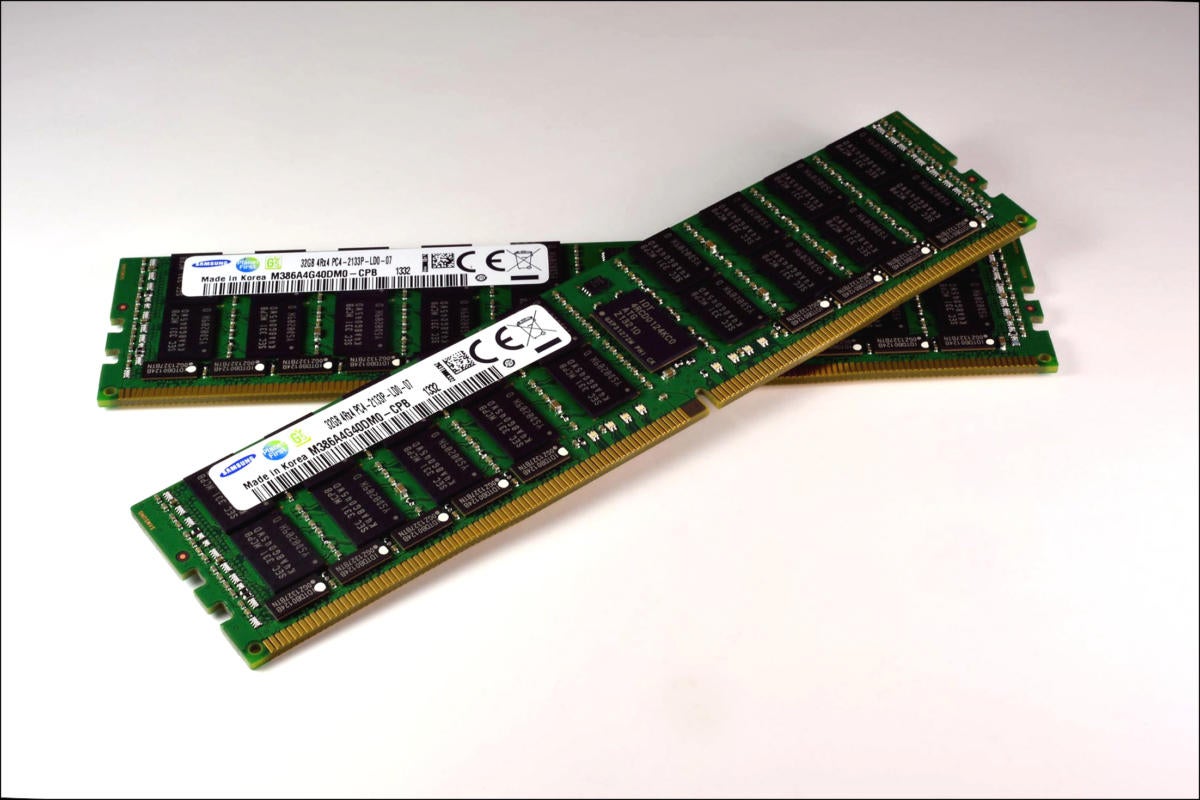

DDR5 memory promises a significant speed boost

"What's important about DDR5 is the high level of integration it provides," says Jim Handy, principal analyst with Objective Analysis, an analyst firm specializing in the memory market. "The people who defined this spec took advantage of the fact that Moore's Law not only reduces DRAM's price per bit, but it also makes it cheaper to add increasing amounts of powerful logic to the chip. They have artfully used this to improve the CPU-DRAM bandwidth, to move the Memory Wall a little farther out." The Same Bank Refresh is a good example, Handy says. "For DRAM's entire history a chip couldn't provide data while it was being refreshed. Now Same Bank Refresh allows data to be accessed in banks that aren't undergoing refresh. This does a lot to improve data communication." So when will this start to show up? Last year an Intel roadmap was leaked to the hobbyist press that showed Intel was planning to move to DDR5 and PCI Express 5 (completely skipping PCIe v4) in 2021. Micron has begun sampling DDR5, Hynix said it plans to begin volume production at the end of this year, and Samsung plans to start DDR5 production next year.

Don’t Leave “Ethical Tech” Out of Your Digital Transformation Plan

Few organizations and their leaders develop an overall approach to the ethical impacts of technology use—at least not at the start of a digital transformation. In a recent study, just 35 percent of respondents said their organization’s leaders spend enough time thinking about and communicating the impact of digital initiatives on society. But in order to be truly savvy in the age of advanced, connected, and autonomous technologies, leaders must think beyond designing and implementing technologically driven capabilities. They should consider how to do so responsibly from the start. At Deloitte, we see a relationship between a company’s digital and technological progress—in other words, its tech savviness—and its focus on various ethical issues related to technology. Our research found that 57 percent of respondents from organizations considered to be “digitally maturing” say their organization’s leaders spend adequate time thinking about and communicating digital initiatives’ societal impact, compared with only 16 percent of respondents from companies in the early stages of their digital transformation.

Duplication, fragmentation hamper interoperability efforts, impact patient safety

Duplicate records might also contain incomplete or outdated information and can affect the quality of care by forcing clinicians to make care decisions without important information such as recent lab results, allergies and current medications. Back in 2019, Verato and AdVault partnered on a cloud-based patient matching platform which aims to expand secure identity matching so care teams have seamless access to medical records. Patient matching specialist Verato, which has also partnered with healthcare IT security specialist Imprivata, is of the belief that alignment of disparate patient record platforms will help eliminate duplicate records, establish more accurate care histories and improve patient safety. In a 2016 Ponemon Institute survey, 86% of respondents said they witnessed a medical error as a direct result of misidentification, and indicated that 35% of all denied claims are due to misidentification, which can cost hospitals up to $1.2 million a year. "Many systems still do not communicate and store data in disjointed architectures and an upsurge of identifiers continues to be created," Doug Brown, managing partner of Black Book, said in a statement.

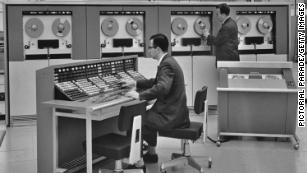

COBOL, COVID-19, and Coping with Legacy Tech Debt

With a history that stretches back three generations, COBOL was developed for a different breed of compute, Edenfield says. “These were massive machines that did certain things like number crunching,” he says. “It wasn’t fancy.” COBOL was designed to move across multiple machines and frankly to be readable, Edenfield says. “People could learn it quickly and it was easier than an assembly language where you are programming in very cryptic commands.” As new compute demands emerged, programming languages evolved, Edenfield says. Agile development and other modern processes can be more efficient and fundamentally different from how COBOL and other early programming languages were handled. Despite such advances, it is a challenge to escape those legacy roots. “Because COBOL was so prevalent, they can’t get out of it,” he says. “There’s so much of it. It’s running all the backroom, payment processing for all your major financial institutions; all your big companies have it.” It was common for organizations to constantly build up COBOL-based systems for decades, Edenfield says, with the programmers retiring or moving on. “Pretty soon, the people who wrote the systems aren’t there anymore,” he says.

Quote for the day:

"Many people go fishing all of their lives without knowing it is not fish they are after." -- Henry David Thoreau