Years-Long ‘SilentFade’ Attack Drained Facebook Victims of $4M

“Our investigation uncovered a number of interesting techniques used to

compromise people with the goal to commit ad fraud,” said Sanchit Karve and

Jennifer Urgilez with Facebook, in a Thursday analysis unveiled this week at

the Virus Bulletin 2020 conference. “The attackers primarily ran malicious ad

campaigns, often in the form of advertising pharmaceutical pills and spam with

fake celebrity endorsements.” Facebook said that SilentFade was not downloaded

or installed by using Facebook or any of its products. It was instead usually

bundled with potentially unwanted programs (PUPs). PUPs are software programs

that a user may perceive as unwanted; they may use an implementation that can

compromise privacy or weaken user security. In this case, researchers believe

the malware was spread via pirated copies of popular software (such as the

CorelDRAW graphic design software for vector illustration and page

layout, as seen below). Once installed, SilentFade stole Facebook credentials

and cookies from various browser credential stores, including Internet

Explorer, Chromium and Firefox.

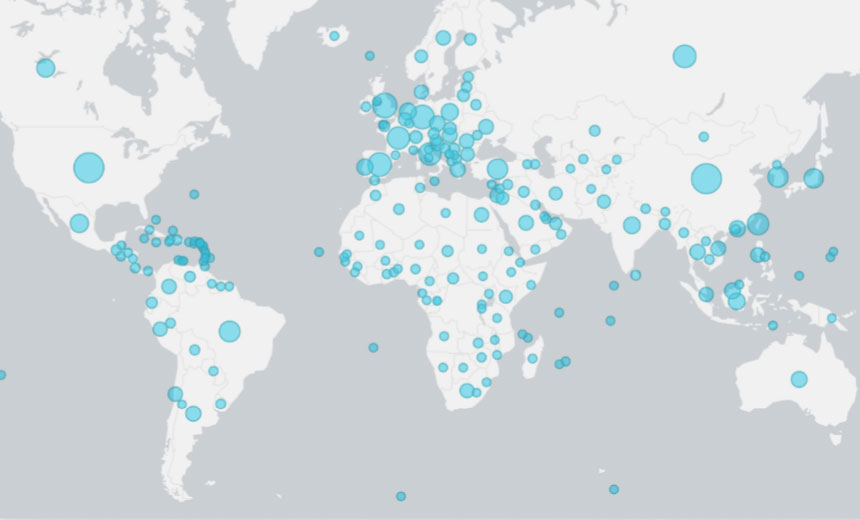

How to be great at people analytics

Most companies still face critical obstacles in the early stages of building

their people analytics capabilities, preventing real progress. The majority of

teams are still in the early stages of cleaning data and streamlining

reporting. Interest in better data management and HR technologies has been

intensive, but most companies would agree that they have a long way to go.

Leaders at many organizations acknowledge that what they call their

“analytics” is really basic reporting with little lasting impact. For example,

a majority of North American CEOs indicated in a poll that their organizations

lack the ability to embed data analytics in day-to-day HR processes

consistently and to use analytics’ predictive power to propel better decision

making. This challenge is compounded by the crowded and fragmented landscape

of HR technology, which few organizations know how to navigate. So, while the

majority of people analytics teams are still taking baby steps, what does it

mean to be great at people analytics? We spoke with 12 people analytics teams

from some of the largest global organizations in various sectors—technology,

financial services, healthcare, and consumer goods—to try to understand what

teams are doing, the impact they are having, and how they are doing it.

6 Data Management Tips for Small Business Owners

You might not have the vast resources and people-power of your larger

competitors, but even small e-commerce organizations can glean useful insights

from data if it is presented in an engaging way. Rather than relying on raw,

potentially overwhelming databases full of indecipherable figures, you should

aim to generate reports which showcase pertinent trends visually. This should

let you analyze information more precisely and without needing to spend hours

sifting through spreadsheets. In addition, data visualization has the benefit

of making it straightforward to share your findings with others, whether or

not they have a background in data science and analysis. A chart or graph can

express everything you need to get across in a presentation about sales

projections, site performance, and customer satisfaction, without needing

lengthy verbal explanations as well. While the biggest scandals involving data

loss and theft tend to hit the headlines whenever they involve major

organizations and internationally recognized brands, that does not mean that

smaller firms are immune from scrutiny in this respect.

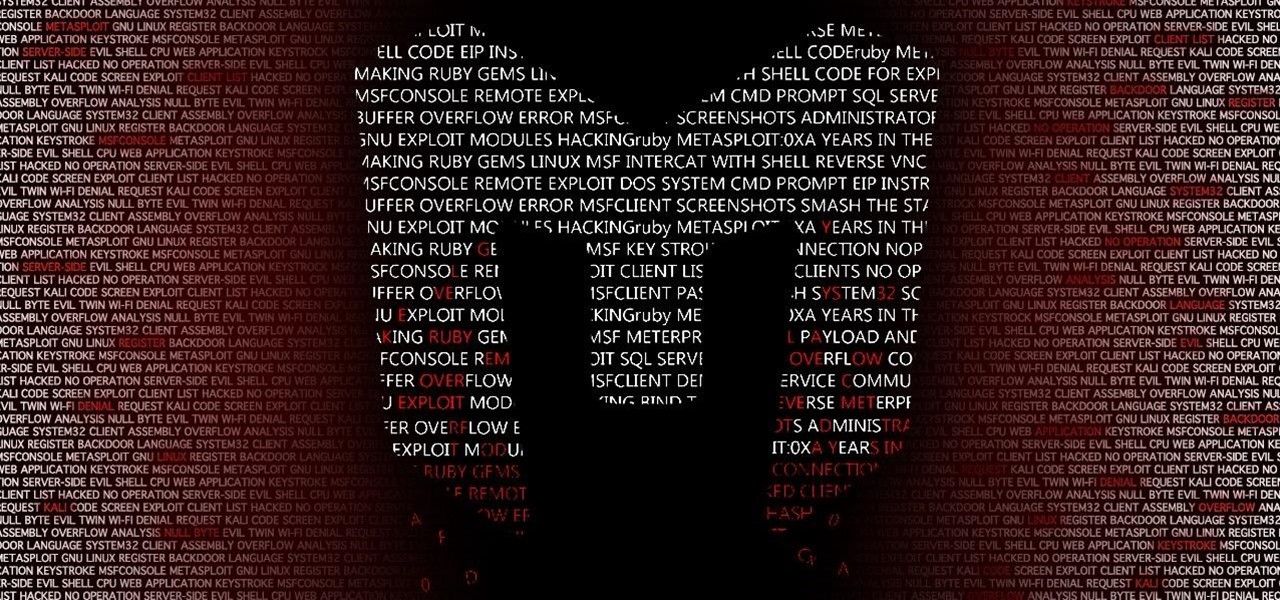

Metasploit — A Walkthrough Of The Powerful Exploitation Framework

If you hack someone without permission, there is a high chance that you will

end up in jail. So if you are planning to learn hacking with evil intentions,

I am not responsible for any damage you cause. All my articles are purely

educational. So, if hacking is bad, why learn it in the first place? Every

device on the internet is vulnerable by default unless someone secures it.

It's the job of the penetration tester to think like a hacker and attack their

organization’s systems. The penetration tester then informs the organization

about the vulnerabilities and advises on patching them. Penetration testing is

one of the highest-paid jobs in the industry. There is always a shortage of

pen-testers since the number of devices on the internet is growing

exponentially. I recently wrote an article on the top ten tools you should

know as a cybersecurity engineer. If you are interested in learning more about

cybersecurity, check out the article here. Right. Enough pep talk. Let’s look

at one of the coolest pen-testing tools in the market — Metasploit. ...

Metasploit is an open-source framework written in Ruby. It is written to be an

extensible framework, so that if you want to build custom features using Ruby,

you can easily do that via plugins.

IoT in Manufacturing: The Success Story Nobody's Talking About

Efficient manufacturing processes rely almost entirely on predictability.

Factory operators need to know how long each step in a process takes, what

resources are needed, and how long the process can operate continuously before

needing breaks for maintenance and other periodic tasks. That overarching need

for predictability makes it difficult for operators to know how the addition

of new equipment might impact output. It also makes them hesitant to make

changes to existing equipment, even if they’re all but certain that the

changes would be an improvement. That brings us to another vital and emerging

use of IoT technology in manufacturing. Factory operators are using the myriad

data streaming from their connected devices to make precise computer models of

their industrial equipment. These digital twins, as they’re known, allow

operators to test any proposed equipment tweaks or replacements to see the

exact effect they’ll have on the output. This helps them to make seamless

upgrades and changes to their processes without fear of upsetting the delicate

balance that ensures predictability. If the question is whether IoT is living

up to its promise and proving useful in manufacturing – the answer is a

resounding yes.
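To make the digital twin idea above a little more concrete, here is a minimal sketch in Python. It is a toy model, not how any real twin is built: the `Station` class, the station names, and the cycle times are all hypothetical, and a real twin would estimate such parameters from the data streaming off connected devices. The only point is that a proposed tweak can be tried on the model before anyone touches the equipment.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Station:
    """One step of a serial production line (hypothetical model)."""
    name: str
    cycle_time_s: float  # seconds needed to process one unit


# Hypothetical line; in practice these values would come from sensor data.
LINE = [
    Station("cutting", 30.0),
    Station("welding", 45.0),
    Station("painting", 40.0),
]


def throughput_per_hour(line):
    """Units per hour for a serial line: the slowest station sets the pace."""
    bottleneck = max(station.cycle_time_s for station in line)
    return 3600.0 / bottleneck


def with_change(line, name, new_cycle_time_s):
    """Return a copy of the line with one station's cycle time replaced."""
    return [
        replace(s, cycle_time_s=new_cycle_time_s) if s.name == name else s
        for s in line
    ]


if __name__ == "__main__":
    print("current:", throughput_per_hour(LINE))
    # Try two proposed upgrades on the model instead of the factory floor.
    print("faster welder:", throughput_per_hour(with_change(LINE, "welding", 36.0)))
    print("faster cutter:", throughput_per_hour(with_change(LINE, "cutting", 20.0)))
```

In this toy model, speeding up the welder helps only until painting becomes the new bottleneck, and speeding up the cutter changes nothing. That is the kind of answer operators want before committing to a change.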

Digital Transformation Can Be Risky. Here’s What You Need To Know

The business mantra “culture eats strategy for breakfast” applies differently

when you’re talking about digital transformation, said Pam Hrubey, managing

director in consulting services at Crowe. For example, an

American-headquartered durable goods company acquired businesses across the

globe. The company needed to upgrade equipment and streamline IT processes,

but it chose to begin the transformation by attempting to align cultures

between the parent company and the businesses abroad. Its initial process led

to discontent among international workers who ended up feeling like outsiders

because they were not made aware that the goal was to sync technology and

processes. “To transform a business practice or to change a business model,

you have to have a robust plan,” Hrubey said. “When you start with culture you

often confuse people if you don’t have a plan in place, if people don’t

understand what change is planned or why a change is necessary.” Companies

also need to understand that a transformation affects the entire organization

and might include stakeholders across departments. “So many different

people in the company need to come together to do it right,” said Czerwinski.

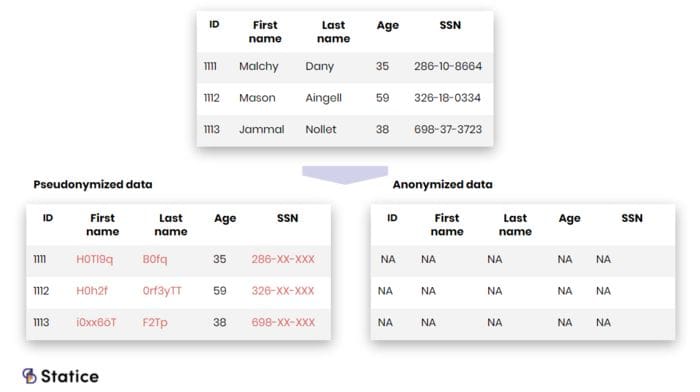

Data Protection Techniques Needed to Guarantee Privacy

Traditionally, a risk hierarchy existed between these two types of attributes.

Direct identifiers were perceived as more “sensitive” than quasi-identifiers.

In many data releases, only the former attributes were subject to some privacy

protection mechanism, while the latter were released in the clear. Such releases

were often followed by prompt re-identification of the supposedly ‘protected’

subjects. It soon became apparent that quasi-identifiers could be just as

‘sensitive’ as direct identifiers. With the GDPR, this notion has finally made

it into law: both types of attributes are put on the same level. Direct identifiers

and quasi-identifiers alike are personal data and present an equally

important privacy breach risk. Nowadays, data protection laws strictly regulate

personal data processing. This makes a strong case for implementing privacy

protection techniques. Indeed, failure to comply exposes companies to severe

penalties. Besides, implementing proper privacy protections might increase

customer trust. In a world plagued by data breaches and privacy

violations, people are increasingly concerned about what happens to their

data. And finally, data breaches targeting personal data are costing companies

money. Personal data remains the most expensive item to lose in a breach.
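The re-identification risk from quasi-identifiers described above can be illustrated with a small check. The sketch below uses k-anonymity, a technique the excerpt does not name, as one common way to measure that risk. The records, field names, and choice of quasi-identifiers are invented for illustration. It counts how many records share each combination of quasi-identifier values; a group of size 1 means that person is unique in the release even though no direct identifier was published.

```python
from collections import Counter

# Hypothetical released records: direct identifiers (such as names) were
# removed, but the quasi-identifiers were left in the clear.
RECORDS = [
    {"zip": "75001", "birth_year": 1984, "sex": "F", "diagnosis": "asthma"},
    {"zip": "75001", "birth_year": 1984, "sex": "F", "diagnosis": "flu"},
    {"zip": "75002", "birth_year": 1990, "sex": "M", "diagnosis": "diabetes"},
    {"zip": "75003", "birth_year": 1975, "sex": "F", "diagnosis": "flu"},
]

# Assumed quasi-identifiers for this example.
QUASI_IDENTIFIERS = ("zip", "birth_year", "sex")


def group_sizes(records, quasi_identifiers):
    """Count how many records share each combination of quasi-identifier values."""
    return Counter(tuple(r[q] for q in quasi_identifiers) for r in records)


def k_anonymity(records, quasi_identifiers):
    """Return the size of the smallest group; k = 1 means someone is unique."""
    return min(group_sizes(records, quasi_identifiers).values())


def unique_records(records, quasi_identifiers):
    """Return the records whose quasi-identifier combination appears only once."""
    sizes = group_sizes(records, quasi_identifiers)
    return [
        r for r in records
        if sizes[tuple(r[q] for q in quasi_identifiers)] == 1
    ]


if __name__ == "__main__":
    print("k =", k_anonymity(RECORDS, QUASI_IDENTIFIERS))
    for record in unique_records(RECORDS, QUASI_IDENTIFIERS):
        print("unique on quasi-identifiers:", record)
```

Anyone who can link a unique combination to an outside source, such as a public register listing postcode, birth year, and sex, could attach a name to the corresponding record. This is why protecting only the direct identifiers is not enough.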

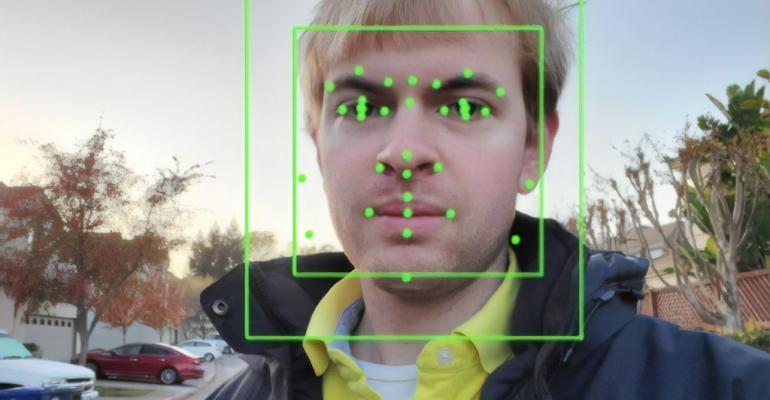

How AI Is Used in Data Center Physical Security Today

"There is a critical need to make full use of the massive amounts of data

being generated by video surveillance cameras and AI-based solutions are the

only practical answer," Memoori managing director James McHale said in a

recent report. Video surveillance cameras generate a massive amount of data,

McHale told DCK, and AI is the only practical way to process it all. AI

systems can also be used to analyze thermal images. "Thermal cameras have been

a significant growth area this year as a direct consequence of the COVID-19

pandemic," he told us. Today, many thermal cameras capture only thermal

information, but customers are increasingly looking for systems with cameras

that can collect both thermal and traditional images and apply neural network

algorithms for processing them. But there's a general lack of understanding

about how to use this technology appropriately for pandemic controls, he

added. Plus, the pandemic is negatively affecting some sectors of the economy,

impacting spending and changing the way that companies buy technology.

"Customers will be demanding more value from their investments and will be

less willing to commit to upfront capital expenditure," he said.

QR Codes: A Sneaky Security Threat

Hacking an actual QR code would require some serious skills to change around

the pixelated dots in the code’s matrix. Hackers have figured out a far easier

method instead. This involves embedding malicious software in QR codes (which

can be generated by free tools widely available on the internet). To an

average user, these codes all look the same, but a malicious QR code can

direct a user to a fake website. It can also capture personal data or install

malicious software on a smartphone that initiates actions like this:

- Add a contact listing: Hackers can add a new contact listing on the user’s phone and use it to launch a spear phishing or other personalized attack.
- Initiate a phone call: By triggering a call to the scammer, this type of exploit can expose the phone number to a bad actor.
- Text someone: In addition to sending a text message to a malicious recipient, a user’s contacts could also receive a malicious text from a scammer.
- Write an email: Similar to a malicious text, a hacker can draft an email and populate the recipient and subject lines. Hackers could target the user’s work email if the device lacks mobile threat protection ...
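A QR code ultimately decodes to a short text payload, and the actions listed above map onto well-known payload prefixes such as `tel:`, `sms:`, `mailto:`, and `MECARD:`. The Python sketch below shows how a scanner could label a payload before acting on it. It is only an illustration: the function name and the category labels are hypothetical, the prefix list is not exhaustive, and it does not decode QR images or judge whether a destination is actually malicious.

```python
from urllib.parse import urlsplit

# Payload prefixes and the action a scanner app may offer for each.
# The action labels are illustrative, not part of any standard.
PREFIX_ACTIONS = (
    ("mecard:", "add_contact"),
    ("begin:vcard", "add_contact"),
    ("tel:", "phone_call"),
    ("sms:", "text_message"),
    ("smsto:", "text_message"),
    ("mailto:", "email"),
)


def classify_payload(payload: str) -> str:
    """Return a coarse label for the action a decoded QR payload would trigger."""
    text = payload.strip()
    lowered = text.lower()
    for prefix, action in PREFIX_ACTIONS:
        if lowered.startswith(prefix):
            return action
    try:
        scheme = urlsplit(text).scheme.lower()
    except ValueError:
        # Malformed URLs are treated as unrecognized text.
        return "unknown"
    if scheme in ("http", "https"):
        return "open_website"
    return "unknown"


if __name__ == "__main__":
    samples = [
        "https://example.com/login",
        "tel:+15550100",
        "SMSTO:+15550100:Hello",
        "mailto:someone@example.com?subject=Invoice",
        "MECARD:N:Doe,John;TEL:+15550100;;",
        "plain text",
    ]
    for sample in samples:
        print(classify_payload(sample), "<-", sample)
```

Showing the user the label and the full payload, then asking for confirmation, is one simple way an app could avoid silently triggering these actions.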

Exploiting enhanced data management to create value in the ‘new normal’

The pandemic has fundamentally changed the way people view, access and

retrieve data. It has also put new burdens on already stretched IT departments

and electronic delivery – now that the footprint of use has extended to

people’s homes. Data management upgrades can deliver significant benefits:

- An investment in advanced data management services offers the opportunity to automate and enhance process and workflow efficiency, eliminating errors and freeing up staff to focus on creating value elsewhere; and
- Machine Learning technologies offer new opportunities to make better use of your data – to implement data copy management now that digital archives have become even more important, apply proper retention strategies, as well as unearth new revenue streams and cost saving opportunities.

... These days, virtually every

human on the planet is taking up data, and the pandemic has made consumption

grow even faster. Every meme or news story shared and every meeting recorded

needs to be stored somewhere. And the larger the army of remote workers

conducting business from their home offices, the greater the data storage capacity

every company will require.

Quote for the day:

"However beautiful the strategy, you should occasionally look at the results." -- Winston Churchill