New Cyber Security Style Guide helps bridge the communication gap

Security without communication is worthless. You can scream yourself blue in the face, but if no one groks what you're saying, you're wasting your time. Information security is an unintuitive discipline, in many ways backwards from how we think about security, power and threats in meatspace. Worse, over the years the security community has developed its own slang that deliberately excludes outsiders. All fields do this, of course, and if infosec were metalworking or plumbing or air traffic control, that would be fine and dandy; ordinary people don't have a pressing need to understand the inner workings of those fields. But the human race has moved online, and information security now affects everyone. It used to be that we lived in the "real world" and "went online." Now we live online and visit the "real world." Soon even that will fade, until the only "real world" left will be quaint amusement parks that offer the unplugged experience.

Companies ready to spend on IT hardware again

Enterprises are undoubtedly moving software applications from “on-premises data centers to the cloud,” but that’s not the whole story, Huberty says. Currently, 21 percent of computing is done in the cloud, a share that is expected to rise to 44 percent by 2021. However, because enterprise cloud plans are beginning to solidify, firms are now ready to upgrade the IT gear they are retaining or expect to need. “They aren’t abandoning on-premises computing. Instead, many are adopting a hybrid IT model in which applications move between a public cloud and their own internal data centers,” she explains. According to Morgan Stanley, other factors contributing to the optimism include more cash being available because of U.S. tax law changes, the advantage of depreciating equipment costs in the first year, and economic growth. A weak dollar and lower memory costs are also helping the shift.

How digital service providers should prepare for the NIS Directive

Last year, the European Commission published a draft implementation regulation for DSPs, which Elizabeth Denham, the UK’s information commissioner, commented on. She criticised “the overly rigid parameters” of the regulation, which “may be undesirable and may lead to a failure to report incidents which nevertheless have a substantial impact on the users of the service and which should, by the nature of the impact, be considered for regulatory action”. The European Commission has since approved the final draft, and the UK government has released the findings of a public consultation on how it should implement and regulate the NIS Directive. IT Governance has also published a compliance guide. Each of these documents will help you understand where the NIS Directive fits into the cyber security landscape. DSPs will have to be particularly organised, as they are expected to define their own information security measures, proportionate and appropriate to the potential risks they face.

CIOs ill-prepared for IT changes to enable digital business transformation

The Hackett Group reported that 64% of respondents lack confidence in their IT organisation’s capability to support transformation execution. This is all the more worrying given Hackett’s analysis, which predicted that in 2018 IT’s workload would grow faster than the number of full-time employees in IT. The Hackett Group suggested this means IT needs a 2% productivity boost, on average, just to keep pace. However, it said the largest percentage increases in workload (5%) and IT staff (4.2%) are happening outside corporate IT; business groups appear to be investing in their own IT capabilities instead. Hackett’s benchmark study said: “Digital transformation goals are at least partial motivators for this, in that IT needs to help business units transform and differentiate customer experiences, locating IT resources closer to the end-customer’s facilities.”
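The link between workload growth, headcount growth and the productivity gain needed to keep pace can be sketched as simple arithmetic. This is our simplification, not Hackett’s methodology: it assumes capacity equals headcount times productivity per head, so the required gain is (1 + workload growth) / (1 + staff growth) - 1. The function name is hypothetical, and plugging in the outside-corporate-IT figures quoted above (5% workload, 4.2% staff) is only illustrative; the excerpt does not give the inputs behind the 2% corporate IT figure.

```python
def required_productivity_gain(workload_growth: float, staff_growth: float) -> float:
    """Per-employee productivity increase needed for capacity to match workload.

    Simplifying assumption: capacity = headcount * productivity per head.
    Growth rates are fractions, e.g. 0.05 for 5%.
    """
    if staff_growth <= -1:
        raise ValueError("staff_growth must be greater than -100%")
    return (1 + workload_growth) / (1 + staff_growth) - 1


# Outside corporate IT, per the figures above: workload +5%, staff +4.2%.
gain = required_productivity_gain(0.05, 0.042)
print(f"{gain:.2%}")  # 0.77%
```

Under this model, headcount growing almost as fast as workload leaves only a small gap to close, which is why a workload that outpaces a flat or slow-growing corporate IT headcount translates directly into a productivity target.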

The Irrational Exuberance That is Blockchain

In 2017, we saw some evolution on that front as blockchain platforms such as Hyperledger Fabric announced new versions closer to enterprise use and Ethereum progressed towards making these solutions perform and scale to suit enterprise needs. However, the exuberance has also led to new levels of hucksterism. For example, we have seen companies with dubious blockchain abilities add blockchain to their name or business to try to increase their stock price. In response, the U.S. Securities and Exchange Commission (SEC) said it will crack down on such companies. It is critical at this stage in blockchain’s evolution that the hype is recognized and that the emergent nature of the technology and its capabilities are clearly understood. ... Gartner does not expect large returns on blockchain until 2025. This means that companies today will have to try different blockchain projects to determine whether blockchain holds value for them, that is, whether it will bring new revenue possibilities, cost savings or improvements in their customers’ user experience.

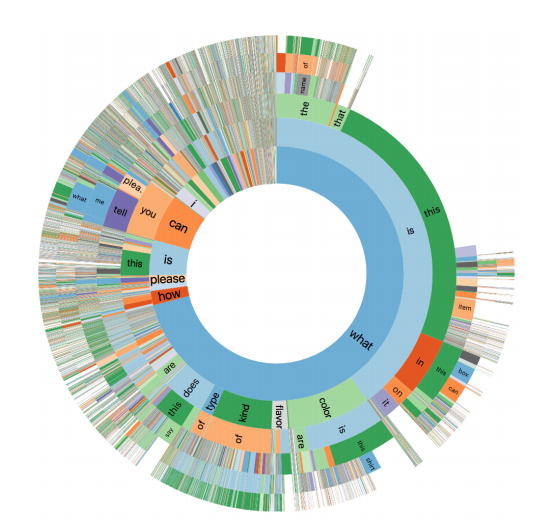

AI Is Now Analyzing Candidates' Facial Expressions During Video Job Interviews

Have you ever lied during a job interview? Most of us have, at least a little. But next time around, artificial intelligence may be watching your face's every move, assessing the honesty of your answers as well as your emotions in general. It may also try to determine whether your personality is a good fit for the job. ... Applicants, who often find the company's job opportunities through Facebook or LinkedIn, can skip uploading their résumés and simply use their LinkedIn profiles if they wish. They then spend about 20 minutes playing a dozen neuroscience-based games intended to evaluate personality traits, such as whether they embrace or avoid risk, and to see if they are a good fit for the particular job. Then they complete a video interview with preset questions, which they can do on a smartphone or tablet as well as a computer. That's where AI comes in, measuring their facial expressions to capture their moods and further assess their personality traits.

6 Experts Discuss How AI Will Change The Future Of Wall Street (Part 1)

The technology behind AI has been around for more than 40 years, but for AI to work, one needs two other ingredients: massive computing power at a reasonable price and massive amounts of data to train the AI. ... The biggest issue is the aversion of asset owners to “black box” strategies. Many consider AI to be another version of algorithmic trading (to some extent this is true), and algorithmic strategies have not performed well in the past. While investors are comfortable with AI playing an important role in many parts of their lives, they seem to prefer human judgment to AI when it comes to the investment process. Another potential obstacle is that an AI approach to trading requires a whole new organizational structure for trading operations. While it is desirable to put discretionary traders in silos to reduce groupthink and correlations among traders, this approach will backfire when applied to AI trading, which requires a team effort to test thousands of strategies in order to pick the best.

HSBC ready to do live trade finance transactions on blockchain

It’s worth noting, however, that the technology is still a long way from commercial use, for HSBC at least. As well as developing the platform and the solution, a network must be in place so that the full transaction can be completed on the blockchain, which means on-boarding other banks, regulators, customs and all parts of the trade cycle. “We see that developing throughout the year so that in 2019, around the same time, we should be in a position to have both the network of banks, corporates and others, and the app ready to use on a wider scale,” Kroeker said. Meanwhile, the bank is hoping that its adventures in blockchain will leave it well-placed to cater for the “digital natives” in Asean, which is projected to be one of the world’s growth hubs for digital services over the coming years. The press conference was called to discuss the bank’s digital agenda in the region, which is shaping up to be an online battleground in the years to come.

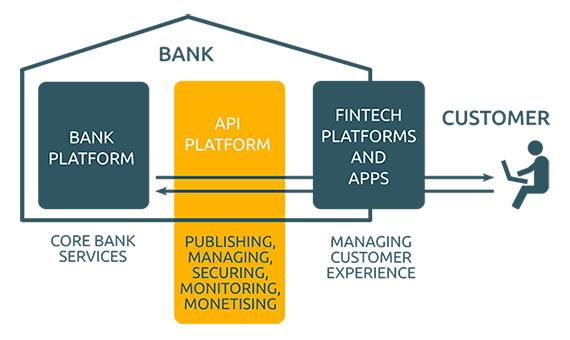

10 Common Mistakes To Avoid In FinTech Software Development

Financial Technology, or FinTech, is a relatively new aspect of the financial industry that focuses on applying technology to improve financial activities. This has the potential to open the doors to new kinds of applications and services for customers, as well as more competitive financial technology. However, as with any new technology, there are pitfalls lurking. In other software domains, such as end-user web apps or mobile applications, a bug may just lead to annoyed users; in the wrong piece of FinTech software, a bug can result in hundreds of millions of dollars lost. Below are some of the most common mistakes we see in software projects in general, and in FinTech software development in particular, that you should watch out for when launching into the FinTech sector.
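One classic way a small bug in financial software turns into real money lost is representing currency with binary floating point. As an illustration of our own (not necessarily one of the article’s ten mistakes): most decimal fractions such as 0.10 cannot be stored exactly as floats, so sums drift, whereas Python’s standard `decimal` module keeps exact base-10 values. The helper names and the rounding policy (`ROUND_HALF_EVEN` to whole cents) are assumptions; real systems follow their own regulatory or contractual rounding rules.

```python
from decimal import Decimal, ROUND_HALF_EVEN


def total_float(amounts: list[float]) -> float:
    # Binary floating point cannot represent most decimal fractions exactly,
    # so a small rounding error can accumulate with every addition.
    return sum(amounts)


def total_decimal(amounts: list[str]) -> Decimal:
    # Building Decimal values from strings keeps exact base-10 amounts.
    total = sum((Decimal(a) for a in amounts), Decimal("0"))
    # Round to cents using banker's rounding (an assumed policy).
    return total.quantize(Decimal("0.01"), rounding=ROUND_HALF_EVEN)


print(total_float([0.1] * 10))       # 0.9999999999999999
print(total_decimal(["0.10"] * 10))  # 1.00
```

A one-in-a-quadrillion error looks harmless, but repeated across millions of transactions, or compared with `==` in reconciliation logic, it is exactly the kind of quiet defect that costs money.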

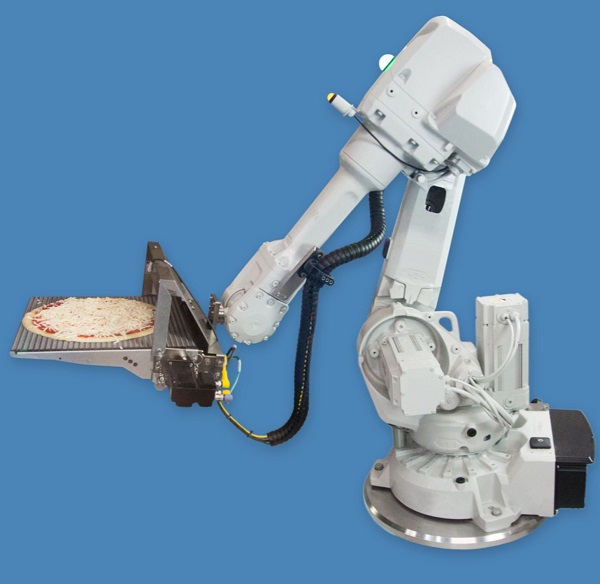

The future of IoT device management

One potential vision for the future of consumer IoT (one that might be a lot more appealing to consumers) involves IoT devices whose identity and firmware are managed using a standardized process, entirely independently of the application-layer service. When you buy a connected consumer IoT device, you should be able to securely associate that device’s identity with your personal identity and securely manage its software and firmware using a familiar, standardized workflow supported by all device vendors. This means that any consumer IoT device should be easy to associate with any consumer IoT gateway that supports its protocols, and should be able to reach the device vendor’s management service. You then need a way to associate that device with any provider of application-layer services that you choose. When you sign up for an application-layer service, you should be able to easily allow the application to discover the relevant IoT devices associated with your identity and provision them for use.
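The separation described above can be sketched as a small in-memory model. Everything here is hypothetical: the class names (`Device`, `IdentityRegistry`), the method names and the single-owner rule are our assumptions for illustration, not a standard or any vendor’s API. The sketch binds a device identity to a user identity, lets only the owner manage firmware (independently of any application), and lets an application discover and be provisioned with only the devices the user has authorised.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Device:
    device_id: str
    vendor: str
    firmware_version: str
    owner: str | None = None  # user identity this device is bound to


@dataclass
class IdentityRegistry:
    """Hypothetical registry that keeps device identity and firmware
    management separate from application-layer services."""

    devices: dict[str, Device] = field(default_factory=dict)
    # application name -> device ids the owner has authorised it to use
    grants: dict[str, set[str]] = field(default_factory=dict)

    def register(self, device: Device) -> None:
        self.devices[device.device_id] = device

    def claim(self, device_id: str, user: str) -> None:
        # Bind the device's identity to a user's identity (single owner assumed).
        device = self.devices[device_id]
        if device.owner is not None and device.owner != user:
            raise PermissionError(f"{device_id} is already claimed")
        device.owner = user

    def update_firmware(self, device_id: str, user: str, version: str) -> None:
        # Firmware management depends on ownership, not on any application.
        device = self.devices[device_id]
        if device.owner != user:
            raise PermissionError("only the owner can manage firmware")
        device.firmware_version = version

    def discover(self, user: str) -> list[str]:
        # What an application sees when the user signs up: devices bound to them.
        return sorted(d.device_id for d in self.devices.values() if d.owner == user)

    def provision(self, app: str, user: str, device_ids: list[str]) -> None:
        # The owner authorises an application to use selected devices.
        for device_id in device_ids:
            if self.devices[device_id].owner != user:
                raise PermissionError(f"{user} does not own {device_id}")
        self.grants.setdefault(app, set()).update(device_ids)


if __name__ == "__main__":
    registry = IdentityRegistry()
    registry.register(Device("thermo-1", "VendorA", "1.0"))
    registry.register(Device("lock-7", "VendorB", "2.3"))
    registry.claim("thermo-1", "alice")
    registry.claim("lock-7", "alice")
    registry.update_firmware("thermo-1", "alice", "1.1")
    print(registry.discover("alice"))  # ['lock-7', 'thermo-1']
    registry.provision("home-energy-app", "alice", ["thermo-1"])
    print(registry.grants)  # {'home-energy-app': {'thermo-1'}}
```

A real system would replace the dictionaries with vendor management services and authenticated, cryptographically verified claims; the point of the sketch is only that application grants sit on top of, and stay separate from, device ownership and firmware management.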

Quote for the day:

"When people talk, listen completely. Most people never listen." -- Ernest Hemingway