GenAI in healthcare: The state of affairs in India

Currently, the All-India Institute of Medical Sciences (AIIMS) Delhi is the only

public healthcare institution exploring AI-driven solutions. AIIMS, in

collaboration with the Ministry of Electronics & Information Technology and

the Centre for Development of Advanced Computing (C-DAC) Pune, launched the

iOncology.ai platform to support oncologists in making informed cancer treatment

decisions. The platform uses deep learning models to detect early-stage ovarian

cancer, and available data shows this has already improved patient outcomes

while reducing healthcare costs. This is one of the few key AI-driven

initiatives in India. Although AI adoption in the healthcare provider segment is

relatively high at 68%, a large portion of deployments are still in the PoC

phase. What could transform India’s healthcare with Generative AI? What could

help bring care to those who need it most? ... India has tremendous potential in

machine intelligence, especially as we develop our own Gen AI capabilities. In

healthcare, however, the pace of progress is hindered by financial constraints

and a shortage of specialists in the field. Concerns over data breaches and

cybersecurity incidents also contribute to the reluctance to adopt AI.

OWASP Beefs Up GenAI Security Guidance Amid Growing Deepfakes

To help organizations develop stronger defenses against AI-based attacks, the

Top 10 for LLM Applications & Generative AI group within the Open Worldwide

Application Security Project (OWASP) released a trio of guidance documents for

security organizations on Oct. 31. To its previously released AI cybersecurity

and governance checklist, the group added a guide for preparing for deepfake

events, a framework to create AI security centers of excellence, and a curated

database on AI security solutions. ... The trajectory of deepfakes is quite easy

to predict — even if they are not good enough to fool most people today, they

will be in the future, says Eyal Benishti, founder and CEO of Ironscales. That

means that human training will likely only go so far. AI videos are getting

eerily realistic, and a fully digital twin of another person controlled in real

time by an attacker — a true "sock puppet" — is likely not far behind.

"Companies want to try and figure out how they get ready for deepfakes," he

says. "They are realizing that this type of communication cannot be fully trusted

moving forward, which ... will take people some time to realize and adjust." In

the future, since the telltale artifacts will be gone, better defenses are

necessary, Exabeam's Kirkwood says.

Open-source software: A first attempt at organization after CRA

The Cyber Resilience Act was a shock that awakened many people from their

comfort zone: How dare the “technical” representatives of the European Union

question the security of open-source software? The answer is very simple:

because we never told them, and they assumed it was because no one was

concerned about security. ... The CRA requires software with automatic updates

to roll out security updates automatically by default, while allowing users to

opt out. Companies must conduct a cyber risk assessment before a product is

released and throughout 10 years or its expected lifecycle, and must notify

the EU cybersecurity agency ENISA of any incidents within 24 hours of becoming

aware of them, as well as take measures to resolve them. In addition to that,

software products must carry the CE marking to show that they meet a minimum

level of cybersecurity checks. Open-source stewards will have to care about

the security of their products but will not be asked to follow these rules. In

exchange, they will have to improve the communication and sharing of best

security practices, which are already in place, although they have not always

been shared. So, the first action was to create a project to standardize them,

for the entire open-source software industry.

10 ways hackers will use machine learning to launch attacks

Attackers aren’t just using machine-learning security tools to test if their

messages can get past spam filters. They’re also using machine learning to

create those emails in the first place, says Adam Malone, a former EY partner.

“They’re advertising the sale of these services on criminal forums. They’re

using them to generate better phishing emails. To generate fake personas to

drive fraud campaigns.” These services are specifically being advertised as

using machine learning, and it’s probably not just marketing. “The proof is in

the pudding,” Malone says. “They’re definitely better.” ... Criminals are also

using machine learning to get better at guessing passwords. “We’ve seen

evidence of that based on the frequency and success rates of password guessing

engines,” Malone says. Criminals are building better dictionaries to crack

stolen hashes. They’re also using machine learning to identify security

controls, “so they can make fewer attempts and guess better passwords and

increase the chances that they’ll successfully gain access to a system.” ...

The most frightening use of artificial intelligence is the deepfake tools

that can generate video or audio that is hard to distinguish from the real

human. “Being able to simulate someone’s voice or face is very useful against

humans,” says Montenegro.

Breaking Free From the Dead Zone: Automating DevOps Shifts for Scalable Success

If ‘Shift Left’ is all about integrating processes closer to the source code,

‘Shift Right’ offers a complementary approach by tackling challenges that

arise after deployment. Some decisions simply can’t be made early in the

development process. For example, which cloud instances should you use? How

many replicas of a service are necessary? What CPU and memory allocations are

appropriate for specific workloads? These are classic ‘Shift Right’ concerns

that have traditionally been managed through observability and

system-generated recommendations. Consider this common scenario: when

deploying a workload to Kubernetes, DevOps engineers often guess the memory

and CPU requests, specifying these in YAML configuration files before anything

is deployed. But without extensive testing, how can an engineer know the

optimal settings? Most teams don’t have the resources to thoroughly test every

workload, so they make educated guesses. Later, once the workload has been

running in production and actual usage data is available, engineers revisit

the configurations. They adjust settings to eliminate waste or boost

performance, depending on what’s needed. It’s exhausting work and, let’s be

honest, not much fun.
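
The revisit step described above, where requests are adjusted once production usage data is available, can be sketched roughly as follows. This is a minimal illustration and not a method from the article: the function names, the 95th-percentile choice, the 15% headroom, and the sample numbers are all assumptions.

```python
def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list; pct is an integer 1..100."""
    if not samples:
        raise ValueError("need at least one usage sample")
    ordered = sorted(samples)
    # Nearest-rank method: smallest rank covering pct percent of the samples.
    rank = max(1, (pct * len(ordered) + 99) // 100)
    return ordered[rank - 1]


def with_headroom(value, headroom_pct):
    """Add headroom_pct percent to value, rounding up (integer arithmetic)."""
    return (value * (100 + headroom_pct) + 99) // 100


def recommend_requests(cpu_millicores, memory_mib, pct=95, headroom_pct=15):
    """Suggest CPU and memory requests from observed usage samples.

    Assumed policy (hypothetical): take a high percentile of observed
    usage, then add a safety margin on top of it.
    """
    cpu = with_headroom(percentile(cpu_millicores, pct), headroom_pct)
    mem = with_headroom(percentile(memory_mib, pct), headroom_pct)
    # "m" (millicores) and "Mi" (mebibytes) are Kubernetes quantity suffixes.
    return {"cpu": f"{cpu}m", "memory": f"{mem}Mi"}


# Hypothetical usage samples collected from a running workload.
cpu_samples = [120, 135, 150, 110, 400, 140, 130, 125, 145, 138]
mem_samples = [210, 220, 215, 230, 225, 240, 235, 228, 232, 226]

print(recommend_requests(cpu_samples, mem_samples))
# {'cpu': '460m', 'memory': '276Mi'}
```

With only ten samples, the single 400m spike sets the CPU recommendation, which is why the percentile and the length of the sampling window matter as much as the headroom.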

5 cloud market trends and how they will impact IT

“Capacity growth will be driven increasingly by the even larger scale of those

newly opened data centers, with generative AI technology being a prime reason

for that increased scale,” Synergy Research writes. Not surprisingly, the

companies with the broadest data center footprint are Amazon, Microsoft, and

Google, which account for 60% of all hyperscale data center capacity. And the

announcements from the Big 3 are coming fast and furious. ... “In effect,

industry cloud platforms turn a cloud platform into a business platform,

enabling an existing technology innovation tool to also serve as a business

innovation tool,” says Gartner analyst Gregor Petri. “They do so not as

predefined, one-off, vertical SaaS solutions, but rather as modular,

composable platforms supported by a catalog of industry-specific packaged

business capabilities.” ... There are many reasons for cloud bills increasing,

beyond simple price hikes. Linthicum says organizations that simply “lifted

and shifted” legacy applications to the public cloud, rather than refactoring

or rewriting them for cloud optimization, ended up with higher costs. Many

organizations overprovisioned and neglected to track cloud resource

utilization. On top of that, organizations are constantly expanding their

cloud footprint.

The Modern Era of Data Orchestration: From Data Fragmentation to Collaboration

Data systems have always needed to make assumptions about file, memory, and

table formats, but in most cases, they've been hidden deep within their

implementations. A narrow API for interacting with a data warehouse or data

service vendor makes for clean product design, but it does not maximize the

choices available to end users. ... In a closed system, the data warehouse

maintains its own table structure and query engine internally. This is a

one-size-fits-all approach that makes it easy to get started but can be

difficult to scale to new business requirements. Lock-in can be hard to avoid,

especially when it comes to capabilities like governance and other services

that access the data. Cloud providers offer seamless and efficient

integrations within their ecosystems because their internal data format is

consistent, but this may close the door on adopting better offerings outside

that environment. Exporting to an external provider instead requires

maintaining connectors purpose-built for the warehouse's proprietary APIs, and

it can lead to data sprawl across systems. ... An open, deconstructed system

standardizes its lowest-level details. This allows businesses to pick and

choose the best vendor for a service while having the seamless experience that

was previously only possible in a closed ecosystem.

New OAIC AI Guidance Sharpens Privacy Act Rules, Applies to All Organizations

The new AI guidance outlines five key takeaways that require attention, and

though the term “guidance” is used, some of these constitute expansions of

the application of existing rules. The first of these is that Privacy Act

requirements for personal information apply to AI systems, both in terms of

user input and what the system outputs. ... The second AI guidance takeaway

stipulates that privacy policies must be updated to have “clear and

transparent” information about public-facing AI use. The third takeaway notes

that the generation of images of real people, whether it be due to a

hallucination or intentional creation of something like a deepfake, is also

covered by personal information privacy rules. The fourth AI guidance takeaway

states that any personal information input into AI systems can only be used

for the primary purpose for which it was collected, unless consent is

collected for other uses or those secondary uses can be reasonably expected to

be necessary. The fifth and final takeaway is perhaps a case of burying the

lede; the OAIC simply suggests that organizations not collect personal

information through AI systems at all due to the “significant and complex

privacy risks involved.”

DevOps Moves Beyond Automation to Tackle New Challenges

“The future of DevOps is DevSecOps,” Jonathan Singer, senior product marketing

manager at Checkmarx, told The New Stack. “Developers need to consider

high-performing code as secure code. Everything is code now, and if it’s not

secure, it can’t be high-performing,” he added. Checkmarx is an application

security vendor that allows enterprises to secure their applications from the

first line of code to deployment in the cloud, Singer said. The DevOps

perspective has to be the same as the application security perspective, he

noted. Some people focus on visibility into the environment around the app, but

Checkmarx focuses on visibility into the application’s code and making sure it’s

safe and secure when it’s deployed, he added. “It might look like the security

teams are giving more responsibility to the dev teams, and therefore you need

security people in the dev team,” Singer said. Checkmarx is automating the

heavy mental lifting by prioritizing and triaging scan results. With the

amount of code, especially for large organizations, finding ten thousand

vulnerabilities is fairly common, but they will have different levels of

severity. If a vulnerability is not exploitable, you can knock it out of the

results list. “Now we’re in the noise reduction game,” he said.

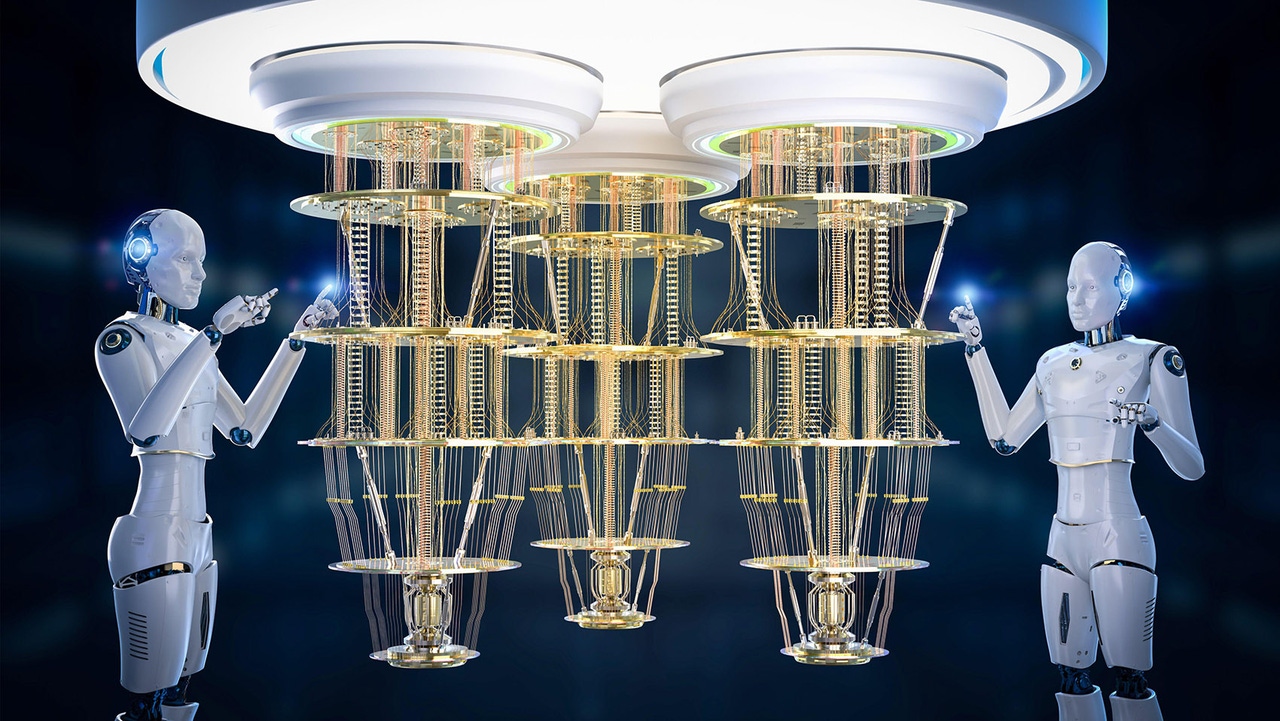

How Quantum Machine Learning Works

While quantum computing is not the most imminent trend data scientists need to

worry about today, its effect on machine learning is likely to be

transformative. “The really obvious advantage of quantum computing is the

ability to deal with really enormous amounts of data that we can't really deal

with any other way,” says Fitzsimons. “We've seen the power of conventional

computers has doubled effectively every 18 months with Moore's Law. With

quantum computing, the number of qubits is doubling about every eight to nine

months. Every time you add a single qubit to a system, you double its

computational capacity for machine learning problems and things like this, so

the computational capacity of these systems is growing double exponentially.”

... Quantum-inspired software techniques can also be used to improve classical

ML, such as tensor networks that can describe machine learning structures and

improve computational bottlenecks to increase the efficiency of LLMs like

ChatGPT. “It’s a different paradigm, entirely based on the rules of quantum

mechanics. It’s a new way of processing information, and new operations are

allowed that contradict common intuition from traditional data science,” says

Orús.
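
The doubling argument in the Fitzsimons quote above can be made concrete with a little arithmetic. The sketch below takes the quote's figures at face value and reads "computational capacity" as the size of the state space, 2^n for n qubits; that reading, the starting count of 50 qubits, and the 9-month doubling period (the upper end of "eight to nine months") are assumptions for illustration, not claims about practical speedups.

```python
def classical_growth(months, doubling_months=18):
    """Relative classical capacity after `months`, doubling every 18 months."""
    return 2 ** (months / doubling_months)


def qubit_count(months, start_qubits, doubling_months=9):
    """Qubit count after `months`, assuming it doubles every `doubling_months`."""
    return start_qubits * 2 ** (months / doubling_months)


months = 36          # three years
start_qubits = 50    # hypothetical starting point

classical = classical_growth(months)
qubits = qubit_count(months, start_qubits)

# State-space size is 2**n, so its base-2 logarithm is simply n.
# The exponent itself grows exponentially: that is the "double
# exponential" in the quote.
extra_doublings = qubits - start_qubits

print(f"classical capacity: x{classical:.0f}")
print(f"qubits: {start_qubits} -> {qubits:.0f}")
print(f"state space: 2^{start_qubits} -> 2^{qubits:.0f} "
      f"({extra_doublings:.0f} further doublings)")
```

Under these assumptions, three years gives a 4x gain on the classical side, while the qubit count goes from 50 to 800 and the state space from 2^50 to 2^800.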

Quote for the day:

"I find that the harder I work, the

more luck I seem to have." -- Thomas Jefferson