The race is on for quantum-safe cryptography

Existing encryption systems rely on specific mathematical equations that

classical computers aren’t very good at solving — but quantum computers may

breeze through them. As a security researcher, Chen is particularly interested

in quantum computing’s ability to solve two types of math problems: factoring

large numbers and solving discrete logarithms. Pretty much all internet security

relies on this math to encrypt information or authenticate users in protocols

such as Transport Layer Security. These math problems are simple to perform in

one direction, but difficult in reverse, and thus ideal for a cryptographic

scheme. “From a classical computer’s point of view, these are hard problems,”

says Chen. “However, they are not too hard for quantum computers.” In 1994, the

mathematician Peter Shor outlined in a paper how a future quantum computer could

solve both the factoring and discrete logarithm problems, but engineers are

still struggling to make quantum systems work in practice. While several

companies like Google and IBM, along with startups such as IonQ and Xanadu, have

built small prototypes, these devices cannot perform consistently, and they have

not conclusively completed any useful task beyond what the best conventional

computers can achieve.
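
The "simple in one direction, difficult in reverse" property described above can be sketched with toy numbers. This is only an illustration under stated assumptions: the small prime modulus, the base, the secret exponent and both function names are hypothetical choices made for the example, and the brute-force searches stand in for classical attacks in general. Real systems use numbers thousands of bits long, and nothing here implements Shor's algorithm.

```python
# Toy illustration only: real deployments use moduli of 2048 bits or more.

def brute_force_discrete_log(base: int, target: int, modulus: int) -> int:
    """Find x with base**x % modulus == target by trying every exponent in turn."""
    value = 1
    for exponent in range(modulus):
        if value == target:
            return exponent
        value = (value * base) % modulus
    raise ValueError("no exponent found")


def trial_division_factor(n: int) -> tuple[int, int]:
    """Return a nontrivial factor pair of n by trying every candidate divisor."""
    candidate = 2
    while candidate * candidate <= n:
        if n % candidate == 0:
            return candidate, n // candidate
        candidate += 1
    raise ValueError("n is prime")


# Forward direction: modular exponentiation and multiplication are fast.
modulus, base, secret = 1_000_003, 2, 123_456   # hypothetical toy values
public = pow(base, secret, modulus)
product = 10_007 * 10_009

# Reverse direction: the search loops grow with the size of the numbers,
# which is what makes the problems impractical at real key sizes.
recovered = brute_force_discrete_log(base, public, modulus)
factors = trial_division_factor(product)
```

At these sizes both searches finish almost instantly; the point is that their running time grows with the magnitude of the numbers, while the forward operations stay cheap.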

Lightbend’s Akka Serverless PaaS to Manage Distributed State at Scale

Up to now, serverless technology has not been able to support stateful,

high-performance, scalable applications that enterprises are building today,

Murdoch said. Examples of such applications include consumer and industrial IoT,

factory automation, modern e-commerce, real-time financial services, streaming

media, internet-based gaming and SaaS applications. “Stateful approaches to

serverless application design will be required to support a wide range of

enterprise applications that can’t currently take advantage of it, such as

e-commerce, workflows and anything requiring a human action,” said William

Fellows, research director for cloud native at 451 Research. “Serverless

functions are short-lived and lose any ‘state’ or context information when they

execute.” Lightbend, with Akka Serverless, has addressed the challenge of

managing distributed state at scale. “The most significant piece of feedback

that we’ve been getting from the beta is that one of the key things that we had

to do to build this platform was to find a way to be able to make the data be

available in memory at runtime automatically, without the developer having to do

anything,” Murdoch said.

Can We Balance Accuracy and Fairness in Machine Learning?

While challenges like these often sound theoretical, they already affect and

shape the work that machine learning engineers and researchers

produce. Angela Shi looks at a practical application of this conundrum when

she explains the visual representation of bias and variance in bulls-eye

diagrams. Taking a few steps back, Federico Bianchi and Dirk Hovy’s article

identifies the most pressing issues the authors and their colleagues face in the

field of natural language processing (NLP): “the speed with which models are

published and then used in applications can exceed the discovery of their risks

and limitations. And as their size grows, it becomes harder to reproduce these

models to discover those aspects.” Federico and Dirk’s post stops short of

offering concrete solutions—no single paper could—but it underscores the

importance of learning, asking the right (and often most difficult) questions,

and refusing to accept an untenable status quo. If what inspires you to take

action is expanding your knowledge and growing your skill set, we have some

great options for you to choose from this week, too.

The secret of making better decisions, faster

While agility might be critical for sporting success, that doesn't mean it's

easily achieved. Filippi tells ZDNet he's spent many years building a strong

team, with great heads of department who are empowered to make big calls. "Most

of the time you trust them to get on with it," he says. "I'm more of an

orchestrator – you cannot micromanage a race team because there's just too much

going on. The pace and the volume of work being achieved every week is just

mind-blowing." Hackland has similar experiences at Williams F1. Employees are

empowered to take decisions and their confidence to make those calls in the

factory or out on the track is a crucial component of success. "The engineer

who's sitting on the pit wall doesn't have to ask the CIO if we should pit," he

says. "The decisions that are made all through the organisation don't feed up to

one single individual. Everyone is allowed to make decisions up or down the

organisation." As well as being empowered to make big calls, Hackland says a

no-blame culture is critical to establishing and supporting decentralised

decision making in racing teams.

How to avoid the ethical pitfalls of artificial intelligence and machine learning

Disconnects also exist between key functional stakeholders required to make

sound holistic judgements around ethics in AI and ML. “There is a gap between

the bit that is the data analytics AI, and the bit that is the making of the

decision by an organisation. You can have really good technology and AI

generating really good outputs that are then used really badly by humans, and as

a result, this leads to really poor outcomes,” says Prof. Leonard. “So, you have

to look not only at what the technology in the AI is doing, but how that is

integrated into the making of the decision by an organisation.” This problem

exists in many fields. One field in which it is particularly prevalent is

digital advertising. Chief marketing officers, for example, determine marketing

strategies that depend on advertising technology, which is

in turn managed by a technology team. Separate from this is data privacy, which is

managed by a different team, and Prof. Leonard says these teams don’t

speak the same language as each other in order to arrive at a strategically

cohesive decision.

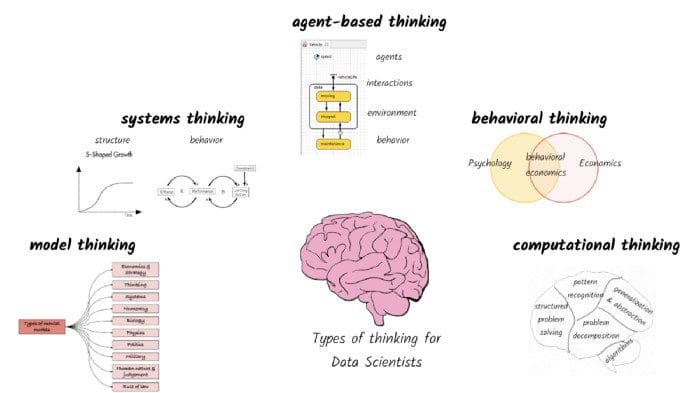

Five types of thinking for a high performing data scientist

As data scientists, the first and foremost skill we need is to think in terms of

models. In its most abstract form, a model is any physical, mathematical, or

logical representation of an object, property, or process. Let’s say we want to

build an aircraft engine that will lift heavy loads. Before we build the

complete aircraft engine, we might build a miniature model to test the engine

for a variety of properties (e.g., fuel consumption, power) under different

conditions (e.g., headwind, impact with objects). Even before we build a

miniature model, we might build a 3-D digital model that can predict what will

happen to the miniature model built out of different materials. ... Data

scientists often approach problems with cross-sectional data at a point in time

to make predictions or inferences. Unfortunately, given the constantly changing

context around most problems, very few things can be analyzed statically. Static

thinking reinforces the ‘one-and-done’ approach to model building that is

misleading at best and disastrous at its worst. Even simple recommendation

engines and chatbots trained on historical data need to be updated on a regular

basis.

Double Trouble – the Threat of Double Extortion Ransomware

Over the past 12 months, double extortion attacks have become increasingly

common as their ‘business model’ has proven effective. The data center giant

Equinix was hit by the Netwalker ransomware. The threat actor behind that attack

was also responsible for the attack against K-Electric, the largest power

supplier in Pakistan, demanding $4.5 million in Bitcoin for decryption keys and

to stop the release of stolen data. Other companies known to have suffered such

attacks include the French system and software consultancy Sopra Steria; the

Japanese game developer Capcom; the Italian liquor company Campari Group; the US

military missile contractor Westech; the global aerospace and electronics

engineering group ST Engineering; travel management giant CWT, which paid $4.5M in

Bitcoin to the Ragnar Locker ransomware operators; business services giant

Conduent; even soccer club Manchester United. Research shows that in Q3 2020,

nearly half of all ransomware cases included the threat of releasing stolen

data, and the average ransom payment was $233,817 – up 30% compared to Q2 2020.

And that’s just the average ransom paid.

Evolution of code deployment tools at Mixpanel

Manual deploys worked surprisingly well while we were getting our services up

and running. More and more features were added to mix to interact not just with

k8s but also other GCP services. To avoid dealing with raw YAML files directly,

we moved our k8s configuration management to

Jsonnet. Jsonnet allowed us to add templates

for commonly used paradigms and reuse them in different deployments. At the same

time, we kept adding more k8s clusters. We added more geographically distributed

clusters to run the servers handling incoming data to decrease latency perceived

by our ingestion API clients. Around the end of 2018, we started evaluating a

European Data Residency product. That required us to deploy another full copy of

all our services in two zones in the European Union. We were now up to 12

separate clusters, and many of them ran the same code and had similar

configurations. While manual deploys worked fine when we ran code in just two

zones, it quickly became infeasible to keep 12 separate clusters in sync

manually. Across all our teams, we run more than 100 separate services and

deployments.
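
The idea of reusable templates shared across many clusters can be sketched in a few lines. The excerpt names Jsonnet as the actual tool; the Python below is only a stand-in to show the pattern, and the cluster names, replica counts, service name and image path are all hypothetical.

```python
import json


def deployment(name, image, replicas=2):
    """Reusable template that returns a Kubernetes Deployment manifest as a dict."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
        },
    }


# Hypothetical per-cluster overrides; every cluster shares the same template.
CLUSTERS = {
    "us-central-a": {"replicas": 4},
    "eu-west-a": {"replicas": 2},
}


def render_all(name, image):
    """Render one manifest per cluster from the shared template."""
    return {
        cluster: deployment(name, image, **overrides)
        for cluster, overrides in CLUSTERS.items()
    }


manifests = render_all("ingestion-api", "example.invalid/ingestion-api:1.0")
rendered = json.dumps(manifests["eu-west-a"], indent=2)
```

Keeping per-cluster differences in one small table, rather than in separate hand-edited YAML files, is what makes it practical to keep many clusters in sync.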

When physics meets financial networks

Generally, physics and financial systems are not easily associated in people's

minds. Yet, principles and techniques originating from physics can be very

effective in describing the processes taking place on financial markets.

Modeling financial systems as networks can greatly enhance our understanding of

phenomena that are relevant not only to researchers in economics and other

disciplines, but also to ordinary citizens, public agencies and governments. The

theory of Complex Networks represents a powerful framework for studying how

shocks propagate in financial systems, identifying early-warning signals of

forthcoming crises, and reconstructing hidden linkages in interbank systems. ...

Here is where network theory comes into play, by clarifying the interplay

between the structure of the network, the heterogeneity of the individual

characteristics of financial actors and the dynamics of risk propagation, in

particular contagion, i.e. the domino effect by which the instability of some

financial institutions can reverberate to other institutions to which they are

connected. The associated risk is indeed "systemic", i.e. both produced and

faced by the system as a whole, as in collective phenomena studied in

physics.
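
The domino effect described above can be illustrated with a very small threshold-cascade model. This is a toy sketch, not the method used by the researchers: the rule that a bank fails once its losses reach its capital, the bank names, and every number below are assumptions made for the example.

```python
def simulate_contagion(exposures, capital, initially_failed):
    """Propagate defaults through an interbank network.

    exposures[lender][borrower] is the amount the lender loses if the
    borrower fails. A bank fails once its accumulated losses reach its capital.
    """
    failed = set(initially_failed)
    losses = {bank: 0.0 for bank in capital}
    frontier = list(failed)
    while frontier:
        defaulter = frontier.pop()
        for lender, loans in exposures.items():
            if lender in failed:
                continue
            losses[lender] += loans.get(defaulter, 0.0)
            if losses[lender] >= capital[lender]:
                failed.add(lender)
                frontier.append(lender)
    return failed


# Hypothetical four-bank network.
capital = {"A": 10.0, "B": 5.0, "C": 5.0, "D": 20.0}
exposures = {
    "B": {"A": 6.0},            # B has lent 6 to A
    "C": {"A": 3.0, "B": 3.0},  # C has lent 3 each to A and B
    "D": {"C": 4.0},            # D has lent 4 to C
}

cascade = simulate_contagion(exposures, capital, {"A"})
```

Here the failure of A wipes out B directly, and C fails only because of the combined losses from A and B, while the better-capitalised D absorbs its loss. That second-round failure is the kind of systemic effect that depends on the structure of the network and not on any single institution.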

What’s Driving the Surge in Ransomware Attacks?

The trend involves a complex blend of geopolitical and cybersecurity factors,

but the underlying reasons for its recent explosion are simple. Ransomware

attacks have gotten incredibly easy to execute, and payment methods are now much

more friendly to criminals. Meanwhile, businesses are growing increasingly

reliant on digital infrastructure and more willing to pay ransoms, thereby

increasing the incentive to break in. As the New York Times notes, for years

“criminals had to play psychological games to trick people into handing over

bank passwords and have the technical know-how to siphon money out of secure

personal accounts.” Now, young Russians with a criminal streak and a cash

imbalance can simply buy the software and learn the basics on YouTube tutorials,

or by getting help from syndicates like DarkSide — who even charge clients a fee

to set them up to hack into businesses in exchange for a portion of the

proceeds. The breach of the education publisher involving the false pedophile

threat was a successful example of such a criminal exchange. Meanwhile, Bitcoin

has made it much easier for cybercriminals to collect on their schemes.

Quote for the day:

"To make a decision, all you need is

authority. To make a good decision, you also need knowledge, experience, and

insight." -- Denise Moreland
