4 tips to help data scientists maximise the potential of AI and ML

With machine learning, business process scalability has advanced by leaps and bounds, but it’s important not to get side-tracked by that, according to Edell. Instead, focus on the things that are going wrong rather than attempting to improve the things that are already working. “The most common mistake really anyone can make when building an ML solution is to lose sight of the problem they are trying to solve,” he said. “As such, we can spend a lot of time making the tech better, but forgetting why we’re using the tech in the first place. For example, we may spend a lot of time and money improving the accuracy of a face recognition engine from 92pc to 95pc, when we could have spent that time improving what happens when the face recognition is wrong, which might bring more value to the customer than an incremental accuracy improvement.” The potential that emerging technologies have for overcoming challenges in data science, no matter the industry, is monumental. But for client- and consumer-facing sectors, the needs of customers should still come first.

Velocity and Better Metrics: Q&A with Doc Norton

First of all, as velocity is typically story points per iteration and story points are abstract and estimated by the team, velocity is highly subject to drift. Drift is the accumulation of subtle changes over time. You don’t usually notice them in the small, but compare over a wider time horizon and it is glaringly obvious. Take a team that knows they are supposed to increase their velocity over time. Sure enough, they do. And we can probably see that they are delivering more value. But how much more? How can we be sure? In many cases, if you take a set of stories from a couple of years ago and ask this team to re-estimate them, they’ll give you an overall number higher than the original estimates. My premise is that this is because our estimates often drift higher over time. The bias toward larger estimates isn’t noticeable from iteration to iteration, but it is noticeable over quarters or years. You can use reference stories to help reduce this drift, but I don’t know if you can eliminate it. Second, even if you could prove that estimates didn’t drift at all, you’re still only measuring one dimension: rate of delivery.
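One way to put a number on the re-estimation experiment is to compare a team's original estimates for a set of old stories with its fresh estimates for the same stories. The sketch below is an illustration, not a method from the interview: the function names and story-point values are hypothetical, and dividing current velocity by the ratio assumes the drift is roughly uniform across story sizes.

```python
from __future__ import annotations


def drift_ratio(original_points: list[int], reestimated_points: list[int]) -> float:
    """Return total re-estimated points divided by total original points.

    A ratio above 1.0 suggests the team now sizes the same work larger
    than it did when the stories were first estimated.
    """
    if len(original_points) != len(reestimated_points):
        raise ValueError("both lists must describe the same stories")
    if sum(original_points) == 0:
        raise ValueError("original estimates must not sum to zero")
    return sum(reestimated_points) / sum(original_points)


def drift_adjusted_velocity(current_velocity: float, ratio: float) -> float:
    """Express today's velocity in the 'old' point scale (assumes uniform drift)."""
    return current_velocity / ratio


# Hypothetical data: three stories from two years ago, sized then and now.
original = [2, 3, 5]
reestimated = [3, 5, 7]

ratio = drift_ratio(original, reestimated)
print(ratio)                               # 1.5
print(drift_adjusted_velocity(45, ratio))  # 30.0
```

In this made-up case an apparent velocity of 45 corresponds to about 30 points of "old" work. Even a drift-corrected number still measures only rate of delivery.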

'Graboid' Cryptojacking Worm Spreads Through Containers

This is the first time the researchers have seen a cryptojacking worm spread through containers in the Docker Engine (Community Edition). While the worm isn't sophisticated in its tactics, techniques or procedures, it can be repurposed by the command-and-control server to run ransomware or other malware, the researchers warn. The Unit 42 research report did not note how much damage Graboid has caused so far or if the attackers targeted a particular sector. "If a more potent worm is ever created to take a similar infiltration approach, it could cause much greater damage, so it's imperative for organizations to safeguard their Docker hosts," the Unit 42 report notes. "Once the [command-and-control] gains a foothold, it can deploy a variety of malware," Jay Chen, senior cloud vulnerability and exploit researcher at Palo Alto Networks, tells Information Security Media Group. "In this specific case, it deployed this worm, but it could have potentially leveraged the same technique to deploy something more detrimental. It's not dependent on the worm's capabilities."

Data Literacy—Teach It Early, Teach It Often Data Gurus Tell Conference Goers

No one can understand everything, he said. That’s why the “real sweet spot” is the communication between data scientists and experts in various fields of inquiry to determine what they are seeking from the data and how it can be used. There’s also an ethical component: ensuring that the data are not used to arrive at false conclusions. Sylvia Spengler, the National Science Foundation’s program director for Information and Intelligent Systems, said that solving today’s big questions requires an interdisciplinary approach across all the sciences. “We need a deep integration across a lot of disciplines,” she said. “This is made for data science and data analytics. But it puts a certain edge on actually being able to deal with the kinds of data coming at you because they are so incredibly different.” Spengler said this integration can only happen through teams of people working on it. “You have to be able to collaborate. Those soft skills are critical. It’s not just your brains but your empathy because it makes you capable of taking multiple perspectives,” she said.

Linux security hole: Much sudo about nothing

At first glance the problem looks like a bad one. With it, a user who is allowed to use sudo to run commands as any other user except root can still use it to run commands as root. For this to happen, several things must be set up just wrong. First, the sudoers configuration must give a user the right to use sudo but not the privilege of using it to run commands as root. That can happen when you want a user to be able to run specific commands that they wouldn't normally be able to use. Next, sudo must be configured to allow the user to run commands as an arbitrary user via the ALL keyword in a Runas specification. That last has always been a stupid idea. As the sudo manual points out, "using ALL can be dangerous since in a command context, it allows the user to run any command on the system." In all my decades of working with Linux and Unix, I have never known anyone to set up sudo with ALL. That said, if you do have such an inherently broken system, it's then possible to run commands as root by specifying the user ID -1 or 4294967295 (the same value, read as an unsigned 32-bit number). Thus, if the ALL keyword is listed first in the Runas specification, an otherwise restricted sudo user can then run root commands.
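The two odd-looking user IDs work because Linux stores user IDs in an unsigned 32-bit `uid_t`, so -1 and 4294967295 are the same bit pattern, and the system calls sudo uses to switch users (such as `setresuid`) treat that value as "leave this ID unchanged". Since sudo is already running as root, "unchanged" means root. The Python sketch below models that interaction; `runas_check_allows` is a hypothetical, heavily simplified stand-in for a `(ALL, !root)` rule, not sudo's actual code.

```python
from __future__ import annotations

import ctypes

# Assumption: uid_t is a 32-bit unsigned integer, as it is on Linux.
UID_T_MAX = 2**32 - 1


def as_uid_t(value: int) -> int:
    """Store a (possibly negative) Python int the way a 32-bit uid_t would."""
    return ctypes.c_uint32(value).value


def runas_check_allows(target_uid: int, denied_uids: frozenset[int] = frozenset({0})) -> bool:
    """Hypothetical simplified model of an '(ALL, !root)' Runas rule:
    any target is allowed unless it literally matches a denied UID."""
    return target_uid not in denied_uids


def uid_after_switch(current_uid: int, target_uid: int) -> int:
    """Model of setresuid(): (uid_t)-1 means 'do not change this ID'."""
    requested = as_uid_t(target_uid)
    if requested == UID_T_MAX:
        return current_uid
    return requested


SUDO_UID = 0  # sudo is setuid root, so it starts out running as UID 0

for requested in (1000, 0, -1, 4294967295):
    allowed = runas_check_allows(requested)
    result = uid_after_switch(SUDO_UID, requested) if allowed else None
    print(requested, allowed, result)
```

The last two rows are the bug in miniature: the rule's check sees a UID that is not 0, yet the switch leaves the process running as UID 0.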

News from the front in the post-quantum crypto wars with Dr. Craig Costello

One good thing was that this notion of public key cryptography, it arrived, I suppose, just in time for the internet. In the seventies, public key cryptography was invented, and that’s the celebrated Diffie-Hellman protocol that allows us to do key exchange. Public key cryptography is kind of a notion that sits above however you try to instantiate it. So public key cryptography is a way of doing things, and how you choose to do them, or what mathematical problem you might base it on, I guess, is how you instantiate public key cryptography. So initially, the first of the two proposals back in the seventies was based on what we call the discrete log problem in finite fields. When you compute powers of numbers, and you do them in a finite field, you might start with a number, compute some massive power of it, and then give someone the residue, or the remainder, of that number and say, what was the power that I raised this number to in the group? And the other problem is factorization, so integer factorization.
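To make the two problems concrete, here is a toy Python sketch of Diffie-Hellman key exchange over the integers modulo a prime, followed by brute-force attacks on the discrete log and factorization problems. The parameters (p = 23, g = 5, and the modulus 3233 = 53 × 61) are textbook-sized and chosen only so the attacks finish instantly; real deployments use numbers hundreds of digits long, where these naive searches are hopeless.

```python
from __future__ import annotations


def diffie_hellman_demo(p: int, g: int, a: int, b: int) -> tuple[int, int, int, int]:
    """Toy Diffie-Hellman: returns (A, B, secret_alice, secret_bob)."""
    A = pow(g, a, p)             # Alice publishes g^a mod p
    B = pow(g, b, p)             # Bob publishes g^b mod p
    secret_alice = pow(B, a, p)  # (g^b)^a mod p
    secret_bob = pow(A, b, p)    # (g^a)^b mod p
    return A, B, secret_alice, secret_bob


def brute_force_discrete_log(g: int, h: int, p: int) -> int | None:
    """Find the smallest x with g^x = h (mod p) by trying every exponent."""
    value = 1
    for x in range(p - 1):
        if value == h:
            return x
        value = (value * g) % p
    return None


def trial_division(n: int) -> list[int]:
    """Factor n by dividing out small candidate divisors in turn."""
    factors = []
    d = 2
    while d * d <= n:
        while n % d == 0:
            factors.append(d)
            n //= d
        d += 1
    if n > 1:
        factors.append(n)
    return factors


A, B, s_alice, s_bob = diffie_hellman_demo(p=23, g=5, a=4, b=3)
print(A, B, s_alice, s_bob)                # 4 10 18 18
print(brute_force_discrete_log(5, A, 23))  # 4, recovering Alice's secret exponent
print(trial_division(3233))                # [53, 61]
```

Both parties arrive at the same secret, and an eavesdropper who can solve the discrete log (trivially, at this size) recovers it too.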

Beamforming explained: How it makes wireless communication faster

The mathematics behind beamforming is very complex (the Math Encounters blog has an introduction, if you want a taste), but the application of beamforming techniques is not new. Any form of energy that travels in waves, including sound, can benefit from beamforming techniques; they were first developed to improve sonar during World War II and are still important to audio engineering. But we're going to limit our discussion here to wireless networking and communications. By focusing a signal in a specific direction, beamforming allows you to deliver higher signal quality to your receiver (which in practice means faster information transfer and fewer errors) without needing to boost broadcast power. That's basically the holy grail of wireless networking and the goal of most techniques for improving wireless communication. As an added benefit, because you aren't broadcasting your signal in directions where it's not needed, beamforming can reduce interference experienced by people trying to pick up other signals. The limitations of beamforming mostly involve the computing resources it requires; there are many scenarios where the time and power required by beamforming calculations end up negating its advantages.
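The core idea can be seen without the full mathematics. The Python sketch below computes the normalized array factor of a hypothetical uniform linear antenna array: each element's signal gets a phase shift chosen so that waves toward the steering angle add up in phase, while other directions partly or fully cancel. The element count, half-wavelength spacing and angles are illustrative assumptions, and the model ignores real-world effects such as mutual coupling and multipath.

```python
import cmath
import math


def array_factor(num_elements: int, spacing_wavelengths: float,
                 steer_deg: float, look_deg: float) -> float:
    """Normalized gain (0..1) of a uniform linear array steered to steer_deg,
    evaluated in the direction look_deg (angles measured from broadside)."""
    k_d = 2 * math.pi * spacing_wavelengths  # phase per element per unit of sin(angle)
    steer = math.sin(math.radians(steer_deg))
    look = math.sin(math.radians(look_deg))
    total = sum(
        # The steering weight exp(-j*k*d*n*sin(steer)) cancels the path phase
        # exp(+j*k*d*n*sin(look)) exactly when look == steer.
        cmath.exp(1j * k_d * n * (look - steer))
        for n in range(num_elements)
    )
    return abs(total) / num_elements


for angle in (-60, -30, 0, 15, 30, 45):
    print(f"{angle:>4} deg: {array_factor(8, 0.5, 30, angle):.3f}")
```

The gain peaks at 1.0 in the steered direction and drops elsewhere. In a real system the weights must be recomputed as receivers move, which hints at why the computing cost can become significant.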

A First Look at Java Inline Classes

The goal of inline classes is to improve the affinity of Java programs to modern hardware. This is to be achieved by revisiting a very fundamental part of the Java platform: the model of Java's data values. From the very first versions of Java until the present day, Java has had only two types of values: primitive types and object references. This model is extremely simple and easy for developers to understand, but it can have performance trade-offs. For example, dealing with arrays of objects involves unavoidable indirections, and this can result in processor cache misses. Many programmers who care about performance would like the ability to work with data that uses memory more efficiently. Better layout means fewer indirections, which means fewer cache misses and higher performance. Another major area of interest is removing the overhead of a full object header for each data composite, that is, flattening the data. As it stands, each object in Java's heap has a metadata header as well as the actual field content. In HotSpot, this header is essentially two machine words: mark and klass.
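A back-of-the-envelope calculation shows why flattening matters. The Python sketch below estimates the memory used by an array of small two-`int` objects (a hypothetical `Point` with fields `x` and `y`) stored as references to separate heap objects versus stored flattened, with field values laid out contiguously. It assumes 8-byte machine words, a two-word header, uncompressed 8-byte references and 8-byte object alignment; real HotSpot layouts vary (for example with compressed pointers), and the array's own header is ignored.

```python
WORD_BYTES = 8                 # assumption: 64-bit machine words
HEADER_BYTES = 2 * WORD_BYTES  # mark word + klass word
REFERENCE_BYTES = WORD_BYTES   # assumption: uncompressed object references
ALIGNMENT = 8                  # assumption: objects padded to 8-byte boundaries


def align_up(size: int, alignment: int = ALIGNMENT) -> int:
    """Round size up to the next multiple of alignment."""
    return (size + alignment - 1) // alignment * alignment


def reference_array_bytes(count: int, field_bytes: int) -> int:
    """Array of references: one pointer per slot plus a separate heap object each."""
    per_object = align_up(HEADER_BYTES + field_bytes)
    return count * (REFERENCE_BYTES + per_object)


def flattened_array_bytes(count: int, field_bytes: int) -> int:
    """Flattened array: just the field values, stored back to back."""
    return count * field_bytes


POINT_FIELD_BYTES = 2 * 4  # hypothetical Point { int x; int y; }
n = 1_000_000
print(reference_array_bytes(n, POINT_FIELD_BYTES))  # 32000000
print(flattened_array_bytes(n, POINT_FIELD_BYTES))  # 8000000
```

Under these assumptions the flattened layout is a quarter of the size, and iterating over it walks consecutive memory instead of following one pointer per element.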

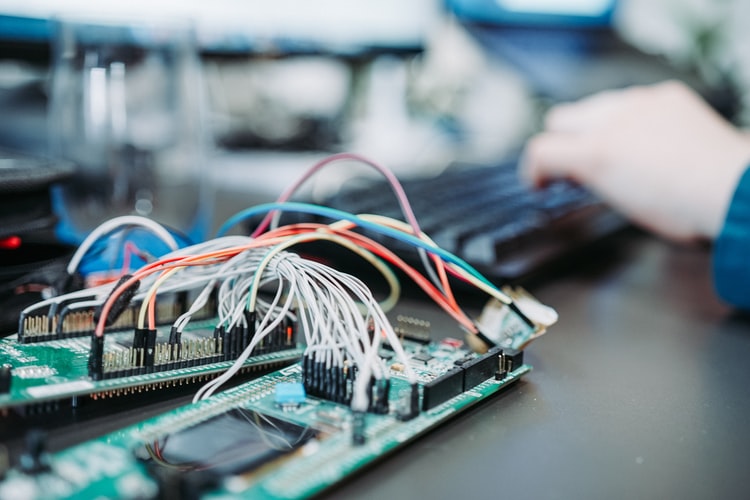

Developer Skills for Successful Enterprise IoT Projects

The first step of any successful IoT project is to define the business goals and build a proof-of-concept system to estimate whether those goals are reachable. At this stage, you need only a subset of the skills listed in this article. But once a project is successful enough to move beyond the proof-of-concept level, the required breadth and depth of the team increase. Often, individual developers possess several of the skills. Sometimes, each skill on the list will require its own team. The number of people needed depends both on the complexity of the project and on its success. More success usually means more work, but also more revenue that can be used to hire more people. Most IoT projects include some form of custom hardware design. The complexity of the hardware varies considerably between projects. In some cases, it is possible to use hardware modules and reference designs, for which a basic electrical engineering education is enough. More complex projects need considerably more experience and expertise. To build Apple-level hardware, you need an Apple-level hardware team and an Apple-level budget.

IoT in Vehicles: The Trouble With Too Much Code

The threat and risk surface of internet of things devices deployed in automobiles is increasing exponentially, which poses risks for the coming wave of autonomous vehicles, says Campbell Murray of BlackBerry. To give a sense of how complicated today's cars are, Murray notes that while an Airbus A380 runs around four million lines of code, an average mid-size car has 100 million lines of code. Statistically, that means there are likely many software defects. Using a metric of 0.015 bugs per line of code, cars with that much code could have as many as 1.5 million bugs, Murray says in an interview with Information Security Media Group. Reducing those code bases is one way to reduce the risks, he says. "It's absolutely astonishing - the number of vulnerabilities that could exist in there," Murray says. Meanwhile, enterprises deploying IoT need to remember the principles of safe computing: assigning the least privilege necessary, using two-factor authentication and strong access controls, he adds.
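The defect estimate is a single multiplication of code size by defect density. The Python sketch below redoes it with integer arithmetic, expressing the quoted 0.015 bugs per line as 15 defects per thousand lines (KLOC) to avoid floating-point rounding. Applying the same density to the A380 figure is purely illustrative and not a claim made in the interview.

```python
def estimated_defects(lines_of_code: int, defects_per_kloc: int) -> int:
    """Expected defect count = lines of code x defects per 1,000 lines."""
    return lines_of_code * defects_per_kloc // 1000


DENSITY_PER_KLOC = 15  # 0.015 bugs per line, as quoted

car = estimated_defects(100_000_000, DENSITY_PER_KLOC)
a380 = estimated_defects(4_000_000, DENSITY_PER_KLOC)
print(f"mid-size car: {car:,}")                        # mid-size car: 1,500,000
print(f"A380 (same density, illustrative): {a380:,}")  # A380 (same density, illustrative): 60,000
```

Because the estimate scales linearly with code size, shrinking a code base lowers the expected defect count in direct proportion.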

Quote for the day:

"Leading people is like cooking. Don't stir too much; It annoys the ingredients_and spoils the food" -- Rick Julian

