Why you need to get your team up to speed on privacy-aware development

Prepare for privacy policies to reign supreme in programming; they already figure prominently in legislation. Take right-to-be-forgotten clauses, which are included in both the GDPR and the California Consumer Privacy Act (CCPA). The clauses require companies to quickly identify data on their systems that is covered by the privacy regulations and delete the data after the prescribed period of time has passed. All data being held by companies, even machine-learning data, may be impacted by the policy, said RiskIQ's Hunt. Sometimes these records can be spread across databases, data warehouses, backups, and spreadsheets, he said. "If the user's information was used to train a machine-learning model to serve them ads, that model may or may not need to be retrained if a single user requests to be forgotten," Hunt said. "But what if 650,000 users file requests? If they represent a similar demographic, the model would certainly need to be retrained in order to truly 'forget' about those users."
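As a rough illustration of the "identify the data first" step, the sketch below scans several stores for records that belong to users who have asked to be forgotten. Everything in it is hypothetical: the store names, the `record_id` and `user_id` fields, and the `locate_user_records` helper are assumptions made for the example, and real systems would query each store through its own interface.

```python
def locate_user_records(stores, user_ids):
    """Map each store name to the record IDs belonging to the given users.

    `stores` is a hypothetical in-memory stand-in for databases, data
    warehouses, backups, and spreadsheets: {store_name: [record, ...]}.
    """
    wanted = set(user_ids)
    hits = {}
    for store_name, records in stores.items():
        matching = [r["record_id"] for r in records if r["user_id"] in wanted]
        if matching:
            hits[store_name] = matching
    return hits


# Hypothetical sample data: one user's records spread across several stores.
stores = {
    "crm_database": [
        {"record_id": "c1", "user_id": "u1"},
        {"record_id": "c2", "user_id": "u2"},
    ],
    "data_warehouse": [{"record_id": "w1", "user_id": "u1"}],
    "nightly_backup": [{"record_id": "b1", "user_id": "u3"}],
    "sales_spreadsheet": [
        {"record_id": "s1", "user_id": "u1"},
        {"record_id": "s2", "user_id": "u3"},
    ],
}

hits = locate_user_records(stores, ["u1"])
for store_name, record_ids in hits.items():
    print(store_name, record_ids)
```

The inventory this produces is only the starting point; whether a trained model also has to be rebuilt is the harder question Hunt raises.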

Automation improves firewall migration and network security

When it comes to firewall migration, do you migrate every object and rule, or do you try to clean up the rule sets as you go? If you make changes, there is a high probability you will make mistakes that result in application failures or insufficient security, where you block too much or don't block enough, respectively. The least disruptive mechanism involves converting rules from one vendor's configuration to another vendor's configuration while applying some simple heuristics to identify orphaned objects. ... The first step for the automation process was to extract the objects, rules and interface information from the source firewall. We decided to import the extracted information into Excel spreadsheets so data items could be identified by spreadsheet column. While the conversion to Excel was a manual process, we only needed to do it once and finished relatively quickly. ... The scripts were a big win. It was easily a 20-to-1 ratio of manual effort versus running the script.
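The excerpt does not say which heuristics the scripts applied, so the following is only a minimal sketch of one plausible check: flagging objects that no rule references. The dictionary layout, field names, and the `find_orphaned_objects` helper are assumptions for illustration; in the workflow described above, the same data would come from the extracted spreadsheet columns.

```python
def find_orphaned_objects(objects, rules):
    """Return the names of objects that are not referenced by any rule.

    `objects` maps an object name to its address; each rule lists the
    object names it uses as sources and destinations (hypothetical layout).
    Nested object groups are not resolved in this sketch.
    """
    referenced = set()
    for rule in rules:
        referenced.update(rule["sources"])
        referenced.update(rule["destinations"])
    return sorted(set(objects) - referenced)


# Hypothetical extract from the source firewall.
objects = {
    "web-srv": "10.0.0.10",
    "db-srv": "10.0.0.20",
    "old-ftp": "10.0.0.99",
}
rules = [
    {"name": "allow-web", "sources": ["any"], "destinations": ["web-srv"]},
    {"name": "web-to-db", "sources": ["web-srv"], "destinations": ["db-srv"]},
]

orphans = find_orphaned_objects(objects, rules)
print(orphans)  # ['old-ftp']
```

Reporting orphans rather than deleting them automatically keeps the migration conservative, which fits the goal of minimizing disruption.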

High performance computing: Do you need it?

With compute-demanding use cases rapidly becoming the norm, UNC-Chapel Hill began working with Google Cloud and simulation and analysis software provider Techila Technologies to map out its journey into cloud HPC. The first step after planning was a proof of concept evaluation. "We took one of the researchers on campus who was doing just a ton of high memory, interactive compute, and we tried to test out his workload," Roach says. The result was an unqualified success, he notes. "The researcher really enjoyed it; he got his work done." The same task could have taken up to a week to run on the university's on-premises HPC cluster. "He was able to get a lot of his run done in just a few hours," Roach says. On the other side of the Atlantic, the University of York also decided to take a cloud-based HPC approach. James Chong, a Royal Society Industry Fellow and a professor in the University of York's Department of Biology, notes that HPC is widely used by faculty and students in science departments such as biology, physics, chemistry and computer science, as well as in linguistics and several other disciplines.

Alibaba’s Chairman Daniel Zhang: “Data is the Petroleum, Computing Power is the Engine”

According to Qi, the chip enables optimization for computer vision applications including classification, object detection and segmentation. For instance, on the Taobao app, there is an option named “photograph and search” that allows users to take photos of whatever product they see and search for similar items in the app. Altogether, 1 billion new photos are added to the image gallery each day. The recognition process took as long as one hour on a traditional GPU, whereas Hanguang 800 completes the task in only five minutes. The applications of AI range from the municipal government level to enterprises and ordinary consumers. Among them, one of the most talked about has been the city brain, first implemented around three years ago in Hangzhou. According to Zeng Zhenyu, VP of Alibaba Cloud Intelligence and expert on Alibaba’s industrial brain and city brain, “The city brain is built on top of the Alibaba Apsara Operating System, which provides a city-level data middle station. ...”

First off, to take advantage of the latency benefits 5G opens up, compute resources have to be closer to the user, whether that’s you, me, an industrial robot or a security camera; that’s just physics. Second, in order to use data to initiate an action, you need the ability to conduct real-time data analysis. But with these edge investments, what’s the business case? “Let’s look at how we monetize 5G and edge,” Chan said. She gave the example of a smart venue, which she characterized as a “fertile ground” for innovation that also brings a global reach as “sports transcend all cultures.” For example, Intel worked with Arizona State University on an IoT project. Sensors collected a variety of metrics for retailers in the venue. The learning: people in the cheap seats spend more money per person, not just on tickets but on things like food and merchandise, than people who buy premium seats. “That kind of data is very valuable for people in that micro-ecosystem.” From LTE to 5G, Chan laid out a proof of concept Intel worked on in conjunction with the National Hockey League.

Africa Data Centres believes this is the way of the future, because we can offer services in all the countries in which we are present, to any international customer who wants to come to Africa. We can offer them the same design, the same procedures, the same contracts, which allows them to focus on their growth and development in Africa, in a way that is secure, visible and scalable. Our strategy is not a short-term one. Our aim is to digitalise Africa in the long term, to execute a long-term industrial project, and interconnect our data centres all over the continent. Our strategy is long term, Pan-African, and not to be acquired any time soon. In terms of trends in Africa, Africa Data Centres is looking first to serve clients in important regions. We are also in the process of building a network of data centres in the five leading countries in Africa, which we see as South Africa, Kenya, Egypt, Nigeria, and Morocco. These five are our current focus and will become hubs. The next step is developing a sub-network of edge data centres around those hubs in the countries we see as second tier.

To address the data science skills gap, software developers have created new programs that can perform many of the analytical tasks previously reserved for data scientists. This has turned data science into a commodity and allowed everyday users to become “citizen data scientists” with a little bit of training. But simply having more citizen data scientists running analyses doesn’t necessarily deliver value to the organization. What’s more vital is the ability to approach data and analytics from a business perspective. Bilingual talent can do that. They can turn data into predictive models, and then translate those models within the context of critical decisions and operations, such as for demand forecasting or preventative maintenance on production equipment. Moreover, they can clearly explain the derived insights, ideas, and plans of action to senior executives in a way that they understand. Bilingual talent can make for very inspiring leaders within a manufacturing organization, capable of driving significant positive change.

Good cybersecurity comes from focusing on the right things, but what are they?

“Anyone who has spent a significant amount of time in this industry understands that you can make a positive impact and be successful without sacrificing job security – as long as technology keeps evolving, the threats and vulnerabilities will evolve along with it,” he noted. Demonstrating your own value outside of a crisis is a challenge, but it’s a challenge that every infosec professional should do their best to overcome. One aspect of this is changing the organizations’ mindset regarding security. “Our job is to enable organizations to create value securely and to quantify the risk of the alternative, not to put up obstacles and police our organization,” he added. Another aspect is changing their own mindset, i.e. the tendency to look at cybersecurity as a problem that could be solved if only they could invest more in security products or hire more people. This usually leads to inordinate investment in niche problems and applying outdated solutions to new challenges.

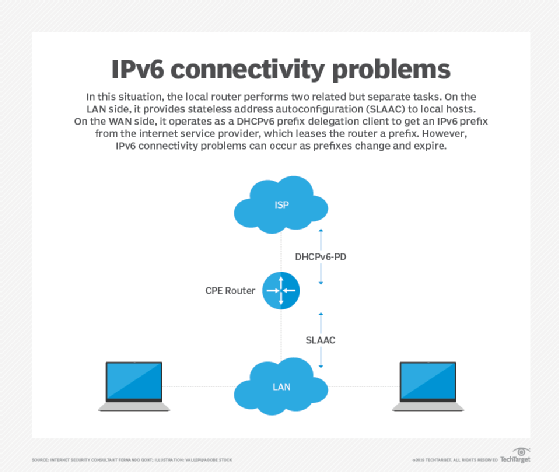

Understanding why IPv6 renumbering problems occur

A network may be renumbered in many different ways without the local routers being able to signal hosts that the existing prefixes should be phased out. ... In this situation, the local router performs two related, but separate functions. On the LAN side, it operates as a SLAAC router, providing network configuration information to the local hosts. On the WAN side, it operates as a DHCPv6-PD (DHCPv6 prefix delegation) client to dynamically obtain an IPv6 prefix from the upstream internet service provider (ISP). The ISP will typically lease the router a /48 prefix, from which the local router will advertise a /64 subprefix on the local network via SLAAC. Once a prefix has been leased by the upstream ISP, the local router will only communicate with the upstream DHCPv6 server when expiration of the leased prefix is imminent, enabling it to renew the lease before it expires. Other than that, there will be no further communication between the DHCPv6-PD client on the router and the DHCPv6-PD server at the ISP. In situations where the local router crashes and reboots, for example, the router will typically request a new IPv6 prefix from the upstream network, and the leased prefix may be different from the previously leased prefix.
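The prefix arithmetic behind this scenario can be sketched with Python's standard `ipaddress` module. The two /48 prefixes below come from the 2001:db8::/32 documentation range and are purely illustrative, as is the assumption that the router advertises the first /64 of whatever it is delegated; the `lan_subnets` helper is hypothetical.

```python
import ipaddress
from itertools import islice


def lan_subnets(delegated_prefix, count=1, new_prefix=64):
    """Return the first `count` subnets of length `new_prefix` carved
    from a delegated prefix (for example, a /48 leased via DHCPv6-PD)."""
    network = ipaddress.IPv6Network(delegated_prefix)
    return list(islice(network.subnets(new_prefix=new_prefix), count))


# Illustrative leases before and after a router crash and reboot.
before = lan_subnets("2001:db8:1::/48")[0]
after = lan_subnets("2001:db8:2::/48")[0]

print(before)           # 2001:db8:1::/64
print(after)            # 2001:db8:2::/64
print(before == after)  # False

# A /48 contains 2**(64 - 48) possible /64 subnets.
print(2 ** (64 - 48))   # 65536
```

Because the two /64 prefixes differ, hosts that configured addresses from the first one are left holding stale addresses, and the rebooted router has no record of the old prefix with which to tell them to stop using it.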

Wearing Two Hats: CISO and DPO

What's it like to serve in the dual roles of CISO and DPO? Gregory Dumont, who has both responsibilities at SBE Global, a provider of repair and after-sales service solutions to the electronics and telecommunication sectors, explains how the roles differ. While a CISO looks at risks from a business, financial and operations point of view, a DPO, or data protection officer - a role required under the European Union's General Data Protection Regulation (GDPR) - looks at the same risks from a data subject's (consumer) point of view, Dumont, who is based in the U.K., explains in an interview with Information Security Media Group. In his DPO role, Dumont says, he considers such questions as: "What are the risks in terms of the loss of privacy and loss of freedom from a data subject's point of view?" As CISO, Dumont faces the challenge of managing multiple vendors under strict GDPR regulations. "We have suppliers; we have customers. Sometimes my customers are also my suppliers. You have to make sure that you have contracts that cover all of these interactions. ..." he says.

Quote for the day:

"Leadership happens at every level of the organization and no one can shirk from this responsibility." -- Jerry Junkins
