Critical PDF Warning: New Threats Leave Millions At Risk

The PDFex vulnerability exploits ageing technology that was not designed with contemporary security considerations in mind; in essence, it takes advantage of the very universality and portability of the PDF format. And while it might seem like a fairly specific attack, most companies rely on secured PDF documents to transmit contracts, board papers, financial documents and transactional data. There is an expectation that such documents are secure. Clearly, they are not. The PDFex attack is designed to exfiltrate the document's content to the attacker when a victim opens it with the correct password, decrypting it in the process. The PDFex researchers, “in cooperation with the national CERT section of BSI,” have contacted all vendors, “provided proof-of-concept exploits, and helped them fix the issues.” Of even more concern are the multiple vulnerabilities that have been disclosed in the popular Foxit Reader PDF application specifically (Foxit claims it has 475 million users). Affecting the Windows versions of Foxit’s reader, the vulnerabilities enable remote code execution on a target machine.
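The PDFex researchers describe two attack classes, "direct exfiltration" and "CBC gadgets". The second works because AES-encrypted PDFs use CBC mode with no integrity protection, so anyone who knows some plaintext can rewrite ciphertext without the key. The sketch below illustrates only that malleability property, not the PDFex exploit itself. As an assumption, it uses a toy 16-byte Feistel block cipher built from HMAC-SHA256 in place of AES (which the Python standard library lacks); the gadget step works the same way for any block cipher in CBC mode.

```python
import hashlib
import hmac
import os

BLOCK = 16  # block size in bytes, matching AES
HALF = BLOCK // 2
ROUNDS = 4


def _xor(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def _f(key: bytes, rnd: int, half: bytes) -> bytes:
    # Toy Feistel round function (hypothetical stand-in for AES): keyed HMAC-SHA256,
    # truncated to half a block
    return hmac.new(key, bytes([rnd]) + half, hashlib.sha256).digest()[:HALF]


def encrypt_block(key: bytes, block: bytes) -> bytes:
    left, right = block[:HALF], block[HALF:]
    for rnd in range(ROUNDS):
        left, right = right, _xor(left, _f(key, rnd, right))
    return left + right


def decrypt_block(key: bytes, block: bytes) -> bytes:
    left, right = block[:HALF], block[HALF:]
    for rnd in reversed(range(ROUNDS)):
        left, right = _xor(right, _f(key, rnd, left)), left
    return left + right


def cbc_encrypt(key: bytes, iv: bytes, plaintext: bytes) -> bytes:
    assert len(plaintext) % BLOCK == 0, "no padding in this sketch"
    prev, out = iv, b""
    for i in range(0, len(plaintext), BLOCK):
        prev = encrypt_block(key, _xor(plaintext[i:i + BLOCK], prev))
        out += prev
    return out


def cbc_decrypt(key: bytes, iv: bytes, ciphertext: bytes) -> bytes:
    prev, out = iv, b""
    for i in range(0, len(ciphertext), BLOCK):
        block = ciphertext[i:i + BLOCK]
        out += _xor(decrypt_block(key, block), prev)
        prev = block
    return out


def cbc_gadget(prev_block: bytes, known: bytes, wanted: bytes) -> bytes:
    # CBC decryption computes P_i = D(C_i) XOR C_{i-1}. Replacing C_{i-1} with
    # C_{i-1} XOR known XOR wanted turns P_i into `wanted`. No key is needed.
    return _xor(_xor(prev_block, known), wanted)


key, iv = os.urandom(32), os.urandom(BLOCK)
known = b"KNOWN-PLAINTEXT!"  # 16 bytes the attacker can predict
ciphertext = cbc_encrypt(key, iv, known)
forged_iv = cbc_gadget(iv, known, b"attacker-chosen!")
print(cbc_decrypt(key, forged_iv, ciphertext))  # b'attacker-chosen!'
```

Tampering with the IV, as here, leaves every other block intact. Altering a ciphertext block in the middle of a message would also garble the plaintext block that it decrypts to. An authenticated mode such as AES-GCM would reject the forged ciphertext outright.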

Much-attacked Baltimore uses ‘mind-bogglingly’ bad data storage

After the attack in May, Baltimore Mayor Bernard C. “Jack” Young not only refused to pay, he also sponsored a resolution, unanimously approved by the US Conference of Mayors in June 2019, calling on cities not to pay ransom to cyberattackers. Baltimore’s budget office has estimated that, due to the costs of remediation and system restoration, the ransomware attack will cost the city at least $18.2 million: $10 million on recovery, and $8.2 million in potential lost or delayed revenue, such as that from property taxes, fines or real estate fees. The Robbinhood attackers had demanded a ransom of 13 Bitcoins, worth about US $100,000 at the time. It may sound like a bargain compared with the estimated cost of not caving to attackers’ demands, but paying a ransom doesn’t ensure that an entity or individual will actually get their data back, nor that the crooks won’t hit up their victim again. The May attack wasn’t the city’s first; nor was it the first time that its IT systems and practices have been criticized in the wake of an attack.
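The budget office's figures can be checked with a few lines of arithmetic. The ratio and the implied Bitcoin price below are derived from the article's rounded numbers; the city did not report them.

```python
recovery_usd = 10_000_000      # recovery costs
lost_revenue_usd = 8_200_000   # potential lost or delayed revenue
ransom_usd = 100_000           # approximate value of the demand at the time
ransom_btc = 13

total_usd = recovery_usd + lost_revenue_usd
print(total_usd)                          # 18200000
print(total_usd // ransom_usd)            # 182
print(round(ransom_usd / ransom_btc, 2))  # 7692.31
```

By these estimates, refusing to pay will cost the city roughly 182 times the ransom demand. The implied price is about $7,700 per bitcoin.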

'The Dukes' (aka APT29, Cozy Bear) threat group resurfaces with three new malware families

According to ESET researchers, three new malware families, dubbed FatDuke, RegDuke and PolyglotDuke, have been linked to a cyber campaign most likely run by APT29. The most recent deployment of these families was tracked in June 2019. The ESET researchers have collectively named all of APT29's activities (past and present) Operation Ghost. The campaign has been running since 2013 and has successfully targeted the Ministries of Foreign Affairs in at least three European countries. The researchers compared the techniques and tactics APT29 used in its recent attacks with those it used in older ones. They found many similarities, including the use of Windows Management Instrumentation for persistence, steganography in images to hide communications with Command and Control (C2) servers, and social media such as Reddit and Twitter to host C2 URLs. The targets of the newer and older attacks were also similar: ministries of foreign affairs.
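The article does not say how the images carry their hidden data. The sketch below shows only the general idea behind image steganography, using a generic least-significant-bit (LSB) scheme that hides bytes in the lowest bit of each pixel value. This is an assumed, textbook technique, not the encoding used by APT29's tools. To keep the example self-contained, it operates on a raw buffer of pixel values rather than an actual image file.

```python
HEADER_BYTES = 4  # 32-bit big-endian length prefix for the hidden message


def _bits(data: bytes):
    # Yield each bit of `data`, most significant bit first
    for byte in data:
        for i in range(7, -1, -1):
            yield (byte >> i) & 1


def embed(pixels: bytes, message: bytes) -> bytearray:
    payload = len(message).to_bytes(HEADER_BYTES, "big") + message
    if len(payload) * 8 > len(pixels):
        raise ValueError("cover image too small for message")
    out = bytearray(pixels)
    for idx, bit in enumerate(_bits(payload)):
        out[idx] = (out[idx] & 0xFE) | bit  # overwrite only the lowest bit
    return out


def _read(pixels: bytes, start_byte: int, count: int) -> bytes:
    # Reassemble `count` bytes from the LSBs, starting at payload byte `start_byte`
    result = bytearray()
    for n in range(start_byte, start_byte + count):
        value = 0
        for i in range(8):
            value = (value << 1) | (pixels[n * 8 + i] & 1)
        result.append(value)
    return bytes(result)


def extract(pixels: bytes) -> bytes:
    length = int.from_bytes(_read(pixels, 0, HEADER_BYTES), "big")
    return _read(pixels, HEADER_BYTES, length)


cover = bytes(range(256)) * 4             # stand-in for 1,024 pixel values
stego = embed(cover, b"c2.example.net")   # hypothetical hidden C2 hostname
print(extract(stego))                     # b'c2.example.net'
print(max(abs(a - b) for a, b in zip(cover, stego)))  # 1
```

Each pixel value changes by at most one, which is why such changes are hard to spot by eye.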

Misconfigured Containers Open Security Gaps

The knowledge gap surrounding security risks, and the blunders it causes, is by far the biggest threat to organizations using containers, observed Amir Jerbi, co-founder and CTO of Aqua Security, a container security software and support provider. "Vulnerabilities in container images -- running containers with too many privileges, not properly hardening hosts that run containers, not configuring Kubernetes in a secure way -- any of these, if not addressed adequately, can put applications at risk," he warned. Most of the security incidents targeting containerized environments over the past 18 months were not sophisticated attacks but simply the result of IT neglecting basic best practices, he noted. ... While most container environments meet basic security requirements, they can also be more tightly secured. It's important to sign your images, suggested Richard Henderson, head of global threat intelligence for security technology provider Lastline. "You should double-check that nothing is running at the root level."
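Henderson's advice to check that nothing runs as root can be partly automated. The sketch below audits a Kubernetes pod manifest in JSON form (for example, the output of `kubectl get pod <name> -o json`) using the standard `securityContext` fields `privileged`, `runAsUser` and `runAsNonRoot`. The function name and the simplified rules are assumptions. Container-level settings override pod-level ones. A container that sets neither `runAsNonRoot` nor `runAsUser` is flagged because it runs as whatever user the image specifies, which is often root.

```python
from __future__ import annotations

import json
import sys


def find_root_risks(pod: dict) -> list[str]:
    """Return human-readable findings for containers that may run as root."""
    spec = pod.get("spec", {})
    pod_ctx = spec.get("securityContext") or {}
    findings = []
    for c in spec.get("initContainers", []) + spec.get("containers", []):
        name = c.get("name", "<unnamed>")
        ctx = c.get("securityContext") or {}
        # Container-level securityContext fields override pod-level ones
        run_as_user = ctx.get("runAsUser", pod_ctx.get("runAsUser"))
        run_as_non_root = ctx.get("runAsNonRoot", pod_ctx.get("runAsNonRoot"))
        if ctx.get("privileged"):
            findings.append(f"{name}: runs privileged")
        if run_as_user == 0:
            findings.append(f"{name}: runAsUser is 0 (root)")
        elif run_as_user is None and not run_as_non_root:
            findings.append(f"{name}: user not pinned; image default may be root")
    return findings


if __name__ == "__main__":
    # Hypothetical usage (pod and script names are placeholders):
    #   kubectl get pod my-pod -o json | python audit_pod.py
    for finding in find_root_risks(json.load(sys.stdin)):
        print(finding)
```

An after-the-fact audit like this complements, rather than replaces, enforcing the same rules when pods are admitted to the cluster.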

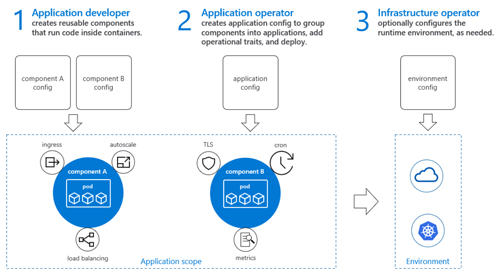

Microsoft and Alibaba Back Open Application Model for Cloud Apps

OAM is a standard for building cloud-native applications using "microservices" and container technologies, with the goal of establishing a platform-agnostic approach. It's kind of like the old "service-oriented architecture" dream, except maybe with less complexity. The OAM standard is currently at the draft stage, and the project is being overseen by the nonprofit Open Web Foundation. Microsoft apparently doesn't think too highly of the Open Web Foundation, since its "goal is to bring the Open Application Model to a vendor-neutral foundation," the announcement explained. Additionally, Microsoft and Alibaba Cloud disclosed that there's an OAM implementation specifically designed for Kubernetes, the open source container orchestration solution for clusters originally fostered by Google. This implementation, called "Rudr," is available at the "alpha" test stage and is designed to help manage applications on Kubernetes clusters. ... Basic OAM concepts can be found in the spec's description, which outlines how the spec will account for the various roles involved in building, running and porting cloud-native apps.

Why AI Ops? Because the era of the zettabyte is coming.

“It’s not just the amount of data; it’s the number of sources the data comes from and what you need to do with it that is challenging,” Lewington explains. “The data is coming from a variety of sources, and the time to act on that data is shrinking. We expect everything to be real-time. If a business can’t extract and analyze information quickly, they could very well miss a market or competitive intelligence opportunity.” That’s where AI comes in. The term was coined in 1956 by computer scientist John McCarthy, who defined AI as “the science and engineering of making intelligent machines.” Lewington thinks the definition of AI is tricky and malleable, depending on who you talk to. “For some people, it’s anything that a human can do. To others, it means sophisticated techniques, like reinforcement learning and deep learning. One useful definition is that artificial intelligence is what you use when you know what the answer looks like, but not how to get there.” No matter what definition you use, AI seems to be everywhere. Although McCarthy and others invented many of the key AI algorithms in the 1950s, the computers of that time were not powerful enough to take advantage of them.

Fake Tor Browser steals Bitcoin from Dark Web users

Purchases in these marketplaces are usually made with cryptocurrency such as Bitcoin (BTC) to mask the transaction and the user's identity. If a user visits these domains and tries to make a purchase by adding funds to their wallet, the script activates and attempts to change the wallet address, so that funds are sent to an attacker-controlled wallet instead. The payload also tries to alter wallet addresses offered by the Russian money transfer service QIWI. "In theory, the attackers can serve payloads that are tailor-made to particular websites. However, during our research, the JavaScript payload was always the same for all pages we visited," the researchers say. It is not possible to say how widespread the campaign is, but the researchers say that Pastebin pages promoting the Trojanized browser have been visited at least half a million times, and known wallets owned by the cybercriminals hold 4.8 BTC, roughly $40,000. ESET believes that the actual value of stolen funds is likely to be higher, given the additional compromise of QIWI wallets.

Server Memory Failures Can Adversely Affect Data Center Uptime

The Intel® MFP deployment improved memory reliability by making predictions from micro-level memory failure information captured from the operating system’s Error Detection and Correction (EDAC) driver, which stores historical memory error logs. Additionally, by predicting potential memory failures before they happen, Intel® MFP can help improve DIMM purchasing decisions: Tencent was able to reduce annual DIMM purchases by replacing only DIMMs with a high likelihood of causing server crashes. Because Intel® MFP is able to predict issues at the memory cell level, that information can be used to avoid using certain cells or pages, a feature known as page offlining, which has become very important for large-scale data center operations. Tencent was therefore able to improve its page offlining policies based on Intel® MFP’s results. Using Intel® MFP, server memory health was analyzed and scored based on cell-level EDAC data.
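Intel MFP's prediction model is not described here, but its raw input, EDAC error counts, is exposed by Linux in sysfs. The sketch below reads per-DIMM corrected (CE) and uncorrected (UE) error counters and applies a simple threshold rule. It assumes a kernel that provides `mcX/dimmY/dimm_ce_count` and `dimm_ue_count` under `/sys/devices/system/edac/mc`. The threshold and the rule are hypothetical placeholders, far cruder than the cell-level scoring the article attributes to Intel MFP.

```python
from __future__ import annotations

from pathlib import Path

EDAC_ROOT = Path("/sys/devices/system/edac/mc")


def read_dimm_errors(root: Path = EDAC_ROOT) -> dict[str, tuple[int, int]]:
    """Map 'mcX/dimmY' to its (corrected, uncorrected) error counts."""
    counts = {}
    for dimm in sorted(root.glob("mc*/dimm*")):
        ce = int((dimm / "dimm_ce_count").read_text().strip())
        ue = int((dimm / "dimm_ue_count").read_text().strip())
        counts[f"{dimm.parent.name}/{dimm.name}"] = (ce, ue)
    return counts


def replacement_candidates(counts: dict[str, tuple[int, int]],
                           ce_threshold: int = 100) -> list[str]:
    """Hypothetical rule: any uncorrected error, or many corrected ones."""
    return [name for name, (ce, ue) in counts.items()
            if ue > 0 or ce >= ce_threshold]


if __name__ == "__main__":
    errors = read_dimm_errors()
    for name, (ce, ue) in errors.items():
        print(f"{name}: CE={ce} UE={ue}")
    print("replace:", replacement_candidates(errors))
```

A rule like this only reacts to errors already logged. The point of a predictive approach such as the one described above is to flag DIMMs before they reach that stage.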

Three Keys To Delivering Digital Transformation

More digitally mature organisations are beginning to view digital transformation as more than an internal technology infrastructure upgrade, and more than an opportunity to move costly in-house capabilities to the cloud or shift sales and marketing to online multi-channel provision. The focus today is on a more fundamental review of business practices, a realignment of operations toward core values, and a stronger relationship between creators and consumers of services. Within this context, digital modernisation programmes taking place across many organisations are accelerating the digitisation of their core assets, rebalancing spending toward digital engagement channels, fixing flaws in their digital technology stacks, and replacing outdated technology infrastructure with cloud-hosted services. Such programmes are essential for organisations to remain competitive and relevant in a world that increasingly rewards those that can adapt quickly to market changes, raise the pace of new product and service delivery, and maintain tight stakeholder relationships.

Virtual voices: Azure's neural text-to-speech service

Microsoft Research has been working on solving this problem for some time, and the resulting neural network-based speech synthesis technique is now available as part of the Azure Cognitive Services suite of Speech tools. Its new Neural text-to-speech service, hosted in Azure Kubernetes Service for scalability, streams generated speech to end users. Instead of a multi-step pipeline, input text is first passed through a neural acoustic generator to determine intonation and then rendered using a neural voice model in a neural vocoder. The underlying voice model is generated via deep learning techniques, using a large set of sampled speech as the training data. The original Microsoft Research paper on the subject goes into detail on the training methods used, initially using frame error minimization before refining the resulting model with sequence error minimization. Using the neural TTS engine is easy enough. As with all the Cognitive Services, you start with a subscription key and then use it to create a class that calls the text-to-speech APIs.
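The service can also be called over REST rather than through an SDK. The sketch below shows one way to wrap that call in a class, as the paragraph describes. It posts SSML to the regional `cognitiveservices/v1` text-to-speech endpoint and authenticates with the `Ocp-Apim-Subscription-Key` header. The class name `NeuralTtsClient`, the default voice `en-US-GuyNeural` and the output format are assumptions; check them against the current Azure Speech documentation.

```python
from __future__ import annotations

import urllib.request
from xml.sax.saxutils import escape, quoteattr


class NeuralTtsClient:
    """Hypothetical minimal client for the Azure Speech text-to-speech REST API."""

    def __init__(self, subscription_key: str, region: str,
                 voice: str = "en-US-GuyNeural",
                 output_format: str = "riff-24khz-16bit-mono-pcm") -> None:
        self.subscription_key = subscription_key
        self.region = region
        self.voice = voice
        self.output_format = output_format

    @property
    def endpoint(self) -> str:
        return f"https://{self.region}.tts.speech.microsoft.com/cognitiveservices/v1"

    def build_ssml(self, text: str, lang: str = "en-US") -> str:
        # Escape the text so characters like & and < cannot break the SSML document
        return (
            f'<speak version="1.0" xmlns="http://www.w3.org/2001/10/synthesis" '
            f"xml:lang={quoteattr(lang)}>"
            f"<voice name={quoteattr(self.voice)}>{escape(text)}</voice></speak>"
        )

    def build_request(self, text: str) -> urllib.request.Request:
        return urllib.request.Request(
            self.endpoint,
            data=self.build_ssml(text).encode("utf-8"),
            headers={
                "Ocp-Apim-Subscription-Key": self.subscription_key,
                "Content-Type": "application/ssml+xml",
                "X-Microsoft-OutputFormat": self.output_format,
                "User-Agent": "neural-tts-sketch",
            },
            method="POST",
        )

    def synthesize(self, text: str) -> bytes:
        """Send the request and return the audio bytes (makes a network call)."""
        with urllib.request.urlopen(self.build_request(text)) as response:
            return response.read()
```

A call might look like `NeuralTtsClient(key, "westus2").synthesize("Hello")`, with the returned bytes written to a `.wav` file. The region `westus2` is only an example. Keeping SSML construction separate from the network call lets the request be inspected or tested without a subscription key.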

Quote for the day:

"A person must have the courage to act like everybody else, in order not to be like anybody." -- Jean-Paul Sartre
