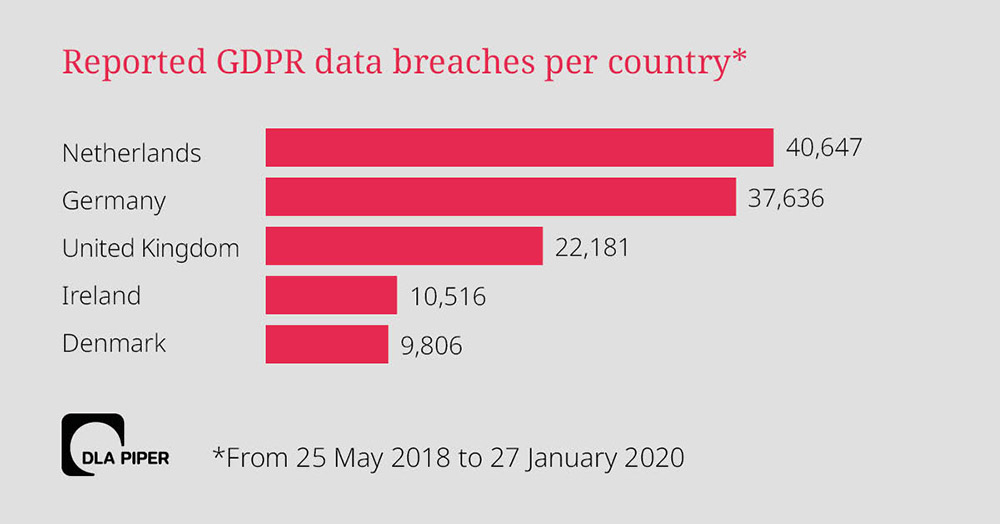

Big GDPR Fines in UK and Ireland: What's the Holdup?

"Although the impact of COVID-19 may explain some of the current, continued delay, quite why what may end up being over a year to resolve these matters since the ICO announced its intentions to fine may leave some wondering whether GDPR enforcement is going as quickly as it should," he says. "In addition, what was also expected to be a showcase for the first significant fines under GDPR in the U.K. may now be a letdown." But Brian Honan, who heads Dublin-based cybersecurity consultancy BH Consulting, says that seeing an extended legal process isn't surprising, especially because GDPR enforcement norms have yet to be set. "The regulator, be that the ICO or any other regulator, has to ensure their case is as legally watertight as it can be before issuing a fine or a penalty. This is very important as organizations, particularly large ones with deep legal resources, will no doubt challenge any penalties imposed on them," he says. "The BA and Marriott cases are a prime example of this," says Honan, who's also a cybersecurity adviser to Europol, the EU's law enforcement intelligence agency. "We also have to take into account many of the regulators have limited resources, and their staff have to ensure they support the rights of all data subjects as best they can."

How to set up a chaos engineering game day

It isn't easy to run a chaos engineering game day. Nonetheless, it should be both fun and instructive. Manifold has hosted several styles of chaos engineering game days. Examples include 30-minute tabletop events as well as multi-hour active failure events that involve the full engineering team. A recent offsite Manifold event involved dice rolls, character classes and prizes for surviving the chaos incident. To maintain a chaos engineering program, employees must enjoy the challenge. "Uncontrolled chaos will happen to your system -- save your seriousness for that," said James Bowes, CTO of Manifold. Role-playing game days are a great way to keep it interesting. With each chaos engineering game day, the organization should build up its resistance to digital failure. "As you proceed, and if you are successful, it should become more difficult to find parts of the system to break," Bowes said. Let the participants know that the goal is to find problems; if they break something, consider that a success. But keep other teams and stakeholders informed.

It’s Time to Rethink Leadership Around Leading for Resilience

If you lead with the assumption that something somewhere and at some time will jump out and attack, you naturally prepare to defend yourself. This preparation doesn’t distract you from moving forward, but it does prove critical when you need to protect yourself. If your entire supply chain is dependent upon the ongoing support of unfriendly or at least unaligned actors and subject to pendulum swings in the political environment, you diversify the supply chain risk. By the same token, minimizing business model risk by diversifying channels is essential. Moving forward, expect every restaurant and food-service operator that is interested in surviving and thriving to develop robust online and takeout systems and internal processes. I’ve lost interest or empathy for the old-line retailers of my childhood now teetering on the brink of the abyss. They’ve had more than two decades to reset for resilience and diversify their business models, develop new channels, embrace technology, and make themselves relevant to consumers. A few have pulled this off and merit kudos. The rest will likely soon join the growing heap of old brands that will be lost to memory in a few short years.

Work in a COVID-19 world: Back to the office won’t mean back to normal

We’re now able to say, “Okay, what might be the new normal beyond this?” We recognize that there will be re-integration back into our worksites done in the current COVID-19 environment. But beyond COVID, post-vaccines, as we think about our business continuity going forward, I do think that we will be moving into, very purposefully, a more hybrid work arrangement. That means new, innovative, in-office opportunities because we still want people to be working face-to-face and have those in-person sort of collisions, as we call them. Those you can’t do at all or they are harder to do on videoconferencing. But there can be a new balance between in-office and remote work -- and fine-tuning our own practices -- that will enable us to be as effective as possible in both environments. So, no doubt, we have already started to undertake that as a post-COVID approach. We are asking what it will look like for us, and then how do we then make sure from a philosophical and a strategy perspective that the right practices are put into place to enable it.

Cloud infrastructure operators should quickly patch VMware Cloud Director flaw

The reason the flaw has not been rated critical is likely because attackers technically need authenticated access to VMware Cloud Director to exploit it. However, according to Citadelo's Zatko, that's not hard to achieve in practice since most cloud providers offer trial accounts to potential customers that involve access to the Cloud Director interface. In most cases there is no real identity verification either for such accounts, so attackers can gain easy access without providing their real identities. This highlights a larger issue with assessing risk based only on vulnerability scores: Severity scores don't always reflect or take into account the real-world conditions in which vulnerable systems might typically exist. Certain configuration or deployment choices can make a vulnerability much easier or harder to exploit than the advisory or the CVSS score suggests. Zatko is concerned that VMware Cloud Director users did not take the issue too seriously based on the advisory alone. More than two weeks after the patches had already been out, his company tested another Fortune 500 organization that used the product and it was still vulnerable.

OpenAI Announces GPT-3 AI Language Model with 175 Billion Parameters

OpenAI made headlines last year with GPT-2 and their decision not to release the 1.5 billion parameter version of the trained model due to "concerns about malicious applications of the technology." GPT-2 is one of many large-scale NLP models based on the Transformer architecture. These models are pre-trained on large text corpora, such as the contents of Wikipedia, using self-supervised learning. In this scenario, instead of using a dataset containing inputs paired with expected outputs, the model is given a sequence of text with words "masked" and it must learn to predict the masked words based on the surrounding context. After this pre-training, the models are then fine-tuned with a labelled benchmark dataset for a particular NLP task, such as question-answering. However, researchers have found that the pre-trained models perform fairly well even without fine-tuning, especially for large models pre-trained on large datasets. Earlier this year, OpenAI published a paper postulating several "laws of scaling" for Transformer models.

10 open source cloud security tools to know

PacBot, also known as Policy as Code Bot, is a compliance monitoring platform. You implement your compliance policies as code, and PacBot checks your resources and assets against those policies. You can use PacBot to automatically create compliance reports and resolve compliance violations with predefined fixes. Use the Asset Group feature to organize your resources within the PacBot UI dashboard, based on certain criteria. For example, you can group all your Amazon EC2 instances by state -- such as pending, running or shutting down -- and view them together. You can also limit the scope of a monitoring action to one asset group, for more targeted compliance. PacBot was created by T-Mobile, which continues to maintain it. It can be used with AWS and Azure. ...

Pacu is a penetration testing toolkit for AWS environments. It provides a red team a series of attack modules that aim to compromise EC2 instances, test S3 bucket configurations, disrupt monitoring capabilities and more. The toolkit currently has 36 plugin modules and includes built-in attack auditing for documentation and test timeline purposes. Pacu is written in Python and maintained by Rhino Security Labs, a penetration testing provider.
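To make the policy-as-code idea described for PacBot more concrete, here is a minimal Python sketch. It is not PacBot's actual API or rule format; the `Instance` and `Policy` types, the `volumes-encrypted` rule and the sample inventory are all hypothetical, chosen only to illustrate grouping assets by state and scoping a check to one group.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Instance:
    """Hypothetical stand-in for a cloud asset record."""
    instance_id: str
    state: str        # e.g. "pending", "running", "shutting-down"
    encrypted: bool


@dataclass(frozen=True)
class Policy:
    """A compliance rule expressed as code: a name plus a pass/fail check."""
    name: str
    check: Callable[[Instance], bool]


def group_by_state(instances):
    """Organize assets into groups keyed by their state."""
    groups = defaultdict(list)
    for inst in instances:
        groups[inst.state].append(inst)
    return dict(groups)


def find_violations(instances, policies):
    """Return (instance_id, policy_name) for every failed check."""
    return [
        (inst.instance_id, policy.name)
        for inst in instances
        for policy in policies
        if not policy.check(inst)
    ]


# Hypothetical inventory and rule set, for illustration only.
INSTANCES = [
    Instance("i-001", "running", True),
    Instance("i-002", "running", False),
    Instance("i-003", "pending", False),
    Instance("i-004", "shutting-down", True),
]
POLICIES = [Policy("volumes-encrypted", lambda inst: inst.encrypted)]

groups = group_by_state(INSTANCES)
# Limit the check to a single asset group, here the running instances.
running_violations = find_violations(groups.get("running", []), POLICIES)
all_violations = find_violations(INSTANCES, POLICIES)
print(running_violations)
```

Keeping each rule as a small named function means the same checks can be run against the whole inventory or against one group without changing the rules themselves.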

NIS security regulations proving effective, but more work to do

The government said it now plans to make some technical changes to the regulatory regime to ensure it remains proportionate and targeted and will be considering a number of amendments to be taken up. These changes are likely to centre on cost recovery, to better enable competent authorities to conduct regulatory activity; the implementation of an improved appeals mechanism; more clarity around the wider enforcement regime; the introduction of support to manage risks to organisational supply chains; the introduction of best-practice sharing; and a number of measures to account for any changes that may be needed, or may become possible, after the end of the Brexit transition period. Kuan Hon, a director in the technical team at law firm Fieldfisher, said that based on the statistics presented in the report, there had clearly been very limited enforcement of the NIS regulations so far, with no fines having been levied, and fewer incidents reported to regulators than DCMS anticipated. However, she added, compliance and incident reporting costs had been much higher than first expected.

Cisco takes aim at supporting SASE

Reed stated that secure access and optimal performance are a must. “The rapid adoption of SD-WAN for connecting to multi-cloud applications provides enterprises with the opportunity to rethink how access and security are managed from campus to cloud to edge,” he stated. “With 60% of organizations expecting the majority of applications to be in the cloud by 2021 and over 50% of the workforce to be operating remotely, new networking and security models such as SASE offer a new way to manage the new normal.” According to Reed, the goal of SASE is to provide secure access to applications and data from on-premises data centers or cloud platforms, with access determined by identities that are defined by combinations of characteristics including individuals, groups, locations, devices, and services. Service edge refers to global points of presence (PoP), IaaS, or colocation facilities where local traffic from branches and endpoints is secured and forwarded to the appropriate destination without first traveling through corporate data centers. By delivering security and networking services together from the cloud, organizations will be able to securely connect any user or device to any application and optimize user experience, Reed stated.

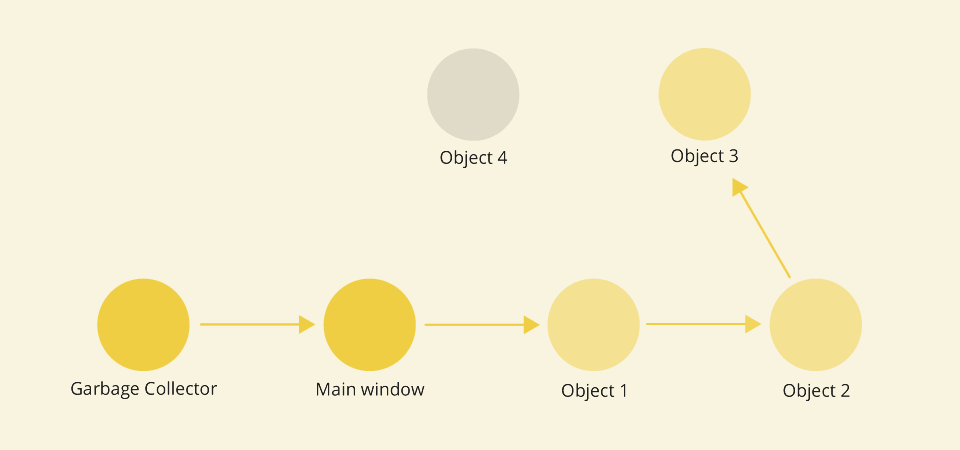

Causes of Memory Leaks in JavaScript and How to Avoid Them

The fastest way to check memory usage is to take a look at the browser Task Managers (not to be confused with the operating system's Task Manager). They provide us with an overview of all tabs and processes currently running in the browser. Chrome's Task Manager can be accessed by pressing Shift+Esc on Linux and Windows, while the one built into Firefox is opened by typing about:performance in the address bar. Among other things, they allow us to see the JavaScript memory footprint of each tab. If our site is just sitting there and doing nothing, yet the JavaScript memory usage is gradually increasing, there’s a good chance we have a memory leak going on. Developer Tools provide more advanced memory management methods. By recording in Chrome's Performance tool, we can visually analyze the performance of a page as it's running. Some patterns are typical for memory leaks, like the pattern of increasing heap memory use shown below. Other than that, both Chrome and Firefox Developer Tools have excellent possibilities to further explore memory usage with the help of the Memory tool.
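The "steadily increasing heap" pattern can also be checked numerically once a few readings have been noted down. The Python sketch below is a rough heuristic under stated assumptions, not a feature of any browser tool: the readings are hypothetical values in MB taken at regular intervals while the page is idle, and the helper names and the window size of 3 are arbitrary choices. Because garbage collection makes heap usage rise and fall, it compares the lowest reading in each window rather than the raw values.

```python
def heap_floors(samples_mb, window):
    """Lowest reading in each consecutive, non-overlapping window.

    The floor approximates what remains after garbage collection.
    """
    return [
        min(samples_mb[i:i + window])
        for i in range(0, len(samples_mb) - window + 1, window)
    ]


def looks_like_leak(samples_mb, window=3):
    """True if the post-collection floor rises from every window to the next."""
    floors = heap_floors(samples_mb, window)
    if len(floors) < 3:
        return False  # too few windows to call it a trend
    return all(later > earlier for earlier, later in zip(floors, floors[1:]))


# Hypothetical readings (MB) from an idle page.
leaking_page = [40, 45, 42, 44, 49, 46, 48, 53, 50]
steady_page = [40, 45, 41, 40, 46, 42, 41, 45, 40]

print(looks_like_leak(leaking_page))
print(looks_like_leak(steady_page))
```

A result of `True` only suggests that the page deserves a closer look with the Memory tool; it does not identify what is being retained.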

Quote for the day: