Towards Artificial General Intelligence

Of course, there is no way a machine can feel and experience thoughts like a

human, but it can compute and relate concepts, and encode human-like experience

(e.g., a snake is dangerous and scary, therefore it must be avoided). So, what

might be the solution in developing such relational networks, which could bring

about a general form of AI called artificial general intelligence (AGI), which

could 'think' like a human in the way that was proposed at Dartmouth

College in 1956? Simply more parameters in a neural network? Recent work

conducted in my own lab with colleagues in Belgium has suggested that a new

approach of functional contextualism (which differs from current forms of

cognitivism — e.g., of memory, attention, and reasoning through logic) may be

the solution to progress AI into the generalized form of AGI, where the system

learns and understands concepts and how these relate to other concepts (through

something called relational frames), and the context in which cues within the

environment influence functions and the meaning or uses of such

concepts.

The Shape of Things to Come: GraphQL and the Web of APIs

The inflection point for GraphQL, however, is still a ways off. While there are

some major companies using GraphQL, such as Shopify, REST is still the most-used

API format in many other companies — including prominent API-based public

companies Stripe and Twilio. I asked Jhingran whether he sees those types of

companies pivoting to GraphQL over time. He first noted that he doesn’t see

GraphQL usurping REST. “We typically find that the REST layer that enterprises

have built, [they] have embedded business logic into it. And the GraphQL there,

in general, will be a composition layer — as opposed to incorporating deep

business logic. And therefore, both will actually co-exist.” However, Jhingran

does think that more and more companies will start using GraphQL for their

external services, a trend that will happen in stages. “Backend teams are

becoming comfortable with GraphQL for the apps that are built by the team,” he

said, meaning applications developed internally. Backend developers will take

more time to get comfortable using GraphQL APIs from third-party companies,

although Jhingran pointed to GitHub and Shopify’s GraphQL APIs as early

examples.
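
The "composition layer" idea can be sketched in plain Python. This is only an illustration under stated assumptions: it uses no GraphQL library, and every name in it (`rest_get_customer`, `rest_get_orders`, `RESOLVERS`, `resolve_customer`) is hypothetical. The two `rest_*` functions stand in for existing REST endpoints that already hold the business logic; the resolver layer only selects and combines what they return.

```python
# Hypothetical REST layer: stand-ins for existing endpoints that already
# contain the business logic. A real system would make HTTP calls here.
def rest_get_customer(customer_id):
    return {"id": customer_id, "name": "Ada", "tier": "gold"}


def rest_get_orders(customer_id):
    return [{"id": "o-1", "total": 40}, {"id": "o-2", "total": 60}]


# Composition layer: one resolver per field, each delegating to REST.
# No business rules live here; the layer only picks and combines fields.
RESOLVERS = {
    "name": lambda cid: rest_get_customer(cid)["name"],
    "tier": lambda cid: rest_get_customer(cid)["tier"],
    "orders": lambda cid: rest_get_orders(cid),
}


def resolve_customer(customer_id, requested_fields):
    """Return only the requested fields, composed from the REST calls."""
    return {field: RESOLVERS[field](customer_id) for field in requested_fields}


# The client asks for exactly the fields it needs in a single request.
result = resolve_customer("c-42", ["name", "orders"])
print(result)
```

Because the resolvers only delegate, the REST services stay the single home of the business logic, which is the co-existence Jhingran describes.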

Stanford cryptography researchers are building Espresso, a privacy-focused blockchain

Espresso Systems, the company behind the blockchain project, is led by Fisch,

chief operating officer Charles Lu and chief scientist Benedikt Bünz,

collaborators at Stanford who have each worked on other high-profile web3

projects, including the anonymity-focused Monero blockchain and BitTorrent

co-founder Bram Cohen’s Chia. They’ve teamed up with chief strategy officer Jill

Gunter, a former crypto investor at Slow Ventures who is the fourth Espresso

Systems co-founder, to take their blockchain and associated products to market.

To achieve greater throughput, Espresso uses ZK-Rollups, a solution based on

zero-knowledge proofs that allow transactions to be processed off-chain.

ZK-Rollups consolidate multiple transactions into a single, easily verifiable

proof, thus reducing the bandwidth and computational load on the consensus

protocol. The method has already gained popularity on the Ethereum blockchain

through scaling solution providers like StarkWare and zkSync, according to

Fisch. At the core of Espresso’s strategy, though, is a focus on privacy and

decentralization.
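
A real ZK-Rollup produces a succinct zero-knowledge validity proof, which is well beyond a short example. The sketch below illustrates only the batching idea: many off-chain transactions collapse into one small, fixed-size value that is posted on-chain. It uses a Merkle root as a stand-in commitment. This is an assumption made for illustration; a Merkle root is not a zero-knowledge proof and says nothing about whether the transactions are valid. The transaction strings are made up.

```python
import hashlib


def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(leaves):
    """Fold a list of byte strings into a single 32-byte Merkle root."""
    if not leaves:
        raise ValueError("need at least one transaction")
    level = [sha256(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # duplicate the last node on odd levels
        # Hash each adjacent pair to build the next level up.
        level = [sha256(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


# 1,000 made-up transactions processed off-chain.
batch = [f"tx-{i}: alice pays bob {i}".encode() for i in range(1000)]

commitment = merkle_root(batch)                  # the only value posted on-chain
on_chain_bytes = len(commitment)                 # always 32, whatever the batch size
off_chain_bytes = sum(len(tx) for tx in batch)   # grows with the batch
```

The point of the comparison is that the on-chain footprint stays constant as the batch grows, which is where the reduction in bandwidth and computational load comes from.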

5 Tips on Managing a Remote-first Development Team

Working from home makes it harder to remain connected with team members and

stakeholders as it reduces not only the frequency of our communication but also

its quality. We tend to rely more on email and instant messaging, both purely

written media (if you exclude the occasional GIF). How can we, as managers and

leaders, ensure that we are fostering healthy and effective communication in our

teams and with our stakeholders?

Meet 1:1 often and effectively.

Unfortunately, one of the first casualties of working remotely tends to be

1:1 meetings. Most managers, particularly early-career ones, have difficulty

leading good 1:1s. Engineers seem to be particularly averse to bad meetings,

seeing them as distractions or as a waste of time. This also applies to

stakeholders, which makes it harder to address concerns, convey important

updates or solve project constraints. The importance of 1:1 meetings cannot

be overstated. In its famous Project Oxygen, Google found that managers with

higher feedback scores also tended to have more frequent and higher-quality

1:1 meetings with their

teams.

Blockchain and GDPR (General Data Protection Regulation)

The most obvious method to sidestep the GDPR is simply not to put any personal

data on the blockchain relating to any private citizen or resident of the EU.

However, this drastically reduces the usefulness of blockchains for any public

application, such as health record tracking, social media, reputation reporting

systems associated with online sales, and identity systems such as an

international passport. The GDPR does not specify whether subsequent corrections to

the data are acceptable when the original incorrect data is still present in

earlier blocks on the blockchain. ... A further possibility is to ensure that

all private data stored on the blockchain is encrypted. In such a situation, the

company responsible for data care can provide evidence of the deletion of the

data by ensuring that the decryption key is destroyed. Another approach may be

to shift the responsibility for protecting the private key to the individual

whose data is being stored on the blockchain.
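
The key-destruction idea can be sketched in a few lines. This is a toy illustration, assuming a one-time pad (a random key as long as the data, XORed with it) in place of a production cipher. The `key_store` dictionary, the record identifier and the sample record are all hypothetical.

```python
import secrets


def encrypt(plaintext: bytes):
    """Encrypt with a one-time pad: a random key as long as the data."""
    key = secrets.token_bytes(len(plaintext))
    ciphertext = bytes(p ^ k for p, k in zip(plaintext, key))
    return ciphertext, key


def decrypt(ciphertext: bytes, key: bytes) -> bytes:
    """XOR the ciphertext with the same key to get the plaintext back."""
    return bytes(c ^ k for c, k in zip(ciphertext, key))


record = b"patient=jane.doe;blood_type=O+"

# Only the ciphertext would be written to a block; the key is kept off-chain.
key_store = {}
on_chain, key_store["record-1"] = encrypt(record)

# While the key exists, the data can still be read.
recovered = decrypt(on_chain, key_store["record-1"])

# "Deletion": destroy the key. The ciphertext stays on the chain,
# but nothing can turn it back into the original record.
del key_store["record-1"]
```

Removing a dictionary entry does not securely wipe memory, so a real deployment would rely on a key-management system that can attest to the key's destruction. Shifting responsibility to the individual, as the last approach suggests, would amount to handing them the entry in `key_store`.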

IT talent: 3 tips to kickstart employee career development

Training typically focuses on hard skills, which is not surprising for a

technical role, but it is critical to understand the importance of soft skill

training for IT professionals. Soft skills are particularly essential amidst

digital transformation efforts, which cannot be done in a silo and require

strong communication among many departments. I recently had the opportunity to

participate in an eye-opening leadership course on driving strategic growth,

which reinforced for me how important it is to take the time to build and

strengthen soft skills. This course ran over three months and consisted of

lectures, formal learning, and time to practice. Ultimately, we returned to

the larger group to share learnings from our practice time. This learning

process reminded me how important it is to build learning programs around soft

skills. While teaching soft skills can require time and effort, incorporating

them into skills training can positively impact your IT employees’ experience

and set them on a path of growth and development.

Indian govt kicks off the consultation process to build a fairness assessment framework for AI/ML systems

“We have been studying various aspects of AI/ML where some standardisation

or testing and certification framework could be established. Moreover, we

have studied the works of various researchers where biases in various AI/ML

systems deployed by leading corporates and governments are deliberated.

Biases in AI/ML Systems are a real threat, and ensuring fairness in such

applications is very important to build public trust in AI/ML Systems.

Accordingly, we have initiated discussions for evolving a framework for

fairness certification of such systems,” said Avinash Agarwal, DDG,

Telecommunication Engineering Centre. TEC aims to set up standard operating

procedures (SOP) to assess the fairness of various AI/ML systems and create

a benchmark. Systems that conform to the specifications will be given a

fairness certification, ensuring product credibility and public trust in

AI/ML. “To achieve this, we will follow a consultative process for framing

standards, specifications and test schedules. Then, we plan to prepare a

draft document based on the various inputs received and release it for

public consultations.”

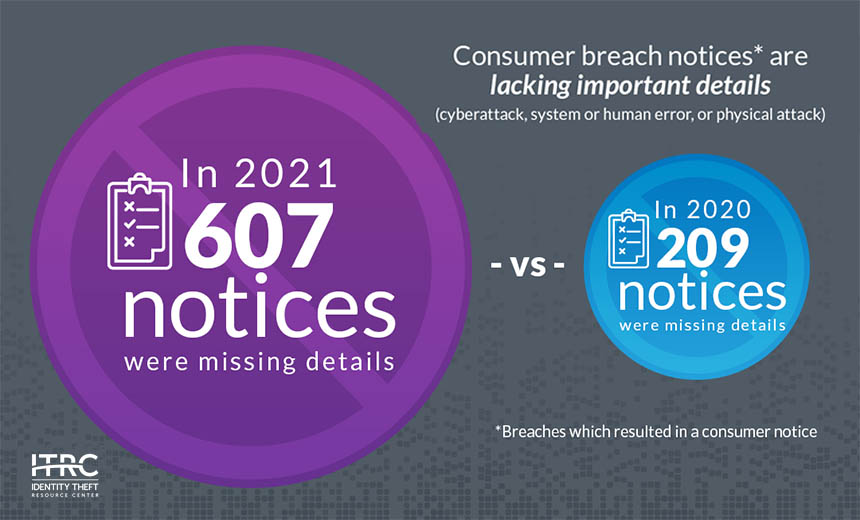

SOARs vs. No-Code Security Automation: The Case for Both

This is not to say that you’re required to ditch your SOAR and replace it

with a lightweight security automation platform like Torq. Many businesses

that have dedicated cybersecurity teams may opt to continue to use their

SOARs as the place where they detect and manage the most complex threats,

such as active, targeted attacks by professional threat actors. But for

managing more mundane risks — like blocking phishing emails, securing

sensitive data or detecting malicious users — lightweight no-code security

automation is a more practical solution. It’s much easier to deploy, and it

empowers all stakeholders to support security operations, even at

organizations that have minimal cybersecurity resources. By extension,

no-code security automation is the key to thriving in the face of today’s

pervasive threats. When you operate in a world that sees 26,000 DDoS attacks

and 4,000 ransomware attacks each day, and where threat actors are

constantly probing your systems for an open door, you need more agility and

automated remediation than a SOAR alone can deliver.

Closing the data quality gap

Data agility has become a central pillar of building a supple business, one

that can move and pivot quickly as new information arises. In the simplest

terms, data agility is the distance between the data that informs a decision

and the decision itself. This means that poor quality data will lead to poor

decision making. Pairing trustworthy contact data with an agile data

management programme enables organisations to make their data actionable,

allowing for better and faster decisions when pursuing new and existing

opportunities. That’s why 94% of business leaders believe having agility in

both business and data practices is important in responding to the pandemic.

Achieving greater agility requires business leaders to rethink their use of

technology and be more open to integrating it into their businesses. Half

the human brain is devoted to processing visual images, and it processes data at

60 bits per second. That might go some way to explaining why four out of 10

leaders say they are looking for easy-to-use solutions; in turn this helps

enable data and business users alike to visualise, read, write, and argue

with data insights.

Digesting Blockchain

The servers are located on different computers around the network and are

therefore distributed (peer-to-peer or P2P). Once a transaction is made, the new

information is replicated and received by the nodes within the P2P network

and added to the corresponding block open at that time. The block will

contain the transaction information and each transaction will be assigned a

"hash" once it has been validated by the network nodes. The cryptographic

hash is like the digital fingerprint of the transaction and is represented

by a sequence of numbers. A hash function converts one value into another,

and the output always has a fixed length, whatever the size of the input. The

information about the transaction recorded in the block can include details

regarding who, what, how, how much, or when the transaction happened. Neither

the information contained in the block nor its hash can be changed.

Accordingly, if the information in a block is changed, the whole sequence of

blocks will become invalid.
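
A minimal sketch of this behaviour, using SHA-256 from Python's standard library. The block layout, the field names and the helpers `block_hash`, `build_chain` and `is_valid` are assumptions made for illustration. The sketch also assumes the usual design in which each block stores the hash of the block before it; that link is what makes a change to one block break the sequence.

```python
import hashlib
import json


def block_hash(index, transactions, previous_hash):
    """Return the SHA-256 hex digest (always 64 characters) of a block."""
    payload = json.dumps(
        {"index": index, "transactions": transactions, "previous_hash": previous_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def build_chain(batches):
    """Link each batch of transactions to the hash of the block before it."""
    chain = []
    previous_hash = "0" * 64  # placeholder value for the first block
    for index, transactions in enumerate(batches):
        digest = block_hash(index, transactions, previous_hash)
        chain.append({
            "index": index,
            "transactions": transactions,
            "previous_hash": previous_hash,
            "hash": digest,
        })
        previous_hash = digest
    return chain


def is_valid(chain):
    """Recompute every hash and check each link to the previous block."""
    previous_hash = "0" * 64
    for block in chain:
        if block["previous_hash"] != previous_hash:
            return False
        recomputed = block_hash(block["index"], block["transactions"], block["previous_hash"])
        if recomputed != block["hash"]:
            return False
        previous_hash = block["hash"]
    return True


chain = build_chain([
    [{"from": "alice", "to": "bob", "amount": 5}],
    [{"from": "bob", "to": "carol", "amount": 2}],
])
valid_before = is_valid(chain)

# Tamper with a recorded transaction in the first block.
chain[0]["transactions"][0]["amount"] = 500
valid_after = is_valid(chain)

print(valid_before, valid_after)  # True False
```

Recomputing the tampered block's hash to hide the change would not help: the next block still stores the old hash, so every later block would have to be rebuilt as well.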

Quote for the day:

"The test we must set for ourselves

is not to march alone but to march in such a way that others will wish to

join us." -- Hubert Humphrey