Security Problems Worsen as Enterprises Build Hybrid and Multicloud Systems

"Most organizations have had a cloud attack surface for years and didn't know

about it," said Andrew Douglas, managing director in cybersecurity at Deloitte

& Touche. "They were using SaaS applications and piloting different cloud

providers going back a decade." Companies are under increasing pressure to move

to the cloud, which the pandemic has accelerated. While security technologies

are becoming more comprehensive and robust, IT managers are tempted to skip over

the security planning steps and jump straight into putting new solutions into

production. "There's been a lot of temptation to move quickly," said Douglas.

"Our clients are trying to accelerate their organizations' move to the cloud,

but whether they put in the time and investment in implementing security – well,

that has been lagging." The biggest challenge faced by those that do want to

invest in security planning upfront is getting an accurate view of all their

assets. "What do we have out there? What are we spinning out on a daily basis?

What are the subscriptions we have in the cloud? What infrastructure as code?

What serverless functionality? ..."

What Colonial Pipeline Means for Commercial Building Cybersecurity

Smart buildings are particularly vulnerable to cyberattacks as more Internet of

Things devices are deployed and the use of remote management tools increases.

While IT systems are typically focused on the core security triad of

confidentiality, integrity, and availability of information, the BMS security

triad is different. The BMS focus should be on the availability of operational

assets, integrity/reliability of the operational process, and confidentiality of

operational information. The deployment of a multidisciplinary defense approach

across system levels requires a cost-benefit balanced focus on operations,

people, and technology. Managing cyber-risks starts with organizational

governance and executive-level commitments. This can include developing a

cybersecurity strategy with a defined vision, goals, and objectives, as well as

metrics, such as the number of building control system vulnerability assessments

completed. In addition, senior leadership needs to ensure that the right

technologies are procured and deployed, defenses are deployed in layers, access

to the BMS via the IT network is limited as much as possible, and intrusion

detection technologies are deployed.

Getting the board on board: a cost-benefit analysis approach to cyber security

A cost-benefit analysis is a method used to evaluate a project by comparing its

losses and gains — essentially a quantified and qualified list of pros and cons.

Undertaking a cost-benefit analysis is a great way to assess projects because it

reduces the evaluation complexity to a single figure. As you can imagine, this

makes a cost-benefit analysis an invaluable tool when it comes to explaining the

intricacies and selling the value of a robust cyber security strategy to your

board. One of the most important things to emphasise in your cost-benefit

analysis is the trade-off between paying to prevent a mess versus paying to

clean up a mess. A recent Cabinet Office report stated the estimated cost of

cyber crime to the UK economy is a whopping £27 billion. And when it comes to

individual attacks, a Sophos survey in April 2021 found that the average total

cost of recovery from a ransomware attack has more than doubled in a year,

increasing from $761,106 in 2020 to $1.85 million in 2021. Of course, investing

in preventative cyber security measures also comes at a cost.
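To make the "single figure" idea concrete, here is a minimal sketch of the prevent-versus-clean-up trade-off. Only the $1.85 million recovery cost comes from the Sophos figure quoted above. The attack probabilities, the cost of the controls, and the function names are hypothetical placeholders that you would replace with your own estimates.

```python
def annualized_loss(incident_cost: float, annual_probability: float) -> float:
    """Expected yearly loss: cost of one incident times its yearly likelihood."""
    return incident_cost * annual_probability


def net_benefit(incident_cost: float, p_before: float, p_after: float,
                control_cost: float) -> float:
    """Loss avoided per year by the controls, minus what the controls cost."""
    avoided = (annualized_loss(incident_cost, p_before)
               - annualized_loss(incident_cost, p_after))
    return avoided - control_cost


RECOVERY_COST = 1_850_000  # average ransomware recovery cost cited above (USD)
P_BEFORE = 0.20            # ASSUMPTION: yearly attack likelihood without controls
P_AFTER = 0.05             # ASSUMPTION: yearly attack likelihood with controls
CONTROL_COST = 150_000     # ASSUMPTION: yearly spend on preventative measures

figure_for_the_board = net_benefit(RECOVERY_COST, P_BEFORE, P_AFTER, CONTROL_COST)
```

With these made-up inputs the controls avoid about $277,500 of expected loss per year for a spend of $150,000, a net benefit of roughly $127,500. A negative result would mean the proposed measures cost more than the loss they are expected to prevent.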

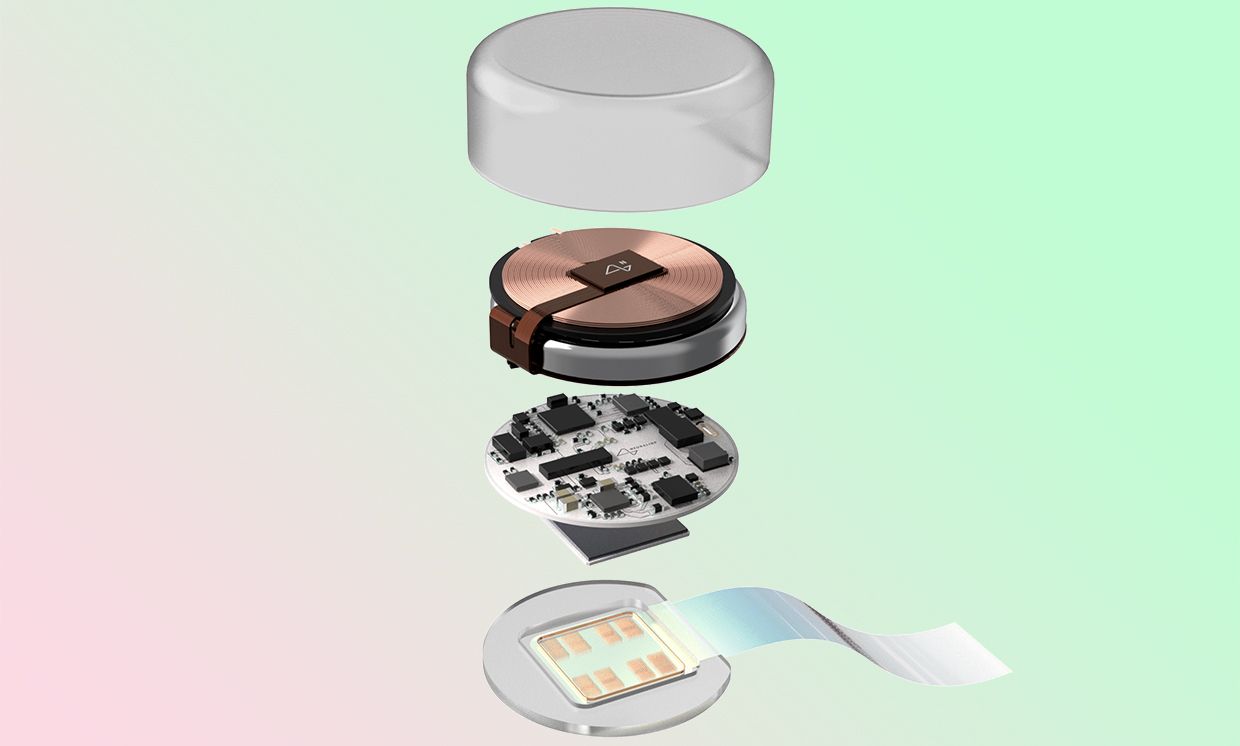

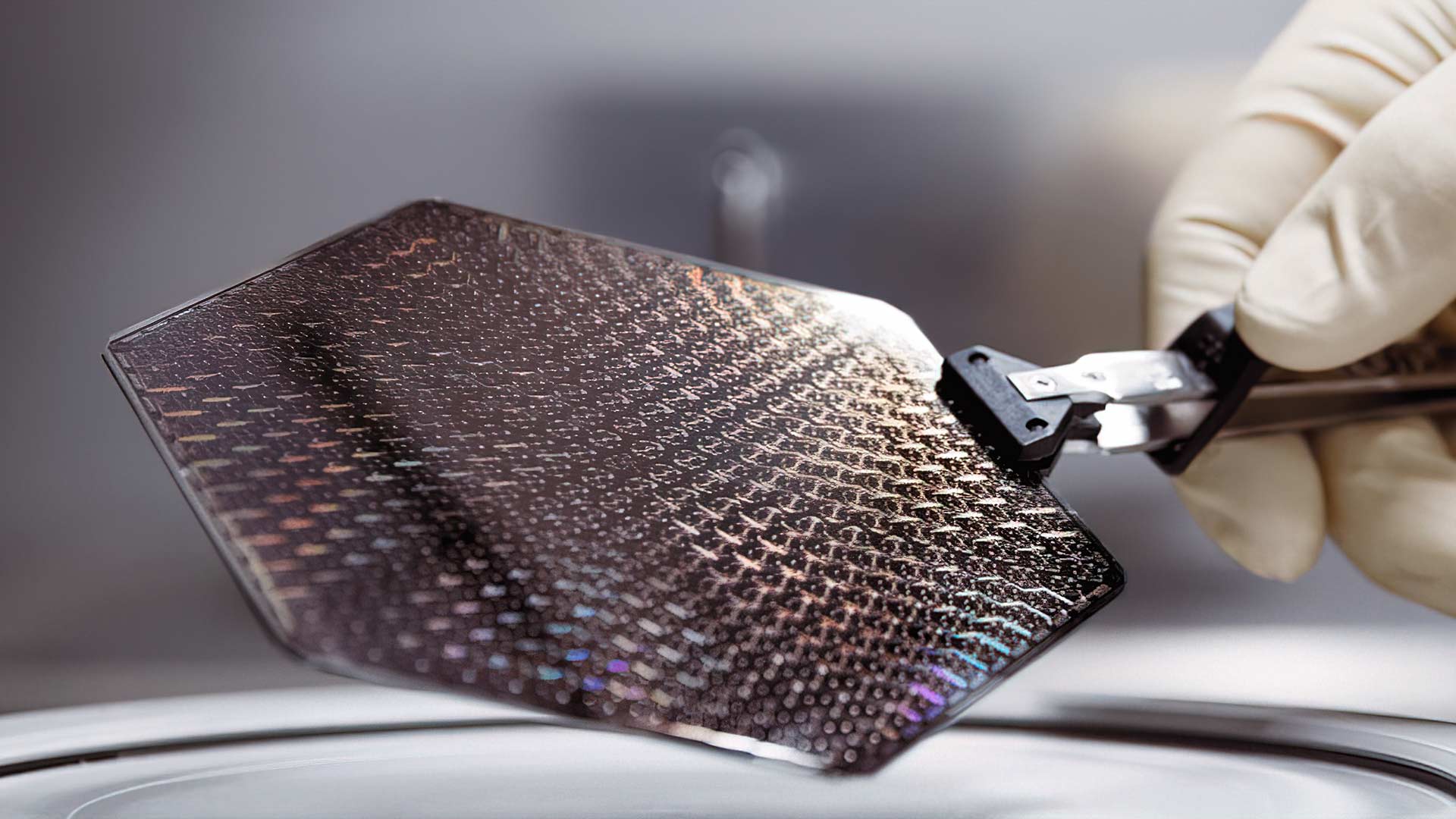

OQC Delivers the UK’s first Quantum Computing as-a-Service

OQC’s core innovation, the Coaxmon, solves these challenges using a

three-dimensional architecture that moves the control and measurement wiring out

of plane and into a 3D configuration. This vastly simplifies fabrication,

improving coherence and – crucially – boosting scalability. This key advantage

underpins the company’s confidence in its strategy to “build the core and

partner with the best”. Just four years after it was founded, having attracted

nearly £2m of UK government support and some of the leading scientists and

engineers in the field, the pre-Series A startup is now a leader in the “noisy

intermediate-scale quantum” (NISQ) era of quantum computing. Yet OQC is doing so

with a fundamental advantage when it comes to scaling up to future generations

of quantum machines. This radical design innovation and its proven effectiveness

so far is driving the company in its mission to help its customers explore the

possibilities of quantum advantage. It is also a great example of the value of

the National Quantum Technologies Programme in supporting excellent research and

the growth of start-ups helping to create a vibrant UK quantum sector.

How I avoid breaking functionality when modifying legacy code

If you were to leave the code as it was written during the first pass (i.e.,

long functions, a lot of bunched-up code for easy initial understanding and

debugging), it would render IDEs powerless. If you cram all capabilities an

object can offer into a single, giant function, later, when you're trying to

utilize that object, IDEs will be of no help. IDEs will show the existence of

one method (which will probably contain a large list of parameters providing

values that enforce the branching logic inside that method). So, you won't know

how to really use that object unless you open its source code and read its

processing logic very carefully. And even then, your head will probably hurt.

Another problem with hastily cobbled-together, "bunched-up" code is that its

processing logic is not testable. While you can still write an end-to-end test

for that code (input values and the expected output values), you have no way of

knowing if the bunched-up code is doing any other potentially risky processing.

Also, you have no way of testing for edge cases, unusual scenarios,

difficult-to-reproduce scenarios, etc. That renders your code untestable, which

is a very bad thing to live with.
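The contrast described above can be sketched in a few lines. This is an illustrative example, not code from the original article: the `Order` type, the function names, and the 20% UK tax rate are all hypothetical. The first function is the "bunched-up" style, where flags steer the branching. The rest is the same logic split into small named pieces that an IDE can list and a test can target one at a time.

```python
from dataclasses import dataclass


@dataclass
class Order:
    subtotal: float
    country: str


# "Bunched-up" style: one entry point, and the parameters drive the branching.
def process(order, apply_tax=False, apply_discount=False, discount_rate=0.0):
    total = order.subtotal
    if apply_discount:
        total -= total * discount_rate
    if apply_tax:
        total += total * (0.2 if order.country == "UK" else 0.0)  # assumed rate
    return round(total, 2)


# Refactored style: each capability is a small, separately testable function.
def discounted(amount: float, rate: float) -> float:
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    return amount * (1.0 - rate)


def tax_rate_for(country: str) -> float:
    return 0.2 if country == "UK" else 0.0  # assumed rate, for illustration only


def with_tax(amount: float, country: str) -> float:
    return amount * (1.0 + tax_rate_for(country))


def order_total(order: Order, discount_rate: float = 0.0) -> float:
    net = discounted(order.subtotal, discount_rate)
    return round(with_tax(net, order.country), 2)
```

Both versions give the same total for a typical order, but only the second lets you test an edge case such as an invalid discount rate directly, without working out which combination of flags reaches that branch.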

Regulating digital transformation in Saudi Arabia

Due to the rapidly changing situation, it is necessary to regulate all matters

related to digitization and digitalization processes. Therefore, the Kingdom

launched the Digital Government Authority (DGA) to serve as an umbrella

organization for all digital matters in Saudi Arabia. In today’s article, we

shall highlight the scope and powers of the authority. First of all, it is

important to get acquainted with the definition of digitization, as knowing the

basic concepts from the regulator’s point of view will certainly correct our

understanding of all relevant terms and determine their actual purpose. We will

start with the most popular term, which is digital transformation. It means

transforming and developing business models strategically to become digital

models based on data, technology, and communication networks. Hence the role of

digital government in supporting administrative, organizational, and operational

processes within and between government entities to achieve digital

transformation and to develop, improve, and enable easy and effective access to

government information and services.

White boxes in the enterprise: Why it’s not crazy

The cultural problem is simple; your current network vendor will resent your

white-box decision and will likely blame every hiccup or fault you experience

on the new gear. When that happens, and you go to your white-box vendor to get

their side, they may well blame everything on the software, or they may point

the finger back at the proprietary vendor. The software supplier will return

the favor by pointing at everyone, and all the players may point at your own

integration efforts as the source of the problem. If you had finger-pointing

in a two-vendor network, white boxes can make that look like a love feast by

comparison. The technical problem is one of management. All network devices

have to be managed, and the management systems and practices most enterprises

use tend to be tuned to their current devices. White-box management is usually

set by the software, and you can’t necessarily expect much of a choice in how

the management features work. That means your current network-operations

people will have to contend with multiple management choices depending on the

devices they use.

Augmenting Organizational Agility Through Learnability Quotient

Architects have an important role to play as well in achieving organizational

agility. There are articles and books which talk about achieving the stability

of the tech organization via design patterns, reference architectures, best

practices, leveraging checklists, etc. One good example is Fundamentals of

Software Architecture, by Mark Richards and Neal Ford. To instill and improve

the dynamic capability of the organization, technical directions alone are not

enough. The architect’s vision and strategy for the organization can only be

achieved if the organization is capable of executing with the right knowledge.

With ever-changing and evolving tech, having social capital across the

organization is vital. More so in growing startups where the organization’s

dynamics keep changing rapidly. Focusing efforts on the learnability of the

organization by building a learning community and having a learning culture

across the organization is crucial and architects become the linchpin for

that. Matthew Skelton and Manuel Pais summarize it perfectly: Modern

architects must be sociotechnical architects; focusing purely on technical

architecture will not take us far.

Ways to Cultivate a Cyber-Aware Culture

Everyone's vulnerable to phishing scams, from the receptionist to the CEO. No

one is exempt. If you think you're immune because you have a better grasp on

security than everyone else, well, that's not how it works. Security must be

everyone's job. The best way to secure a business is to start at the top. A

cyber-aware culture involves cooperation between departments and ongoing

education for all employees, irrespective of how high up they are in the

hierarchy. Whether you're filling out a security awareness questionnaire or

writing your organization's next policy document, focusing on the following

three elements will help you stay true to your cyber-aware culture. ... When

we talk of a cyber-aware culture, enterprises need to understand that there's

more to it than technology. It's about people, processes, culture, and

engagement. Security leaders need to take a holistic approach to cyber risk

management. The only way to steer clear of cyber fines and compromised

customers is by involving employees. Empower your people so they become part

of the solution instead of a liability.

Back-office bank UX: the lessons to learn from the Citi-Revlon tale

Any company undertaking digitisation must have a clear understanding of the

key service, or services, it provides to end-customers. This should be their

first port of call. It is then best practice to map the processes involved in

delivering those services, including all people and systems. By gathering this

information, technology teams will encounter employees that inhabit distinct

roles across lifecycles, including subject experts, business leaders, and

end-customers. The goal is to gain a near-complete understanding of the

existing landscape from employees. This can then be manifested in a service

blueprint, a journey map, or a process framework. The organisation should

anticipate that different outputs or levels of depth may result, depending on

the scope and scenario. While it may seem like many organisations, especially

within banking, would already have this institutional knowledge, we have

learned that this type of knowledge usually exists in small pockets, not

across entire organisational lines. Gaining some understanding of the existing

process across the organisation will therefore help clarify opportunities to

converge siloed processes, increase efficiency, improve communications, and

drive toward any other pre-defined business objectives.

Quote for the day:

"Becoming a leader is synonymous with

becoming yourself. It is precisely that simple, and it is also that

difficult." -- Warren G. Bennis