Charity on the Internet: How to identify scammers

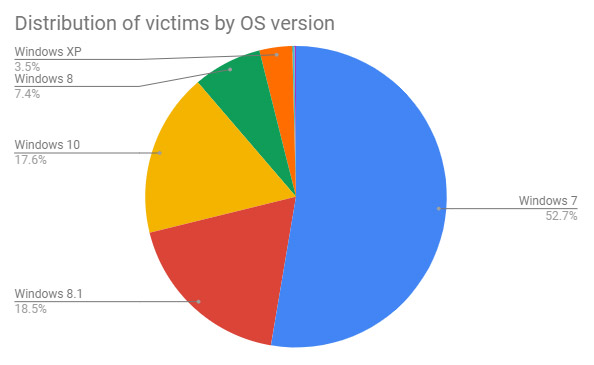

Facebook has been experiencing a wave of fake fundraising campaigns. The pattern is familiar: Attackers create groups from scratch to which they add a couple of posts. They provide bank transfer details along with a bunch of tear-jerking comments. The groups tend to follow a template. The group’s name contains an appeal for help, and the posts provide emotional stories, usually about terminally ill children whose suffering is illustrated by photos and videos that are posted on the page. Some of the posts are practically word-for-word copies of posts in other fraudulent groups. The only details that differ in each group are the child’s name, his or her diagnosis, and the name of the hospital where they are receiving treatment. Frequently the contact information and the bank transfer details are the same for multiple groups — which, by the way, is the most reliable indicator of the scam. New scam groups appear every month, and even though complaints shut them down quickly, some users are inevitably taken in and transfer money to the scammers.
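The indicator described above, identical bank transfer details appearing in several groups, can be sketched in a few lines. This is only an illustration under stated assumptions: the group records, field names, and account strings below are hypothetical, and the function is not part of any Facebook tooling.

```python
from collections import defaultdict


def find_shared_payment_details(groups):
    """Return payment details that appear in two or more groups.

    `groups` is a list of dicts with hypothetical keys "name" and
    "payment_details" (a list of strings copied from the group's posts).
    """
    seen = defaultdict(set)
    for group in groups:
        for detail in group["payment_details"]:
            # Normalise spacing and case so reformatted copies still match.
            key = "".join(detail.split()).upper()
            seen[key].add(group["name"])
    # Keep only details reused across different groups.
    return {key: sorted(names) for key, names in seen.items() if len(names) > 1}


# Hypothetical sample data, invented for illustration only.
groups = [
    {"name": "Help little Anna", "payment_details": ["DE00 1234 5678"]},
    {"name": "Save Misha", "payment_details": ["de0012345678", "XX99 0000"]},
    {"name": "Local shelter", "payment_details": ["YY11 2222"]},
]
shared = find_shared_payment_details(groups)
```

With this sample data, the two appeal groups are flagged because they share one account string, while the third group is not.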

Artificial intelligence and machine learning are the next frontiers for ETFs, says industry pro

The thinking behind this is that the collective wisdom of every smart beta ETF out there — including Goldman’s — is better than the mindset of any individual set of stock pickers. “You’re going to add the data to it that, quite frankly, a human brain just can’t digest,” said Tull. So, the key question becomes, is there any evidence that machine learning can actually outperform when it comes to picking stocks? Dave Nadig, who runs ETF.com, says there is. He points to the AI Powered Equity ETF (AIEQ), which has risen 17%, besting the S&P 500 this year. The fund, run by Equbot, uses both A.I. and IBM Watson to find opportunities in the market. “I think this is the next generation, frankly, of financial product development,” said Nadig. “Machine learning sounds big and scary, but all it is, is really just taking data and things you already know, how things perform, to generate rules – as opposed to hiring a bunch of CFAs to come up with those rules about what you’re going to buy and sell based on fundamentals.”

Not worried about unethical AI? You should be

Millennials (ages 18-38) are the age group most comfortable with AI, but they also have the strongest opinions that guard rails, or ethical practices, are needed. Whether it’s anxiety over AI, desire for a corporate AI ethics policy, worry about liability related to AI misuse or a willingness to require a human employee-to-AI ratio — it’s the youngest group of employers who consistently voice the most apprehension. For example, 21% of millennial employers are concerned their companies could use AI unethically, compared to 12% of Gen X and only 6% of Baby Boomers. “Our research reveals both employers and employees welcome the increasingly important role AI-enabled technologies will play in the workplace and hold a surprisingly consistent view toward the ethical implications of this intelligent technology,” continued Leeson. “We advise companies to develop and document their policies on AI sooner rather than later – making employees a part of the process to quell any apprehension and promote an environment of trust and transparency.”

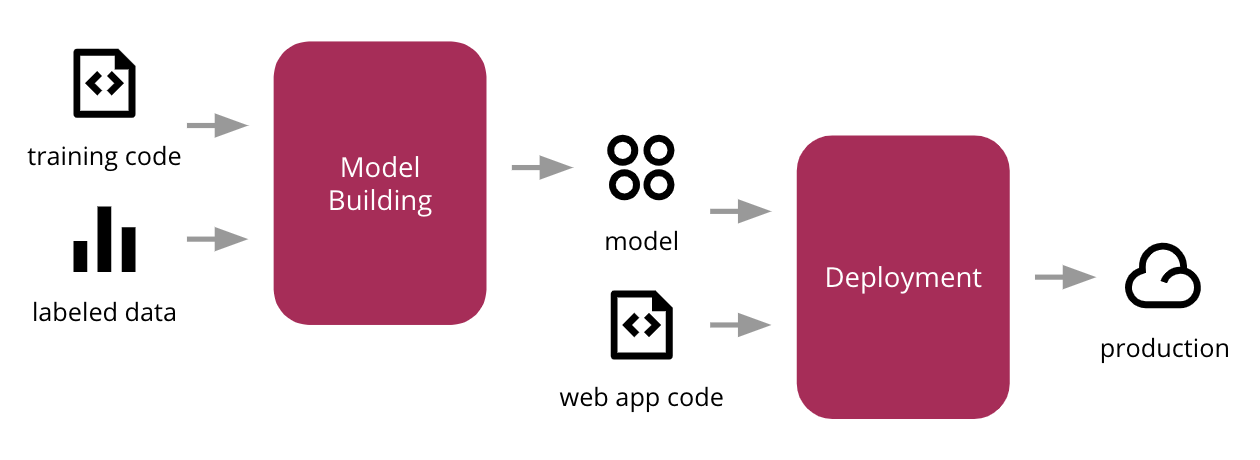

Continuous Delivery for Machine Learning

Regardless of which flavour of architecture you have, it is important that the data is easily discoverable and accessible. The harder it is for Data Scientists to find the data they need, the longer it will take for them to build useful models. We should also consider that they will want to engineer new features on top of the input data that might help improve their model's performance. ... We then stored the output in a cloud storage system like Amazon S3, Google Cloud Storage, or Azure Storage Account. Using this file to represent a snapshot of the input training data, we are able to devise a simple approach to version our dataset based on the folder structure and file naming conventions. Data versioning is an extensive topic, as data can change along two different axes: structural changes to its schema, and the actual sampling of data over time. Our Data Scientist, Emily Gorcenski, covers this topic in more detail in this blog post, but later in the article we will discuss other ways to version datasets over time.
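One way to picture versioning by folder structure and file naming is a storage key that encodes both axes of change: the schema version and the snapshot date. The sketch below is an assumption for illustration; the layout, the function names, and the `sales` dataset are hypothetical and are not the convention used in the original article.

```python
from datetime import date
from pathlib import PurePosixPath


def snapshot_key(dataset, schema_version, snapshot_date, filename="data.csv"):
    """Build an object-storage key that encodes schema version and snapshot date."""
    return str(
        PurePosixPath(dataset)
        / f"schema=v{schema_version}"
        / f"snapshot={snapshot_date.isoformat()}"
        / filename
    )


def latest_snapshot(keys, dataset, schema_version):
    """Pick the most recent snapshot key for one dataset and schema version."""
    prefix = f"{dataset}/schema=v{schema_version}/"
    matching = [key for key in keys if key.startswith(prefix)]
    # ISO dates sort lexicographically, so max() returns the newest snapshot.
    return max(matching) if matching else None


# Hypothetical dataset name and dates, for illustration only.
keys = [
    snapshot_key("sales", 1, date(2019, 8, 1)),
    snapshot_key("sales", 2, date(2019, 8, 15)),
    snapshot_key("sales", 2, date(2019, 9, 1)),
]
newest = latest_snapshot(keys, "sales", 2)
```

Because the date is written in ISO format, plain string comparison is enough to find the newest snapshot under a given schema version.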

Data Governance and Machine Learning

The advanced AI system providers seem to think that only ML-powered solutions will ultimately satisfy both the regulatory and compliance requirements. Let’s take the example of the banking sector. Currently, the lack of consistency in data definition and quality is a serious deterrent to business operations across the enterprise. ML can help solve regulatory and compliance issues, specifically those related to Data Governance and data security and privacy, faced by different divisions within an enterprise. Now with General Data Protection Regulation (GDPR) requirements in most parts of the world, the advanced technologies are viewed as welcome transitions in global businesses. ... Gartner believes that by 2020, at least 50 percent of Data Governance policies will be driven by metadata. The greatest strengths of metadata are the implementation of accountability at every step, a common vocabulary, and an auditable process for compliance. Then ML technologies can move from the science labs to business halls. ... Surprisingly, during a survey of business executives, only 12 percent of the respondents acknowledged the presence of a defined Data Strategy in their enterprises.

Meet FPGA: The Tiny, Powerful, Hackable Bit of Silicon at the Heart of IoT

In a CPU, the configuration of the chip is established at the chip foundry. Programming governs the movement of bits through the pre-set architecture. In an FPGA, though, the configuration is defined by hardware description language (HDL) that's loaded from storage (frequently static random access memory, or SRAM) at device boot time. This means the architecture of the device can be optimized for a particular task, and updated or upgraded as vulnerabilities are discovered or new capabilities are licensed. The ability to update the FPGA is seen as a positive security step because vulnerabilities can be addressed with new definitions. FPGAs are growing in popularity among device manufacturers because they fit more easily into an "agile" work process than do ASICs. While ASICs have to be defined in a manufacturing process that can take weeks or months in production quantities, FPGAs are defined by software that can be revised as frequently as releases can be dropped, which can mean many times a day during development.

How Robot CEOs Could Save Capitalism

In the wake of Big Tech, as questions about ethics dominate national conversations and the technology industry focuses on more ethical approaches to A.I., Wallis’ recommendation that the private sector fixes itself through the checks and balances of competition could prove to be a valuable and effective solution to rebuilding America’s middle class. While America’s largest companies begin to deliberate a new definition for the purpose of corporations, technologists and startups seeking to create ethical technology would be wise to explore ways A.I. can improve our economy while doing the least harm to the human workforce. By creating new technology to replace exorbitantly paid CEOs with A.I. that can do their jobs more efficiently while potentially offering more stability to companies, America’s corporations could benefit while the overall workforce remains intact. In turn, billions of dollars that would have gone to CEOs could be freed up to be redistributed through the economy directly, allowing companies to pay sensible wages that can keep pace with inflation without borrowing from the Federal Reserve.

Securing Your Kubernetes Pipeline

Automation is the answer to this challenge. It is nearly impossible to track changes manually, so you have to automate some parts of the process for maximum efficiency. This is done by integrating security compliance into the development and deployment processes. To be able to take this step, however, you have to define clear security and compliance policies first. Integrating security and compliance as early in the pipeline as possible is also highly recommended. This means securing not just the app or code, but also the CI/CD pipeline itself. Fortunately, there are several ways to achieve this. You can, for instance, use the IaC approach to create a standardized deployment stack. Since infrastructure is baked into the deployment package, it is much easier to make sure that a consistent cloud infrastructure is maintained. Another approach is adding (and enforcing) security policies, which we will get to in a second. Using tools like Kritis, Ops can enforce security policies at a much earlier stage in the development process. The policies govern how new updates and micro-services are deployed.
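To make the idea of an enforced deployment policy concrete, here is a minimal sketch of a check that a pipeline step might run before deploying. It is not Kritis and does not use its API; the registry name, the rules, and the function are hypothetical assumptions chosen only to show the shape of such a policy.

```python
# Hypothetical policy: only images from this registry may be deployed.
ALLOWED_REGISTRIES = ("gcr.io/example-project/",)


def check_image(image):
    """Return a list of policy violations for one container image reference."""
    violations = []
    if not image.startswith(ALLOWED_REGISTRIES):
        violations.append("registry not allowed")
    if "@sha256:" not in image:
        # A mutable tag can point to different content over time.
        violations.append("image not pinned by digest")
    return violations


def check_deployment(images):
    """Map each non-compliant image to its violations; an empty dict means pass."""
    results = {image: check_image(image) for image in images}
    return {image: found for image, found in results.items() if found}


# Hypothetical image references, for illustration only.
report = check_deployment([
    "gcr.io/example-project/api@sha256:abc123",
    "docker.io/library/nginx:latest",
])
```

A pipeline could fail the build whenever the report is non-empty, which moves the policy decision ahead of deployment rather than after it.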

Using cloud, big data and biometrics to build the airport of the future

Ibbitson's vision of a joined-up airport system has been shaped by the position he holds within Dubai Airports. A focus on integration is inherent to this role: one year after joining the company as CIO, Ibbitson added responsibility for engineering services, taking on his current title of executive vice president for technology and infrastructure. His role is to bring IT and engineering together, helping the organisation make more efficient use of data across its business environment. Considerable progress has already been made and some of this preparatory work will form the foundation for the airport of the future. ... This platform, which supports a move toward multi-factor authentication, provides an integrated access point for accessing the organisation's data. Ibbitson's work on data integration forms part of his ongoing efforts to refine identity management processes and to introduce biometrics for the airport of the future. The aim is that airlines across DXB and DWC will be able to automate identity checks, allowing passengers to use their verified identity to move smoothly around the airports.

Darktrace unveils the Cyber AI Analyst: a faster response to threats

Typically, a human analyst will spend half an hour to half a day investigating a single suspicious security incident. They will look for patterns, form hypotheses, reach conclusions about how to mitigate the threat and share the findings with the rest of the business. The AI cyber security company claims its new solution accelerates this process, continuously conducting investigations behind the scenes and operating at a speed and scale beyond human capabilities. And crucially, Darktrace says it can conduct expert investigations into hundreds of parallel threads simultaneously and instantly communicate its findings in the form of an actionable security narrative. “Cyber AI Analyst emulates the human thought processes that take place when a security analyst performs an investigation on a security incident. It’s like having an extra member of staff that is an expert in their field, and reports on issues in seconds, instead of hours.”

Quote for the day:

"People leave companies for two reasons. One, they don't feel appreciated. And two, they don't get along with their boss." -- Adam Bryant
