The big concern from enterprises this week was not being locked out of Google Docs for a time but the fact that Google was scanning documents and other files. Even though this is spelled out in the terms of service, it’s uncomfortably Big Brother-ish, and raises anew questions about how confidential and secure corporate information really is in the cloud. So, do SaaS, IaaS, and PaaS providers make it their business to go through your data? If you read their privacy policies (as I have), the good news is that most don’t seem to. But have you actually read through them to know who, like Google, does have the right to scan and act on your data? Most enterprises do a good legal review for enterprise-level agreements, but much of the use of cloud services is by individuals or departments who don’t get such IT or legal review.

How microservices governance has evolved from SOA

Governance with monoliths is centralized. Decisions are made top-down, and rigid control is maintained to ensure uniformity across the organization and the application stack. Over time, this model degenerates, creating a system that becomes technologically and architecturally stagnant and that slows the pace of innovation. Teams are forced to merely conform to the set order of things rather than look for new, creative solutions to problems. For microservices governance, a decentralized model works best. Just as the application itself is broken down into numerous interdependent services, large, siloed teams are broken down into small, multifunctional teams. This mirrors the way separate development, testing and IT teams have morphed into smaller DevOps teams.

5 cyber threats every security leader must know about

The first is Consumer IoT. These are the devices we are most familiar with, such as smartphones, watches, appliances, and entertainment systems. Users insist on connecting many of these to their business networks to check e-mail and sync calendars, while also browsing the Internet and checking on how many steps they have taken in the day. The list of both work and leisure activities these devices can accomplish continues to increase, and the crossover between these two areas presents increasing challenges to IT security teams. ... The cloud is transforming how business is conducted. Over the next few years, as much as 92 percent of IT workloads will be processed by cloud data centers, with the remaining 8 percent continuing to be processed in traditional on-premises data centers.

Inside-Out: How IoT Changes Everything

"Design thinking is a way to place the user at the heart of the innovation process," he said. "Our company strategy is really that innovation is not coming from startups or technologies, but from the end users and the customer observation. It's really focused on the end user. We are working, for example, with ethnologists and psychologists to understand the problems and to describe the problems. It's really important for us." Celier explained that VISEO created specialized innovation centers as part of their One Roof program. The idea is to bring clients into their production studios, much like filmmakers bring all the talent into a studio for producing movies. "We are incubating our customer's project in our building. It's a way to go faster. They come with their vision, their idea, and they leave with a platform or product," he said.

Cybersecurity thwarts productivity and innovation, report says

The top priority of most organizations — cybersecurity — is hindering productivity and innovation, according to a recent report by Silicon Valley-based virtualization firm Bromium. Based on a survey of 500 chief information security officers in large organizations in the U.S., U.K. and Germany, 74 percent of respondents said end users were frustrated by how security requirements disrupt operations. "Our research found, on average, an organization gets complaints from users twice a week saying that legitimate work activity is being blocked or rejected by over-zealous security systems," the report reads. Citing that most — 88 percent — of organizations use a prohibition approach to cybersecurity, the firm suggests "a new approach" that allows more technological innovation within the organization.

Securing Smart Homes

“The industry is starting to get educated about the need for [better security],” Dirvin says. “Now they ask more questions about it and are willing to spend more time and effort,” but not always money. Manufacturers of smart home devices typically haven’t had to think about security in the same way as a medical device maker or a manufacturer of industrial automation. “It’s a whole new area for them, so they’re rushing to build connectivity and incorporate these devices into a broader IoT strategy,” says Warren Kurisu, director of product management in the embedded systems division at Mentor, a Siemens business. “The security, from a software perspective, is something they’re just now starting to realize that they need to do.” This is especially true in the wake of the Mirai attack. The number of connected devices is expected to reach 20.4 billion by 2020, according to Gartner.

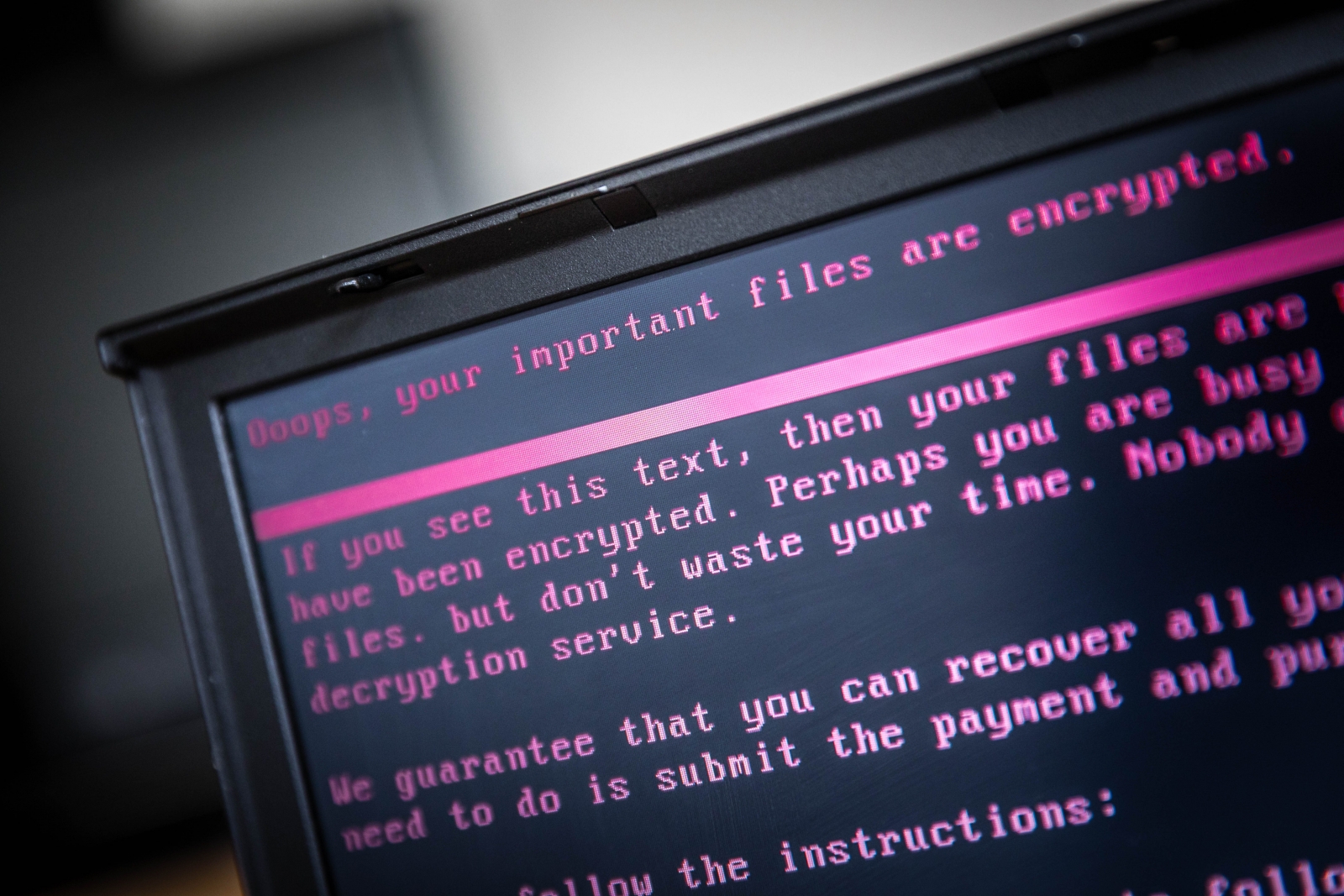

Was BadRabbit a distraction? Malware 'used to cover up smaller phishing attacks'

"There is an open, let's say instantly obvious attack, while underneath there is a hidden, fairly well-thought-out attack, to which nobody pays attention," police chief Serhiy Demedyuk told attendees while speaking at the Reuters Cyber Security Summit in Kiev. "During these attacks, we repeatedly detected more powerful, quiet attacks that were aimed at obtaining financial and confidential information." He said the so-called "hybrid attack" – meaning a multi-pronged assault – was also found to be targeting users of a popular form of Russian accounting software called 1C. "The main theory we're working on now is that they [the hackers in both attacks] were one and the same," Demedyuk added. "The goal was to get remote and undetected access."

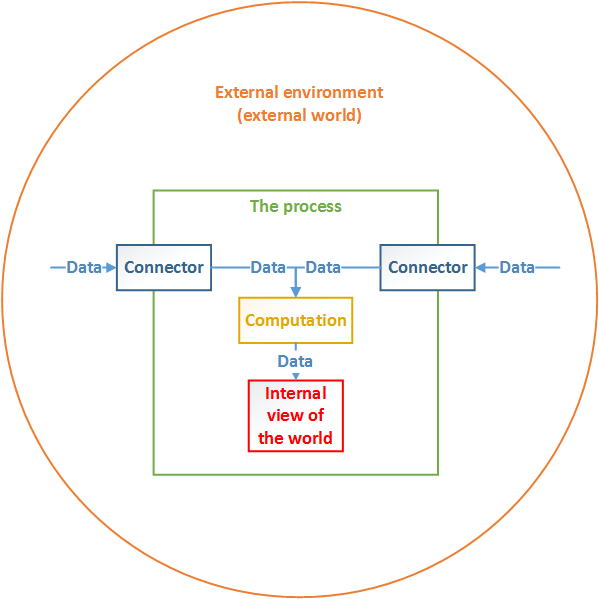

The Internet of Things is about much more than just connecting devices

The connected nature towards which we are migrating will allow manufacturers to better understand what their customers require on a real-time basis. This in turn enables the manufacturer not only to recalibrate the actual manufacturing part of the business and what they procure, but also to become highly competitive and closely in tune with customer requirements, down to quality requirements per customer. That transparency will drive product improvement and customer satisfaction to new levels. Manufacturers will not order more raw material than they need. Think about latency and how this will be addressed. Consider this example: a customer wants a product; there’s the procurement of materials, import, export, shipping, logistics, manufacturing – it can take six months or more.

7 habits of highly effective digital transformations

The collaborative efforts have paid off. “As a result of sharing practices, we have identified cases where we see a common failure mode in our continuous integration, delivery and operational practices, and then we are able to propagate the fix across all teams and improve and correct across all teams,” Fairweather says. Management also conducted a survey of its strategic foundational technology program. Fairweather recalls one comment an employee gave as feedback: “Instead of being a cog in the wheel I’m a better-informed contributor. The best part of learning from peers is gaining new contacts. We are more united as [a] global organization in pursuing these 10 areas because we had done this.” ... As organizations get larger, different groups can begin to cut themselves off from one another, creating silos of information, he says.

6 Steps Up: From Zero to Data Science for the Enterprise

Different stakeholders have different views about the desire for a Customer360, but perhaps the most clarifying is that for a company to truly drive value and delight its customers, the business must understand those customers and approach every question from their perspective. Without a Customer360 built on a foundation of data science, the business will only ever have a qualitative view of customers. I believe a true, quantitative understanding of customers relies on rigorous data science. Less attention has been paid to the concept of a Product360, but it's no less important. Depending on the business, a Product360 can potentially drive more value through cost savings and cost avoidance than the business can derive from new revenue. The ultimate goal of a Product360 is creating assets that allow the business to explore each product from earliest inception through the end of its lifecycle.

Quote for the day:

"Instinct is intelligence incapable of self-consciousness." -- John Sterling