Traditionally, security was an afterthought in the development cycle, but over the past few years it has quickly become a core part of the process. Now aptly called DevSecOps, the process incorporates security earlier in the software development and testing phases as a means to achieve faster, higher-quality outcomes that are both innovative and secure. While DevSecOps is growing in popularity, organisations are still struggling to combat malware injections and data breaches because their developer and IT teams don’t have the security knowledge or skills needed to launch products threat-free. ... With more than half of organisations using DevOps practices across their business or within teams, the personal debt is bound to have a real impact on the productivity of businesses, the safety of their products, and the quality of applications that ultimately form the foundation of today’s digital economy.

Cybersecurity is key for the smart cities of tomorrow

Without a secure cyber foundation, smart cities will crumble. Built on a secure cyber foundation, smart cities will thrive. We were encouraged to see that the proposed legislation specifically focused on developing a “skilled and savvy domestic workforce to support smart cities.” At the heart of the secure smart cities of tomorrow will be a dynamic IT workforce, confident and capable of training and re-training on a consistent basis to stay ahead of the latest threats. Our research shows that just 35 percent of government officials believe that their organizations are well equipped to handle the cyber requirements of smart city projects. Moreover, 40 percent of government officials and personnel cite skills gaps and a lack of necessary technology expertise as a primary concern affecting the expansion of smart city initiatives.

Enterprises 'radically outstripping' traditional technology: Nokia

"Nokia is seeing a watershed moment for the industry as significant global trends and changes to the cost base of what we have traditionally considered carrier capabilities are changing the dynamic of the way carriers are addressing the business market for telecommunications," he added. "The challenge now is for our industry -- carriers and suppliers -- to meet business halfway and ensure they understand that we are here to contribute to their future. Industrial network requirements are rapidly shifting, and networks are changing to meet those needs." Labelling 5G as more than just the next evolution of the traditional network, Conway said it will accelerate this transformation of industry. "5G is specifically being designed to cater for the tens of billions of devices expected for our automated future," he said.

Cyber threats are among top dangers, says Nato

One of the biggest challenges is bringing innovation faster into Nato’s approach to cyber defence, he said. “This is one of the objectives where we still need to push a little harder,” he added. Ducaru said recognising cyber space as an operational domain requires a change of assumption. Previously, Nato worked under the assumption that it could rely on its systems and the integrity of the information, he said. “We concluded that this assumption was no longer valid, and that we needed to change our training, education and planning with the assumption that systems will be disrupted, that we will constantly be under cyber attack, and that we will need to achieve missions under these conditions,” he said. As a result, Nato has switched its focus from “information assurance” to “mission assurance” to support essential operations.

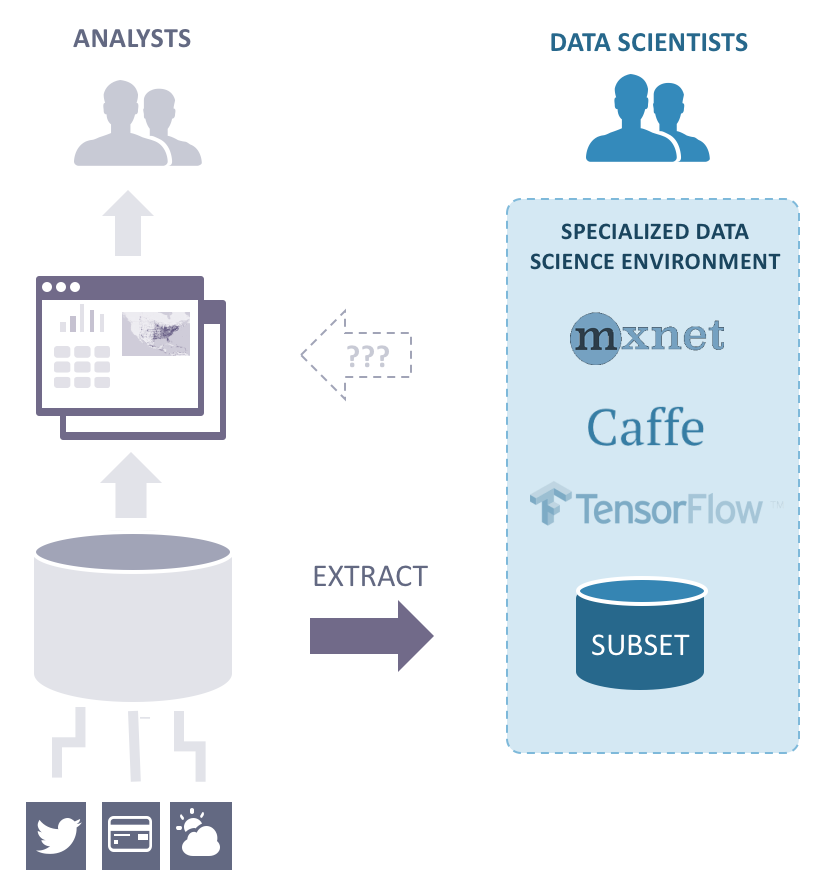

Converging big data, AI, and business intelligence

Although different GPU-based database and data analytics solutions offer different capabilities, all are designed to be complementary to or integrated with existing applications and platforms. Most GPU-accelerated AI databases have open architectures, which allow you to integrate machine learning models and libraries, such as TensorFlow, Caffe, and Torch. They also support traditional relational database standards and interfaces, such as SQL-92 and ODBC/JDBC. Data scientists are able to create custom user-defined functions to develop, test, and train simulations and algorithms using published APIs. Converging data science with business intelligence into one database allows you to meet the criteria necessary for AI workloads, including compute, throughput, data management, interoperability, security, elasticity, and usability.
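
As a rough illustration of the user-defined function idea above, the sketch below registers a Python function with a SQL engine and then calls it from an ordinary query. It assumes Python's built-in `sqlite3` module purely as a stand-in; it is not a GPU-accelerated database, and the `churn_score` function, its coefficients, and the `customers` table are hypothetical names invented for this example rather than anything from the article.

```python
import math
import sqlite3


def churn_score(monthly_spend: float, support_tickets: int) -> float:
    """Hypothetical stand-in for a trained model: a fixed logistic score."""
    z = 0.8 * support_tickets - 0.02 * monthly_spend
    return 1.0 / (1.0 + math.exp(-z))


def score_customers(rows):
    """Load rows into an in-memory table and score them through plain SQL."""
    conn = sqlite3.connect(":memory:")
    try:
        # Register the Python function so SQL queries can call it by name.
        conn.create_function("churn_score", 2, churn_score)
        conn.execute(
            "CREATE TABLE customers "
            "(name TEXT, monthly_spend REAL, support_tickets INTEGER)"
        )
        conn.executemany("INSERT INTO customers VALUES (?, ?, ?)", rows)
        cursor = conn.execute(
            "SELECT name, churn_score(monthly_spend, support_tickets) AS score "
            "FROM customers ORDER BY score DESC"
        )
        return cursor.fetchall()
    finally:
        conn.close()


if __name__ == "__main__":
    sample = [("acme", 120.0, 0), ("globex", 40.0, 5)]
    for name, score in score_customers(sample):
        print(f"{name}: {score:.3f}")
```

The point of the pattern is that a business intelligence tool issuing standard SQL can consume the model's output without knowing how the score is computed; a real product would expose its own registration API for this.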

Olympic Games Face Greater Cybersecurity Risks

While most past attacks on sporting events centered on IT systems at stadiums and on ticket sales and operations, future cyberattacks at the Olympics may occur in eight key areas, says Cooper. The areas include cyberattacks to facilitate terrorism and kidnappings and panic-induced stampedes; altering scoring systems; changing photo and video replay equipment; tampering with athlete care food dispensing systems; infiltrating monitoring equipment; tampering with entry systems; and interfering with transportation systems. "I was surprised to learn there are instances where human decisions are overridden by technology," Cooper said, in reference to a growing reliance on using technology to make the first call in a sporting event, rather than a human referee. She pointed to the reliance on electronic line-calling technology such as Hawk-Eye, which is used in sports such as tennis.

Why Machine Learning and Why Now?

Although machine learning has already matured to the point where it should be a vital part of organizations’ strategic planning, several factors could limit its progress if leaders don’t plan carefully. These limitations include the quality of data, the abilities of human programmers, and cultural resistance to new ways of working with machines. However, the question is when, not if, today’s data analysis methods become quaint relics of earlier times. This is why organizations must begin experimenting with machine learning now and take the necessary steps to prepare for its widespread use over the coming years. What is driving this inexorable march toward a world that was largely confined to cheesy sci-fi novels just a few decades ago? Advances in artificial intelligence, of which machine learning is a subset, have a lot to do with it.

Creating a Strategy That Works

Distinctive capabilities are not easy to build. They are complex and expensive, with high fixed costs in human capital, tools, and systems. How then do businesses such as IKEA, Natura, and Danaher design and create the capabilities that give them their edge? How do they bring these capabilities to scale and generate results? To answer these questions, we conducted a study between 2012 and 2014 of a carefully selected group of extraordinary enterprises that were known for their proficiency, for consistently doing things that other businesses couldn’t do. From dozens suggested to us by industry experts, we chose a small group, representing a range of industries and regions, that we could learn about in depth — either from published materials or from interviews with current and former executives.

Understanding the hidden costs of virtualisation

Today, data underpins business continuity, and user expectations for server uptime are therefore higher than ever before. More than ever, the prospect of downtime is punishing for a company’s reputation and bottom line, meaning it must be avoided. This places added pressure on IT administrators to keep all machines up and running. Ideally, a fully dynamic and optimised infrastructure is achieved by an IT admin carefully running through a checklist or policy each time a new virtual machine (VM) is “spun up”. In reality, IT administrators are extremely strapped for time and can no longer afford to go through checklists manually. Instead, they are spending their resources on keeping the data centre lights on by ensuring users have access to the data and files they need to keep the business moving forward.

Much GDPR prep is a waste of time, warns PwC

Although some organisations claim to be following a risk-based approach to GDPR compliance, Room said that if that activity is not “anchored to a taxonomy of risk”, the activity is “purposeless”, and purposeless activity is one of the quickest ways of being hit by enforcement action. For organisations that have not done any GDPR preparation with just seven months to go before the compliance deadline of 25 May 2018, Room said the biggest risk is that all the third-party service providers that could help have already been snapped up and are working to capacity. In addition to legislative compliance risk, there is also the risk of failing to deliver a GDPR programme, he said, as well as regulator risk, because the Information Commissioner’s Office and all the other EU data protection authorities also form part of the spectrum of risks.

Quote for the day:

"The final test of a leader is that he leaves behind him in other men, the conviction and the will to carry on." -- Walter Lippmann