Quote for the day:

"A leader has the vision and conviction that a dream can be achieved. He inspires the power and energy to get it done." -- Ralph Nader

Job disruption by AI remains limited — and traditional metrics may be missing the real impact

This Computerworld article explores the current state of artificial intelligence in the workforce. Despite widespread alarm, data from Challenger, Gray & Christmas indicates that AI accounted for roughly 8 to 10 percent of job cuts in early 2026. Researchers from Anthropic argue that traditional metrics fail to capture the nuances of AI integration, and they introduce an "observed exposure" methodology. This technique combines theoretical large language model capabilities with actual usage data, revealing that while certain roles, such as computer programmers and customer service representatives, have high exposure to automation, actual deployment lags significantly behind technical potential. Currently, AI functions primarily as a tool for task-based augmentation rather than full-scale replacement, which enhances worker productivity but complicates entry-level hiring. The report suggests that while immediate mass unemployment hasn't materialized, the long-term impact will require a fundamental re-engineering of workflows. This shift may disproportionately affect younger workers as companies struggle to balance AI efficiency with the necessity of maintaining a pipeline of human talent. Ultimately, the transition necessitates a strategic realignment of human roles to ensure sustainable growth in an intelligence-native era.

Why Password Audits Miss the Accounts Attackers Actually Want

This article on BleepingComputer highlights a critical disconnect between standard compliance-driven password audits and the actual tactics used by cybercriminals. While traditional audits prioritize technical requirements like complexity and rotation, they often overlook the context that makes an account vulnerable. For instance, a password can be statistically "strong" yet already compromised in a previous breach; research indicates that 83% of leaked passwords still meet regulatory standards. Furthermore, audits frequently neglect "orphaned" accounts belonging to former employees or contractors, which provide silent entry points for attackers. Service accounts, often over-privileged and exempt from expiry policies, represent another major blind spot. The piece argues that point-in-time snapshots are insufficient against continuous threats like credential stuffing. To be truly effective, security teams must shift toward continuous monitoring, incorporating breached-password screening and risk-based prioritization. By expanding the scope to include dormant, external, and service accounts, organizations can move beyond mere compliance to address the high-value targets that attackers prioritize. Ultimately, securing a digital environment requires recognizing that a compliant password is not necessarily a safe one in the face of modern, targeted exploitation.
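
As a rough illustration of the risk-based prioritization described above, the sketch below ranks accounts by context rather than by password complexity alone. The `Account` fields, the score weights, and the 90-day dormancy threshold are hypothetical choices made for this example, not values from the article, and the `breached_hashes` set stands in for whatever breached-password corpus a team actually screens against.

```python
from dataclasses import dataclass
from datetime import date
import hashlib


@dataclass
class Account:
    name: str
    password_sha1: str      # lookup key for the example only, not a storage recommendation
    last_login: date
    owner_active: bool      # False for former employees or contractors
    is_service: bool
    password_expires: bool


def sha1_hex(password: str) -> str:
    """Hash a password so it can be compared against a breached-hash set."""
    return hashlib.sha1(password.encode("utf-8")).hexdigest()


def risk_score(acct: Account, breached_hashes: set, today: date,
               dormant_days: int = 90) -> int:
    """Add up illustrative weights for each blind spot the audit would miss."""
    score = 0
    if acct.password_sha1 in breached_hashes:
        score += 5          # compliant-looking password that is already leaked
    if not acct.owner_active:
        score += 4          # orphaned account
    if acct.is_service and not acct.password_expires:
        score += 3          # service account exempt from expiry
    if (today - acct.last_login).days > dormant_days:
        score += 2          # dormant account
    return score


def prioritize(accounts, breached_hashes, today):
    """Return (score, name) pairs for risky accounts, highest score first."""
    scored = [(risk_score(a, breached_hashes, today), a.name) for a in accounts]
    return sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))


today = date(2026, 3, 1)
breached = {sha1_hex("Summer2024!")}
accounts = [
    Account("alice", sha1_hex("x9$Lq!vT2#pZ"), date(2026, 2, 27), True, False, True),
    Account("bob-contractor", sha1_hex("Summer2024!"), date(2025, 6, 1), False, False, True),
    Account("svc-backup", sha1_hex("k3Y-r0tate-me"), date(2026, 2, 28), True, True, False),
]
ranking = prioritize(accounts, breached, today)
```

In this made-up data set, the contractor account surfaces first even though its password would pass a complexity check, which is the gap the article describes. Running such a check on a schedule, rather than once per audit cycle, would be one way to approximate continuous monitoring.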

AI is supercharging cloud cyberattacks - and third-party software is the most vulnerable

The latest Google Cloud Threat Report, as analyzed by ZDNET, highlights a significant escalation in cybersecurity risks where artificial intelligence is increasingly being used to "supercharge" cloud-based attacks. The report reveals a dramatic collapse in the window between the disclosure of a vulnerability and its mass exploitation, shrinking from weeks to mere days. Rather than targeting the highly secured core infrastructure of major cloud providers, threat actors are now focusing their efforts on unpatched third-party software and code libraries. This shift emphasizes that the modern supply chain remains a critical weak point for many organizations. Furthermore, the report notes a transition away from traditional brute force attacks toward more sophisticated identity-based compromises, including vishing, phishing, and the misuse of stolen human and non-human identities. Data exfiltration is also evolving, with "malicious insiders" increasingly using consumer-grade cloud storage services to move confidential information outside the corporate perimeter. To combat these AI-powered threats, Google’s experts recommend that businesses adopt automated, AI-augmented defenses, prioritize immediate patching of third-party tools, and strengthen identity management protocols. Ultimately, the report serves as a stark warning that in the current threat landscape, speed and automation are no longer optional but essential components of a robust cybersecurity strategy.

Change as Metrics: Measuring System Reliability Through Change Delivery Signals

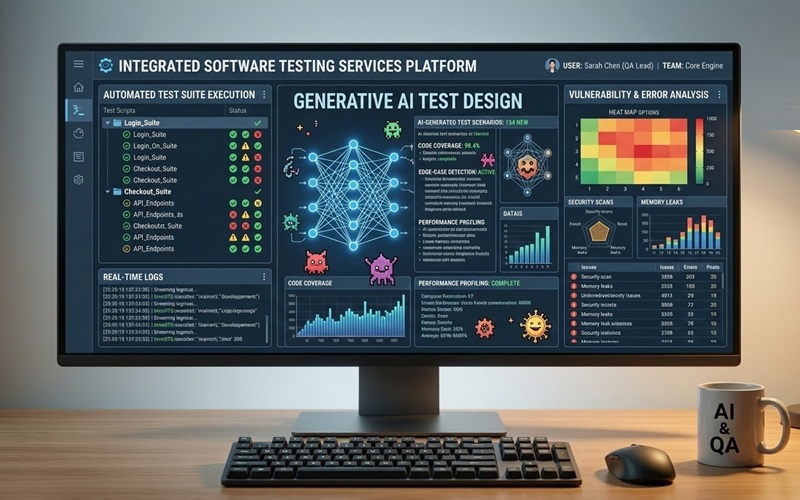
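
The framework summarized in this entry rests on three business metrics: Change Lead Time, Change Success Rate, and Incident Leakage Rate. The following is a minimal sketch of how they might be computed from a list of change events. The summary does not give formulas, so the definitions here are assumptions: lead time is measured from change creation to deployment, success means the deployment itself did not fail, and leakage counts successfully deployed changes that were later linked to an incident. The `ChangeEvent` type and its field names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class ChangeEvent:
    change_id: str
    created_at: datetime
    deployed_at: datetime
    deploy_failed: bool      # immediate deployment failure
    caused_incident: bool    # latent defect surfaced as a post-release incident


def change_metrics(events):
    """Compute the three metrics under the assumed definitions above."""
    if not events:
        raise ValueError("need at least one change event")
    lead_hours = [
        (e.deployed_at - e.created_at).total_seconds() / 3600 for e in events
    ]
    succeeded = [e for e in events if not e.deploy_failed]
    leaked = [e for e in succeeded if e.caused_incident]
    return {
        "median_lead_time_hours": median(lead_hours),
        "change_success_rate": len(succeeded) / len(events),
        "incident_leakage_rate": len(leaked) / len(succeeded) if succeeded else 0.0,
    }


base = datetime(2026, 1, 5, 9, 0)
events = [
    ChangeEvent("c1", base, base + timedelta(hours=2), False, False),
    ChangeEvent("c2", base, base + timedelta(hours=4), True, False),
    ChangeEvent("c3", base, base + timedelta(hours=6), False, True),
    ChangeEvent("c4", base, base + timedelta(hours=8), False, False),
]
metrics = change_metrics(events)
```

Keeping deployment failures and post-release incidents as separate fields on each event is what lets the success rate and the leakage rate be reported independently, which mirrors the distinction the framework draws between immediate failures and latent defects.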

This article highlights that system changes account for the vast majority of production incidents, necessitating their treatment as primary reliability indicators. To manage this risk, the author proposes a framework centered on three core business metrics: Change Lead Time, Change Success Rate, and Incident Leakage Rate. While aligned with DORA principles, this model specifically focuses on delivery quality by distinguishing between immediate deployment failures and latent defects that manifest as post-release incidents. To operationalize these goals, technical control metrics such as Change Approval Rate, Progressive Rollout Rate, and Change Monitoring Windows are introduced to provide actionable insights into pipeline friction and risk. The piece further advocates for a platform-agnostic, event-centric data architecture to collect these signals across diverse, distributed environments. This centralized approach avoids the brittleness of platform-specific logging and provides a unified view of system health. Ultimately, the framework empowers organizations to transform change management from a reactive necessity into a proactive, measurable engineering capability. By integrating these metrics, development teams can effectively balance the need for high-speed delivery with the imperative of system stability, ensuring that rapid innovation does not come at the expense of user experience or operational reliability.

The future of generative AI in software testing

In this article on Techzine, experts Hélder Ferreira and Bruno Mazzotta discuss the transformative shift of AI from a simple task accelerator to a fundamental structural layer within delivery pipelines. As global IT investment in AI is projected to surge toward $6.15 trillion by 2026, the software testing landscape is evolving beyond early challenges like hallucinations and "vibe coding" toward a sophisticated "quality intelligence layer." The authors outline four critical areas where AI adds strategic value: generating complex scenario-based datasets, suggesting high-risk exploratory prompts, automating defect triage to identify regression patterns, and enabling context-aware execution that prioritizes testing based on actual risk rather than volume. Crucially, the piece argues that while AI can significantly enhance velocity, sustainable success depends on maintaining "humans-in-the-loop" to ensure traceability and accountability. In this new era, the primary differentiator for enterprises will not be the sheer amount of AI deployed, but the effectiveness of their governance frameworks. By linking intent with execution and using AI as connective tissue across the lifecycle, organizations can achieve a balance where rapid delivery is supported by explainable automation and human-verified confidence in software quality.

CIOs cut IT corners to manufacture budget for AI

In this CIO.com article, author Esther Shein examines the aggressive strategies IT leaders are employing to fund artificial intelligence initiatives amidst stagnant overall budgets. Faced with intense pressure from boards and executive leadership to prioritize AI, many CIOs are being forced to make difficult trade-offs that jeopardize long-term stability. Common tactics include delaying non-critical infrastructure refreshes, such as server expansions and network improvements, which are often pushed out by twelve to eighteen months. Additionally, organizations are aggressively consolidating vendors, renegotiating contracts, and cutting legacy software subscriptions to free up capital. Some leaders have even implemented strict "self-funding" mandates where every new AI project must be offset by equivalent cuts elsewhere. Beyond technical sacrifices, the human element is also affected, with many departments reducing reliance on contractors or trimming internal staff to reallocate funds toward high-impact AI use cases. While these measures enable rapid deployment, they frequently lead to the accumulation of technical debt and a narrower scope for implementations. Ultimately, the piece warns that while these "corners" are being cut to fuel innovation, the resulting lack of focus on foundational maintenance could present significant operational risks in the future.

Beyond Prompt Injection: The Hidden AI Security Threats in Machine Learning Platforms

In the article "Beyond Prompt Injection: The Hidden AI Security Threats in Machine Learning Platforms," the focus of AI security shifts from headline-grabbing prompt injections to the critical vulnerabilities within MLOps infrastructure. While many security teams prioritize protecting chatbots from manipulation, the underlying platforms used to train and deploy models often present a far more dangerous attack surface. Through a red team engagement, researchers demonstrated how a simple self-registered trial account could be used to achieve remote code execution on a provider’s cloud infrastructure. By deploying a seemingly legitimate but malicious machine learning model, attackers can exploit the fact that these platforms must execute arbitrary code to function. The study highlights a significant risk: once RCE is achieved, weak network segmentation can allow adversaries to bypass trust boundaries and access sensitive internal databases or services. This effectively turns a managed ML environment into a gateway for lateral movement within a corporate network. To mitigate these threats, the article stresses that organizations must move beyond model-centric security and adopt robust infrastructure protections, including strict network isolation, continuous behavior monitoring, and a "zero-trust" approach to user-deployed artifacts, ensuring that the convenience of rapid AI development does not come at the cost of total system compromise.

Enterprise agentic AI requires a process layer most companies haven’t built

The VentureBeat article emphasizes that while 85% of enterprises aspire to implement agentic AI within the next three years, a staggering 76% acknowledge that their current operations are fundamentally unequipped for this transition. The core issue lies in the absence of a "process layer," a critical foundation of optimized workflows and operational intelligence that provides AI agents with the necessary context to function effectively. Without this layer, agents are essentially "guessing," leading to a lack of reliability that causes 82% of decision-makers to fear a failure in return on investment. The piece argues that the primary hurdle is not merely technological but rather rooted in organizational structure and change management. Most companies suffer from siloed data and fragmented processes that hinder the seamless integration of autonomous systems. To overcome these barriers, businesses must prioritize process optimization and operational visibility, ensuring that AI-driven initiatives are linked to strategic executive outcomes. Simply layering advanced AI over inefficient, legacy frameworks will likely result in costly friction. Ultimately, for agentic AI to move beyond experimental pilots and deliver scalable value, organizations must first build a robust architectural bridge that connects sophisticated models with the complex, real-world logic of their daily business operations and high-stakes organizational decision cycles.

Building resilient foundations for India’s expanding Data Centre ecosystem

In "Building resilient foundations for India's expanding Data Centre ecosystem," Saurabh Verma explores the rapid evolution of India’s data infrastructure and the urgent necessity of prioritizing long-term resilience over mere capacity. As cloud adoption and 5G accelerate growth across hubs like Mumbai, Chennai, and Hyderabad, the sector faces escalating challenges that demand a sophisticated understanding of risk management. The article argues that modern data centres are no longer just IT assets but critical infrastructure whose failure directly impacts the digital economy. Beyond physical damage, business interruptions often result in massive financial losses, contractual penalties, and significant reputational harm. Climate change has emerged as a significant operational reality, with heatwaves and flooding stressing cooling systems and electrical grids. Furthermore, the convergence of cyber and physical risks means that digital disruptions can quickly translate into tangible infrastructure damage. Construction complexities and logistical interdependencies further amplify potential losses, making early risk engineering essential for success. Ultimately, the piece emphasizes that resilience must be a core design pillar rather than an afterthought. By integrating disciplined risk management from site selection through operations, Indian providers can gain a commercial advantage, securing better investment and insurance terms while building a sustainable, trustworthy backbone for the nation’s digital future.

CVE program funding secured, easing fears of repeat crisis

The Common Vulnerabilities and Exposures (CVE) program has successfully secured stable funding, alleviating industry-wide fears of a repeat of the 2025 crisis that nearly crippled global vulnerability tracking. As detailed in the CSO Online report, the Cybersecurity and Infrastructure Security Agency (CISA) and the MITRE Corporation have renegotiated their contract, transitioning the 26-year-old program from a discretionary expenditure to a protected line item within CISA's budget. This structural change effectively eliminates the "funding cliff" that previously required a last-minute emergency extension. While CISA leadership emphasizes that the program is now fully funded and evolving, some experts note that the specifics of the "mystery contract" remain opaque. The resolution comes at a critical time, as the cybersecurity community had already begun developing contingencies, such as the independent CVE Foundation, to reduce reliance on a single government source. Despite the financial stability, challenges regarding transparency, modernization, and international governance persist. The article underscores that while the immediate threat of a service lapse has faded, the incident served as a stark reminder of the global security ecosystem's fragility. Moving forward, the focus shifts toward ensuring this essential public resource remains resilient against future political or administrative shifts within the United States government.
