Things happen in the real world that don’t happen in your test environment. What does that mean from a QA perspective? We did everything we were supposed to do in the training phase and our AI model met expectations, but it is failing in the “inference” phase, when the AI model is operationalized. This means we need a QA approach for AI models in production. Problems that arise with AI models in the inference phase are almost always issues of data. We know the algorithm works. We know that our training data and hyperparameters were configured to the best of our ability. That means that when AI models are failing we have data problems. Is the input data bad? If the problem is bad data, fix it. Is the AI model not generalizing well? Is there some nuance of the data that needs to be added to the training set? If the answer is the latter, we need to go through a whole new cycle of developing an AI model, with new training data and hyperparameter configurations, to reach the right level of fitting to that data.
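One way to make the "is the input data bad?" question concrete is to compare production inputs with the training data for a single numeric feature. The sketch below is only an illustration and is not from the quoted article: the function names `drift_score` and `has_drifted` and the 0.5 threshold are hypothetical, and a real QA process would likely use a proper statistical test.

```python
from statistics import mean, stdev


def drift_score(train_values, live_values):
    """Distance between the live mean and the training mean,
    measured in training standard deviations (illustrative metric)."""
    spread = stdev(train_values)
    shift = abs(mean(live_values) - mean(train_values))
    if spread == 0:
        # Constant training feature: any movement at all counts as unbounded drift.
        return 0.0 if shift == 0 else float("inf")
    return shift / spread


def has_drifted(train_values, live_values, threshold=0.5):
    """Flag the feature when the live mean moved more than `threshold`
    training standard deviations. The threshold is an assumed value."""
    return drift_score(train_values, live_values) > threshold


if __name__ == "__main__":
    train = [1.0, 2.0, 3.0, 4.0, 5.0]
    live = [11.0, 12.0, 13.0, 14.0, 15.0]
    print("drift detected:", has_drifted(train, live))
```

A flagged feature points toward the "bad or shifted input data" branch; if inputs look like the training data and the model still fails, the generalization branch is the more likely one.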

TLS 1.3: A Good News/Bad News Scenario

While TLS 1.3 enables much better end-to-end privacy, it can break existing security controls in enterprise networks that rely on the ability to decrypt traffic in order to perform deep-packet inspection to look for malware and evidence of malicious activity. Well-known examples of those security controls include next-generation firewalls, intrusion prevention systems, sandboxes, network forensics, and network-based security analytics products. These security controls rely on access to a static, private key in order to decrypt traffic for inspection. The use of such keys is replaced in TLS 1.3 by the requirement to use the Diffie-Hellman Ephemeral perfect forward secrecy key exchange. That exchange occurs for each session or conversation that is established between endpoints and servers. In addition, the certificate itself is encrypted, which denies those tools access to valuable metadata for additional analysis. The ephemeral key exchange is not new to TLS. TLS 1.2 also included it as an option.
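To see what this looks like from the client side, here is a minimal sketch using Python's standard `ssl` module to build a context that refuses anything older than TLS 1.3, so the static RSA key exchange that inspection tools depend on can never be negotiated. The helper name `tls13_only_context` is hypothetical, and the sketch assumes a Python build linked against an OpenSSL version with TLS 1.3 support.

```python
import ssl


def tls13_only_context():
    """Return a client-side SSLContext that only accepts TLS 1.3.

    Assumes the interpreter's OpenSSL supports TLS 1.3 (ssl.HAS_TLSv1_3).
    """
    if not ssl.HAS_TLSv1_3:
        raise RuntimeError("this Python build has no TLS 1.3 support")
    ctx = ssl.create_default_context()  # keeps certificate verification on
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx


if __name__ == "__main__":
    ctx = tls13_only_context()
    print("minimum TLS version:", ctx.minimum_version.name)
```

Passing this context to `ssl.SSLContext.wrap_socket` (or to an HTTP client that accepts an `SSLContext`) makes connections to servers that only offer TLS 1.2 or older fail during the handshake instead of silently downgrading.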

Who's Responsible When IT Goes Awry?

"IT professionals tend to be pleasers. They say 'yes' to a lot of things when they should say 'no,'" said Dave Gartenberg, chief HR officer at professional services firm Avanade. "Sometimes they'll agree to do something with less budget or less line leader involvement in order to be helpful. You'll see a lot of projects moving forward with the best of intentions when in fact anyone on the outside looking in can see it would never stand a chance. I hold the IT leaders accountable for making sure from the start the conditions for success were contracted internally." Peter Kraatz, portfolio manager of Cloud and Data Center Transformation Consulting at IT solutions services provider Insight Enterprises, said the lack of governance also contributes to IT issues. "IT has to own the mechanical bits of governance: Who's got what role, who's going to pull what triggers and when. Why we're doing them is something that's owned by the business," said Kraatz. "The business has to tell us when we're running out of budget on Amazon or we've got the wrong workloads. I think we're allergic to talking to one another."

Raspberry Pi-style Jetson Nano is a powerful low-cost AI computer from Nvidia

Nvidia released a series of benchmarks showing the Jetson Nano outperforming competitors when running various computer vision models. The results show the Jetson Nano beating the $35 Raspberry Pi 3 (no mention of the model), the Pi 3 with a $90 Intel Neural Compute Stick 2, and the newly released Google Coral board that uses the Edge TPU (Tensor Processing Unit). These tests involved running a range of computer vision models carrying out object detection, classification, pose estimation, segmentation, and image processing. Specifically, the Jetson showed superior performance when running inference on trained ResNet-18, ResNet-50, Inception V4, Tiny YOLO V3, OpenPose, VGG-19, Super Resolution, and Unet models. The Jetson Nano was the only board able to run many of the machine-learning models, and where the other boards could run the models, the Jetson Nano generally offered many times the performance of its rivals. Nvidia's senior manager of product for autonomous machines, Jesse Clayton, told TechRepublic's sister site ZDNet that Jetson Nano's GPU could run a broader range of machine-learning models than the specialist silicon found in Google's Edge TPU.

Terrified Of The Internet, Putin Signs Laws Making It Illegal To Criticize

Russia's efforts to clamp down on anything resembling free speech on the internet continue unabated. Putin's government has spent the last few years effectively making VPNs and private messenger apps illegal. While the government publicly insists the moves are necessary to protect national security, the actual motivators are the same old boring ones we've seen here in the States and elsewhere around the world for decades: fear and control. Russia doesn't want people privately organizing, discussing, or challenging the government's increasingly authoritarian global impulses. After taking aim at VPNs, Putin signed two new bills this week that dramatically hamper speech, especially online. One law takes aim at the nebulous concept of "fake news," specifically punishing any online material that "exhibits blatant disrespect for the society, government, official government symbols, constitution or governmental bodies of Russia." In other words, Russia wants to ban criticism of Putin and his corrupt government.

Stanford Aims to Make Artificial Intelligence More Human

First, ensuring as best we can that the advancement of artificial intelligence ends up serving the interests of human beings, and not displacing or undermining human interests. The essential thing is to ensure that as machines become more and more intelligent and are capable of carrying out more and more complicated tasks that otherwise would have to be done by human beings, that the role we give to machine intelligence supports the goals of human beings and the values we have in the communities we live in, rather than step-by-step displacing what humans do. Second, the bet that the institute is making here at Stanford is that the advancement of artificial intelligence will happen in a better way if, instead of just putting technologists and AI scientists in the lab and having them work really hard, we do it in partnership with humanists and social scientists. So the familiar role of the social scientist or philosopher is that the technologists do their thing and then we study it out in the wild; the economist measures the effects of technology and the disruption it has, or the philosopher tries to worry about the values that are disrupted in some way by technology.

How AI Can Transform Customer Experience By Listening Better to the Voice of Customers

While financial dealings, business transactions, and operational updates can be quantified and computed upon, the same cannot be said of human interactions. With natural language being the free-flowing mode of communication amongst people, the spoken and written words contain a treasure trove of information. And today, this remains largely under-leveraged. Whether it is periodic customer surveys, chatter on social media, feedback on review websites, interactions through contact centers, or ongoing communications with customer service professionals, all these touch-points are peppered with vital clues that can help answer the million-dollar question, “What do customers really want?” However, many enterprises use archaic approaches to customer survey and digital listening programs. Textual feedback from these programs is often subjected to superficial text analytics that don’t go beyond simple text summaries, frequency counts of words, or naive sentiment analysis. These squander valuable customer signals, falling short on intelligence and actionability.

The Future of A.I. Isn’t Quite Human

At first glance, an A.I. brought to life on the red carpet may feel jarring. But A.I. is already operating in many aspects of our lives: It controls your Facebook news feed, it helps make your salad, and it opposes you in video games. And while a fleet of Protoss carriers gliding across a choke point in Starcraft II may appear less “real” than Shudu in her gown on a real red carpet — or the virtual avatars created by Facebook and spotlighted in a Wired feature last week — cutting-edge work happening behind the scenes in these virtual worlds may actually say quite a bit more about an emerging universe of the almost-human, where the line between person and machine blurs. After all, Google wouldn’t spend upwards of $500 million on nothing. The company’s DeepMind property uses advanced algorithmic learning to mimic and surpass human play style in games, but that’s nothing compared to what’s coming. “This is not going away,” Morgan Young, the CEO and co-founder of Quantum Capture who worked on Shudu’s BAFTAs project, tells OneZero. “This is just the beginning of how powerful characters can be when they’re combined with A.I.”

Cyber Crime Competes Against the Good Guys for Talent

One factor that has benefited cyber crime is the professionalization of the threat space. Previously more disparate, the underground now functions very much like legitimate businesses operating under a “supply and demand” philosophy. Product/service competition and as-a-service offerings fuel the growth of the maturing marketplace. This forces developers and sellers to provide quality merchandise at competitive prices. An aggressive marketing strategy helps gain market share, with favorable reviews from customers and forum administrators providing corroboration of product utility and the bona fides of sellers. It is common for sellers to offer 24×7 help support, as well as customizable features, to prospective customers. Moreover, the goods and services provided in the underground are not exclusively tailored for experienced cyber crime actors. Some products target inexperienced customers, thereby lowering the bar to entry into cyber criminal operations. This allows anyone who can pay the price to engage in hostile activities, either on their own via user-friendly graphical user interfaces, or by simply paying for the service and hiring “professionals” to do the job.

Mirai Botnet Code Gets Exploit Refresh

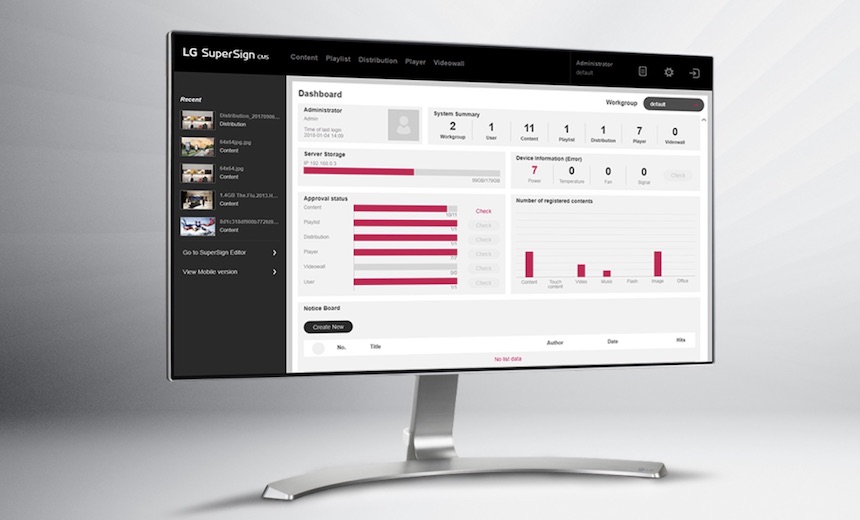

In the latest version of Mirai, meanwhile, Palo Alto's Nigam says researchers found two unexpected exploits: one for the WePresent WiPG-1000 Wireless Presentation system and another for a content management system developed by LG to manage screen-based signage. Neither of the exploits had been seen in the wild before. Both types of software are most likely to be used by businesses. "In particular, targeting enterprise links also grants it access to larger bandwidth, ultimately resulting in greater firepower for the botnet for DDoS attacks," Nigam writes. The exploit for LG targets a vulnerability (CVE-2018-17173) in its LG SuperSign EZ CMS 2.5, which ships as part of LG's WebOS operating system in smart TVs. The vulnerability was disclosed in September 2018. The exploit in WePresent attacks a command injection vulnerability. The vulnerability was contained within several versions of software in WePresent WiPG-1000 devices, which are wireless routers designed for screen sharing. Barco, the device's developer, has patched the vulnerability.

Organizations need to make mobile security a priority in 2019

The challenge is that Wi-Fi relies on mostly insecure protocols and standards, which makes networks easy to impersonate and traffic easy to intercept, mislead, and redirect. This can be done regardless of how new or updated your device is; it depends only on how the underlying Wi-Fi infrastructure works. Sometimes you don’t even need to take any action for an attack to be perpetrated against you. Do you remember that Wi-Fi network you connected to while having lunch the other day? In order to make your life easier, your device will connect to it automatically if it recognizes the network. It doesn’t even have to be the same network; it just has to claim to be. From over in the corner, the hacker effortlessly hijacks your session, captures your credentials, delivers a targeted exploit, and assumes full control of every function on your smartphone, including those that log in to your company’s Wi-Fi and send emails in your name. This year, Zimperium attended Mobile World Congress (MWC) in Barcelona and RSA in San Francisco; the attendance for the two shows combined was more than 150,000 executives, salespeople, media, etc.

Quote for the day:

The essential question is not, "How busy are you?" but "What are you busy at?" -- Oprah Winfrey
