What is Keras? The deep neural network API explained

Keras was created to be user friendly, modular, easy to extend, and to work with Python. The API was “designed for human beings, not machines,” and “follows best practices for reducing cognitive load.” Neural layers, cost functions, optimizers, initialization schemes, activation functions, and regularization schemes are all standalone modules that you can combine to create new models. New modules are simple to add, as new classes and functions. Models are defined in Python code, not separate model configuration files. The biggest reasons to use Keras stem from its guiding principles, primarily the one about being user friendly. Beyond ease of learning and ease of model building, Keras offers the advantages of broad adoption, support for a wide range of production deployment options, integration with at least five back-end engines (TensorFlow, CNTK, Theano, MXNet, and PlaidML), and strong support for multiple GPUs and distributed training. Plus, Keras is backed by Google, Microsoft, Amazon, Apple, Nvidia, Uber, and others.
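To make the "standalone modules you can combine" point concrete, here is a minimal sketch. It assumes a recent Keras release importable as `keras`; the backend is whatever your install is configured to use. The 20-feature input, the layer sizes, and the random training data are illustrative placeholders, not anything from the original article. Each commented component (layer, initializer, regularizer, activation, optimizer, loss) is a separate module that can be swapped without touching the rest.

```python
import numpy as np
import keras
from keras import layers

# Assemble a model from independent building blocks.
model = keras.Sequential([
    keras.Input(shape=(20,)),                              # 20 input features (placeholder)
    layers.Dense(
        64,
        activation="relu",                                 # activation function
        kernel_initializer="he_normal",                    # initialization scheme
        kernel_regularizer=keras.regularizers.l2(1e-4),    # regularization scheme
    ),
    layers.Dropout(0.2),                                   # another swappable layer
    layers.Dense(1, activation="sigmoid"),                 # binary output
])

model.compile(
    optimizer=keras.optimizers.Adam(learning_rate=1e-3),   # optimizer
    loss=keras.losses.BinaryCrossentropy(),                # cost function
    metrics=["accuracy"],
)

# Random placeholder data, only to show the define/compile/fit/predict workflow.
rng = np.random.default_rng(0)
x = rng.normal(size=(128, 20)).astype("float32")
y = (x[:, :1] > 0).astype("float32")                       # shape (128, 1) labels

model.fit(x, y, epochs=2, batch_size=32, verbose=0)
preds = model.predict(x[:4], verbose=0)                    # probabilities, shape (4, 1)
```

Replacing `keras.optimizers.Adam` with `keras.optimizers.SGD`, or `keras.regularizers.l2` with `keras.regularizers.l1`, is a one-line change; that interchangeability is what the modularity principle buys you.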

How do you best talk to your board about cybersecurity?

Boards are maturing in both their interest in and their understanding of cybersecurity. They are now asking much more specific questions, particularly as they seek to deepen that understanding. In conducting this research, we had the pleasure of working with board members who have been part of this security journey. We wanted to understand where the gap is for them and how we can help close it. One of the key problems in communicating security to any stakeholder group (including boards) is that we (security pros) assume we know what our audience wants and proceed to throw information at them on our own terms. But because our expertise typically lies in technology, not human psychology or communication, our assumptions about what they want are often far removed from reality. We rarely take the time to ask, for fear of appearing stupid. In this research, we did just that: We asked. As a result, we had the opportunity to understand board members’ journeys through the murky, often technical, and confusing waters of cybersecurity.

The internet of human things: Implants for everybody and how we get there

Let me be clear on my motivation for wanting to make wearables -- and eventually implants -- our default method of brick-and-mortar payment: I hate physical wallets. I don't like dragging around a thick hunk of cow hide filled with a bunch of credit cards I don't use that often. Then there are loyalty program cards and the various IDs I carry, such as my license, several types of permits, and the medical insurance and drug plan stuff I need when I pick up prescriptions. Have you ever lost your wallet or had it stolen? Or your keys? The amount of work it takes to get your life back in order is ridiculous. How many of you do the paranoid "life check" triple play every day for your wallet, keys, and smartphone? When I am traveling, I might do that three times a day, easy. So now, let us imagine a future where you don't have to walk around carrying cow hide stuffed with plastic cards and cash. A future without lost wallets and the disruption that ensues. A future where many of us can leave our homes every day with literally nothing on our person except a smartphone and perhaps a wearable device.

Panasonic IoT strategy is all about big data analytics

It's all about pain points. What's the problem that you're trying to solve? It may sound like an easy question, but believe it or not, the answers are really difficult. First, getting your middle managers or middle-ranked individuals to speak about pain points is difficult, because they see that as an admission of some guilt. Getting them out of that mode and into a comfort zone where they can openly talk about pain points is really challenging. You can get a set of pain points from the top-level executives, but you need some level of granularity on those pain points. Without that granularity, you're unable to pinpoint the specifics and recommend a solution. So what we have done, for instance in our industrial manufacturing operations, is send people onto the manufacturing floors: we talk to executives, we talk to engineers, we take a third-party view of what the problems are, identify these pains, and then try to prioritize them by the return we would get from addressing each one.

Giving algorithms a sense of uncertainty could make them more ethical

The algorithm could handle this uncertainty by computing multiple solutions and then giving humans a menu of options with their associated trade-offs, Eckersley says. Say the AI system was meant to help make medical decisions. Instead of recommending one treatment over another, it could present three possible options: one for maximizing patient life span, another for minimizing patient suffering, and a third for minimizing cost. “Have the system be explicitly unsure,” he says, “and hand the dilemma back to the humans.” Carla Gomes, a professor of computer science at Cornell University, has experimented with similar techniques in her work. In one project, she’s been developing an automated system to evaluate the impact of new hydroelectric dam projects in the Amazon River basin. The dams provide a source of clean energy. But they also profoundly alter sections of river and disrupt wildlife ecosystems. “This is a completely different scenario from autonomous cars or other [commonly referenced ethical dilemmas], but it’s another setting where these problems are real,” she says. “There are two conflicting objectives, so what should you do?”
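The excerpt doesn't say how such a menu of options would be computed. One standard way to build it, sketched here as an assumption rather than as Eckersley's or Gomes's actual method, is to keep only the Pareto-optimal options: those that no other option beats on every objective at once. The treatment names and numbers below are hypothetical and exist only to illustrate the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    name: str
    life_years: float  # expected added life span: higher is better (hypothetical units)
    suffering: float   # suffering score on a 0-10 scale: lower is better
    cost: float        # cost in thousands: lower is better


def dominates(a: Option, b: Option) -> bool:
    """True if `a` is no worse than `b` on every objective and strictly better on at least one."""
    no_worse = a.life_years >= b.life_years and a.suffering <= b.suffering and a.cost <= b.cost
    strictly_better = a.life_years > b.life_years or a.suffering < b.suffering or a.cost < b.cost
    return no_worse and strictly_better


def pareto_menu(options: list[Option]) -> list[Option]:
    """Drop dominated options; what remains are genuine trade-offs for a human to choose between."""
    # An option never dominates itself (it is not strictly better), so no self-check is needed.
    return [o for o in options if not any(dominates(other, o) for other in options)]


# Hypothetical candidates for illustration only.
candidates = [
    Option("maximize_lifespan", life_years=10, suffering=7, cost=90),
    Option("minimize_suffering", life_years=6, suffering=2, cost=60),
    Option("minimize_cost", life_years=5, suffering=4, cost=20),
    Option("middling", life_years=5, suffering=5, cost=30),  # beaten by minimize_cost on every axis
]

menu = pareto_menu(candidates)
for o in menu:
    print(f"{o.name}: +{o.life_years} years, suffering {o.suffering}/10, cost {o.cost}k")
```

The code never picks a winner: it discards only options that are worse in every respect and returns the rest with their trade-offs visible, which is one way to "hand the dilemma back to the humans."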

The Technical Case for Mixing Cloud Computing and Manufacturing

The movement in manufacturing needs to center on growing IaaS usage, including cloud-delivered servers, databases, data integration, and other core components needed to provide the types of services listed earlier in this article. AWS provides all of these components, as do Google and Microsoft. That said, what keeps many manufacturing companies out of the cloud is a lack of skills and knowledge. It takes a specific skill set to properly integrate existing ‘some-time’ systems, which provide no real-time visibility or automated responses, with new cloud-based systems that make it possible to operationalize new and existing data points. The objective is to deliver a quick ROI, as well as the ability to move operations in more productive and less expensive directions. The fundamentals are well understood, and manufacturing organizations are finding them increasingly approachable. What’s missing is a stepwise approach that spells out the cloud conversion process in enough detail to give the company a path to tactical and strategic success.

Beyond the Dashboard: How AI Changes the Way We Measure Business

Many BI companies see the potential of AI and have jumped on the bandwagon. Most today generate point-and-click automated insights that surface significant trends, anomalies, and clusters in the data, usually for a highly constrained data set, such as a chart or dashboard. The trick is to do this at scale and in real time. Most BI vendors don’t have the processing power to do that, let alone run it continuously in the background for multiple KPIs simultaneously. With automated insights, the dashboard becomes a jumping-off point for obtaining deep insights about business processes. These insights might pop up above or below a KPI, or upon a click; or they might be encoded in text via a natural language generation tool that displays or speaks a deep analysis of the dashboard KPIs. ... FinancialForce applies Salesforce's Einstein AI engine to sales and financial data to generate rich, action-oriented views of customers. Its dashboards display color-coded indicators of customer health and, with a single click, an analysis of underperforming health indicators along with recommendations for improvement.
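The excerpt doesn't describe any vendor's algorithm. As a minimal illustration of one simple way to surface anomalies in a single KPI, and an assumption rather than how FinancialForce, Einstein, or any other BI product works, the sketch below flags points that sit more than a chosen number of standard deviations away from the mean of a trailing window. The `daily_orders` series, window size, and threshold are hypothetical.

```python
from statistics import mean, stdev


def flag_anomalies(series: list[float], window: int = 7, threshold: float = 3.0) -> list[int]:
    """Return indices whose value deviates from the trailing-window mean
    by more than `threshold` sample standard deviations (a rolling z-score)."""
    flagged = []
    for i in range(window, len(series)):
        history = series[i - window:i]  # the `window` points immediately before i
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            continue  # a flat history gives no scale to judge deviations against
        if abs(series[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical KPI: daily order counts with one spike.
daily_orders = [100, 102, 98, 101, 99, 103, 100, 97, 160, 101]
print(flag_anomalies(daily_orders))  # [8]
```

Running a check like this continuously across many KPIs at once is where the processing-power problem described above comes in; production systems typically also account for seasonality and trend, which this sketch ignores.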

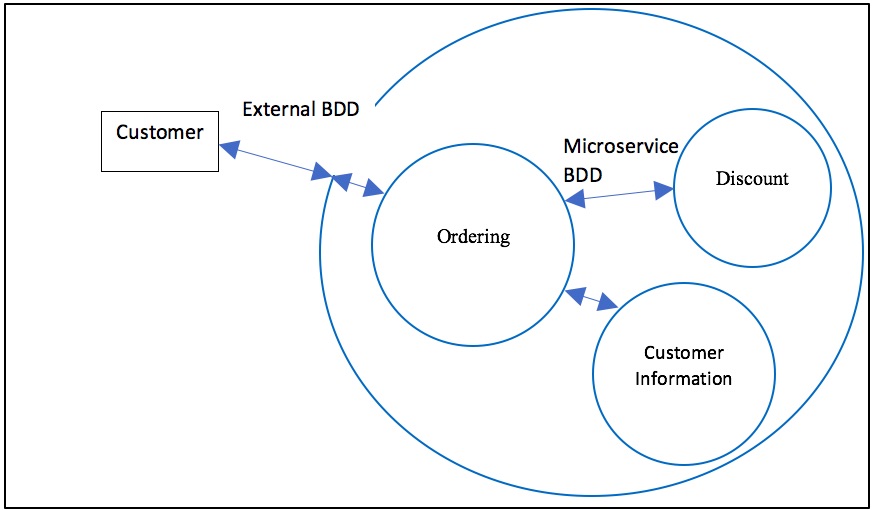

Developing Microservices with Behavior Driven Development & Interface Oriented Design

BDD involves the triad: the three perspectives of the customer, the developer, and the tester. It’s usually applied to the external behavior of an application. Since microservices are internal, the customer perspective is that of the internal consumer, that is, the parts of the implementation that use the service. So the triad collaboration is between the consumer developers, the microservice developers, and the testers. Behavior is often expressed in a Given-When-Then form, e.g. Given a particular state, When an action or event occurs, Then the state changes and/or an output occurs. Stateless behavior, such as business rules and calculations, just shows the transformation from input to output. Interface Oriented Design focuses on the Design Patterns principle “Design to interfaces, not implementations.” A consumer entity should be written against the interface that a producer microservice exposes, not against its internal implementation. These interfaces should be well defined, including how they respond if they are unable to perform their responsibilities. Domain Driven Design (DDD) can help define the terms involved in the behavior and the interface.
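To show how these ideas fit together, here is a minimal Python sketch built around a hypothetical inventory microservice; `InventoryService`, `FakeInventory`, `InventoryUnavailable`, and `can_fulfil` are illustrative names, not taken from the original article. The consumer is written against a `typing.Protocol` interface rather than a concrete class, the interface states how it reports failure, and the tests are phrased in Given-When-Then form.

```python
from dataclasses import dataclass
from typing import Protocol


class InventoryUnavailable(Exception):
    """Part of the interface contract: raised when the service cannot answer."""


class InventoryService(Protocol):
    """The interface the (hypothetical) producer microservice exposes."""

    def units_in_stock(self, sku: str) -> int:
        """Return units on hand, or raise InventoryUnavailable."""
        ...


@dataclass
class FakeInventory:
    """Test double that satisfies InventoryService structurally (no inheritance needed)."""
    stock: dict[str, int]
    available: bool = True

    def units_in_stock(self, sku: str) -> int:
        if not self.available:
            raise InventoryUnavailable(sku)
        return self.stock.get(sku, 0)


def can_fulfil(inventory: InventoryService, sku: str, quantity: int) -> str:
    """Consumer logic written against the interface, not an implementation."""
    try:
        on_hand = inventory.units_in_stock(sku)
    except InventoryUnavailable:
        return "retry-later"
    return "accept" if on_hand >= quantity else "backorder"


def test_order_is_backordered_when_stock_is_short():
    # Given a warehouse holding 2 units of SKU-1
    inventory = FakeInventory(stock={"SKU-1": 2})
    # When a consumer asks to fulfil an order for 3 units
    decision = can_fulfil(inventory, "SKU-1", 3)
    # Then the order is backordered
    assert decision == "backorder"


def test_order_is_deferred_when_service_is_down():
    # Given the inventory service is unable to perform its responsibility
    inventory = FakeInventory(stock={}, available=False)
    # When a consumer asks to fulfil an order
    decision = can_fulfil(inventory, "SKU-1", 1)
    # Then the consumer defers instead of guessing
    assert decision == "retry-later"
```

The test functions follow pytest's naming convention but can also be called directly. Because `can_fulfil` depends only on the `InventoryService` shape, the consumer developers can write and run these scenarios before the real microservice exists, which is the kind of triad collaboration the paragraph describes.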

Google petitions Supreme Court to reconsider Android Java ruling

Walker said the court initially ruled that the software interfaces were not copyrightable, but that decision was overruled. “A unanimous jury then held that our use of the interfaces was a legal fair use, but that decision was likewise overruled,” he said. “Unless the Supreme Court corrects these twin reversals, this case will end developers’ traditional ability to freely use existing software interfaces to build new generations of computer programs for consumers.” Walker added: “The US Constitution authorised copyrights to ‘promote the progress of science and useful arts’, not to impede creativity or promote lock-in of software platforms.” In response to Walker’s post, Oracle executive vice-president and general counsel, Dorian Daley, wrote: “Google’s petition for certiorari presents a rehash of arguments that have already been thoughtfully and thoroughly discredited. The fabricated concern about innovation hides Google’s true concern: that it be allowed the unfettered ability to copy the original and valuable work of others as a matter of its own convenience and for substantial financial gain.”

Securing the Internet of Things: Government Action Likely in 2019

The landscape is dotted with a few new laws and regulations, such as a California law requiring manufacturers of any devices that connect to the internet to include “reasonable” security features, including unique, user-set passwords for each device rather than generic default credentials that are easier for an intruder to discern. Some security experts, however, have criticized the law as too weak. Well-known consultant Robert Graham wrote, “it’s based on the misconception of adding security features. It’s like dieting …. The key to dieting is not eating more but eating less. The same is true of cybersecurity, where the point is not to add ‘security features’ but to remove ‘insecure features.’” That reaction shows there’s a lot more to be done. But it will be interesting to see just how aggressively governments push. Will they rely on stronger laws to force the industry to more effectively tackle IoT security? Or gentler approaches, like the United Kingdom’s government website that provides a voluntary code of practice?

Quote for the day:

"Remember teamwork begins by building trust. And the only way to do that is to overcome our need for invulnerability." -- Patrick Lencioni
