Elusive hacker-for-hire group Bahamut linked to historical attack campaigns

According to BlackBerry, Bahamut relies heavily on manipulating its victims

through a constantly shifting web of fake social media accounts and personas

and even fake news websites and applications that don't appear to be malicious

and often generate original content. This is meant to exploit the victims'

interests and earn their trust. "First encounters with Bahamut begin

innocently," the researchers said. "One might start with a simple direct

message on Twitter or LinkedIn from an attractive woman, but with no

suspicious link to click. Another might occur when scrolling through Twitter

or Facebook in the form of a tech news article. Maybe you’d be taking a break

at work and checking out a fitness website. Or perhaps you’re a supporter of

Sikh rights looking for news about their movement for independence. You’d

click, and nothing bad would appear to happen. On the contrary, you’d

experience a legitimate, yet fabricated reality." One example is a technology

news website that originally focused on mobile device reviews. At some

point it was taken over by the group and the tone and nature of the articles

changed to include security research and geopolitical themes.

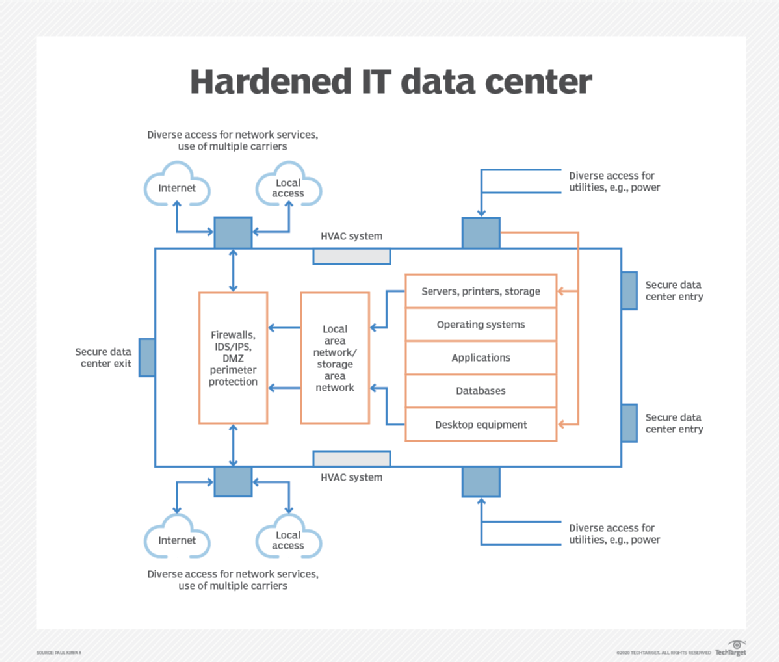

Why MSPs Are Hacker Targets, and What To Do About It

Cyber defense doesn't come for free, and this is a significant challenge for

MSPs. There are really only two places where an MSP can look to increase

security standards for their end customers: The first is convincing the SMB to

spend more on security, which is often a difficult upsell given already tight

IT budgets. The second is to eat into their thin margins while still

maintaining the ability to update defenses as needed by the threat

landscape. The vast majority of cybersecurity defense solutions are

purpose-built for the enterprise, bringing in a plethora of technology bells

and whistles often too overwhelming or unnecessary for the SMB. All too often,

there's chatter around cybersecurity proselytizing the merits of artificial

intelligence, machine learning, and behavioral analytics — all of which come

with high costs. The truth is, MSPs need solutions that cater to their

specific needs, not just from a technical point of view but also from financial and

operational perspectives in order to get to the coveted 80/20. Small

businesses have gained operational agility with the rise of the cloud and

software-as-a-service, and with that, attackers have evolved to go after the

lowest-hanging fruit.

4 common C programming mistakes — and 5 tips to avoid them

Allocated memory (done using the malloc function) isn’t automatically disposed

of in C. It’s the programmer’s job to dispose of that memory when it’s no

longer used. Fail to free up repeated memory requests, and you will end up

with a memory leak. Try to use a region of memory that’s already been freed,

and your program will crash—or, worse, will limp along and become vulnerable

to an attack using that mechanism. Note that the term “memory leak” should only

describe situations where memory is supposed to be freed, but isn’t. If a

program keeps allocating memory because the memory is actually needed and used

for work, then its use of memory may be inefficient, but strictly speaking

it’s not leakage. ... So why is the burden of checking an array’s bounds left

to the programmer? In the official C specification, reading or writing an

array beyond its boundaries is “undefined behavior,” meaning the spec has no

say in what is supposed to happen. The compiler isn’t even required to

complain about it. C has long favored giving power to the programmer even at

their own risk. An out-of-bounds read or write typically isn’t trapped by the

compiler, unless you specifically enable compiler options to guard against it.
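
The two habits described above, freeing what you allocate and checking bounds yourself, can be sketched in a few lines of C. This is a minimal illustration under stated assumptions, not code from the article: the `IntBuffer` type and the `buffer_*` function names are hypothetical helpers invented for this example.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdlib.h>

/* Hypothetical helper type: an int array that carries its own length,
   so every access can be checked against it. */
typedef struct {
    int *data;
    size_t len;
} IntBuffer;

/* Allocate a zero-initialized buffer. Returns false if allocation fails. */
bool buffer_init(IntBuffer *buf, size_t len) {
    assert(buf != NULL);
    buf->data = calloc(len, sizeof *buf->data);
    buf->len = (buf->data != NULL) ? len : 0;
    return buf->data != NULL;
}

/* Bounds-checked write: refuses an out-of-range index instead of
   invoking undefined behavior. */
bool buffer_set(IntBuffer *buf, size_t index, int value) {
    if (buf == NULL || buf->data == NULL || index >= buf->len) {
        return false;
    }
    buf->data[index] = value;
    return true;
}

/* Bounds-checked read: stores the element in *out on success. */
bool buffer_get(const IntBuffer *buf, size_t index, int *out) {
    if (buf == NULL || buf->data == NULL || out == NULL || index >= buf->len) {
        return false;
    }
    *out = buf->data[index];
    return true;
}

/* Free the memory and reset the struct, so a later access through this
   buffer fails the checks above rather than touching freed memory.
   Calling it twice is harmless because free(NULL) is a no-op. */
void buffer_release(IntBuffer *buf) {
    assert(buf != NULL);
    free(buf->data);
    buf->data = NULL;
    buf->len = 0;
}
```

A caller would pair every successful `buffer_init` with one `buffer_release` and treat a `false` return from `buffer_set` or `buffer_get` as an error. As one example of the compiler options mentioned above, both GCC and Clang accept `-fsanitize=address`, which instruments the program to report many out-of-bounds and use-after-free accesses at run time.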

Securing mobile devices, apps, and users should be every CIO’s top priority

The current distributed remote work environment has also triggered a new

threat landscape, with malicious actors increasingly targeting mobile devices

with phishing attacks. These attacks range from basic to sophisticated and are

likely to succeed, with many employees unaware of how to identify and avoid a

phishing attack. The study revealed that 43% of global employees are not sure

what a phishing attack is. “Mobile devices are everywhere and have access to

practically everything, yet most employees have inadequate mobile security

measures in place, enabling hackers to have a heyday,” said Brian Foster, SVP

Product Management, MobileIron. “Hackers know that people are using their

loosely secured mobile devices more than ever before to access corporate data,

and increasingly targeting them with phishing attacks. Every company needs to

implement a mobile-centric security strategy that prioritizes user experience

and enables employees to maintain maximum productivity on any device,

anywhere, without compromising personal privacy.” The study found that four

distinct employee personas have emerged in the everywhere enterprise as a

result of lockdown, and mobile devices play a more critical role than ever

before in ensuring productivity.

Here Comes the Internet of Plastic Things, No Batteries or Electronics Required

The researchers, who have been steadily working on the technology since their

original paper, have leveraged mechanical motion to provide the power for

their objects. For instance, when someone opens a detergent bottle, the

mechanical motion of unscrewing the top provides the power for it to

communicate data. “We translate this mechanical motion into changes in antenna

reflections to communicate data,” said Gollakota. “Say there is a Wi-Fi

transmitter sending signals. These signals reflect off the plastic object; we

can control the amount of reflections arriving from this plastic object by

modulating it with the mechanical motion.” To ensure that the plastic objects

can reflect Wi-Fi signals, the researchers employ composite plastic filament

materials with conductive properties. These take the form of plastic with

copper and graphene filings. “These allow us to use off-the-shelf 3D printers

to print these objects but also ensure that when there is an ambient Wi-Fi

signal in the environment, these plastic objects can reflect them by designing

an appropriate antenna using these composite plastics,” said Gollakota.

Robotic Process Automation Is Coming: Here Are 5 Ways To Prepare For It

Typically, the most suitable tasks for RPA relate to “busy work” – meaning any

work that involves a great number of repetitive actions, such as opening and

searching records, transferring data between different digital locations, and

repetitive mouse clicks. These sorts of tasks are prime candidates for

automation. At the other end of the scale, jobs that involve creative thought

and human decision making generally are not suitable for automation. ... Just

because something can be automated doesn't mean it should be. Here, you'll

need to identify which – of all the things it's possible to automate – are

your key priorities. I recommend focusing on those tasks that help your

organization achieve its overall aims, but currently consume a

disproportionate amount of employees’ time. It’s usually a good idea to go for

“quick wins” first, as these will help to establish the usefulness of RPA

while winning over minds that may be resistant to the idea of reducing

repetitive workloads or fearful of what it could mean for jobs and

organizational culture. ... Having decided on the best ways to deploy RPA in

your organization, you can begin researching the technologies that are

available and the potential partners you may need to work with to create a

successful deployment.

Bridging India’s cybersecurity gender gap

While India produces roughly 1.5 million engineering graduates each year, fewer

than 30% of them are women and too many find it hard to get jobs. Many of them

are the products of little-known colleges where they gain limited technical

skills and graduate with certificates that few potential employers recognize.

At the same time, India’s cybersecurity industry is growing fast. By 2025 it

is forecast to be worth USD 35 billion as governments, companies, and startups

seek to safeguard data. The demand for skilled cybersecurity workers has

soared accordingly, but women still only make up around 11% of the sector’s

workforce, both in India and globally. Dhasmana and Vedashree decided two

years ago to help bridge that gender gap by setting up CyberShikshaa, which in

Hindi means ‘cyber education.’ “As a tech industry organization, Microsoft

felt it was our responsibility to create very strong career pathways,

especially for young women to join the technology sector,” says Dhasmana.

DSCI’s Vedashree says there was a need to evangelize cybersecurity as a career

option for new female grads. “So, we aligned our charters for skills

development in cyber fields and women in security and crafted this program

together.”

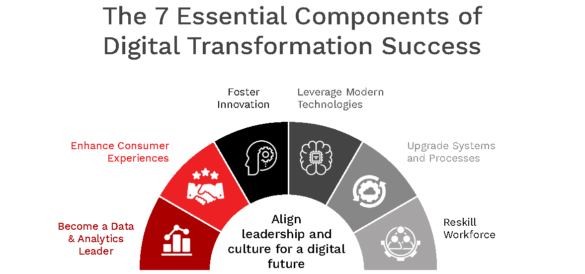

Accelerating Digital Transformation with Data, People and Technologies

The first priority in embarking on digital transformation is to make

information more accessible to everyone across the organisation. A report from

Harvard Business Review shows that 55% of organisations agreed that data

analytics for decision-making is extremely important today, and 92% confirmed

the increasing importance of data and analytics through 2020 and 2021. Yet,

despite the rise in the value of data analytics in the current era, many

organisations are burdened with outdated processes. According to IDC, 70% of

an analyst’s time is spent searching for data, and 44% of data workers’ time

is spent on unsuccessful attempts. Together with the lack of talented

professionals to harness the true power of data, these struggles further

keep business leaders from creating a modern analytic environment for their

organisation. With siloed data residing all over the place, one of the

key challenges faced by companies is the advent of a variety of tools and

platforms, which are either too complicated or lack sufficient training. These

tools, therefore, set them back for truly achieving a digital transformation.

Emotet 101: How the Ransomware Works -- and Why It's So Darn Effective

Emotet exists in several different versions and incorporates a modular design.

This makes it more difficult to identify and block. It uses social engineering

techniques to gain entry into systems, and it is good at avoiding detection.

What's more, Emotet campaigns are constantly evolving. Some versions steal

banking credentials and highly sensitive enterprise data, which cybercrooks

may threaten to release publicly. "This may serve as additional leverage to

pay the ransom," Shier explains. An initial e-mail may look like it originated

from a trusted source, such as a manager or top company executive, or it may

offer a link to what appears to be a legitimate site or service. It usually

relies on file compression techniques, such as ZIP, that spread the infection

through various file formats, including .doc, .docx, and .exe. This hides the

actual file name as it moves around within a network. These documents may

contain phrases such as "payment details" or "please update your human

resources file" to trick recipients into activating payloads. Some messages

have recently revolved around COVID-19. They often arrive from a legitimate

e-mail address within the company — and they can include both benign and

infected files.

Your next digital transformation project should look very different

Almost three-quarters (70%) of CIOs agree or strongly agree that the pandemic

has increased the collaboration between the technology team and the business;

more than half (52%) say it has created a culture of inclusivity within their

teams, too. Yet while absence has made the heart grow fonder during the

extreme conditions of social distancing, CIOs will have to work hard to ensure

the close virtual bonds that have been fostered during lockdown are able to

flourish when we return to something like normal working conditions. To

that end, Haake says her firm's research with KPMG suggests the most important

factor for CIOs in the post-COVID age is strong cultural leadership. "That's

what a good digital leader is going to have to keep an eye on if they want to

be successful," she says. Pioneering CIOs will lead a cultural transformation

that hones the capabilities of the IT team and intertwines these talents with

the demands of the business. Digital leaders who build that tight bond will be

much more likely to deliver timely tech solutions that really do change the

business for the better.

Quote for the day:

"The greatest good you can do for another is not just share your riches, but reveal to them their own." - Benjamin Disraeli