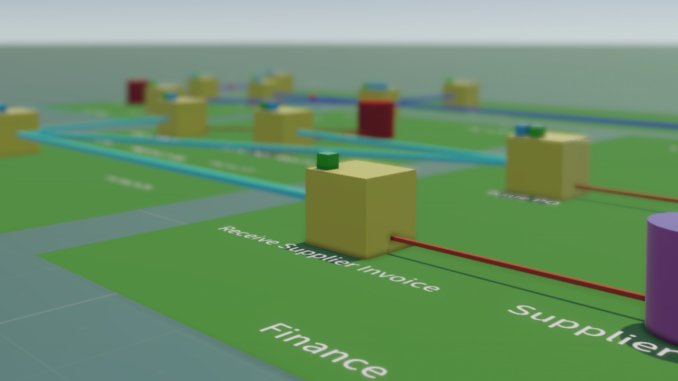

Business Case – Why Enterprise Architecture Needs to Change – Part I

The solution to moving out of the “stone age” is to use a digital end-to-end

approach for Architecture content (whether EA or SA), and provide openness and

transparency across EA, project, and reusable component

Architectures. Just like any digital approach to any business problem,

the use of structured data is key. The best-structured data language for

Architecture is arguably ArchiMate, which has a rich notation

covering the depth and breadth of Architecture modelling, and also a rich set

of connectors to link elements. ... Even if the new hire has significant

experience in the given industry, the new organisation’s IT platform and

processes will likely vary greatly from the person’s past experience. It

takes several months or longer for new staff to accumulate enough knowledge

about how the business and IT platform work to operate effectively without

help from other staff. The cost of this knowledge

gap is the new person delivering outcomes more slowly than other staff and

consuming time of other staff unnecessarily by simply asking questions like

‘what systems do we have?’, ‘what does the business do?’, ‘how does system X

work?’ and so on.

Why API security is a fast-growing threat to data-driven enterprises

API security focuses on securing this application layer and addressing what

can happen if a malicious hacker interacts with the API directly. API security

also involves implementing strategies and procedures to mitigate

vulnerabilities and security threats. When sensitive data is transferred

through an API, a protected API can guarantee the message’s secrecy by making it

available only to apps, users and servers with appropriate permissions. It also

ensures content integrity by verifying that the information was not altered

after delivery. “Any organization looking forward to digital transformation

must leverage APIs to decentralize applications and simultaneously provide

integrated services. Therefore, API security should be one of the key focus

areas,” said Muralidharan Palanisamy, chief solutions officer at AppViewX.

Talking about how API security differs from general application security,

Palanisamy said that application security is similar to securing the main

door, which needs robust controls to prevent intruders. At the same time, API

security is all about securing windows and the backyard.
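
As a rough illustration of the integrity check mentioned above, the sketch below signs a message body with a shared secret and verifies it on receipt, using Python's standard `hmac` module. The function names `sign_payload` and `verify_payload`, the secret, and the sample payload are hypothetical and do not come from the article; this is one common way to detect tampering, not a complete API security design.

```python
import hashlib
import hmac

# Hypothetical shared secret; in practice it would be loaded from a secrets store.
SHARED_SECRET = b"example-secret-not-for-production"


def sign_payload(payload: bytes, secret: bytes = SHARED_SECRET) -> str:
    """Return a hex-encoded HMAC-SHA256 signature for the payload."""
    return hmac.new(secret, payload, hashlib.sha256).hexdigest()


def verify_payload(payload: bytes, signature: str, secret: bytes = SHARED_SECRET) -> bool:
    """Recompute the signature and compare it in constant time."""
    expected = sign_payload(payload, secret)
    return hmac.compare_digest(expected, signature)


if __name__ == "__main__":
    body = b'{"account": "12345", "amount": 100}'
    signature = sign_payload(body)

    # The untouched message verifies; an altered one does not.
    print(verify_payload(body, signature))
    tampered = b'{"account": "12345", "amount": 900}'
    print(verify_payload(tampered, signature))
```

The sender would transmit the signature alongside the body (for example in a header), and the receiver would reject any request for which `verify_payload` returns `False`. Confidentiality and permission checks would still need to be handled separately, for instance with TLS and an authorization layer.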

Artificial Intelligence Can Enhance Banking Compliance

Technology has changed our society, and banks and other financial

institutions have digitalized their operations at a rapid pace as well.

However, the financial crime compliance units of these institutions still

rely mainly on heavy manual processes. The key reason for compliance units’

cautious approach to using AI and automation

has been uncertainty about the technology. Do regulators approve machine-based

decision-making, and is machine learning logic fair in identifying

suspicious activities? However, there is a clear need for utilising

technology in financial crime compliance. In recent years,

Ireland has witnessed a rise in financial crime, with illegal proceeds

making their way into the financial system, often from international

sources. Last month, data from Banking and Payments Federation Ireland

showed that over €12m was transferred illegally through so-called ‘money

mule’ accounts in the first six months of the year. When compared to the

same period last year, the number of bank accounts linked to the criminal

practice in Ireland almost doubled to 3,000 between January and June 2022.

Big tech has not monopolized big A.I. models, but Nvidia dominates A.I. hardware

Interest in A.I. software startups targeting business use cases also

remains formidable. While the total amount invested in such companies fell

33% last year as the venture capital market in general pulled back on

funding in the face of fast-rising interest rates and recession fears, the

total was still expected to reach $41.5 billion by the end of 2022, which

is higher than 2020 levels, according to Benaich and Hogarth, who cited

Dealroom for their data. And the combined enterprise value of public and

private software companies using A.I. in their products now totals $2.3

trillion—which is also down about 26% from 2021—but remains higher than

2020 figures. But while the race to build A.I. software may remain wide

open for new entrants, the picture is very different when it comes to the

hardware on which these A.I. applications run. Here Nvidia’s graphics

processing units completely dominate the field and A.I.-specific chip

startups have struggled to make any inroads. The State of AI notes that

Nvidia’s annual data center revenue alone—$13 billion—dwarfs the valuation

of chip startups such as SambaNova ($5.1 billion), Graphcore ($2.8

billion) and Cerebras ($4 billion).

Predictive Analytics in Healthcare

Clinicians, healthcare organizations and health insurance companies use

predictive analytics to estimate the likelihood of their patients

developing certain medical conditions, such as cardiac problems,

diabetes, stroke or COPD. Health insurance companies were early adopters

of this technology, and healthcare providers now apply it to identify

which patients need interventions to avert conditions and improve health

outcomes. Clinicians also use predictive analytics to identify patients whose

conditions are progressing into sepsis. As is the case with many

applications of predictive analytics in healthcare, however, the ability to

use this technology to predict how a patient’s condition might progress is

limited to certain conditions and far from widely deployed. Healthcare

organizations also use predictive analytics to identify which hospital

inpatients are likely to exceed the average length of stay for their

conditions by analyzing patient, clinical and departmental data. This insight

allows clinicians to adjust care protocols to keep the patients’

treatments and recoveries on track. That in turn helps patients avoid

overstays, which not only drive up expenses and divert limited hospital

resources, but also may endanger patients by keeping them in surroundings

that could expose them to secondary infections.

How to Set Yourself Up For Success As a New Data Science Consultant With No Experience

The key is to know what you’re good at and focus on it. Going out on your

own as a consultant is scary enough — ensure that you’re going to be

marketing and using skills that you’re comfortable with. Having confidence

that you can successfully produce results using your tools and skills of

choice goes a long way to becoming a successful consultant. Additionally,

do some market research to see where your niche could lie. While they say

that data scientists should all be generalists in the beginning, I believe

that consultants should focus on specializing in niches that

complement their skills and their alternative knowledge. For example, I

would focus on becoming a data science consultant who specializes in

helping companies solve their environmental problems — this would combine

my specialized skills (data science) with my alternative knowledge and

educational background in environmental science. Companies love working

with consultants who have first-hand experience in their sector, so it

can’t hurt to play to your strengths, past employment, education, or

interest background.

The future of employment in IT sector

Whilst the companies keep up with the changing economic climate, what’s

become undeniable is the war for recruiting good talent, now more than

ever. There has been a significant change in employees’ needs and

priorities. Top talent is re-evaluating their careers based on aspects

like flexibility, career growth and employee value proposition. Companies

must therefore invest in ‘Active Sourcing’ to create a rich pipeline and

not only recruit them but also train them for the upcoming 4th industrial

revolution. They need to invest in their skills and holistic development,

not forgetting to create a safe, healthy work environment to retain the

talent. As dynamic as the sector is, one cannot deny the menace of tech burnout.

This blog describes it perfectly, ‘Tech burnout refers to the extreme

exhaustion and stress that many employees in the technology sector

experience. While burnout has always been an issue in many industries, 68%

of tech workers feel more burned out than they did when they worked at an

office.’ Technology is the most rapidly evolving industry with a

challenging work environment.

On the Psychology of Architecture and the Architecture of Psychology

Most of our intelligence, however, consists of patterns that we execute

efficiently, automatically and quickly. Some of these are natural

elements, which are fixed: e.g. a propensity to communicate and use tools,

to perform ‘mental travel’ — memory, scenarios, fantasy — and all of

it based on pattern creation and reinforcement. Some of these elements may

even be genetic (like basic strategies such as wait-and-see versus

go-for-it you can observe in small children), but most of it is probably

learned. All of this is part of Kahneman’s ‘System 1’. We learn by

employing our capability to employ logic and ratio and our

copying-and-being-reinforced capability — and while we do a lot more of

the latter two than the former, culturally, we tend to believe that the

reverse is true. Learning by reinforcement also includes learning by

doing. Chess grand masters have very effective fast ‘patterns’ in the

‘malleable instinct’ part of their brains, and the difference between

grand masters and good amateurs is not their power of logic and ratio

(calculating, thinking moves ahead) but their patterns that identify

potential good moves before they start to calculate, and these patterns

come from playing a lot of games. You also have to maintain your patterns:

it is ‘use it or lose it’.

7 Common Data Quality Problems

Data inconsistencies: This problem occurs when multiple systems are

storing information without using an agreed-upon, standardized method of

recording and storing information. Inconsistency is sometimes compounded

by data redundancy. ... Fixing this problem requires the data be

homogenized (or standardized) before or as it comes in from various

sources, possibly through the use of an ETL data pipeline. Incomplete

data: This is generally considered the most common issue impacting Data

Quality. Key data columns will be missing information, often causing

analytics problems downstream. A good method for solving this is to

install a reconciliation framework control. This control would send out

alerts (theoretically to the data steward) when data is missing. Orphaned

data: This is a form of incomplete data. It occurs when some data is

stored in one system, but not the other. If a customer’s name is

listed in table A, but their account is not listed in table B, this would

be an “orphan customer.” And if an account is listed in table B, but is

missing an associated customer, this would be an “orphan account.”
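
The incomplete-data and orphaned-data checks described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the column names (`customer_id`, `account_id`, `name`), the function names `find_incomplete` and `find_orphans`, and the sample rows are all hypothetical, and a real reconciliation control would run against the actual tables and route its alerts to the data steward.

```python
from typing import Iterable


def find_incomplete(rows: Iterable[dict], required: list[str]) -> list[dict]:
    """Return rows where any required column is absent, None, or blank."""
    return [
        row for row in rows
        if any(row.get(col) is None or str(row.get(col)).strip() == "" for col in required)
    ]


def find_orphans(customers: list[dict], accounts: list[dict]) -> tuple[set, set]:
    """Return (customer ids with no account, account ids with no known customer)."""
    customer_ids = {c["customer_id"] for c in customers}
    account_owner_ids = {a["customer_id"] for a in accounts}
    orphan_customers = customer_ids - account_owner_ids
    orphan_accounts = {a["account_id"] for a in accounts if a["customer_id"] not in customer_ids}
    return orphan_customers, orphan_accounts


if __name__ == "__main__":
    # Hypothetical "table A" (customers) and "table B" (accounts).
    table_a = [
        {"customer_id": 1, "name": "Ada"},
        {"customer_id": 2, "name": ""},
        {"customer_id": 3, "name": "Grace"},
    ]
    table_b = [
        {"account_id": "A-1", "customer_id": 1},
        {"account_id": "A-2", "customer_id": 9},
    ]

    print(find_incomplete(table_a, ["name"]))  # rows that would trigger an alert
    print(find_orphans(table_a, table_b))      # orphan customers and orphan accounts
```

With the sample rows, customer 2 is flagged as incomplete, customers 2 and 3 are orphan customers, and account `A-2` is an orphan account.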

Building IT Infrastructure Resilience For A Digitally Transformed Enterprise

At a minimum, resiliency means having stable operations, consistent

revenue, manageable security risks, efficient workflows, and an informed

and agile employee base. Having visibility over the operating systems of

network devices can reduce network downtime and open doors to further

efficiencies. If a business is resilient, it can maintain stable network

operations, drive down IT costs and deliver a more robust service at a

lower cost. Overall, when businesses can dramatically lower IT expenses

and have better visibility, they can expend resources on separate projects

that improve the quality of service—a win for all. From a regulatory

perspective, regulators now want to see everything documented. Take mobile

banking, for example; regulators want to know everything, including what

code is being used on which servers as well as which people and processes

have access to which services. Intelligently automated network operations

can allow enterprises to be better equipped to answer the questions that

regulators ask, such as how they're validating and how often they're doing

a failover.

Quote for the day:

"A good general not only sees the way to victory; he also knows when

victory is impossible." -- Polybius
