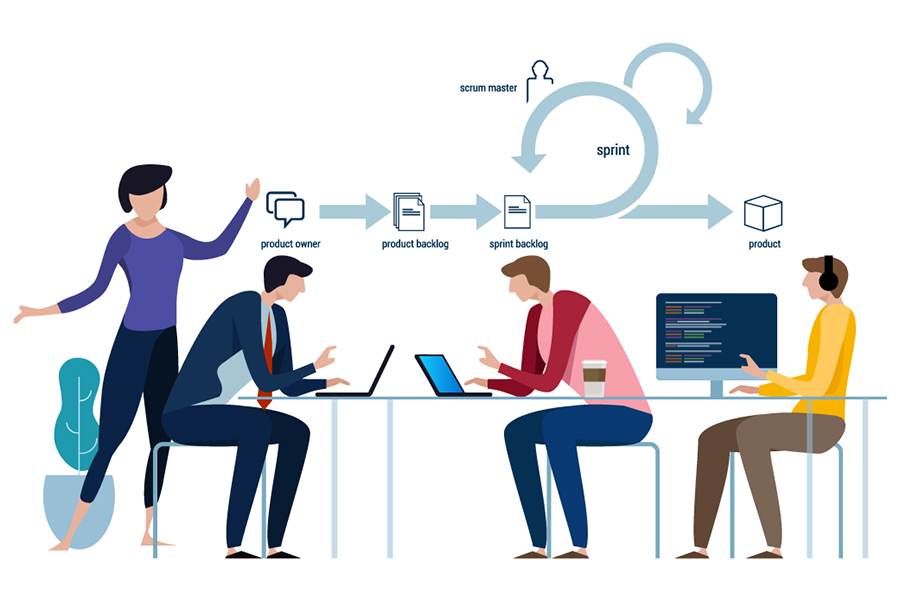

Embedded finance won’t make every firm into a fintech company

One fintech’s choices on these matters may be completely different from another’s

if they address different segments — it all boils down to tradeoffs. For

example, deciding on which data sources to use and balancing between onboarding

and transactional risk look different if optimizing for freelancers rather than

larger small businesses. In contrast, third-party platform providers must be

generic enough to power a broad range of companies and to enable multiple use

cases. While the companies partnering with these services can build and

customize at the product feature level, they are heavily reliant on their

platform partner for infrastructure and core financial services, thus limited to

that partner’s configurations and capabilities. As such, embedded platform

services work well to power straightforward commoditized tasks like credit card

processing, but limit companies’ ability to differentiate on more complex

offerings, like banking, which require end-to-end optimization. More generally

and from a customer’s perspective, embedded fintech partnerships are most

effective when providing confined financial services within specific user flows

to enhance the overall user experience.

Company size is a nonissue with automated cyberattack tools

As mentioned earlier, cybercriminals will change their tactics to derive the

most benefit and least risk to themselves. Dark-side developers are helping

matters by creating tools that require minimal skill and effort to operate.

"Ransomware as a Service (RaaS) has revolutionized the cybercrime industry by

providing ready-made malware and even a commission-based structure for threat

actors who successfully extort a company," explains Little. "Armed with an

effective ransomware starter pack, attackers cast a much wider net and make

nearly every company a target of opportunity." A common misconception related to

cyberattacks is that cybercriminals operate by targeting individual companies.

Little suggests cyberattacks on specific organizations are becoming rare. With

the ability to automatically scan large chunks of the internet for vulnerable

computing devices, cybercriminals are not initially concerned about the company.

... Little is very concerned about a new bad-guy tactic spreading quickly —

automated extortion. The idea is that once the ransomware attack succeeds,

the victim is threatened and coerced automatically.

Paying with a palm print? We’re victims of our own psychology in making privacy decisions

Unfortunately we’re victims of our own psychology in this process. We will often

say we value our privacy and want to protect our data, but then, with the

promise of a quick reward, we will simply click on that link, accept those

cookies, log in via Facebook, offer up that fingerprint and buy into that shiny

new thing. Researchers have a name for this: the privacy paradox. In survey

after survey, people will argue that they care deeply about privacy, data

protection and digital security, but these attitudes are not supported in their

behaviour. Several explanations exist for this, with some researchers arguing

that people employ a privacy calculus to assess the costs and benefits of

disclosing particular information. The problem, as always, is that certain types

of cognitive or social bias begin to creep into this calculus. We know, for

example, that people will underestimate the risks associated with things they

like and overestimate the risks associated with things they dislike.

Ransomware Payments Explode Amid ‘Quadruple Extortion’

“While it’s rare for one organization to be the victim of all four techniques,

this year we have increasingly seen ransomware gangs engage in additional

approaches when victims don’t pay up after encryption and data theft,” Unit 42

reported. “Among the dozens of cases that Unit 42 consultants reviewed in the

first half of 2021, the average ransom demand was $5.3 million. That’s up 518

percent from the 2020 average of $847,000,” researchers observed. The highest

ransom demand made of a single victim that Unit 42 observed also rose, to $50

million in the first half of 2021, up from $30 million last year. So far this

year, the largest payment confirmed by Unit 42 was the

$11 million that JBS SA disclosed after a massive attack in June. Last year, the

largest payment Unit 42 observed was $10 million. Barracuda has also tracked a

spike in ransom demands: In the attacks that it’s observed, the average ransom

ask per incident was more than $10 million, with only 18 percent of the

incidents involving a ransom demand of less than that.

How a Simple Crystal Could Help Pave the Way to Full-scale Quantum Computing

For more than two decades global control in quantum computers remained an idea.

Researchers could not devise a suitable technology that could be integrated with

a quantum chip and generate microwave fields at suitably low powers. In our work

we show that a component known as a dielectric resonator could finally allow

this. The dielectric resonator is a small, transparent crystal which traps

microwaves for a short period of time. The trapping of microwaves, a phenomenon

known as resonance, allows them to interact with the spin qubits longer and

greatly reduces the power of microwaves needed to generate the control field.

This was vital to operating the technology inside the refrigerator. In our

experiment, we used the dielectric resonator to generate a control field over an

area that could contain up to four million qubits. The quantum chip used in this

demonstration was a device with two qubits. We were able to show the microwaves

produced by the crystal could flip the spin state of each one.

How To Transition from a Data Analyst into a Data Scientist

What do you want to be – a data analyst or a data scientist? Do you need such a

transition? Why do you need to make the shift to data scientist? The most

important question that might haunt most analysts would be ‘how do you want to

see your career graph grow?’ This is where the big difference comes in. With a

choice of path that will make you a data scientist, your career becomes more

challenging with new possibilities to design learning models which will set your

skills apart from the herd. Keep aside time to study research papers by

prominent data scientists. Most of these will be readily available on the

internet free of cost. Find your areas of interest and subjects of your

inclination in the field, and take notes. When you spend large sections of your

time understanding data science, you must validate your learning with facts. You

will find such facts when you read the works of prominent computer and data

scientists like Geoffrey Hinton, Rachel Thomas, and Andrew Ng, among many

established experts who contributed to data science with their studies in ML,

neural networks, and tools for designing models.

Philips study finds hospitals struggling to manage thousands of IoT devices

Hospital cybersecurity has never been more crucial. An HHS report found that

there have been at least 82 ransomware incidents worldwide this year, with 60%

of them specifically targeting US hospital systems. Azi Cohen, CEO of CyberMDX,

noted that hospitals now have to deal with patient safety, revenue loss and

reputational damage when dealing with cyberattacks, which continue to increase

in frequency. Almost half of hospital executives surveyed said they dealt with a

forced or proactive shutdown of their devices in the last six months due to an

outside attack. Mid-sized hospital systems struggled mightily with downtime from

medical devices. Large hospitals faced an average shutdown time of 6.2 hours and

a loss of $21,500 per hour. But the numbers were far worse for mid-sized

hospitals, whose IT directors reported an average of 10 hours of downtime and

losses of $45,700 per hour. "No matter the size, hospitals need to know about

their security vulnerabilities," said Maarten Bodlaender, head of cybersecurity

services at Philips.
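
To put the downtime figures above side by side, here is a rough back-of-the-envelope sketch. It assumes the cost of a typical shutdown can be approximated by multiplying the reported average downtime by the reported average hourly loss; the study excerpt does not state a per-incident total, so these products are illustrative only, and the function name is made up for this example.

```python
def downtime_cost(hours: float, loss_per_hour: float) -> float:
    """Approximate cost of one shutdown: duration times hourly loss.

    Assumption: the product of the two reported averages is a fair
    stand-in for the average cost per incident.
    """
    return hours * loss_per_hour


# Figures quoted in the excerpt above.
large = downtime_cost(6.2, 21_500)
mid_sized = downtime_cost(10, 45_700)

print(f"Large hospitals:     ${large:,.0f} per shutdown")
print(f"Mid-sized hospitals: ${mid_sized:,.0f} per shutdown")
print(f"Mid-sized vs. large: {mid_sized / large:.1f}x")
```

Under that assumption, a mid-sized hospital loses roughly three and a half times as much per shutdown as a large one.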

Does it Matter? Smart home standard is delayed until 2022

Richardson said that one big reason for the delay is that the software

development kit (SDK) needs more work. He also stressed that with most

standards-setting efforts, the goal is to deliver a specification, not a

functioning SDK that developers can implement to test and use to build products.

This is true. There is a world of difference between functioning software and a

written spec. A developer working on Matter who didn’t want to be named told me

he wasn’t surprised by the delay, and thought it might actually help smaller

companies, because it gives them more time to work with the specification and

meet the product launches expected from Amazon, Google, and Apple with more

fully developed products of their own. He also added that he thought the SDK

performed well in a controlled environment, but still needed more work. I was

less convinced by the CSA’s argument that adding more companies to the working

group (back in May there were 180 members and now there are 209) had caused

delays. By that logic, we may never see a standard.

Methods for Saving and Integrating Legacy Data

The IT person tells management the legacy database has maybe another month

before it completely crashes. This is bad news for management. The database has

a huge amount of valuable data that needs to be transferred somewhere for

purposes of storage, until a solution for transforming and transferring the

legacy data to the new system can be found. Simply losing the data, which

contains information that must be saved for legal reasons, and/or contains

valuable customer information, would damage profits, and is unacceptable. Two

options for saving the legacy data in an emergency are: 1) transforming the

files into a generalized format (such as PDF, Excel, TXT) and storing the new,

readable files in the new database, and 2) transferring the legacy data to a VM

copy of the legacy database hosted in the cloud. Thomas Griffin, of

the Forbes Technology Council, wrote, “The first step I would take is to move all

data to the cloud so you’re not trapped by a specific technology. Then you can

take your time researching the new technology. Find out what competitors are

using, and read to see what tools are trending in your industry.”
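
As an illustration of the first emergency option described above (dumping legacy data into a generalized, readable format), the following is a minimal sketch rather than a recommended migration procedure. It assumes the legacy database can be reached through Python's DB-API; an in-memory SQLite database stands in for it here, and the `customers` table and the `export_table_to_csv` helper are hypothetical names invented for the example.

```python
import csv
import io
import sqlite3


def export_table_to_csv(conn, table, out):
    """Write every row of `table` to `out` as CSV, header row first.

    Returns the number of data rows written.
    """
    # Table names cannot be bound as SQL parameters, so validate first.
    if not table.isidentifier():
        raise ValueError(f"unsafe table name: {table!r}")
    cursor = conn.execute(f'SELECT * FROM "{table}"')
    writer = csv.writer(out)
    writer.writerow([col[0] for col in cursor.description])
    count = 0
    for row in cursor:
        writer.writerow(row)
        count += 1
    return count


# Stand-in for the legacy database (assumption: real access would differ).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER, name TEXT)")
conn.executemany("INSERT INTO customers VALUES (?, ?)",
                 [(1, "Ada"), (2, "Grace")])

buffer = io.StringIO()  # a real export would open a file instead
rows_written = export_table_to_csv(conn, "customers", buffer)
print(buffer.getvalue())
```

A flat export like this preserves the values but not constraints, indexes, or relationships between tables, which is why it suits emergency storage more than a finished migration.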

Is Your Current Cybersecurity Strategy Right for a New Hybrid Workforce?

To support a secure and productive hybrid workforce, enterprises need a

technology platform that scales and adapts to their changing business

requirements. This requires a modular approach to supporting hybrid workers

that includes integrating zero trust network access (ZTNA) for access to

private or on-premises applications, a multi-mode cloud access security broker

(CASB) for all types of cloud services, and on-device web security to protect

user privacy. Securing corporate data on managed and BYOD devices is critical

for businesses with hybrid workforces. ZTNA surmounts the challenges associated

with VPN and provides greater protection. It uses the zero-trust principle of

least privilege to give authorised users secure access to specific resources one

at a time. This is accomplished through identity and access management (IAM)

capabilities like single sign-on (SSO) and multi-factor authentication (MFA), as

well as contextual access control.
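
The least-privilege idea described above can be sketched as a per-request access check. This is a toy model, not any vendor's ZTNA implementation: the `AccessRequest` type, the `GRANTS` table, the resource names, and the managed-device rule are all hypothetical, and a real deployment would delegate identity and MFA checks to an IAM provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user: str
    resource: str
    mfa_verified: bool    # assumed to come from the identity provider
    device_managed: bool  # contextual signal: corporate vs. BYOD device


# Hypothetical policy: each user is granted specific resources only.
GRANTS = {"alice": {"payroll-app"}, "bob": {"wiki"}}
# Hypothetical contextual rule: some resources need a managed device.
REQUIRES_MANAGED_DEVICE = {"payroll-app"}


def is_allowed(req: AccessRequest) -> bool:
    """Deny by default; allow one resource at a time when all checks pass."""
    if not req.mfa_verified:
        return False
    if req.resource not in GRANTS.get(req.user, set()):
        return False
    if req.resource in REQUIRES_MANAGED_DEVICE and not req.device_managed:
        return False
    return True


print(is_allowed(AccessRequest("alice", "payroll-app", True, True)))
print(is_allowed(AccessRequest("alice", "payroll-app", True, False)))
```

Unlike a VPN, which typically opens a whole network segment once a user connects, every request here is evaluated against the specific resource being asked for.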

Quote for the day:

"Leadership involves finding a parade

and getting in front of it." - John Naisbitt
