Doing data warehousing the wrong way

Ask enterprises how they feel about their data warehouses, and a high percentage express dissatisfaction. They struggle to load data. They have unstructured data that the data warehouse can’t handle, and so on. These aren’t necessarily problems with the data warehouse, however. I’d hazard a guess that the dissatisfaction usually arises from trying to force the data warehouse (or analytical database, if you prefer) to do something for which it’s not well suited. Here’s one way the error starts, according to Sammer: By now, everyone has seen the rETL (reverse ETL) trend: You want to use data from app #1 (say, Salesforce) to enrich data in app #2 (Marketo, for example). Because most shops are already sending data from app #1 to the data warehouse with an ELT tool like Fivetran, many people took what they thought was a shortcut, doing the transformation in the data warehouse and then using an rETL tool to move the data out of the warehouse and into app #2. The high-priced data warehouses and data lakes, ELT, and rETL companies were happy to help users deploy what seemed like a pragmatic way to bring applications together, even at serious cost and complexity.
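
The round trip described above can be sketched in a few lines. This is a minimal illustration, not any vendor's API: an in-memory SQLite database stands in for the warehouse, a plain dict stands in for app #2, and the table, column, and function names (`crm_accounts`, `load_into_warehouse`, `transform_in_warehouse`, `reverse_etl`) are hypothetical.

```python
import sqlite3

# Hypothetical rows extracted from app #1 (a CRM); the schema is an assumption.
APP1_ROWS = [
    ("acme", "ada@acme.test", 12000.0),
    ("acme", "bob@acme.test", 3000.0),
    ("globex", "cy@globex.test", 500.0),
]


def load_into_warehouse(conn, rows):
    """ELT step: land raw app #1 data in the warehouse unchanged."""
    conn.execute(
        "CREATE TABLE crm_accounts (account TEXT, email TEXT, deal_value REAL)"
    )
    conn.executemany("INSERT INTO crm_accounts VALUES (?, ?, ?)", rows)


def transform_in_warehouse(conn):
    """Transformation step: aggregate inside the warehouse with SQL."""
    cursor = conn.execute(
        "SELECT account, SUM(deal_value) FROM crm_accounts "
        "GROUP BY account ORDER BY account"
    )
    return cursor.fetchall()


def reverse_etl(rows, app2):
    """rETL step: copy the transformed rows out of the warehouse into app #2."""
    for account, total in rows:
        app2[account] = {"total_deal_value": total}
    return app2


def run_pipeline():
    conn = sqlite3.connect(":memory:")  # stand-in for the data warehouse
    try:
        load_into_warehouse(conn, APP1_ROWS)
        enriched = transform_in_warehouse(conn)
    finally:
        conn.close()
    return reverse_etl(enriched, app2={})  # the dict stands in for app #2


if __name__ == "__main__":
    print(run_pipeline())
```

Even in this toy form, app #1's data makes three hops (load, transform, copy out) before app #2 sees it, which is the kind of cost and complexity the passage points to.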

5 ways AI can help solve the privacy dilemma

Protecting privacy while allowing the economy to flourish is a data challenge. AI, machine learning, and neural networks have already transformed our lives, from robots to self-driving cars to drug development to a generation of smart assistants that will never double-book you. There is no doubt that AI can power solutions and platforms that protect privacy while giving people the digital experiences they want and allowing businesses to profit. What are those experiences? It’s simple and intuitive to every Internet user. We want to be recognized only when it makes our lives easier. That means recognizing me so I don’t have to go through the painful process of re-entering my data. It means giving me information (and yes, serving me an ad) that is timely, relevant, and aligned with my needs. The opportunities within the “personalization economy,” as I call it, are vast. McKinsey published two white papers about the size of the opportunity and how to do it right. Interestingly, and tellingly, the word “privacy” isn’t mentioned a single time in either of those white papers. That omission is remarkable and ignores the tension between privacy and personalization.

Building a Strong Business Case for Security and Compliance

Because cybersecurity is not a service or product that generates revenue, it is prudent to show that its financial benefit comes from protecting the organisation from losses. Try to communicate to the board in numbers; for example, show that a £1 investment would stop a security event that could potentially cost the company £10. That way, it should be possible to get the board to vote on your side by demonstrating the business case and return on investment in security measures and protection. To help the board reach its decision on security investment, give them data that focuses on any threat vectors that are already evident, such as inadequate services for security awareness and employee training, processes and policies that are not adequately applied and recorded, or a lack of data backup and patching practices. Formulating a risk/reward equation using a tiered security approach is a good way forward, as you can then direct investments towards incident response and detecting compliance. Once you have created a robust and compelling business case for your organisation, you need to share the proposal with the board.
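
The £1-versus-£10 example above can be turned into a single return figure. The sketch below uses one common way of expressing return on security investment, (loss avoided - cost) / cost; the function name and the choice of formula are assumptions for illustration, not something the article prescribes.

```python
def return_on_security_investment(loss_avoided, cost):
    """Return (loss avoided - cost) / cost as a ratio; 9.0 means 900%."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (loss_avoided - cost) / cost


if __name__ == "__main__":
    # Figures from the text: a £1 investment stops an event that could cost £10.
    ratio = return_on_security_investment(loss_avoided=10.0, cost=1.0)
    print(f"Return: {ratio:.0%}")
```

A fuller case would probably weight the avoided loss by how likely the event is, since the text only says the event "could potentially" cost £10.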

How to Stop Failing at Data

Data projects are doomed when the people who plan and the people who execute don’t have the same tools, the same access, or even the same goals. Data scientists are really good at asking the right questions and running exploratory models, but they don’t know how to scale. Meanwhile, data engineers are experts at making data pipelines that scale, but they don’t know how to find the insights. We’ve been using tools that require such a high level of specialist expertise that it’s impossible to get everyone on the same page. Because data scientists only ever touch small subsets of the data, there’s no way for them to extrapolate their models to function at scale. They don’t have access to production-grade data technology, so they have no way of understanding the constraints of building complex pipelines. Meanwhile, data engineers are being handed algorithms to implement with the barest context of the business problem they’re trying to solve and with little understanding of how and why data scientists have settled on this solution. There may be some back and forth, but there’s rarely enough common ground to build a foundation.

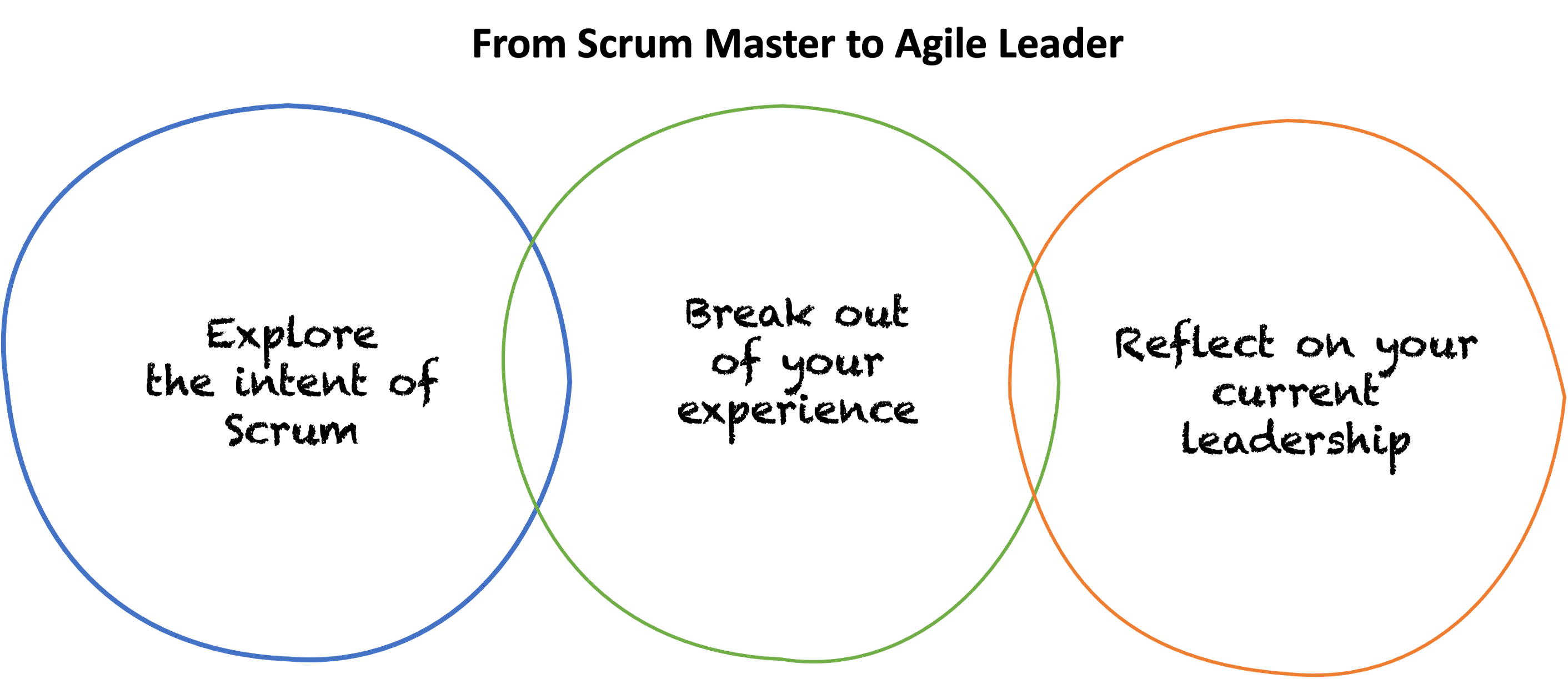

Exploring the Gaps in Scrum Mastery

Often people assume that Scrum is just a work management approach that helps us increase efficiency by organizing our tasks. Instead, it is intended to enable people to work in focused, collaborative, autonomous teams that use empiricism, creativity, and innovation to pursue opportunities to deliver value to customers by solving complex problems. To be creative in solving challenging problems, the Scrum Team must feel safe enough to experiment, fail, and learn through empiricism. They need to view each backlog item, interaction, and piece of data as an opportunity to learn and optimize. If these things are not possible, the team will not thrive. How do we, as Scrum Masters, build an environment where this is possible? For groups of people to form into high-functioning teams, they need ownership, inspiring purpose, and self-accountability. These traits inspire curiosity and will encourage them to take responsibility for their own work, how they work as a team, and how they work with those outside of the team.

Agile/Scrum is a Failure – Here’s Why

The Church of Agile is being corrupted from within by institutional forces that [can’t] adapt to the radical humanity [of] collaborative, self-organizing, cross-functional teams. … Agile wasn’t supposed to be this way. … Agile is supposed to be centered on people, not processes. … But many businesses instead prioritize controlling their commodity human resources. … Companies have dressed it up in Scrum’s clothing, claiming Agile ideology while reasserting Waterfall’s hierarchical micromanagement. … Properly implemented Scrum or Kanban [should] lead to the desired outcome within finite time and budget. … Stories as mini-Waterfalls [treat] the engineer as a cog in their employer’s machine … with no understanding of the craft, creativity, and critical thinking required to solve such complex problems. … Scrumfall relies, in other words, on the product team … providing a complete and perfect specification before development begins. And it relies on the development team … planning out a complete and perfect implementation before a single line of code is written. … The invading Waterfall taskmasters hidden in Scrum’s Trojan Horse absolutely hate uncertainty.

All About Ecstasy, a Language Designed for the Cloud

Ecstasy’s emphasis on predictability is perhaps best illustrated via the type system, known as the Turtles Type System, because it is bootstrapped on itself. As in Smalltalk, everything in Ecstasy is an object, and all Ecstasy types are built out of other Ecstasy types. In other words, unlike in Java or C#, there is no secondary primitive type system, and chars, ints, bits, and booleans are all objects. In common with Java and C#, there is a single root called Object, although in Ecstasy, Object is an interface, not a class. Technically, the type system supports a long and rather intimidating-looking list of features. It is fully generic and fully reified, covariant, module-based, transitively closed, type-checked and type-safe. The majority of type safety checks are performed by the compiler and re-checked by the link-time verifier, with only those checks in which the types cannot be fully known beforehand performed at runtime, specifically to allow support for type variance. “The Ecstasy language rules automatically handle covariance and contravariance,” Purdy wrote in an email response to The New Stack.

An offensive mindset is crucial for effective cyber defense

Threat intelligence is a key component of developing an offensive mindset. That’s why proactive cybersecurity auditing can be one of the best courses of action in stopping cyberattacks before they can impact an organization. To implement the right changes to cybersecurity strategy, an organization needs to fully understand its existing network vulnerabilities. This can be accomplished through a few different tactics, including penetration testing and vulnerability scanning. Penetration testing involves a person purposefully hacking into a network to identify weaknesses in an organization’s system, while vulnerability scanning consists of an automated test that looks for potential security vulnerabilities. Both tactics enable organizations to better grasp the mind of a hacker and understand the “how” behind a potential attack. Something else to be considered – under the right circumstances – is the possibility of hiring a former hacker. Their insight could prove to be extremely helpful, as aptitude in identifying weaknesses can be a useful asset. Many former hackers find that a role as a penetration tester or red team member fulfills their desire to expose system flaws while doing so legally, for the betterment of security.

Why businesses need to help employees build friendships

The past few years have made this worse. At many companies, the entire staff quickly became remote, and the days of team lunches, onsite gyms, happy hours, and chats in the hallway disappeared. Suddenly, that company culture ceased to exist. Even as some people returned to the office many weeks or months later, many others did not. As companies institute remote or hybrid working environments on a permanent basis, there are fewer opportunities to build relationships with colleagues in person. The loss of work friendships is likely one reason so many people are choosing to leave their jobs, as CNBC reported. And among those who stay, success and creativity take a hit. In a recent study, Yasin Rofcanin, a professor of management at the University of Bath in the UK, and a group of colleagues found that friendship between coworkers is the most crucial element for enhancing employee performance. The isolation takes perhaps the biggest toll on mental and emotional health. Feelings of isolation are deeply intertwined with stress and anxiety. Without other people to lean on, it can be much more difficult for colleagues to find the resiliency they need to face each workday.

The three most dangerous types of internal users to be aware of

Cautious users are willing to comply with new protocol changes, but just need some time to fully adjust. They may need more gentle encouragement than the typical user, as they take more of a “wait-and-see” approach to new cyber security changes. This may be due to fear that any changes could disrupt their workflow. This can pose a serious risk as vulnerabilities are more exposed during major changes to security. ... Traditionalist users are generally hostile to change and often do not trust IT help desks, thinking that the processes for asking for help are too time consuming. Because they do not try to understand how these new changes will directly impact their everyday workloads, some may either wait until the last minute before integrating the new security changes, or resist altogether. ... Like traditionalists, overachievers may ignore cyber training sessions and emails from IT, or avoid learning new authentication processes – seeing these as below their skill level. However, this group of users is often overlooked when an assessment is performed, as through their own experiences, they may feel that the resources within the organisation are not adequate.

Quote for the day:

"Increasingly, management's role is not to organize work, but to direct passion and purpose." -- Greg Satell
