Recent advances in cloud networking point toward a NoOps approach: automating processes that currently depend on direct control by human networking experts. One advance is that the as-a-service revolution is finally reaching cloud networking infrastructure. What began with virtualized networking hardware has more recently progressed to software-defined (SD) cloud routing, which lets distributed, heterogeneous networking infrastructure span public cloud, on-premises, hybrid cloud and multicloud environments. SD cloud routing centralizes and automates networking functions that previously required time-consuming, hands-on attention from highly certified experts. As a result, it shifts control of networking infrastructure directly to CloudOps and DevOps engineers, who no longer depend on networking professionals to establish or maintain their cloud networking infrastructure.

8 key elements of an effective disaster recovery plan

As the Southeast U.S. continues to recover from Hurricane Florence, IT leaders should consider the effect hurricanes and other natural disasters have on healthcare information needs, both now and in the future, and prepare before the next challenge strikes. The sobering fact is that more than half of organizations (58 percent) are not ready for a major loss of data. In fact, 60 percent will go bankrupt within six months, according to data from Washington, D.C.-based research firm Clutch. ... To prepare for a disaster, organizations need a strong DR plan and must be willing to go above and beyond it in implementation. Not only should a company build its core processes into a DR plan and designate a team for DR tasks, it should also perform a risk assessment to determine what challenges might arise and how dangerous each one is. One way to do this is security penetration testing, in which an organization tests its systems' security by trying to exploit their weaknesses. Since disaster recovery is extremely important for healthcare compliance and in other regulated industries, it is best to also incorporate compliance into security and DR planning.
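One common way to carry out the risk assessment described above is to score each identified threat by likelihood times impact and rank the results. The Python sketch below is our own illustration of that generic technique, not the article's method; the 1 to 5 scales and the sample threats are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    """One threat identified during a DR risk assessment (hypothetical model)."""
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (catastrophic)

    @property
    def score(self) -> int:
        # Generic likelihood x impact scoring; not prescribed by the article.
        return self.likelihood * self.impact


def rank_risks(risks: list[Risk]) -> list[Risk]:
    """Return risks ordered from most to least dangerous."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


if __name__ == "__main__":
    risks = [  # hypothetical threats for a healthcare provider
        Risk("hurricane flooding of primary data center", likelihood=3, impact=5),
        Risk("ransomware on EHR file shares", likelihood=4, impact=4),
        Risk("single disk failure", likelihood=4, impact=1),
    ]
    for r in rank_risks(risks):
        print(f"{r.score:>2}  {r.name}")
```

The ranked list tells the DR team where to spend effort first; penetration testing can then refine the likelihood estimates for the security-related entries.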

Cooperation vital in cyber security, says former Estonian minister

Looking to the future, Kaljurand said states should come together and continue the UN Group of Governmental Experts (GGE) process. “But they have to change the process. If they want the process to be serious and respected, if they want it to be adopted by a wider number of states, [the process] has to be open, transparent and inclusive.” The challenge for states and governments is to find ways of cooperating so that those who want to contribute will have the chance to be part of the process, said Kaljurand. “The UN has to lead by example and say that ‘multi-stakeholder’ means all stakeholders: governments, businesses, industry, civil society, academia and the technical community,” she said. Within the context of the UN, Kaljurand said Western democracies should be much more active in promoting their understanding of the use of information and communication technologies and how technology can change countries in terms of economy, governance, people, education and awareness.

Scaling your developer community with plugins

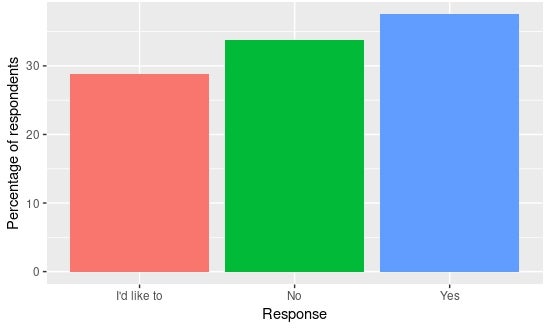

Scale the user community, and the developer community should grow too. But how? Attracting users isn't easy either; there's a reason we have marketing. Unless you have a big budget for events, materials, adverts, etc., scaling the user community isn't much easier than scaling the developer one. So we have limited options for attracting users, and virtually none for developers. What are we left with? Well, for ideas we could look at the ways users become developers. You'll always have a few people who were destined to become contributors: the right mix of domain knowledge, interest/drive, and programming skill. But there's a larger group who have an itch to scratch but perhaps aren't confident enough to dive into the full code base. These are the people we need to target. As evidence, here's some data from the most recent Foreman Community Survey (Foreman is the community I work in) showing that nearly one-third of the 160 respondents would like to contribute but don't know where to start.

Wi-Fi 6 is coming to a router near you

The basic technology behind Wi-Fi 6, still known as 802.11ax on the technical side, promises major advances beyond higher data rates, including better performance in dense radio environments and higher power efficiency. Wi-Fi 6 is also seen as a possible communications method for low-power internet-of-things (IoT) devices with limited battery life. Thanks to a feature called target wake time, Wi-Fi 6 IoT devices can keep their Wi-Fi connections shut down most of the time and wake only briefly, on a schedule, to transmit the data they've gathered since their last wake-up, thus extending battery life. Farpoint Group principal and Network World contributor Craig Mathias said that, given how strongly consumerization drives even enterprise IT these days, the renaming is probably a step in the right direction, but simply labeling 802.11ax as Wi-Fi 6 doesn't tell the whole story.
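The battery-life gain from target wake time comes from duty cycling: the radio draws full power only during its scheduled wake windows. Here is a minimal Python sketch of that arithmetic, assuming a simplified two-state (awake/asleep) power model; the current draws, battery capacity and schedule are hypothetical round numbers, not measurements of any Wi-Fi 6 device, and the model ignores wake-up overhead.

```python
def average_current_ma(active_ma: float, sleep_ma: float,
                       awake_s: float, interval_s: float) -> float:
    """Time-weighted average current for a device that is awake for awake_s
    seconds out of every interval_s seconds (simplified TWT-style schedule)."""
    if not 0 < awake_s <= interval_s:
        raise ValueError("awake_s must be in (0, interval_s]")
    duty = awake_s / interval_s  # fraction of time the radio is on
    return duty * active_ma + (1 - duty) * sleep_ma


def battery_life_hours(capacity_mah: float, avg_ma: float) -> float:
    """Ideal battery life, ignoring self-discharge and voltage sag."""
    return capacity_mah / avg_ma


if __name__ == "__main__":
    capacity = 1000.0  # hypothetical 1000 mAh battery
    always_on = average_current_ma(100.0, 0.01, awake_s=60, interval_s=60)
    twt = average_current_ma(100.0, 0.01, awake_s=1, interval_s=60)
    print(f"always on: {battery_life_hours(capacity, always_on):.1f} h")
    print(f"1 s awake per minute: {battery_life_hours(capacity, twt):.1f} h")
```

Under these assumed numbers, waking for one second per minute stretches battery life from 10 hours to several hundred, which is the effect the article attributes to target wake time.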

Mapping the Market for Agile Coaches

By no means did we arrive at definitive answers, but what we learned is an important contribution to the field. In fact, we believe this article contains more information on agile coach compensation than has ever been available in one place. In gathering it, we used only the highest-quality, verifiable data. As such, the data below comes from only three sources: agile coaching positions that the five agile coaches who participated in the meeting have held, mostly over the last two years; positions that the authors have been offered, mostly over the last year; and information that close, trusted friends of the authors have provided about their coaching positions. ... When extrapolating this information to other situations, we offer two cautions. First, the authors are mid-level agile coaches and above, so entry-level positions are likely under-represented. Second, the authors are located in the greater San Francisco Bay Area, one of the most expensive areas in the world; although not all of the positions are based there, most of them are.

Below the Surface, Microsoft is not the new Apple

When Surface was announced, there was much debate about whether Microsoft could break the pattern of doom that follows when OS licensors compete with their licensees. The company has largely avoided conflict by focusing its device portfolio on competing most directly with Apple's and by embracing Surface Pro-like products from other PC manufacturers. Surface's success has likely emboldened Google and Amazon to produce their own devices while continuing to seek broad licensing. One could even argue that Surface has given Microsoft more incentive to step up efforts such as its own stores and its retail areas within Best Buy, which also feature licensees' PCs. But think about that quip about the Surface not being a PC. What does it say about the merits of other products that are PCs and run the same version of Windows? The contrast between the treatment of Surface and of licensees was not pretty at Microsoft's 2017 fall education event, where it introduced the premium Surface Laptop while the third-party announcements focused on low-margin, Alcantara-bereft laptops.

How can IT put Windows 10 containers to use?

To use Windows 10 containers with Docker, IT must enable Microsoft Hyper-V on the endpoints it plans to deliver the containers to. Microsoft supports two types of Windows containers: Windows Server Containers and Hyper-V Isolation Containers. A Windows Server Container runs directly on the host and shares the host's kernel; only Windows Server can host Windows Server Containers. A Hyper-V Isolation Container runs in a highly optimized virtual machine with its own kernel, which isolates it more strongly from the host and makes it more secure than a Windows Server Container. Both Windows Server and Windows 10 can host Hyper-V Isolation Containers, which is why IT must enable Hyper-V on Windows 10 machines. Docker containers are well-suited for Agile application delivery, especially for applications based on a microservice architecture, in which the services within an app run separately from one another. IT can easily create and deploy Windows 10 containers on developer desktops and testing machines and then promote them to production.
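The host rules above can be encoded when scripting container launches. This minimal Python sketch follows the article's rules (process isolation only on Windows Server, Hyper-V isolation on either host) and builds, but does not run, a `docker run` command using Docker's `--isolation` option (`process` or `hyperv`). The image name is hypothetical, and newer Windows 10 and Docker releases may have relaxed these rules, so check current documentation before relying on them.

```python
from enum import Enum


class HostOS(Enum):
    WINDOWS_SERVER = "windows-server"
    WINDOWS_10 = "windows-10"


def docker_run_args(image: str, host: HostOS, prefer_process: bool = True) -> list[str]:
    """Build a `docker run` argv with an isolation mode the host can provide.

    Per the article: Windows Server can run Windows Server (process-isolated)
    containers or Hyper-V isolation containers; Windows 10 runs Hyper-V
    isolation containers only, so Hyper-V must be enabled there.
    """
    if host is HostOS.WINDOWS_SERVER and prefer_process:
        isolation = "process"  # shares the host's kernel
    else:
        isolation = "hyperv"   # runs in its own lightweight utility VM
    return ["docker", "run", "--rm", f"--isolation={isolation}", image]


if __name__ == "__main__":
    image = "contoso/orders-service:1.0"  # hypothetical image name
    for host in HostOS:
        print(f"{host.value}: {' '.join(docker_run_args(image, host))}")
```

Keeping the isolation choice in one function lets the same deployment script target developer Windows 10 desktops and Windows Server production hosts.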

Intel, AMD both claim server speed records

Even more impressive is that all systems tested included mitigations for the Spectre and Meltdown vulnerabilities. Mitigating those CPU flaws requires disabling some functions either in software or at the firmware level, which can mean performance hits, sometimes significant ones. The results suggest Intel has a per-core advantage, since its top Xeons have 28 cores to the 32 of AMD's Epyc. And one benchmark shows Intel's AVX-512 extensions, which accelerate floating-point (vector) instructions and affect compute, storage and networking functions, clobbering Epyc. So it's a big deal. The downside? The chips might be hard to get. Last week Intel CFO and interim CEO Robert Swan published a letter saying that the company was suffering from a chip shortage due to increasing demand, but Swan assured customers the company would be able to meet demand. The good news for data center operators is that the problem seems to affect the PC side more than the server side.
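A per-core advantage follows from the core counts only if the two platforms post comparable totals: per-core throughput is the benchmark score divided by the number of cores. The Python sketch below uses hypothetical scores, since the article gives no figures. With equal totals, a 28-core part delivers 32/28, or about 14 percent more, per core than a 32-core part; it breaks even per core when its total is 28/32, or 87.5 percent, of the rival's.

```python
def per_core(score: float, cores: int) -> float:
    """Benchmark score divided by physical core count."""
    if cores <= 0:
        raise ValueError("cores must be positive")
    return score / cores


def per_core_ratio(score_a: float, cores_a: int, score_b: float, cores_b: int) -> float:
    """Per-core score of chip A relative to chip B (> 1.0 means A leads per core)."""
    return per_core(score_a, cores_a) / per_core(score_b, cores_b)


if __name__ == "__main__":
    # Hypothetical equal totals; the article does not publish scores.
    print(f"equal totals: {per_core_ratio(1000, 28, 1000, 32):.3f}")
    # Break-even: the 28-core part scoring 87.5% of the 32-core total.
    print(f"break-even:   {per_core_ratio(875, 28, 1000, 32):.3f}")
```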

Four critical KPIs for securing your IT environment

So, what should you be measuring when it comes to your security program? As the old saying goes: if you can’t measure it, you can’t manage it. Here are four key performance indicators (KPIs) that can help enterprises navigate the murky waters of cybersecurity and reduce anxiety about the possibility of cyberattacks. ... One practice that is key to this KPI is patching, so be sure to document patch cycles. However, some assets, such as industrial control systems, stamping presses or other industrial systems, may not be patchable. Often, the equipment manufacturer will not support an updated operating system. Where patching is not an option, the next best step is application whitelisting on the asset, which restricts it to approved applications so it functions as a fixed-purpose device. That said, neither patching nor whitelisting is a silver bullet: there are still many assets for which neither option is feasible. In that case, the only option is isolation; in many cases, isolating an asset in its own network segment is the only way to enhance its security.
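The fallback order described above (patch, else whitelist, else isolate) can be sketched as a small decision function plus a tally that could feed a KPI report. This is a minimal Python illustration; the `Asset` fields and the asset names are hypothetical, and in practice the inputs would come from an asset inventory.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Mitigation(Enum):
    PATCH = "patch"
    WHITELIST = "application whitelisting"
    ISOLATE = "isolate in own network segment"


@dataclass(frozen=True)
class Asset:
    name: str
    patchable: bool              # vendor still supports updating its OS
    supports_whitelisting: bool  # can be locked down to approved applications


def choose_mitigation(asset: Asset) -> Mitigation:
    """Apply the fallback order: patch, else whitelist, else isolate."""
    if asset.patchable:
        return Mitigation.PATCH
    if asset.supports_whitelisting:
        return Mitigation.WHITELIST
    return Mitigation.ISOLATE


def mitigation_breakdown(assets: list[Asset]) -> Counter:
    """Count assets per mitigation, e.g. for a periodic KPI report."""
    return Counter(choose_mitigation(a) for a in assets)


if __name__ == "__main__":
    inventory = [  # hypothetical inventory
        Asset("finance-laptop-042", patchable=True, supports_whitelisting=True),
        Asset("hmi-line-3", patchable=False, supports_whitelisting=True),
        Asset("stamping-press-plc", patchable=False, supports_whitelisting=False),
    ]
    for mitigation, count in mitigation_breakdown(inventory).items():
        print(f"{mitigation.value}: {count}")
```

Tracking how many assets fall into each bucket over time shows whether the unpatchable, isolation-only portion of the estate is shrinking or growing.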

Quote for the day:

"One of the sad truths about leadership is that, the higher up the ladder you travel, the less you know." -- Margaret Heffernan
