Smaller micro datacentres utilising a modular approach are making it possible for data processing facilities to be based either on-site or as close to the location as possible. This edge computing is essential as it gives manufacturers the capabilities to run real-time analytics, rather than vast volumes of data needing to be shipped all the way to the cloud and back for processing. Modular datacentres give operators the scope to ‘pay as you grow’ as and when the time comes for expansion. The rise of modular UPS provides similar benefits in terms of power protection requirements too. And with all the additional revenues a datacentre could make from Industry 4.0, the need for a reliable and robust continuous supply of electricity becomes even more imperative. Transformerless modular UPSs deliver higher power density in less space, run far more efficiently at all power loads so waste less energy, and also don’t need as much energy-intensive air conditioning to keep them cool. Any data centre manager planning to take advantage of manufacturers’ growing data demands would be wise to review their current power protection capabilities.

Angular Application Generator - an Architecture Overview

Most of the time, tools like Angular CLI and Yeoman are helpful if we decide to simplify source generation by following a strict, standardized and pragmatic way of developing an application. Angular CLI is useful for scaffolding. Yeoman, meanwhile, offers very interesting generators for Angular and brings a great deal of flexibility, because you can write your own generator to meet your needs. Both Yeoman and Angular CLI enrich software development through their ecosystems, delivering a large set of predefined templates to build the initial software skeleton. On the other hand, relying on templating alone can be hard. Sometimes standardized rules can be translated into a couple of templates, which is useful in many scenarios. But that is not the case when trying to automate very different forms with many variations, layouts and fields, for which countless combinations of templates would have to be produced. That brings only headaches and long-term issues, because it reduces maintainability and incurs technical debt.
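One way to picture the alternative to a template per form variation is to describe each form as data and generate the markup from that description. The sketch below is only an illustration under assumptions: `FieldSpec`, `FormSpec`, `renderField` and `generateForm` are hypothetical names, not part of Angular CLI, Yeoman, or the generator the article describes, and the output is plain HTML rather than an Angular component.

```typescript
// Hypothetical metadata for one form field (not an Angular or Yeoman type).
interface FieldSpec {
  name: string;
  label: string;
  kind: "text" | "number" | "checkbox";
  required?: boolean;
}

// Hypothetical metadata for a whole form.
interface FormSpec {
  title: string;
  fields: FieldSpec[];
}

// Render a single field as plain HTML.
// Assumes the spec values are trusted; no HTML escaping is done here.
function renderField(field: FieldSpec): string {
  const required = field.required ? " required" : "";
  return (
    `  <label for="${field.name}">${field.label}</label>\n` +
    `  <input id="${field.name}" name="${field.name}" type="${field.kind}"${required}>`
  );
}

// Build the complete form from its description, so a new variation
// means a new spec object instead of a new template file.
function generateForm(spec: FormSpec): string {
  const body = spec.fields.map(renderField).join("\n");
  return `<form>\n  <h2>${spec.title}</h2>\n${body}\n</form>`;
}

// Example spec; adding or reordering fields needs no template changes.
const signup: FormSpec = {
  title: "Sign up",
  fields: [
    { name: "email", label: "Email", kind: "text", required: true },
    { name: "age", label: "Age", kind: "number" },
    { name: "terms", label: "Accept terms", kind: "checkbox", required: true },
  ],
};

const signupHtml = generateForm(signup);
console.log(signupHtml);
```

With this shape, the number of templates stays constant while the number of form variations grows, which is the maintainability concern raised above.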

Asigra evolves backup/recovery to address security, compliance needs

Asigra addresses the Attack-Loop problem by embedding multiple malware detection engines into its backup stream as well as the recovery stream. As the backups happen, these engines are looking for embedded code and use other techniques to catch the malware, quarantine it, and notify the customer to make sure malware isn’t unwittingly being carried over to the backup repository. On the flip side, if the malware did get into the backup repositories at some point in the past, the malware engines conduct an inspection as the data is being restored to prevent re-infection. Asigra also has added the ability for customers to change their backup repository name so that it’s a moving target for viruses that would seek it out to delete the data. In addition, Asigra has implemented multi-factor authentication in order to delete data. An administrator must first authenticate himself to the system to delete data, and even then the data goes into a temporary environment that is time-delayed for the actual permanent deletion. This helps to assure that malware can’t immediately delete the data. These new capabilities make it more difficult for the bad guys to render the data protection solution useless and make it more likely that a customer can recover from an attack and not have to pay the ransom.
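The time-delayed deletion described above can be sketched roughly as follows. This is a minimal illustration under assumptions, not Asigra's implementation or API: the class `DelayedDeleteVault`, its methods, and the two boolean factor checks are hypothetical stand-ins, and time is passed in explicitly so the behaviour is easy to follow.

```typescript
// Hypothetical sketch of "authenticate, then hold before purging".
// None of these names come from Asigra's product.
class DelayedDeleteVault {
  // Backups currently stored, keyed by id.
  private backups: { [id: string]: boolean } = {};
  // Pending deletions: id -> earliest time (ms) at which purge is allowed.
  private purgeAt: { [id: string]: number } = {};

  constructor(private holdMs: number) {}

  addBackup(id: string): void {
    this.backups[id] = true;
  }

  has(id: string): boolean {
    return this.backups[id] === true;
  }

  // A delete request needs both factors and only schedules the deletion.
  requestDelete(id: string, passwordOk: boolean, secondFactorOk: boolean, now: number): boolean {
    if (!passwordOk || !secondFactorOk || !this.has(id)) {
      return false;
    }
    this.purgeAt[id] = now + this.holdMs;
    return true;
  }

  // During the hold period the request can still be withdrawn.
  cancelDelete(id: string): boolean {
    if (!(id in this.purgeAt)) {
      return false;
    }
    delete this.purgeAt[id];
    return true;
  }

  // Permanently remove only the entries whose hold period has elapsed.
  purgeExpired(now: number): string[] {
    const purged: string[] = [];
    for (const id of Object.keys(this.purgeAt)) {
      if (now >= this.purgeAt[id]) {
        delete this.backups[id];
        delete this.purgeAt[id];
        purged.push(id);
      }
    }
    return purged;
  }
}
```

The point of the design is that even a request that passes authentication cannot destroy data immediately, which leaves a window in which an administrator can notice and cancel a malicious deletion.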

Moving away from analogue health in a digital world

Technology has an important role to play in the delivery of world-class healthcare. Secure information about a patient should flow through healthcare systems seamlessly. The quality, cost and availability of healthcare services depend on timely access to secure and accurate information by authorised caregivers. Interoperability cannot be solved by any one organisation in isolation. What is needed is for providers, innovators, payers, governing bodies and standards development organisations to come together to apply innovative and agile solutions to the problems that healthcare presents. We can clearly see the pressure that healthcare organisations are under at the moment. Researchers at the universities of Cambridge, Bristol, and Utah found that a staggering 14 million people in England now have two or more long-term conditions – putting a major strain on the UK’s healthcare services. To add to this, recent figures show that more than half of healthcare professionals believe the NHS’s IT systems are not fit for purpose.

Shadow IT is a Good Thing for IT Organizations

The move to shadow IT is a good thing for IT. Why? It is a wake-up call. It provides a clear message that IT is not meeting the requirements of the business. IT leaders need to rethink how to transform the IT organization to better serve the business and get ahead of the requirements. There is a significant opportunity for IT to play a leading role in business today. However, it goes beyond just the nuts and bolts of support and technology. It requires IT to get more involved in understanding how business units operate and proactively seek opportunities to advance their objectives. It requires IT to reach beyond the cultural norms that have been built over the past 10, 20, 30 years. A new type of IT organization is required. A fresh coat of paint won’t cut it. Change is hard, but the opportunities are significant. This is more of a story about moving from a reactive state to a proactive state for IT. For many, it does require a significant change in the way IT operates, both internally within the IT organization and externally in the non-IT organizations. The opportunities can radically transform the value IT brings to driving the business forward.

Fintech is disrupting big banks, but here’s what it still needs to learn from them

Though it’s definitely possible to grow while managing risk intelligently, it’s also true that pressure to match the “hockey-stick” growth curves of pure tech startups can lead fintechs down a dangerous path. Startups should avoid the example of Renaud Laplanche, former CEO of peer-to-peer lender Lending Club, who was forced to resign in 2016 after selling loans to an investor that violated that investor’s business practices, among other accusations of malfeasance. It’s not just financial risk that they may manage badly: the sexual harassment scandal that recently rocked fintech unicorn SoFi shows that other types of risky behavior can impact bottom lines, too. While it might be common for pure tech startups to ask forgiveness, not permission, when it comes to the tactics they use to expand, fintechs should be aware that they’re playing in a different, more risk-sensitive space. Here again, they can learn from banks — who will also, coincidentally, look for sound risk management practices in all their partners. Since the 2008 crisis, financial institutions have increasingly taken a more holistic approach to risk as the role of the chief risk officer (CRO) has broadened.

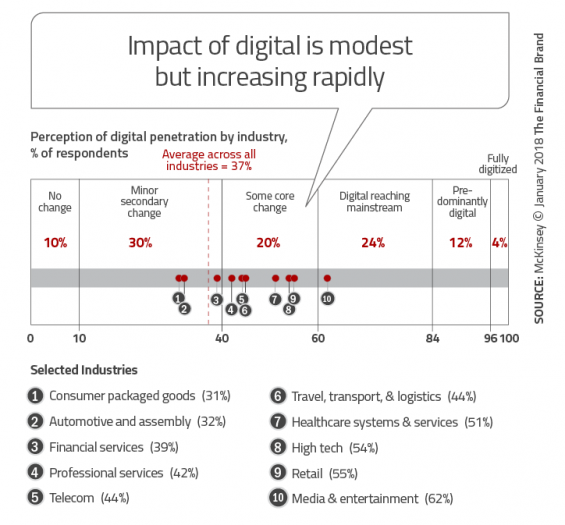

The Banking Industry Sorely Underestimates The Impact of Digital Disruption

According to McKinsey, many organizations underestimate the increasing momentum of digitization. This includes the speed of technological changes, resultant behavioral changes and the scale of disruption. “Many companies are still locked into strategy-development processes that churn along on annual cycles,” states McKinsey. “Only 8% of companies surveyed said their current business model would remain economically viable if their industry keeps digitizing at its current course and speed.” Most importantly, McKinsey found that most organizations also underestimate the work that is needed to transform an organization for tomorrow’s reality. Much more than developing a better mobile app, organizations need to transform all components of an organization for a digital universe. If this is not done successfully, an organization risks being either irrelevant to the consumer or non-competitive in the marketplace … or both. Complacency in the banking industry can be partially blamed on the fact that digitization of banking has only just begun to transform the industry. No industry has been transformed entirely, with banking just beginning to realize core changes.

Why tech firms will be regulated like banks in the future

First, we have become addicted to technology. We live our lives staring at our devices rather than talking to each other or watching where we are going. It’s not good for us and, according to Pew Research, is responsible for more and more suicides, particularly amongst the young. Pew analysis found that the generation of teens they call the “iGen” – those born after 1995 – is much more likely to experience mental health issues than their millennial predecessors. Second is privacy. Facebook and other internet giants are abusing our privacy rights in order to generate ad revenues, as demonstrated by Cambridge Analytica, but they’re not the only ones. Google, Alibaba, Tencent, Amazon and more are all making bucks from analyzing our digital footprints and we let them because we’re enjoying it, as I blogged about recently. Third is that the power of these firms is too much. When six firms – Google (Alphabet), Amazon, Facebook, Tencent, Alibaba and Baidu – have almost all the information on all the citizens of the world held digitally, it creates a backlash and a fear. I’ve thought about this much and believe that it will drive a new decentralized internet.

Ericsson and SoftBank deploy machine learning radio network

"SoftBank was able to automate the process for radio access network design with Ericsson's service. Big data analytics was applied to a cluster of 2,000 radio cells, and data was analysed for the optimal configuration." Head of Managed Services Peter Laurin said Ericsson is investing heavily in machine learning technology for the telco industry, citing strong demand across carriers for automated networks. To support this, Ericsson is running an Artificial Intelligence Accelerator Lab in Japan and Sweden for its Network Design and Optimization teams to develop use cases. In introducing its suite of network services for "massive Internet of Things" (IoT) applications in July last year, Ericsson had also added automated machine learning to its Network Operations Centers in a bid to improve the efficiency and bring down the cost of the management and operation of networks. According to SoftBank's Tokai Network Technology Department radio technology section manager Ryo Manda, SoftBank is now collaborating with Ericsson on rolling out the solution to other regions. "We applied Ericsson's service on dense urban clusters with multi-band complexity in the Tokai region," Manda said.

Models and Their Interfaces in C# API Design

A real data model is deterministically testable. Which is to say, it is composed only of other deterministically testable data types until you get down to primitives. This necessarily means the data model cannot have any external dependencies at runtime. That last clause is important. If a class is coupled to the DAL at run time, then it isn’t a data model. Even if you “decouple” the class at compile time using an IRepository interface, you haven’t eliminated the runtime issues associated with external dependencies. When considering what is or isn’t a data model, be careful about “live entities”. In order to support lazy loading, entities that come from an ORM often include a reference back to an open database context. This puts us back into the realm of non-deterministic behavior, in which the behavior changes depending on the state of the context and how the object was created. To put it another way, all methods of a data model should be predictable based solely on the values of its properties. ... Parent and child objects often need to communicate with each other. When done incorrectly, this can lead to tightly cross-coupled code that is hard to understand.
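The contrast between a plain data model and a "live entity" can be sketched as below. The article is about C#, but the sketch uses TypeScript, and every name in it (`OrderLine`, `Order`, `OrderContext`, `LiveOrder`) is a hypothetical illustration rather than code from the article or from any ORM.

```typescript
// A plain data model: built only from primitives and other plain models.
class OrderLine {
  constructor(
    readonly sku: string,
    readonly unitPrice: number,
    readonly quantity: number
  ) {}

  // Depends only on this object's own property values.
  total(): number {
    return this.unitPrice * this.quantity;
  }
}

class Order {
  constructor(readonly id: number, readonly lines: OrderLine[]) {}

  // Same inputs always give the same result, so it is deterministically testable.
  total(): number {
    return this.lines.reduce((sum, line) => sum + line.total(), 0);
  }
}

// Hypothetical stand-in for an ORM database context.
interface OrderContext {
  isOpen: boolean;
  loadLines(orderId: number): OrderLine[];
}

// A "live entity": lazy loading ties its behavior to external state.
class LiveOrder {
  private cached: OrderLine[] | null = null;

  constructor(readonly id: number, private context: OrderContext) {}

  // The outcome depends on whether the context is still open and on
  // whether the lines were already loaded, not only on property values.
  lines(): OrderLine[] {
    if (this.cached === null) {
      if (!this.context.isOpen) {
        throw new Error("context is closed");
      }
      this.cached = this.context.loadLines(this.id);
    }
    return this.cached;
  }
}
```

Testing `Order.total()` needs nothing but constructor arguments, whereas testing `LiveOrder.lines()` requires setting up, and reasoning about, the state of the context it was created with.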

Quote for the day:

"Strategy Execution is the responsibility that makes or breaks executives" -- Alan Branche and Sam Bodley
