Besieged Cambridge Analytica Shuts Down

On Tuesday, at Facebook's F8 developer event, the social media giant announced a number of measures to put control of data use back in the hands of users, including the ability to scrub all of their data. "Cambridge Analytica should be viewed as a cautionary tale for any firm handling personal data," says Julie Conroy, director at Aite Group. "Just as the rash of breaches turned cybersecurity into a C-suite and board-level issue over the past few years, the firestorm around Cambridge Analytica's various abuses illustrates why consumer data control and privacy also need to be top-of-mind issues for all company executives." When the Facebook data leak scandal broke in March, the scale of its impact and aftershocks quickly became apparent. Facebook's CEO, Mark Zuckerberg, eventually testified before U.S. House and Senate committees about the firm's privacy practices. But because Zuckerberg has not appeared before the UK parliamentary committee chaired by Damian Collins, despite repeated requests, Collins warned Facebook in a Tuesday letter that he is prepared to issue a summons for Zuckerberg's appearance.

What is IO Acceleration? – JetStream Software Briefing Note

Caches are small and volatile; IO Acceleration is large and durable. Caches were designed when memory-based storage was very expensive. If an organization could serve 50% of its IO operations from cache, that was considered effective, but it still meant that the other 50% of the traffic had to cross the network and read data from hard disks. In-memory caches are also not durable: a power loss means data loss. That risk made them unsafe for write caching, so all writes had to go to the hard disk tier. While writes make up a smaller share of the IO in a typical environment, they are the slowest part of the IO chain. Flash writes data more slowly than it reads it, and each write often triggers an additional set of writes for flash management and data protection. The typical sizing of IO Acceleration, on the other hand, allows it to service 90% or more of all read requests, and its design lets it work with a variety of storage devices, including all-flash arrays and even the cloud. IO Acceleration is also durable: it protects data outside of the system on which the acceleration software is installed, so that a power failure or even a server failure does not result in data loss.
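To make the 50% versus 90% comparison concrete, here is a back-of-the-envelope model of read traffic only. It is a sketch, not JetStream's sizing method: the class and method names are hypothetical, and the hit and miss latencies in `main` are assumed round numbers rather than measurements of any product. Writes and the durability question are deliberately not modeled.

```java
/**
 * Simple expected-value model of a read acceleration tier.
 * Latency figures in main() are illustrative assumptions only.
 */
class ReadHitModel {

    /** Fraction of reads that miss the acceleration tier and go to backing storage. */
    static double backendReadFraction(double hitRatio) {
        if (hitRatio < 0.0 || hitRatio > 1.0) {
            throw new IllegalArgumentException("hitRatio must be in [0, 1]");
        }
        return 1.0 - hitRatio;
    }

    /** Expected read latency: hits are served locally, misses pay the backend cost. */
    static double averageReadLatencyMicros(double hitRatio, double hitMicros, double missMicros) {
        return hitRatio * hitMicros + backendReadFraction(hitRatio) * missMicros;
    }

    public static void main(String[] args) {
        double hitMicros = 100.0;   // assumed latency of a local hit
        double missMicros = 5000.0; // assumed latency of a network + disk miss
        for (double hit : new double[] {0.50, 0.90}) {
            System.out.printf("hit ratio %.0f%%: %.0f%% of reads reach the backend, avg %.0f us%n",
                    hit * 100, backendReadFraction(hit) * 100,
                    averageReadLatencyMicros(hit, hitMicros, missMicros));
        }
    }
}
```

Whatever latencies you plug in, raising the hit ratio from 50% to 90% cuts the reads that reach the backend from half to a tenth, a fivefold reduction in backend read traffic.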

Blockchain: Prep starts now; adoption comes later

To avoid investing in hardware, early blockchain experiments will likely run on pay-per-use models such as public cloud. While this will allow projects to scale, companies will have to contend with issues related to costs, security, data privacy, compliance, and vendor lock-in. In the meantime, what should companies do to prepare for blockchain? Now is a good time to start evaluating use cases. Vendor or conference workshops can help educate IT and line-of-business executives about what blockchain can do today and get them started on documenting the processes for specific use cases. Workshops are also an opportunity to get both internal and external parties involved. Generally speaking, companies wouldn't use a blockchain inside a single organization. A stronger value proposition comes from a consortium blockchain that spans multiple organizations, establishing a trusted mechanism for recording transactions, implementing smart contracts, and building other blockchain applications.
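The "trusted mechanism for recording transactions" rests on hash chaining: each block stores the hash of the previous one, so altering an earlier record breaks every link after it. The sketch below shows only that tamper-evidence idea using the JDK's SHA-256 `MessageDigest`. The class, the block layout, and the `tamper` helper are hypothetical, and a real consortium blockchain also needs digital signatures, replication across member organizations, and a consensus protocol, none of which appear here.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.ArrayList;
import java.util.List;

/** Minimal in-memory hash chain (illustration only: no consensus, no signatures). */
class HashChainLedger {
    record Block(int index, String data, String prevHash, String hash) {}

    private static final String GENESIS_PREV = "0".repeat(64);
    private final List<Block> blocks = new ArrayList<>();

    static String sha256Hex(String input) {
        try {
            MessageDigest md = MessageDigest.getInstance("SHA-256");
            byte[] digest = md.digest(input.getBytes(StandardCharsets.UTF_8));
            StringBuilder sb = new StringBuilder();
            for (byte b : digest) sb.append(String.format("%02x", b));
            return sb.toString();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("SHA-256 unavailable", e);
        }
    }

    // A block's hash covers its position, its predecessor's hash, and its payload.
    private static String hashOf(int index, String data, String prevHash) {
        return sha256Hex(index + "|" + prevHash + "|" + data);
    }

    Block append(String data) {
        String prev = blocks.isEmpty() ? GENESIS_PREV : blocks.get(blocks.size() - 1).hash();
        int index = blocks.size();
        Block b = new Block(index, data, prev, hashOf(index, data, prev));
        blocks.add(b);
        return b;
    }

    /** Recomputes every hash and link; returns false if any record was altered. */
    boolean verify() {
        String expectedPrev = GENESIS_PREV;
        for (int i = 0; i < blocks.size(); i++) {
            Block b = blocks.get(i);
            if (b.index() != i || !b.prevHash().equals(expectedPrev)
                    || !b.hash().equals(hashOf(b.index(), b.data(), b.prevHash()))) {
                return false;
            }
            expectedPrev = b.hash();
        }
        return true;
    }

    /** Demo helper: overwrite a block's payload without recomputing its hash. */
    void tamper(int index, String newData) {
        Block old = blocks.get(index);
        blocks.set(index, new Block(old.index(), newData, old.prevHash(), old.hash()));
    }
}
```

In a consortium setting, each member would hold a copy of the chain and could run a check like `verify()` independently, which is what lets parties that do not fully trust each other agree on the shared record.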

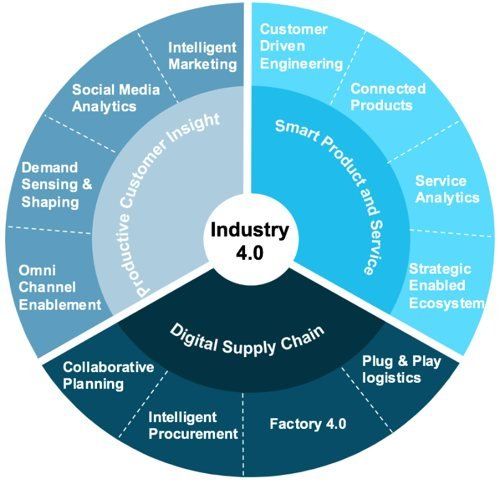

Digitization makes the Supply Chain agile and customer-related

Industry 4.0 truly adds value to operations by providing the capability to analyse large amounts of data. Big Data analytics is one of the pillars of this new revolution, and supply chain personnel need to understand that there will simply be more information coming their way. Everything involved in a process, from a warehouse rack to a guillotine machine to a supply container, will have the ability to communicate, and all of that data will then require analysis; the chief supply chain officer (CSCO) needs to be ready for this. Highly automated process equipment and complex IT infrastructure do not eliminate the need for workers. On the contrary, they create the need for highly skilled workers who can effectively utilise the information available to them. The future workforce will need to be competent at problem solving and systems engineering. It is crucial for a leader to understand the current workforce and its capabilities in order to help prepare existing staff for the challenges Industry 4.0 brings. Another key aspect for the CSCO to consider is end-to-end visibility across the supply chain.

Why Google Assistant could help make Android wearables more business-friendly

With the latest changes to Wear OS, Google has added two features to make using your watch as an organizational tool easier and more precise. Smart suggestions generate options to narrow a query, with Google using the example of asking Wear OS about the weather. When a user asks about the weather, the current temperature and conditions appear on the screen; nothing is new there. What is new are suggestions available with a swipe up from the bottom of the screen: an evening forecast, weekend weather, and other recommendations appear as tappable buttons. Suggestions are available for various interactions and functions, similar to Google Assistant suggestions on Android smartphones. Google said it designed smart suggestions for quick interactions on the go, which can be great if you don't want to have an extended conversation with your wrist in public. The second productivity feature Google added to Wear OS is spoken responses, which can be a huge boon for busy people. Instead of displaying text on the screen for certain interactions, Assistant will now speak its responses aloud through the watch's internal speaker or a connected Bluetooth device.

9 machine learning myths

Machine learning is proving so useful that it's tempting to assume it can solve every problem and apply to every situation. Like any other tool, machine learning is useful in particular areas, especially for problems you’ve always had but knew you could never hire enough people to tackle, or for problems with a clear goal but no obvious method for achieving it. Still, every organization is likely to take advantage of machine learning in one way or another; 42% of executives recently told Accenture they expect AI to be behind all their new innovations by 2021. But you’ll get better results if you look beyond the hype and avoid these common myths by understanding what machine learning can and can’t deliver. Machine learning and artificial intelligence are frequently used as synonyms, but while machine learning is the technique that has most successfully made its way out of research labs into the real world, AI is a broad field covering areas such as computer vision, robotics and natural language processing, as well as approaches such as constraint satisfaction that don’t involve machine learning. Think of AI as anything that makes machines seem smart.

NSA: The Silence of the Zero Days

Many organizations would do well to focus more on locking down their systems and worry less about whether they might be targeted by a zero-day attack. "At the end of the day, if you're bleeding from the eyeballs, just stop the bleeding," BluVector's Lovejoy told me. But as the Equifax breach dramatically demonstrated, it's tough to keep track of all patches. According to software vendor Flexera's Secunia research team, the number of documented, unique vulnerabilities in software increased from 17,147 in 2016 to 19,954 in 2017 - an increase of about 16 percent - across about 2,000 products from 200 vendors. The good news, Flexera's Alejandro Lavie told me at RSA, is that "86 percent of [newly announced] vulnerabilities have a patch available within 24 hours of their disclosure." But as the NSA's Hogue warned, patches can be quickly reverse-engineered by hackers, whether criminals, nation-states or others. So organizations need to do a better job of hardening their hardware and software, which means not only tracking but also applying patches everywhere they're required, as quickly as possible.
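Tracking patches boils down to comparing what is installed against the first fixed version named in each advisory. The sketch below assumes a hypothetical inventory (product name mapped to installed version) and hypothetical advisory records; it only handles plain dotted numeric versions such as `2.4.10`, so real-world schemes with pre-release or vendor suffixes would need a proper version parser.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

/** Flags advisories whose fixed version is newer than what is installed (illustrative). */
class PatchGapChecker {
    /** Hypothetical advisory: any installed version below fixedIn is vulnerable. */
    record Advisory(String id, String product, String fixedIn) {}

    /** Compares dotted numeric versions, e.g. "2.4.10" > "2.4.9"; missing parts count as 0. */
    static int compareVersions(String a, String b) {
        String[] pa = a.split("\\.");
        String[] pb = b.split("\\.");
        int n = Math.max(pa.length, pb.length);
        for (int i = 0; i < n; i++) {
            int x = i < pa.length ? Integer.parseInt(pa[i]) : 0;
            int y = i < pb.length ? Integer.parseInt(pb[i]) : 0;
            if (x != y) return Integer.compare(x, y);
        }
        return 0;
    }

    /** Returns IDs of advisories that apply to installed products but are not yet patched. */
    static List<String> missingPatches(Map<String, String> installed, List<Advisory> advisories) {
        List<String> missing = new ArrayList<>();
        for (Advisory adv : advisories) {
            String version = installed.get(adv.product());
            // Skip products we do not run; flag the ones still below the fixed version.
            if (version != null && compareVersions(version, adv.fixedIn()) < 0) {
                missing.add(adv.id());
            }
        }
        return missing;
    }
}
```

A numeric comparison matters here: comparing the strings `"2.4.10"` and `"2.4.9"` lexically would wrongly treat the patched version as older.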

GDPR could be Facebook's toughest data management test yet

One of the most heated exchanges came between Conservative MP Julian Knight and Facebook CTO Mike Schroepfer, the article said, with Knight calling Facebook a "morality-free zone" that was destructive to privacy and not an innocent party wronged by Cambridge Analytica. "Your company is the problem," he said. Facebook’s vice president and chief privacy officer Erin Egan and vice president and deputy general counsel Ashlie Beringer recently posted an update about the company's GDPR compliance plans and new privacy protections. They introduced new “privacy experiences for everyone on Facebook” as part of GDPR compliance, including updates to its terms and data policy. All users will be asked to review information about how Facebook uses data and to make choices about their privacy on the social network. The company said it would begin by rolling these choices out in Europe. “As soon as GDPR was finalized, we realized it was an opportunity to invest even more heavily in privacy,” the posting said. “We not only want to comply with the law, but also go beyond our obligations to build new and improved privacy experiences for everyone on Facebook.”

A Multi-Gateway Payment Processing Library for Java

J2Pay is an open source multi-gateway payment processing library for Java that provides a simple, generic API for many gateways. It saves developers from writing separate code for each gateway: you write the code once and it works with all of them. It also spares you the effort of reading each gateway's documentation. ... While working with J2Pay, you will always be passing and retrieving JSON. Yes, no matter which format a gateway's API uses natively, you will always be using JSON, and for that, I used the org.json library. You do not have to worry about gateway-specific variables, such as some gateways returning transaction IDs as transId and others as transnum. Instead, J2Pay always returns transactionId, and it gives you the same response format no matter which gateway you are using. My first and favorite point is that you should not need to read the gateway's documentation, because a developer has already done that for you (maybe you are the developer who integrated the gateway).
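The "write once for all gateways" idea is essentially the adapter pattern: the application codes against one interface, and each gateway adapter translates its native response, whether it says `transId` or `transnum`, into one common shape with a `transactionId`. The sketch below illustrates that pattern only; it is not J2Pay's actual API. Every type and method name is hypothetical, the two gateways are simulated in memory, and plain `Map`s stand in for the JSON that J2Pay handles with org.json, to keep the example dependency-free.

```java
import java.util.Map;

/** Adapter-pattern sketch of a multi-gateway API (hypothetical names, simulated gateways). */
class GatewayDemo {
    /** Common response shape returned no matter which gateway handled the call. */
    record PaymentResult(boolean success, String transactionId) {}

    /** The single interface the application codes against. */
    interface PaymentGateway {
        PaymentResult purchase(long amountCents);
    }

    /** Adapter for a simulated gateway whose raw reply uses "status" and "transId". */
    static class GatewayA implements PaymentGateway {
        public PaymentResult purchase(long amountCents) {
            Map<String, String> raw = Map.of("status", "OK", "transId", "A-" + amountCents);
            return new PaymentResult("OK".equals(raw.get("status")), raw.get("transId"));
        }
    }

    /** Adapter for a simulated gateway whose raw reply uses "result" and "transnum". */
    static class GatewayB implements PaymentGateway {
        public PaymentResult purchase(long amountCents) {
            Map<String, String> raw = Map.of("result", "approved", "transnum", "B-" + amountCents);
            return new PaymentResult("approved".equals(raw.get("result")), raw.get("transnum"));
        }
    }
}
```

Switching gateways then means swapping `new GatewayDemo.GatewayA()` for `new GatewayDemo.GatewayB()`; the calling code, and the `transactionId()` it reads, stays the same.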

How to master GDPR compliance with enterprise architecture

With the complexity of modern IT services and the growing amount of data companies collect today, it’s not uncommon to lose visibility into everywhere information exists, and for data to drift into unexpected places, especially within large organizations. The first step toward full compliance is establishing a clear view of your data: where it lives, how your company processes it and how to access it quickly to make key changes. While this is a daunting and time-consuming task, enterprise architecture (EA) and application portfolio management (APM) tools can help you gain full visibility into your organization’s data landscape. Regardless of how sophisticated your existing EA practice is, taking an application-centered approach will create a strong foundation for success. First, identify all existing applications within the organization. Use surveys of application owners to uncover which applications involve personal data as defined by the GDPR, ensure that consent has been obtained from all data subjects, and identify all business capabilities that use the affected applications.
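Once the application-owner surveys are in, the triage described above is a simple filter over the inventory. The sketch assumes a hypothetical survey record with three answers per application (does it process personal data, has consent been confirmed, which business capabilities use it); real EA and APM tools have their own data models, which are not reproduced here.

```java
import java.util.List;
import java.util.Set;
import java.util.TreeSet;
import java.util.stream.Collectors;

/** Triage of a hypothetical application inventory built from owner surveys. */
class GdprInventory {
    /** One survey answer from an application owner (field names are assumptions). */
    record Application(String name, boolean processesPersonalData,
                       boolean consentConfirmed, List<String> businessCapabilities) {}

    /** Applications that handle personal data but have no confirmed consent yet. */
    static List<String> consentGaps(List<Application> apps) {
        return apps.stream()
                .filter(a -> a.processesPersonalData() && !a.consentConfirmed())
                .map(Application::name)
                .collect(Collectors.toList());
    }

    /** Business capabilities that depend on at least one personal-data application. */
    static Set<String> impactedCapabilities(List<Application> apps) {
        Set<String> out = new TreeSet<>(); // sorted for stable reporting
        for (Application a : apps) {
            if (a.processesPersonalData()) {
                out.addAll(a.businessCapabilities());
            }
        }
        return out;
    }
}
```

The two outputs map onto the last two survey steps: `consentGaps` lists where consent still has to be secured, and `impactedCapabilities` shows which parts of the business the GDPR work will touch.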

Quote for the day:

"A leader is always first in line during times of criticism and last in line during times of recognition." -- Orrin Woodward
