Agile Development: How to Pick the Most Valuable User Stories

A goal that's big enough to be worthwhile will usually have multiple actors involved -- these correspond to the roles of the standard user story template. In a retail environment, improving customer loyalty would involve not only the customer but also the parts of the company that the customer interacts with (shipping, ordering, marketing). This is the second level of the hierarchy: the actors involved in achieving the goal. For any actor, there are probably multiple "impacts" that need to be achieved. For example, if we want to improve customer loyalty, then we want customers to be more satisfied with us, to order more frequently from us and to buy more stuff when they do order from us. These impacts form the third level of an impact map and correspond to both the needs and reasons portions of the standard user story template. This means that an impact isn't a deliverable: "improving shipping" isn't an impact; instead, it is a deliverable that might contribute to achieving the "improved customer satisfaction" impact. A good impact also has a measure associated with it that allows the organization to tell when it's been achieved.
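The hierarchy described above (goal, then actors, then impacts, with deliverables hanging off impacts) can be sketched as a small data structure. This is a minimal illustration, not something taken from the article: the class names, the `unmeasured_impacts` helper, and the sample measure text are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Impact:
    """Third level: a change we want to see in an actor's behavior."""
    description: str
    measure: str = ""  # how we would tell the impact has been achieved
    deliverables: List[str] = field(default_factory=list)  # things that might contribute


@dataclass
class Actor:
    """Second level: someone involved in achieving the goal."""
    name: str
    impacts: List[Impact] = field(default_factory=list)


@dataclass
class ImpactMap:
    """First level: the goal itself."""
    goal: str
    actors: List[Actor] = field(default_factory=list)

    def unmeasured_impacts(self) -> List[Tuple[str, str]]:
        """Return (actor, impact) pairs that still lack a measure."""
        return [
            (actor.name, impact.description)
            for actor in self.actors
            for impact in actor.impacts
            if not impact.measure.strip()
        ]


# Example built from the retail scenario; the measure text is hypothetical.
loyalty_map = ImpactMap(
    goal="Improve customer loyalty",
    actors=[
        Actor(
            name="Customer",
            impacts=[
                Impact(
                    description="More satisfied with us",
                    measure="hypothetical: satisfaction survey score rises",
                    deliverables=["Improve shipping"],
                ),
                Impact(description="Orders more frequently"),
                Impact(description="Buys more per order"),
            ],
        )
    ],
)

missing = loyalty_map.unmeasured_impacts()
print(missing)
```

Keeping deliverables as a field of an impact, rather than as impacts themselves, mirrors the distinction drawn above: "Improve shipping" only matters to the extent that it moves a measured impact.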

The Data Breach Game: The 9 Worst IT Security Practices

Do you have one service account for all of your production servers? Or worse -- I saw this at a client once -- do you have linked servers between all of your database servers, and have those accounts logging in as the system admin? To take this a step further, in your personal life, do you reuse passwords across Web sites? It's not good to do that, even at sites that don't impact your finances. Those small sites are the most likely to get breached, and then the password you used at your favorite cat-grooming message board and your bank is now out there on the dark Web. ... As always, be careful what you click on, especially in e-mail. E-mail is one of our biggest productivity tools, but it's also the biggest security vulnerability in any organization. To help, use a managed e-mail service like Office 365 or commercial Gmail, along with multifactor authentication (MFA). Bonus points if you don't use text messages for MFA. Finally, make sure that your network is segmented in a way that your CEO opening an e-mail can't infect your domain controllers or database servers.

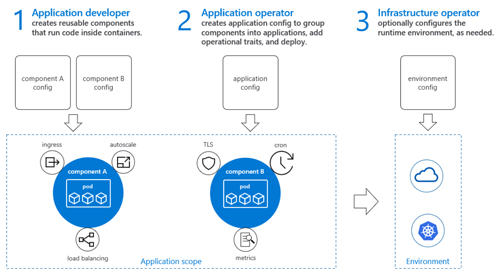

New mainframe uses: Blockchain, containerized apps

Forrester's research found mainframes continue to be considered a critical piece of infrastructure for the modern business – and not solely to run old technologies. Of course, traditional enterprise applications and workloads remain firmly on the mainframe, with 48% of ERP apps, 45% of finance and accounting apps, 44% of HR management apps, and 43% of ECM apps staying on mainframes. But that's not all. Among survey respondents, 25% said that mobile sites and applications were being put into the mainframe, and 27% said they're running new blockchain initiatives and containerized applications. Blockchain and containerized applications benefit from the integrated security and massive parallelization inherent in a mainframe, Forrester said in its report. "We believe this research challenges popular opinion that mainframe is for legacy," said Brian Klingbeil, executive vice president of technology and strategy at Ensono, in a statement. "Mainframe modernization is giving enterprises not only the ability to continue to run their legacy applications, but also allows them to embrace new technologies such as containerized microservices, blockchain and mobile applications."

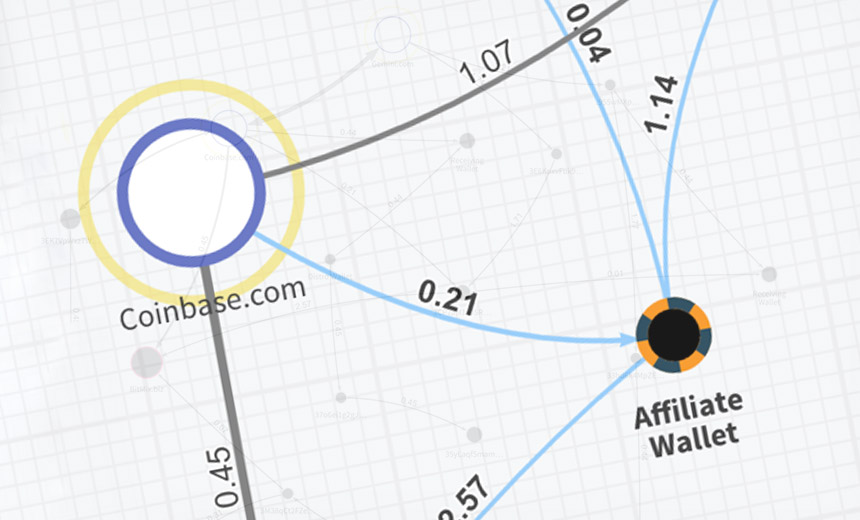

Sodinokibi Ransomware Gang Appears to Be Making a Killing

The group behind Sodinokibi appears to have had a head start on its success. While it's not clear what relationship the GandCrab and Sodinokibi gangs might have, researchers report seeing a clear code overlap in their malware. Security firm Secureworks says that based on multiple clues it believes that the threat groups behind GandCrab and Sodinokibi - aka Sodin and REvil - "overlap or are linked." In other words, one or more developers may not have retired with GandCrab, but helped set up a new operation. Like GandCrab, a customized version of Sodinokibi gets supplied to each individual affiliate, who infects systems with the malware and then shares a cut of the proceeds with organizers. Some affiliates appear to be more technically skilled than others. Coveware, a Connecticut-based ransomware incident response firm, says that at least one affiliate group specializes in hacking IT service providers as well as managed security service providers. Doing so enables the affiliate to distribute the ransomware to hundreds or thousands of endpoints managed by the service provider.

IaaS vs. PaaS options on AWS, Azure and Google Cloud Platform

Many early PaaS providers restricted which technologies they supported, and their software tools were compatible only with their own hosting platforms. It was difficult to migrate from one PaaS offering to another, or adapt a PaaS-based development pipeline to run on a generic IaaS instead. As businesses increasingly sought freedom from cloud lock-in, PaaS became more software-agnostic. Open source options, such as Docker containers orchestrated by Kubernetes, replaced some proprietary tooling. As a result, cloud computing vendors that originally specialized in IaaS added PaaS offerings, and increased compatibility with their respective IaaS offerings. For example, some versions of AWS CodePipeline, a continuous delivery service that forms part of a PaaS framework in the AWS cloud, can deploy applications to virtual machines or containers that run on AWS' IaaS.

Top cloud security controls you should be using

The misconfigured WAF was apparently permitted to list all the files in any AWS data buckets and read the contents of each file. The misconfiguration allowed the intruder to trick the firewall into relaying requests to a key back-end resource on AWS, according to the Krebs On Security blog. The resource “is responsible for handing out temporary information to a cloud server, including current credentials sent from a security service to access any resource in the cloud to which that server has access,” the blog explained. The breach impacted about 100 million US citizens, with about 140,000 Social Security numbers and 80,000 bank account numbers compromised, and eventually could cost Capital One up to $150 million. ... “The challenge exists not in the security of the cloud itself, but in the policies and technologies for security and control of the technology,” according to Gartner. “In nearly all cases, it is the user, not the cloud provider, who fails to manage the controls used to protect an organization’s data,” adding that “CIOs must change their line of questioning from ‘Is the cloud secure?’ to ‘Am I using the cloud securely?’”
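One way to act on "Am I using the cloud securely?" is to look for grants that are broader than a workload needs, such as permission to list and read every bucket. The sketch below scans an IAM-style JSON policy for `Allow` statements with wildcard actions or resources. It is an illustrative assumption, not a reconstruction of the configuration involved in the breach: the function name, the sample policy, and the bucket name are hypothetical, and a real audit would rely on the provider's own analysis tools.

```python
def _as_list(value):
    """IAM policy fields may be a single value or a list; normalize to a list."""
    return value if isinstance(value, list) else [value]


def find_broad_statements(policy):
    """Return the Sid of each Allow statement with a wildcard action or resource."""
    findings = []
    for stmt in _as_list(policy.get("Statement", [])):
        if stmt.get("Effect") != "Allow":
            continue
        actions = _as_list(stmt.get("Action", []))
        resources = _as_list(stmt.get("Resource", []))
        # "*" or "service:*" grants every action (of that service).
        wildcard_action = any(a == "*" or a.endswith(":*") for a in actions)
        # A bare "*" resource applies the grant to everything in the account.
        wildcard_resource = any(r == "*" for r in resources)
        if wildcard_action or wildcard_resource:
            findings.append(stmt.get("Sid", "<no Sid>"))
    return findings


# Hypothetical policy: the first statement can read any bucket,
# the second is scoped to a single (made-up) bucket.
sample_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "ReadAnyBucket",
            "Effect": "Allow",
            "Action": ["s3:ListBucket", "s3:GetObject"],
            "Resource": "*",
        },
        {
            "Sid": "ReadOneBucket",
            "Effect": "Allow",
            "Action": ["s3:ListBucket", "s3:GetObject"],
            "Resource": [
                "arn:aws:s3:::example-bucket",
                "arn:aws:s3:::example-bucket/*",
            ],
        },
    ],
}

broad = find_broad_statements(sample_policy)
print(broad)
```

The check is deliberately crude: it flags only obvious wildcards and ignores conditions, `NotAction`, and partial wildcards in ARNs, so a clean result here says little about whether a policy is actually least-privilege.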

Smart Cities are Made Smart by Planning and Strategy

Sleman Saliba underscored that the real “smart” piece of smart cities comes from the data and insights that come from making those connections. “The important stuff is not only on one premise but really interconnections between companies, between cities, between buildings, and leveraging this information that you get by using technologies across companies and factors,” he adds. Markus John provided a great real-world example of where this approach is making a difference, citing the work of city officials in Mannheim, Germany to take 180 hectares of prime land in the city (made available by the 2013 departure of the US Army) and use it to accelerate the city's development as a Smart City. He said that communities making this kind of change have a unique opportunity, both in the center of the city and, in particular, in its suburbs. “Communities are thinking about how can we manage it smartly - in a modern way?” he said. “It’s not just about delivering power from outside, but about how to make this suburb intelligent, in the way of buffering energy with batteries, maybe solar panels on the roof, optimizing energy consumption in the suburbs. ...”

Why compliance concerns are pushing more big companies to the cloud

In the current climate and looking into the future, we are seeing an acceleration of workloads from on-prem(ises) infrastructure, from on-prem applications into the cloud. That trend is clearly established. I don't think that's going to change; it's only accelerating. But one thing that might appear a little counterintuitive: on the adoption curve, the classical bell curve that runs from early adopters to mainstream before it starts flagging, we're seeing that it's gone past the early adopters and is more mainstream. And one of the interesting trends I'm seeing is that two issues are popping up. One is that there's an inexorable push to move the workload to the cloud, your data to the cloud, your applications to the cloud, for all the reasons why the cloud is becoming popular: nobody wants to manage hardware and maintenance, and there's capital efficiency and the CAPEX v. OPEX transformation. But along with that, there's a linear, I would say almost exponential, concern around data security, data privacy, and regulatory compliance.

An indication of this trend is that European startups are struggling to transform into $1 billion-valued unicorn companies. While a few exceptions share the spotlight, overall this upscaling happens at only half the rate seen in the US. In addition to lack of funding, this also comes down to the fact that Europe is made up of many distinct countries and that, despite its efforts to unite around a single market, fragmentation is still part of its identity. In this context, companies face the challenge of scaling up across a continent that has many different national regulations and structures – a task much more complex than in large homogenous markets like China or the US. And so, in the past 20 years, Europe's share of "superstar companies" – the top 10% of companies with more than $1 billion in annual revenue – has all but halved. But while the effects of fragmentation are evident, Hjartar explained, Europe could leverage its national differences to use them as a strength. "We have pockets of leaders spread out across the continent," he said. "We have 5.7 million software developers – that compares to 4.4 million in the US. We have all the building blocks to be successful, but now the biggest hurdle is to link them together with an ambitious vision."

The answer is, you can’t — at least, not all at once. It’s next to impossible to pull a giant organization, with hundreds of ingrained processes, divisions, and stakeholders, into a new digital and competitive landscape in one go. Many companies facing this challenge today invest deeply in research and development, thinking that knowledge alone will help right their course. But there’s no correlation between a company’s increased spending in research and development and a stellar performance. It’s not surprising, then, that you feel overwhelmed. You feel you’re on the precipice of profound change, yet you simply can’t move your company in a way that will achieve your goals or realize your vision. The secret to overcoming these challenges lies in the humble compass. Invented in the second century BC, its principles of directional guidance can be used today to create a road map to the future, one that breaks down choices that are actionable and impactful and delivers tangible results.

Quote for the day:

"A leader should demonstrate his thoughts and opinions through his actions, not through his words." -- Jack Weatherford