How Productivity And Surveillance Technology Can Create A Crisis For Businesses

“The use of productivity and surveillance technology can create crisis situations for companies and organizations due to the fact that they are not always clear on what they are getting into,” according to Jeff Colt, founder and CEO of Aquarium Fish City, an aquarium and aquatic website. “Companies oftentimes do not fully understand the ramifications of using these tools. For example, if a company decides to implement surveillance technology in the workplace, it needs to make sure that it is not violating any laws. Additionally, it needs to make sure that it is not infringing on any employee rights or privacy rights,” he said in a statement. “The use of productivity and surveillance technology can also create crisis situations because some people may not be comfortable with being monitored by their employers. This could lead some employees to feel like they are being treated unfairly as well as causing them to quit their jobs altogether,” Colt noted. ... “The use of these technologies can have the opposite intended effect when not managed properly,” said Natalia Morozova, managing partner at Cohen, Tucker & Ades, an immigration law firm.

The benefits of regenerative architecture and unlocking the data potential in buildings

Regenerative architecture is “architecture that focuses on conservation and performance through a focused reduction on the environmental impacts of a building.” It can allow buildings to generate their own electricity and sell excess energy back to the grid, creating a comprehensive, self-sustaining prosumer architecture. By producing their own energy with solar panels and wind turbines, these buildings significantly lower their carbon emissions and are more resilient in the face of extreme weather events. Some can even reverse environmental damage. But to fully leverage these opportunities, building owners and facility managers need smarter control of their energy. The right data, insights, and control help them make fast decisions and act on them. This is possible through the power of building digitalization. Buildings are responsible for 40% of the world’s CO2 emissions, second only to manufacturing. Yet 30% of the energy used in buildings is wasted, often on heating, cooling, and lighting empty spaces.
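To make the prosumer idea concrete, here is a minimal Python sketch of the hourly bookkeeping a building energy system might do. Energy generated on site first covers the building's own load. Any surplus is exported and could be sold back to the grid, and any shortfall is imported. The function name and the readings are hypothetical. Real installations would also account for batteries, losses, tariffs, and local metering rules.

```python
def energy_balance(generation_kwh, load_kwh):
    """Split hourly energy (kWh) into self-consumed, exported and imported totals.

    Illustrative sketch only: ignores batteries, conversion losses and tariff rules.
    """
    if len(generation_kwh) != len(load_kwh):
        raise ValueError("generation and load must cover the same hours")
    totals = {"self_consumed": 0.0, "exported": 0.0, "imported": 0.0}
    for gen, load in zip(generation_kwh, load_kwh):
        used_on_site = min(gen, load)
        totals["self_consumed"] += used_on_site
        totals["exported"] += gen - used_on_site   # surplus that could be sold back
        totals["imported"] += load - used_on_site  # shortfall drawn from the grid
    return totals


# Hypothetical readings for four hours (e.g. solar output ramping up in the morning).
generation = [0, 2, 5, 3]
load = [1, 2, 2, 4]
print(energy_balance(generation, load))
# {'self_consumed': 7.0, 'exported': 3.0, 'imported': 2.0}
```

In this example, on-site generation covers 7 of the 9 kWh the building consumes. A digitalized building could track a figure like this to decide when to shift demand or sell surplus.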

Quantum Physics Could Finally Explain Consciousness, Scientists Say

The existence of free will as an element of consciousness also seems to be a deeply nondeterministic concept. Recall that in mathematics, computer science, and physics, deterministic functions or systems involve no randomness in the future state of the system; in other words, a deterministic function will always yield the same results if you give it the same inputs. Meanwhile, a nondeterministic function or system can give you different results from one run to the next, even if you provide the same input values. “I think that’s why cognitive sciences are looking toward quantum mechanics. In quantum mechanics, there is room for chance,” Danielsson tells Popular Mechanics. “Consciousness is a phenomenon associated with free will and free will makes use of the freedom that quantum mechanics supposedly provides.” However, Jeffrey Barrett, chancellor’s professor of logic and philosophy of science at the University of California, Irvine, thinks the connection is somewhat arbitrary from the cognitive science side.
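The distinction between deterministic and nondeterministic functions is easy to see in code. In this small Python sketch, the function names are made up for illustration. The first function always returns the same value for the same input. The second adds random noise, so repeated calls with the same input can differ. Note that a software random number generator is itself deterministic once seeded, so it only imitates genuine chance.

```python
import random


def deterministic_square(x: int) -> int:
    # Same input, same output, every time.
    return x * x


def nondeterministic_square(x: int, rng: random.Random) -> int:
    # Adds noise drawn from {-1, 0, 1}, so repeated calls with the same x may differ.
    return x * x + rng.choice([-1, 0, 1])


rng = random.Random()  # unseeded: results vary from run to run
print([deterministic_square(3) for _ in range(5)])          # [9, 9, 9, 9, 9]
print([nondeterministic_square(3, rng) for _ in range(5)])  # some mix of 8, 9 and 10
```

Seeding the generator, for example with `random.Random(7)`, makes the "random" sequence fully reproducible. That illustrates why classical software randomness is not the kind of freedom the quoted researchers attribute to quantum mechanics.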

Eclypsium calls out Microsoft over bootloader security woes

The malicious shell activity involves visual elements that could potentially be detected by users on workstation monitors during the boot process; however, the vulnerabilities are especially dangerous for servers and industrial control systems that lack displays. The third vulnerability, CVE-2022-34302, is even harder to detect, as exploitation would remain virtually invisible to system owners. The researchers discovered that the New Horizon DataSys bootloader contains a small file that acts as a built-in bypass for Secure Boot: the 73 KB file disables the Secure Boot check without turning the protocol off completely, and it can also execute additional bypasses for security handlers. The discovery of the New Horizon DataSys built-in Secure Boot bypass was definitely a "holy crap moment," Shkatov told SearchSecurity. The researchers said admin access is required for full exploitation, but during the presentation they demonstrated an exploit that used a phishing email and a malicious Word document to elevate their privileges to admin.
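It helps to model what the Secure Boot check does to see why a trusted bootloader that skips it is so damaging. The Python sketch below is a deliberately simplified toy. It treats the allowed database (db) and the forbidden database (dbx) as plain sets of SHA-256 hashes, whereas real UEFI firmware also validates signatures against certificates in db. The function names and image contents are hypothetical. The point is that the policy is only as strong as every component it trusts. If a trusted loader stops enforcing the check for whatever it loads next, the remedy is to revoke that loader by adding it to dbx.

```python
import hashlib


def sha256_hex(image: bytes) -> str:
    return hashlib.sha256(image).hexdigest()


def secure_boot_allows(image: bytes, db: set, dbx: set) -> bool:
    """Toy Secure Boot policy over SHA-256 hashes (real firmware also checks signatures)."""
    digest = sha256_hex(image)
    if digest in dbx:      # revoked entries are always refused, even if also listed in db
        return False
    return digest in db    # otherwise only explicitly trusted images may run


# Hypothetical image contents.
trusted_loader = b"vendor bootloader v1"
unknown_payload = b"unsigned payload"

db = {sha256_hex(trusted_loader)}
dbx = set()
print(secure_boot_allows(trusted_loader, db, dbx))   # True
print(secure_boot_allows(unknown_payload, db, dbx))  # False

# If the trusted loader turns out to skip this check for whatever it loads next,
# the policy is effectively bypassed; revoking the loader closes that hole.
dbx.add(sha256_hex(trusted_loader))
print(secure_boot_allows(trusted_loader, db, dbx))   # False
```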

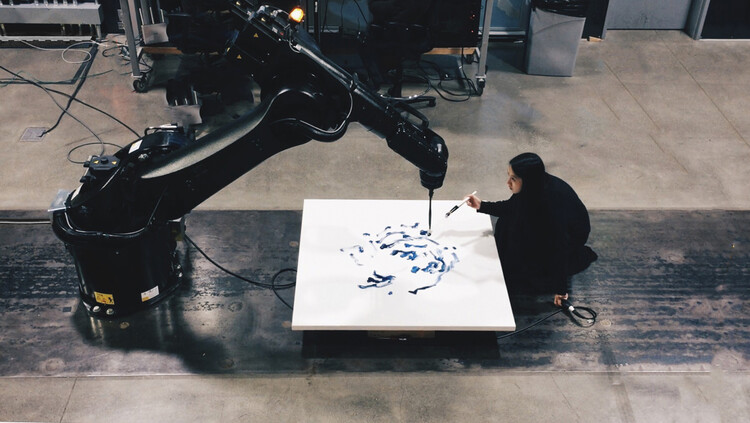

Things You Should Know About Artificial Intelligence and Design

Nearly anyone who lives in the modern world produces data, often on the order of terabytes per day. We text our friends, stream videos, use fitness apps, ask Siri about the weather while we look out the window, walk by CCTV cameras, and the list goes on. Most of these data are unstructured, i.e., not organized in any clear order. Machine learning provides a way for computers to glean meaning from this lack of structure. As Armstrong puts it, “even now as you read, computers sift and categorize your data trails—both unstructured and structured — plunging deeper into who you are and what makes you tick.” How does it do this? The short answer is algorithms, statistical analysis, and prediction. Not sure what any of those words mean? ... As a researcher dedicated to demystifying emerging technology for landscape architects, I believe it is vital we get designers of all demographics and digital abilities to a shared understanding of what AI is so we can all better facilitate its continued permeation into practice. Big Data. Big Design. does this in spades.
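As a rough illustration of "algorithms, statistical analysis, and prediction", this Python sketch takes a few unstructured text messages and turns each into a structured table of word counts, known as a bag-of-words representation. It then uses cosine similarity to pick the message most similar to a new one. The messages and function names are hypothetical. This is a toy technique, not how any particular product analyzes data trails.

```python
import math
import re
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Turn free text into a structured word-count table (lowercased, letters only)."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Similarity of two word-count vectors: 1.0 = same proportions, 0.0 = no shared words."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# Hypothetical "data trail" of short messages.
messages = [
    "Running late, grabbing coffee first",
    "Great run this morning, 5 km in the park",
    "Will it rain in the park this afternoon?",
]
query = bag_of_words("Going for a run in the park")
best = max(messages, key=lambda m: cosine_similarity(query, bag_of_words(m)))
print(best)  # Great run this morning, 5 km in the park
```

Notice that "running" and "run" count as different words here. Production systems typically add stemming or learned word embeddings so that related words are recognized as similar.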

The effect of digital transformation on the CIO job

The CIO has always been a super-important role. I'd liken it [in the past] to the role of a flight engineer. You can't take off if the flight engineer is not on board; he or she serves a super-important purpose – it's mission critical, it's a lights-on operation. It's about delivering a really important capability: to keep the engine, the plane running, in this case, the enterprise running. We're seeing a big change happen because with digital transformation -- and using technology to deliver a new business value proposition -- the world is now starting to center around digital. And the role of the CIO is changing because he or she is now more and more becoming the pilot or the co-pilot, helping colleagues, their stakeholders, and the rest of the executive committee to really reimagine the business value proposition on the back of new technology. And so that's one big change that we're going through, because the [CIO] seat at the table, the role of the individual, is completely changing. I think another thing that's happening is that tech is no longer the long pole in the tent. And what I mean by that is when you do digital transformation, it isn't just the tech, it's the data.

How Can Clinical Trials Benefit From Natural Language Processing (NLP)?

NLP can help identify patterns in participant responses that may indicate whether a treatment is effective. This information can improve the accuracy of trial results and help researchers make better decisions about which treatments to pursue. In addition, NLP can help researchers understand why certain participants respond well or poorly to a treatment. This knowledge can help develop more effective treatments in the future. Several different NLP tools can be used in clinical trials. The most commonly used tools include machine learning algorithms, text mining techniques, and Word2Vec models. Each has advantages and disadvantages, so it's crucial to pick the appropriate tool for the job. Fortunately, many software platforms provide pre-built libraries that make it easy to use NLP in research projects. NLP has significantly impacted clinical trials by helping researchers identify patterns in participant feedback, which has allowed for more informed decisions about modifying or improving treatments.
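Here is a minimal example of the text-mining approach mentioned above, written in Python. It scans hypothetical free-text feedback from two trial arms for a small, made-up list of symptom terms. It then reports the share of responses in each arm that mention each term. The lexicon, data, and function name are illustrative assumptions. Real studies would rely on curated clinical vocabularies and validated models rather than exact keyword matches.

```python
import re
from collections import Counter

SYMPTOM_TERMS = {"headache", "nausea", "fatigue", "dizziness"}  # hypothetical lexicon


def symptom_counts(responses: list[str]) -> Counter:
    """Count how many responses mention each symptom term (at most once per response)."""
    counts = Counter()
    for text in responses:
        words = set(re.findall(r"[a-z]+", text.lower()))
        counts.update(words & SYMPTOM_TERMS)
    return counts


# Hypothetical participant feedback, grouped by trial arm.
feedback = {
    "treatment": [
        "Mild headache on day two, otherwise fine",
        "Felt some nausea after the second dose",
        "No issues, sleeping better",
    ],
    "placebo": [
        "Headache and fatigue most mornings",
        "Fatigue again this week",
        "Nothing to report",
    ],
}

for arm, responses in feedback.items():
    counts = symptom_counts(responses)
    rates = {term: counts[term] / len(responses) for term in sorted(SYMPTOM_TERMS)}
    print(arm, rates)
```

Keyword matching is transparent but brittle. It would count "no headache" as a headache mention and miss synonyms, which is where machine learning classifiers or Word2Vec-style embeddings can help.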

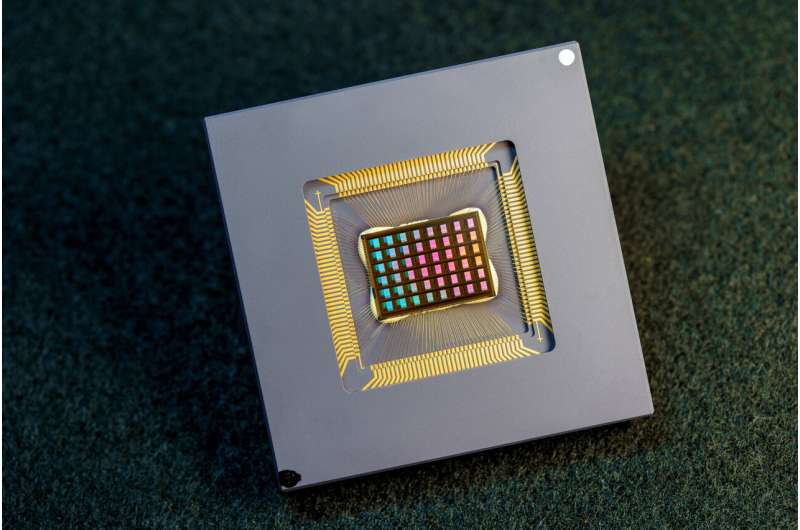

New neuromorphic chip for AI on the edge, at a small fraction of the energy and size of today's computing platforms

The key to NeuRRAM's energy efficiency is an innovative method to sense output in memory. Conventional approaches use voltage as input and measure current as the result, but this requires more complex and more power-hungry circuits. In NeuRRAM, the team engineered a neuron circuit that senses voltage and performs analog-to-digital conversion in an energy-efficient manner. This voltage-mode sensing can activate all the rows and all the columns of an RRAM array in a single computing cycle, allowing higher parallelism. In the NeuRRAM architecture, CMOS neuron circuits are physically interleaved with RRAM weights. This differs from conventional designs, where CMOS circuits are typically on the periphery of the RRAM weights. The neuron's connections with the RRAM array can be configured to serve as either input or output of the neuron. This allows neural network inference in various data flow directions without incurring overheads in area or power consumption, which in turn makes the architecture easier to reconfigure.
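An RRAM array can compute neural network layers in memory because of two circuit laws. Each cell passes a current equal to its conductance times the applied voltage (Ohm's law), and the currents on a shared wire add up (Kirchhoff's current law). Together, these let the array perform a matrix-vector multiplication in one step. The Python sketch below is an idealized model with made-up conductance values. It ignores device variation, wire resistance, and the voltage-mode sensing and analog-to-digital conversion that NeuRRAM's neuron circuits actually perform. It does show why driving either the rows or the columns lets the same stored weights serve data flowing in either direction.

```python
# Idealized crossbar: G[i][j] is the conductance (stored weight) at row i, column j.
# Hypothetical values; real devices have limited, noisy conductance ranges.
G = [
    [1.0, 0.5],
    [0.0, 2.0],
    [0.5, 1.0],
]


def forward(G, v_rows):
    """Drive the rows with voltages; column j collects I_j = sum_i G[i][j] * v_rows[i]."""
    n_rows, n_cols = len(G), len(G[0])
    return [sum(G[i][j] * v_rows[i] for i in range(n_rows)) for j in range(n_cols)]


def backward(G, v_cols):
    """Drive the columns instead; row i collects I_i = sum_j G[i][j] * v_cols[j]."""
    return [sum(g * v for g, v in zip(row, v_cols)) for row in G]


print(forward(G, [1.0, 1.0, 2.0]))  # [2.0, 4.5]
print(backward(G, [2.0, 1.0]))      # [2.5, 2.0, 2.0]
```

In matrix terms, `forward` computes G^T v and `backward` computes G v with the same G, so no second copy of the weights is needed to run data through the array in the other direction.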

Monoliths to Microservices: 4 Modernization Best Practices

Surveys have shown that manually analyzing a monolith using sticky notes on whiteboards takes too long, costs too much and rarely ends in success. Which architect or developer on your team has the time and ability to stop what they’re doing to review millions of lines of code and tens of thousands of classes by hand? Large monolithic applications need an automated, data-driven way to identify potential service boundaries. ... When everything was in the monolith, your visibility was somewhat limited. If you’re able to expose the suggested service boundaries, you can begin to make decisions and test design concepts, for example, identifying overlapping functionality in multiple services. ... We all know that naming things is hard. When dealing with monolithic services, we can really only use the class names to figure out what is going on. With this information alone, it’s difficult to accurately identify which classes and functionality may belong to a particular domain. ... What qualities suggest that functionality previously contained in a monolith deserves to be a microservice?
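As a deliberately simple illustration of a data-driven way to suggest service boundaries, this Python sketch starts from hypothetical call counts between classes, such as might be collected by tracing the running monolith. It drops rarely used links and treats each remaining connected group of classes as a candidate service. The class names, counts, and threshold are assumptions. This connected-components heuristic is a stand-in, not the method used by any particular modernization tool.

```python
# Hypothetical call counts between classes, e.g. collected by tracing the running monolith.
CALLS = {
    ("OrderController", "OrderService"): 120,
    ("OrderService", "OrderRepository"): 115,
    ("OrderService", "PricingEngine"): 3,
    ("InvoiceService", "InvoiceRepository"): 80,
    ("InvoiceService", "PricingEngine"): 60,
    ("UserController", "UserService"): 200,
}


def candidate_services(calls, min_calls=10):
    """Group classes connected by frequent calls; each group is a candidate service.

    Heuristic: ignore edges with fewer than min_calls calls, then take connected components.
    """
    graph = {}
    for (caller, callee), count in calls.items():
        for cls in (caller, callee):
            graph.setdefault(cls, set())  # keep classes whose only links are weak
        if count >= min_calls:
            graph[caller].add(callee)
            graph[callee].add(caller)

    seen, groups = set(), []
    for start in sorted(graph):
        if start in seen:
            continue
        group, stack = [], [start]
        seen.add(start)
        while stack:  # iterative depth-first search over one component
            node = stack.pop()
            group.append(node)
            for neighbour in graph[node] - seen:
                seen.add(neighbour)
                stack.append(neighbour)
        groups.append(sorted(group))
    return groups


for group in candidate_services(CALLS):
    print(group)
```

With the default threshold, PricingEngine lands with the invoicing classes because InvoiceService calls it often, while the rare call from OrderService becomes a cross-service call. Whether that boundary is right is exactly the kind of design decision that exposing suggested boundaries lets a team test, instead of guessing from class names alone.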

PC store told it can't claim full cyber-crime insurance after social-engineering attack

According to Chief District Judge Patrick Schiltz, who handed down the order, this case treads somewhat new legal ground. In the opinion, Schiltz noted that both SJ's lawsuit and Travelers' dismissal motion cite only three other cases, all from different jurisdictions, that "analyze the concept of direct causation in the context of computer or social-engineering fraud." All of those cases had one major difference in common, the court pointed out: none of them involved insurance policies that cover both computer and social-engineering fraud, or that make clear that the two types of fraud are different, mutually exclusive categories. This case, therefore, is less a litmus test for future legal disagreements over social-engineering insurance payouts and more an exercise in the close reading of contracts. "[Travelers'] Policy clearly anticipates – and clearly addresses – precisely the situation that gave rise to SJ Computers' loss, and the Policy bends over backwards to make clear that this situation involves social-engineering fraud, not computer fraud," Schiltz said.

Quote for the day:

"People only bring up your past when they are intimidated by your present." -- Joubert Botha
