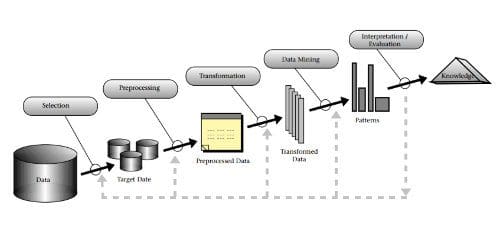

For our purposes, however, we will treat data preparation as a regimen separate from modeling. Since Python is our ecosystem, much of what we cover will involve Pandas. For the uninitiated, Pandas is a data manipulation and analysis library. It is one of the cornerstones of the Python scientific programming stack and a great fit for many data preparation tasks. Data preparation can be seen in the CRISP-DM model shown above (though it can reasonably be argued that "data understanding" falls within our definition as well). We can also equate data preparation with the first three major steps of the KDD Process: selection, preprocessing, and transformation. These can be broken down more finely, but at a macro level, these three steps encompass what data wrangling is.
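
To make the three KDD steps concrete, here is a minimal Pandas sketch of selection, preprocessing, and transformation. It assumes a small, made-up customer table standing in for a real source such as a CSV file; the column names, the median fill, and the z-score feature are illustrative choices, not a prescribed recipe.

```python
import pandas as pd

# Hypothetical raw data standing in for a real source (e.g. a CSV file).
raw = pd.DataFrame({
    "customer_id": [1, 2, 3, 4],
    "age": [34, None, 51, 29],
    "income": [52000, 61000, None, 43000],
    "notes": ["a", "b", "c", "d"],
})

# 1. Selection: keep only the columns relevant to the analysis.
selected = raw[["customer_id", "age", "income"]]

# 2. Preprocessing: fill missing values with each column's median.
preprocessed = selected.fillna({
    "age": selected["age"].median(),
    "income": selected["income"].median(),
})

# 3. Transformation: derive a standardized (z-score) income feature.
transformed = preprocessed.assign(
    income_z=(preprocessed["income"] - preprocessed["income"].mean())
    / preprocessed["income"].std()
)

print(transformed)
```

Each step returns a new DataFrame rather than modifying the previous one in place, which keeps every intermediate stage available for inspection while wrangling.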

The Difference Between Entrepreneur and Executive

Entrepreneurs must understand that their businesses should be able to run without them. Systems and structure must be executed by management, and each member of an enterprise should know his or her role. When venture capitalists and bankers invest in a new start-up, business structure is the first thing they look for. The founder’s passion may get them to the table, but what they are really looking for is sound day-to-day business management. Look at Ray Kroc, founder of McDonald’s. ... Executives, on the other hand, should take a page from the entrepreneur by looking beyond the numbers and going with their gut. When Mazda introduced the Miata, none of the marketing data pointed to a little convertible sports car; it was the last thing on American consumers’ minds. But Mazda did the unthinkable: it put passion back into driving with a fun and affordable roadster that brought back the days of British MG Midgets and weekends in the country.

Great Data Scientists Don’t Just Think Outside the Box, They Redefine the Box

The data scientists didn’t wait until someone developed a better Machine Learning algorithm. Instead, they looked at the wide variety of Machine Learning and Deep Learning tools and algorithms available to them and applied them to a different but related use case. If we can predict the health of a device and the problems that could occur with it, then we can also help customers prevent those problems, significantly improving their support experience and positively impacting their environment. ... One of a data scientist’s most important characteristics is that they refuse to take “it can’t be done” as an answer. They are willing to try different variables and metrics, and different types of advanced analytic algorithms, to see if there is another way to predict performance. This graphic measures the activity between different IT systems. As with data science in general, this image shows there’s no lack of variables to consider when building your Machine Learning and Deep Learning models!

This NYC Startup Supercharges Advisors With AI and NLP

By focusing on data that is often overlooked or misclassified, such as tickers, instrument names, strategies, investment goals and many other financial entity types, we’re able to provide “4K NLP for financial data” as an input into our engine. The company’s robust platform includes three new configurable APIs. The first, Personalized Insights, curates personalized stories of “what to say.” The second, the Client Prioritization API, helps answer the question of “who to talk to” by providing a prioritized list of clients to call, along with the reasons for outreach. The company’s third API, Expert Conversation, is a natural language interface with data aggregation, curation and linking capabilities. It is focused on question answering for market, ETF, mutual fund and equities research. It’s a smarter, faster way to get answers to questions that are buried in research reports or sit behind many screens.

Hackers create 'ghost' traffic jam to confound smart traffic systems

The attack manipulates the mechanism I-SIG uses to manage queues by spoofing the attack vehicle's predicted arrival time and the requested phase of the traffic lights. (I-SIG lets vehicles request a green light for their arrival and decides whether to grant it based on a queue it builds from all incoming requests.) “The attacker can change the speed and location in its BSM [Basic Safety Message – El Reg] message to set the arrival time and the requested phase of her choice and thus increase the corresponding arrival table element by one”, the paper said. The attack, they claimed, has a 94 per cent success rate and would, on average, increase delays by 38.2 per cent. The best defence against these and other attacks, the researchers say, is a combination of more robust algorithms, better performance in the roadside units that give the system its real-time feedback, and better validation of vehicle-originated messages.
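
The quoted mechanism, where a forged BSM "increase[s] the corresponding arrival table element by one", can be illustrated with a toy model. This sketch assumes an arrival table indexed by predicted arrival time (whole seconds) and requested phase, with arrival time estimated as distance divided by speed; the function names and numbers are hypothetical, and this is not I-SIG's actual implementation.

```python
from collections import defaultdict


def predicted_arrival_time(distance_m: float, speed_mps: float) -> int:
    """Estimate whole seconds until a vehicle reaches the stop bar (distance / speed)."""
    return round(distance_m / speed_mps)


def build_arrival_table(bsms):
    """Hypothetical arrival table: table[arrival_time][phase] counts vehicles.

    Each BSM is a (distance_m, speed_mps, requested_phase) tuple and adds one
    vehicle to the cell for its predicted arrival time and requested phase.
    """
    table = defaultdict(lambda: defaultdict(int))
    for distance_m, speed_mps, phase in bsms:
        table[predicted_arrival_time(distance_m, speed_mps)][phase] += 1
    return table


# Two honest vehicles approaching on phase 2 (arriving in 10 s and 15 s).
honest = [(100.0, 10.0, 2), (150.0, 10.0, 2)]

# The attacker forges speed and location so its BSM lands in a cell of its
# choosing: arrival in 30 s (300 m / 10 m/s), requesting phase 4.
target_time, target_phase = 30, 4
attack = [(300.0, 10.0, target_phase)]

table = build_arrival_table(honest + attack)
print(table[target_time][target_phase])  # 1
```

Because the table only counts what vehicles report, any cell can be inflated by a sender willing to lie about speed and location, which is why the researchers point to better validation of vehicle-originated messages.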

This crazy invention by an Indian Banker will leave you speechless

The most interesting fact about “Bankerpedia” is that the portal was coded in 6 days on a mobile phone during the daily commute from Elgin Mills, Civil Lines, Kanpur to Ghatampur (he lived in Kanpur and was posted in Ghatampur, approximately 100 km of daily travel to and fro). At the time of coding, he didn’t know that it would one day be used by thousands of learners. His idea of easing the effort of collecting notes, sorting out what to study and what to leave, and sharing those notes with colleagues appearing for the same exam turned out to be a great one. ... he crowd-sourced it with several bankers, and to update and maintain the study material, he created artificial intelligence bots to take care of these tasks. Apart from this, he has made the security of users and their data a top priority by using SSL. The use of SSL ensures that all data transmitted between the web server and the browser remains encrypted.
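
The claim that SSL keeps traffic between server and browser encrypted can be made concrete from the client side. This sketch uses Python's standard `ssl` module; SSL has since been superseded by TLS, which is what the module negotiates in practice. The minimum-version policy and the `head_request_over_tls` helper are illustrative assumptions, not details of Bankerpedia's setup.

```python
import socket
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a client-side TLS context that verifies the server's certificate."""
    # create_default_context() loads the system's trusted CA certificates and
    # turns on certificate verification and hostname checking.
    ctx = ssl.create_default_context()
    # Assumed policy: refuse protocol versions older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


def head_request_over_tls(host: str, port: int = 443) -> bytes:
    """Hypothetical helper: send a minimal HTTP HEAD request over an encrypted connection."""
    ctx = make_client_context()
    with socket.create_connection((host, port), timeout=10) as raw_sock:
        # wrap_socket() performs the TLS handshake; data sent on tls_sock
        # afterwards is encrypted on the wire.
        with ctx.wrap_socket(raw_sock, server_hostname=host) as tls_sock:
            request = f"HEAD / HTTP/1.1\r\nHost: {host}\r\nConnection: close\r\n\r\n"
            tls_sock.sendall(request.encode("ascii"))
            # First chunk of the decrypted response (status line and headers).
            return tls_sock.recv(4096)
```

Calling `head_request_over_tls("example.com")` needs network access; the host name is only a placeholder. The important design choice is leaving verification on: encryption without certificate checks would not stop an attacker from impersonating the server.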

How to replace and upgrade a MacBook Pro hard disk

Upon receiving the SSD, I moved the screws from the side of the old disk to the same locations on the new drive and then installed the drive in the MacBook Pro. I also reconnected the battery to the motherboard and replaced the hard drive retention piece, the bottom cover, and all the screws. I connected the thumb drive to the MacBook Pro, booted the laptop while holding the Option key, and chose to boot from the thumb drive labeled Install OS X El Capitan. I selected the SSD as the disk on which to install the operating system, and then I marveled at how easy the process was. Then the installation failed. I was greeted with a nonsensical error that read "This copy of the Install OS X El Capitan application can't be verified. It may have been corrupted or tampered with during downloading." The file was fine; it wasn't corrupt, nor had it been tampered with.

A multi-sided approach to financing the smart city

Building successful Smart City initiatives requires collaboration between engaged individuals, city governments and a growing range of private commercial organisations. Yet there is a practical difficulty in all of this: finding a way to pay for Smart Cities, which is not an easy subject at a time when public finances are under enormous pressure in countries all over the world. Most city and national governments are unable to fund new initiatives of this kind from taxpayer income alone, which leads them to seek partnerships and alliances with commercial bodies and technology specialists to design and deliver new services and options. This is where problems start to arise. Technology companies are interested in partnering with city governments for their own reasons. They want to test their ideas, gain proofs of concept, and access useful development data and other research resources for building their own businesses.

How to build a data-first culture for a digital transformation

There’s not just one metric you need to pay attention to, but it’s not hundreds either. Organizations can get overly excited about data, and then, all of a sudden, they’re overwhelmed. So we decided to focus on data that helped us understand customer behavior and eliminate the unknowns. Look-alikes (an algorithmically assembled group of people who resemble, in some way, an existing group) based on existing segments of customers were most valuable, and over time we layered in additional elements, such as demographics, behavior, age, current carrier, and location. We then overlaid those insights with data from our digital properties: website, mobile app, stores, and call centers. And we started to better understand our customers’ journeys across the web, as they called us, tweeted about us, and so on. We’re now starting to teach our “bots” to learn more about contextually relevant interactions with the customer. For example, if a customer visits one of our stores, then comes online and looks at various sets of pages or has a pending order, the bot learns how to respond to that specific customer profile.
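
The look-alike idea, people who resemble an existing group, can be sketched as a similarity search. This example assumes each customer is already encoded as a scaled numeric feature vector (the features and IDs below are made up) and ranks candidates by cosine similarity to the centroid of a seed segment. It is one simple way to build look-alikes, not the method the interviewee's company used.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def centroid(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def look_alikes(seed, candidates, k):
    """Return the IDs of the k candidates most similar to the seed segment's centroid."""
    center = centroid(list(seed.values()))
    ranked = sorted(
        candidates,
        key=lambda cid: cosine_similarity(candidates[cid], center),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical customers with already-scaled features,
# e.g. [age, monthly data usage, store visits].
seed = {"s1": [0.2, 0.9, 0.1], "s2": [0.3, 0.8, 0.2]}
candidates = {
    "c1": [0.25, 0.85, 0.15],
    "c2": [0.9, 0.1, 0.8],
    "c3": [0.3, 0.7, 0.3],
}

print(look_alikes(seed, candidates, k=2))  # ['c1', 'c3']
```

Layering in more elements, as the passage describes, amounts to appending more features to each vector; those features would need consistent scaling so that no single one dominates the similarity score.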

What Is The Difference Between Artificial Intelligence (AI) And Machine Learning?

As it turned out, one of the very best application areas for machine learning for a long time was computer vision, though it still required a great deal of hand-coding to get the job done. People would go in and write hand-coded classifiers like edge detection filters so the program could identify where an object started and stopped; shape detection to determine whether it had eight sides; and a classifier to recognize the letters "S-T-O-P." From all those hand-coded classifiers they would develop algorithms to make sense of the image and "learn" to determine whether it was a stop sign. ... Back at that summer of '56 conference, the dream of those AI pioneers was to build complex machines, enabled by emerging computers, that had the same characteristics as human intelligence. This is the concept we think of as "General AI": astounding machines that have all our senses (maybe even more), all our reason, and think just as we do. You've seen these machines endlessly in movies as friend (C-3PO) and foe (The Terminator).
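
To make "hand-coded classifier" concrete: an edge detection filter is a small, fixed grid of weights that a person designs rather than learns. This sketch applies the standard Sobel kernel for vertical edges to a tiny made-up grayscale image and then thresholds the response by hand. The threshold and the `has_vertical_edge` helper are illustrative assumptions, not part of any particular stop-sign system.

```python
# Hand-designed Sobel kernel: responds where brightness changes from left to
# right, i.e. at vertical edges.
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]


def apply_kernel(image, kernel):
    """Slide a 3x3 kernel over the image without padding and return the responses.

    (Strictly this is cross-correlation, as is common in image processing.)
    """
    h, w = len(image), len(image[0])
    out = []
    for r in range(1, h - 1):
        row = []
        for c in range(1, w - 1):
            total = 0
            for kr in range(3):
                for kc in range(3):
                    total += kernel[kr][kc] * image[r + kr - 1][c + kc - 1]
            row.append(total)
        out.append(row)
    return out


def has_vertical_edge(image, threshold=10):
    """Hypothetical hand-coded rule: report an edge if any response exceeds the threshold."""
    return any(abs(v) > threshold for row in apply_kernel(image, SOBEL_X) for v in row)


# Made-up 5x6 image: dark left half (0), bright right half (9).
image = [[0, 0, 0, 9, 9, 9] for _ in range(5)]
edges = apply_kernel(image, SOBEL_X)
print(edges)  # [[0, 36, 36, 0], [0, 36, 36, 0], [0, 36, 36, 0]]
print(has_vertical_edge(image))  # True
```

Every number here (the kernel weights and the threshold) was chosen by a person, which is exactly the hand-coding burden the passage describes; deep learning instead learns such filters from labeled examples.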

Quote for the day:

"Be determined to handle any challenge in a way that will make you grow." -- Les Brown
