Five Pillars of Data Governance: Initiative Sponsorship

Data governance (DG) is an ongoing initiative that requires active engagement from executives and business leaders. Unfortunately, the 2018 State of Data Governance Report finds lack of executive support to be the most common roadblock to implementing DG. This is historical baggage. Traditional DG has been an isolated program housed within IT, and thus constrained by that department’s budget and resources. More significantly, managing DG solely within IT prevented those in the organization with the most knowledge of and investment in the data from participating in the process. This silo created problems ranging from a lack of context in data cataloging to poor data quality and a subpar understanding of the data’s associated risks. Data Governance 2.0 addresses these issues by opening data governance to the whole organization. Its collaborative approach ensures that those with the most significant stake in an organization’s data are intrinsically involved in discovering, understanding, governing and socializing it to produce the desired outcomes.

How Serverless Computing Reshapes Security

First and foremost, serverless computing, as its name implies, lowers the risks involved with managing servers. While the servers clearly still exist, they are no longer managed by the application owner, and are instead taken care of by cloud platform operators such as Google, Microsoft, or Amazon. Efficient and secure handling of servers is a core competency for these platforms, and so it's far more likely they will handle it well. The biggest concern you can eliminate is addressing vulnerable server dependencies. Patching your servers regularly is easy enough on a single server but quite hard to achieve at scale. As an industry, we are notoriously bad at tracking vulnerable operating system binaries, leading to one breach after another. Stats from Gartner predict this trend will continue into and past 2020. With a serverless approach, patching servers is the platform's responsibility. Beyond patching, serverless reduces the risk of a denial-of-service attack

Google parent's free DIY VPN: Alphabet's Outline keeps out web snoops

Outline promises to solve the double-edged sword of VPN services. There are loads of free VPN services, which in theory can protect sensitive information when using a public Wi-Fi network. However, as ZDNet's David Gewirtz has pointed out, you probably shouldn't entrust these with digging an encrypted tunnel between your computer and another machine. An alternative option is to pay around $120 a year for a VPN service, but again this requires trusting the provider and weighing up the jurisdiction it operates in. Outline offers journalists a cheaper way to set up their own VPN server on any cloud provider or on their own hardware, cutting out the need to trust a third party. "Outline gives you control over your privacy by letting you operate your own server. And Outline never logs your web traffic," Jigsaw product manager Santiago Andrigo wrote. "We made it possible to set up Outline on any cloud provider or on your own infrastructure so you can fully own and operate your own VPN and don't have to trust a VPN operator with your data."

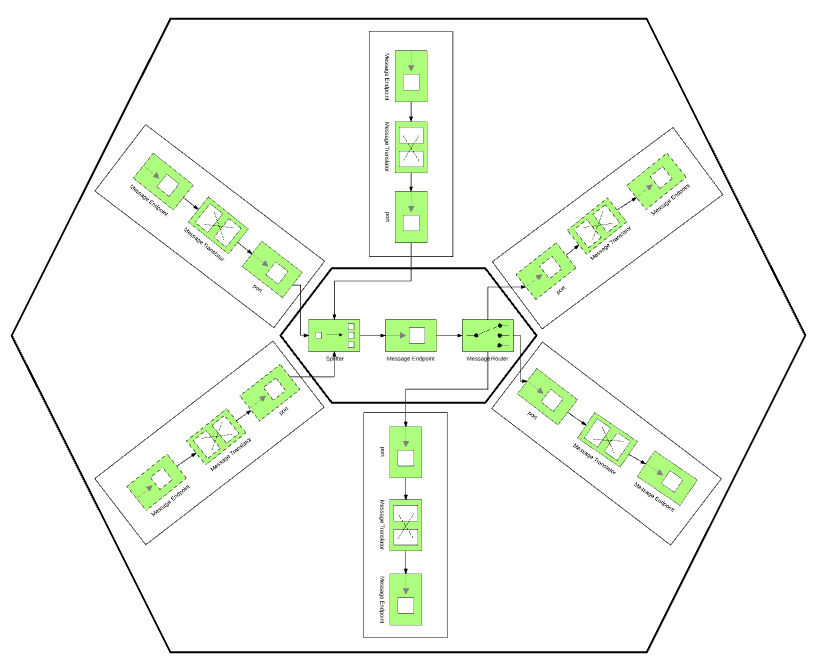

Hexagonal Architecture as a Natural Fit for Apache Camel

Let's look at the two extremes: a layered architecture manages the complexity of a large application by decomposing it and structuring it into groups of subtasks of particular abstraction levels called layers. Each layer has a specific role and responsibility within the application and changes made in one layer of the architecture usually don't affect the components of other layers. In practice, this architecture splits an application into horizontal layers, and it is a very common approach for large monolithic web or ESB applications of the JEE world. On the other extreme is Camel, with its expressive DSL and route/flow abstractions. Based on the Pipes and Filters pattern, Camel would divide a large processing task into a sequence of smaller, independent processing steps (Filters) connected by a channel (Pipes). There is no notion of layers that depend on each other, and, in fact, because of its powerful DSL, a simple integration can be done in a few lines and a single layer only. In practice, Camel routes split your application by use case and business flow into vertical flows rather than horizontal layers.
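
The Pipes and Filters idea described above can be illustrated with a minimal sketch. This is plain Python, not Camel's DSL, and the filter names (`strip_whitespace`, `to_upper`, `add_header`) and the `pipeline` helper are hypothetical, chosen only for illustration. Each filter is a small independent step, and the composition plays the role of the pipe that connects them.

```python
from functools import reduce
from typing import Callable

# A filter is any function that takes a message and returns a new message.
Filter = Callable[[str], str]


def pipeline(*filters: Filter) -> Filter:
    """Chain filters left to right; the chaining acts as the pipe."""
    def run(message: str) -> str:
        return reduce(lambda msg, step: step(msg), filters, message)
    return run


# Hypothetical filters: each one does a single, independent job.
def strip_whitespace(message: str) -> str:
    return message.strip()


def to_upper(message: str) -> str:
    return message.upper()


def add_header(message: str) -> str:
    return f"ORDER:{message}"


# One "route" per use case: a vertical flow rather than horizontal layers.
route = pipeline(strip_whitespace, to_upper, add_header)
```

Calling `route("  widget ")` passes the message through the three steps in order. Because no filter knows about its neighbours, steps can be reordered or swapped without touching the others, which is the property the excerpt contrasts with interdependent layers.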

Do Facebook Users Really Care About Online Privacy?

Since 2014, Facebook has had a platform policy that clearly states what developers of third-party apps can and cannot do. With regard to data, third-party apps have to explain in their privacy policy what data they are collecting and how they plan to use it. These third-party apps must also delete any data received from Facebook. Facebook also now moderates third-party apps. Apps go through a review process in which they must justify why the information they request is necessary for the app. Facebook characterizes "detailed information" as anything other than a user's friends, public profile, and email. Approval is granted only if apps can show that the information they request will be directly used. But Facebook's updated platform policy only came into place a year after the "Cambridge University researcher named Aleksandr Kogan had created a personality quiz app, which was installed by around 300,000 people who shared their data as well as some of their friends' data," as revealed by Zuckerberg in the public post.

Wide area Ethernet can fuel digital network transformation

Enter wide area Ethernet (WAE) services. WAE is a technology that has been around a long time but never gained the same level of adoption as other network services such as MPLS or consumer-type broadband services. In some ways Ethernet has always been a solution looking for a problem as its attributes didn't align cleanly with the challenges most businesses faced. Indeed, the network was considered by many to be a commodity -- the basic plumbing if you will -- where the information being transported was best-effort in nature. Because of this, network managers and procurement officers just went with what they knew, even though it was often considerably more expensive. DX is the problem that Ethernet and WAE have been waiting for. WAE directly addresses the business problems faced by digital organizations. ... Data continues to grow at exponential rates; 90% of all data that exists today, in fact, has been created in the past two years, according to ZK Research. IoT, video, mobile services and other data will only continue to add to the glut.

Machine learning is proving so useful that it's tempting to assume it can solve every problem and applies to every situation. Like any other tool, machine learning is useful in particular areas, especially for problems you’ve always had but knew you could never hire enough people to tackle, or for problems with a clear goal but no obvious method for achieving it. Still, every organization is likely to take advantage of machine learning in one way or another, as 42 percent of executives recently told Accenture they expect AI will be behind all their new innovations by 2021. But you’ll get better results if you look beyond the hype and avoid these common myths by understanding what machine learning can and can’t deliver. ... Think of it as anything that makes machines seem smart. None of these are the kind of general “artificial intelligence” that some people fear could compete with or even attack humanity. Beware the buzzwords and be precise.

As organizations matured, they could use the cloud control plane tools to create NAC rules. While the interface required training, the concepts were similar: traffic from one set of hosts was allowed or disallowed. However, the cloud security control plane does represent one of the first challenges in hybrid IT security: a consistent operations control plane. As hybrid IT services became more complex, security professionals required more granular controls between the public cloud and private infrastructure. Take the web and application tiers in a three-tier application as an example. Merely creating a firewall rule that allows traffic from the web tier to the application tier proved complex. Early private data center firewalls lacked the context of ephemeral cloud security objects. If the web tier leveraged elastic compute, the public cloud administrator had to ensure that auto-scaled web servers were all created in the same network scope for the static firewall to properly filter traffic.
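
The constraint described above can be made concrete with a small sketch. It models a static firewall rule as a fixed network range and is not taken from any vendor's product; the `10.0.1.0/24` scope and the function name `static_rule_allows` are assumptions made for illustration only.

```python
import ipaddress

# Hypothetical network scope that the static firewall rule treats as "web tier".
WEB_TIER_SCOPE = ipaddress.ip_network("10.0.1.0/24")


def static_rule_allows(source_ip: str) -> bool:
    """Allow traffic to the application tier only from the fixed web-tier range."""
    return ipaddress.ip_address(source_ip) in WEB_TIER_SCOPE
```

An auto-scaled web server launched at `10.0.1.37` is allowed, while an otherwise identical instance that lands at `10.0.2.5` is dropped, because the rule knows only the address range and has no notion of which instances belong to the web tier.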

What would a regulated-IoT world look like?

Perhaps the most useful contrast to the U.S.’s lack of regulatory attention to IoT security issues is Europe, where the General Data Protection Regulation has provoked howls of outrage from the tech industry, but won praise from privacy rights advocates. GDPR, in essence, places the burden on companies to state clearly and up-front what types of user data will be gathered, and precisely what it will be used for. It also gives users the right to see data that has been collected about them, and to correct inaccuracies. It’s not wildly dissimilar to the most stringent data protection law currently on the books in the U.S. – the Health Insurance Portability and Accountability Act, better known as HIPAA. According to Sadeh, a more broad-based privacy protection law in the U.S., designed to address the threats posed by IoT and other technologies that have badly outstripped existing regulations, could easily resemble HIPAA with greater scope.

Google Is Working on Its Own Blockchain-Related Technology

The technology presents challenges and opportunities for Google. Distributed networks of computers that run digital ledgers can eliminate risks that come with information held centrally by a single company. While Google’s security is strong, it’s one of the largest holders of information in the world. The decentralized approach is also beginning to support new online services that compete with Google. Still, the company is an internet pioneer and has long experience embracing new and open web standards. To build its ledger, Google has looked at technology from the Hyperledger consortium, but it could opt for another type that may be easier to scale to run millions of transactions, one of the people familiar with the situation said. "Any time there’s a paradigm shift like this, there’s an opportunity for new giants to emerge -- but also for incumbents to adopt the new approach," said Elad Gil, a startup investor who worked on early mobile projects at Google more than a decade ago.

Quote for the day:

"Do not lose hold of your dreams or aspirations. For if you do, you may still exist but you have ceased to live." -- Thoreau
