4 collaboration security mistakes companies are still making

If organizations don’t provide access to vetted collaboration tools, employees

will likely find their own and use insecure solutions, said Sourya Biswas,

technical director, risk management and governance at security consulting firm

NCC Group. “Therefore, while it’s important for organizations to embrace digital

collaboration, at the same time they should prevent installation and use of

unapproved tools, via mechanisms such as restricted local admin access and

managed browser solutions.” Even when collaboration tools are vetted and

approved, organizations must be cognizant of the different collaboration

platforms that each employee is allowed to access in order to prevent sensitive

data from being exfiltrated and avoid providing new attack vectors for bad

actors, said Michael McCracken, senior director of end user solutions at SHI

International, a reseller of technology products and services. In addition, IT

needs to maintain central control over these tools, said AJ Yawn, partner, risk

assurance advisory at Armanino, an independent accounting and business

consulting firm.

EC Says European Private Data Can Flow to Compliant US Companies

The business community had been waiting for guidance on how data privacy policy

might look in the EU, says Dona Fraser, senior vice president of privacy

initiatives with BBB National Programs, a nonprofit that oversees national,

industry self-regulation programs. With the former EU-US Privacy Shield rendered

invalid in 2020 by the European Court of Justice, new policy was needed. Fraser

says companies wanted to comply and be able to safely conduct business without

worrying about intervention or whether their consumers were being treated

properly, but policy was in limbo. The announcement about the new framework

seems to have restored confidence in the program. “This week,” she says, “we’ve

received an enormous amount of inquiries from current and past participants

saying, ‘What's next, what do we do?’ The eagerness that we’re hearing in the

marketplace is, for us, from a business perspective, it’s great to hear.”

Logistics of the framework and the approval process for businesses still need to

be worked out, Fraser says, but now the door is open for companies that halted

work with data from Europe to reemerge.

CISO perspective on why boards don’t fully grasp cyber attack risks

A CISO needs to understand the knowledge and background of the board members to

be able to translate technical jargon into business language and something

familiar to the target audience. I approach this by relating technical jargon

to everyday situations or business scenarios, something the board can easily

grasp. To be effective at this style of communication, I collaborate with other

business leaders outside of the technology groups to optimize business

alignment. Focusing on the potential business impact of cybersecurity risk also

allows a CISO to frame technical issues in terms of their consequences such as

financial loss or damage to the company’s brand. It is equally important to be

concise and avoid over-embellishing cyber-risks, while still focusing on the

strategic objectives you are asking the board to weigh in on. To bridge the gap

between board members and CISOs and promote the mitigation of cyber-risk, it is

essential that a CISO enhance communication, educate board members about

cybersecurity risks and promote a collaborative approach to decision making.

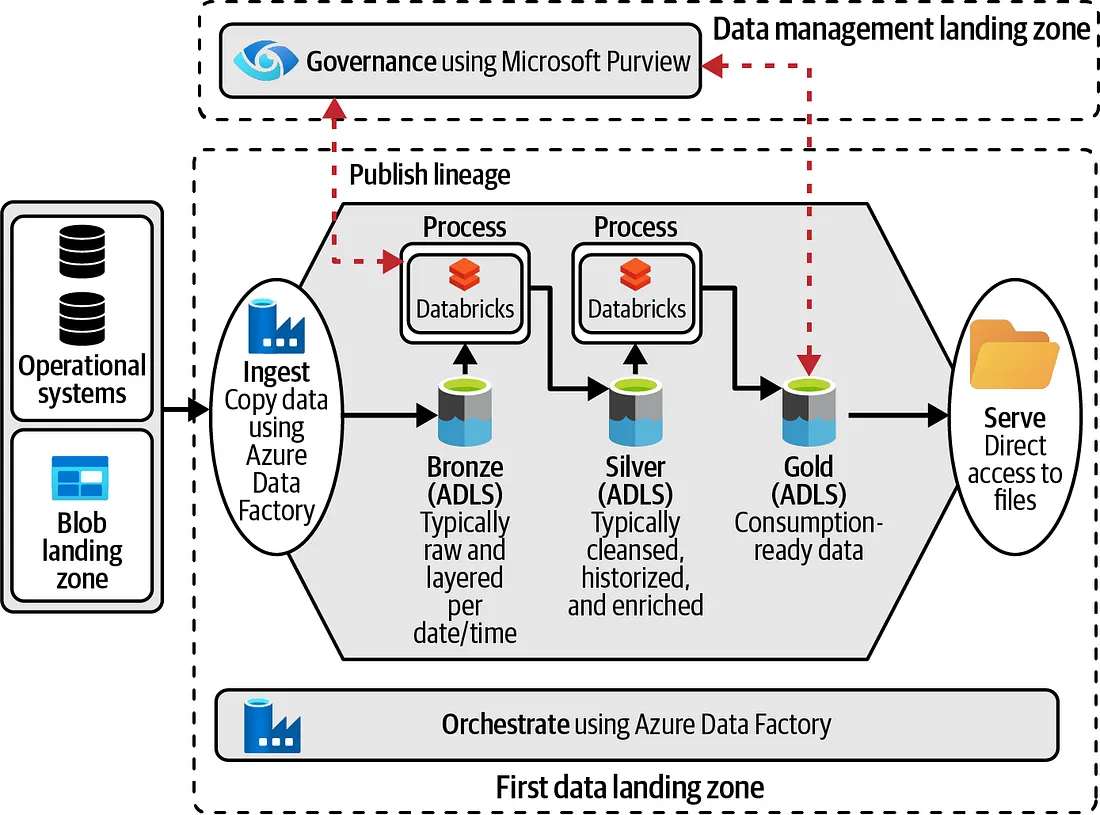

Data Management at Scale

If your company already has a high level of data management maturity or is

decentrally organized, then you can begin with a more decentralized approach to

data management. However, to align your decentralized teams, you will need to

set standards and principles and make technology choices for shared

capabilities. These activities need to happen at a central level and require

superb leaders and good architects. I’ll come back to these points toward the

end of this chapter, when discussing the role of enterprise architects. Besides

the starting point, there are other aspects to take into consideration with

regard to centralization and decentralization. First, you should determine your

goals for the end of your journey. If your intended end state is a decentralized

architecture, but you’ve decided to start centrally, the engineers building the

architecture should be aware of this from the beginning. With the longer-term

vision in mind, engineers can make capabilities more loosely coupled, allowing

for easier decentralization at a later point in time.

Designing High-Performance APIs

By incorporating specific design principles, developers can build APIs that scale effectively and operate efficiently. Here are key considerations for building scalable and efficient APIs:

Stateless Design: Implement a stateless architecture where each API request contains all the necessary information for processing. This design approach eliminates the need for maintaining a session state on the server, allowing for easier scalability and improved performance.

Use Resource-Oriented Design: Embrace a resource-oriented design approach that models API endpoints as resources. This design principle provides a consistent and intuitive structure, enabling efficient data access and manipulation.

Employ Asynchronous Operations: Use asynchronous processing for long-running or computationally intensive tasks. By offloading such operations to background processes or queues, the API can remain responsive, preventing delays and improving overall efficiency.

Horizontal Scaling: Design the API to support horizontal scaling, where additional instances of the API can be deployed to handle increased traffic.

Why SUSE is forking Red Hat Enterprise Linux

To understand what’s happening here, we need to go back a few years. In late

2020, Red Hat made a crucial change to CentOS Linux (the Community Enterprise

Linux Operating System). For the longest time, CentOS was essentially the free

(as in beer) version of Red Hat Enterprise Linux (RHEL), Red Hat’s flagship

distribution. Red Hat acquired CentOS in 2014 after a lot of turmoil in the

CentOS community and gained a permanent majority on the CentOS board. “The

CentOS project was in trouble,” Gunnar Hellekson, Red Hat’s VP and GM for Red

Hat Enterprise Linux, told me. “At the same time, we needed a way to collaborate

with other communities — OpenStack in particular at the time. And we said, well,

here’s an opportunity! We can take the CentOS project. Now we have something

that is freely available and close enough to RHEL to do the development on — and

then that gives us a way to work in the community. And then when customers move

into production, they can go on to Red Hat Enterprise Linux.”

The Disconnected State of Enterprise Risk Management

Compliance, with its myriad frameworks, standards and mandates, remains the

primary means by which we assess and maintain the risk posture of our national,

defense and private sector entities. Compliance is how we gauge our resilience,

determine shortcomings and prioritize mitigation efforts to resolve them.

Compliance, ostensibly, is how we determine where to point our limited security

resources in the form of controls to ensure protection against threats. And yet,

while the threats occur in real time, our compliance efforts remain relegated to

a historical reporting function, capturing our prior state at best or, worse

yet, someone’s subjective opinion of an organization’s security posture. After

all, most compliance programs today are best characterized as “opinion farming

at scale,” built on surveys or manual assessments of controls by human analysts,

who in turn depend on the cooperation and information of countless system

owners. No matter how high you stack those opinions, they don’t turn into

facts.

Downsides to using cloud autoscaling systems

Autoscaling can reduce costs by optimizing resource utilization, but savings are

not guaranteed. I have seen autoscaling systems lead to unexpected cost

increases. For example, rapid and frequent scaling operations can generate

additional charges that are often unexpected. This will undoubtedly happen if

resources are not managed efficiently. I’ve seen unpredictable workload patterns

or sudden spikes in demand trigger autoscaling processes. This results in more

instances or resources provisioned, but also a potentially enormous cloud bill.

The only way to work around this is to carefully analyze and forecast workload

patterns to balance scalability and cost-effectiveness. ... Certain applications

don’t work well with autoscaling systems. Legacy or monolithic applications that

rely on static configurations or have complex interdependencies may not perform

very well with autoscaling systems. Of course, there is a fix, normally

rewriting a portion of the entire application to leverage autoscaling more

efficiently.
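
To make the cost point above more concrete, the following Python sketch estimates what a forecast workload might cost with and without an upper bound on instance count. Everything in it is a simplifying assumption for illustration: the function names, the flat hourly rate, the fixed per-instance capacity, and the sample forecast are hypothetical, and real cloud billing is considerably more detailed.

```python
import math
from typing import Optional, Sequence


def instances_needed(requests_per_hour: float, capacity_per_instance: float) -> int:
    """Instances required to serve the demand, with a floor of one instance."""
    return max(1, math.ceil(requests_per_hour / capacity_per_instance))


def estimate_cost(
    forecast: Sequence[float],
    capacity_per_instance: float,
    hourly_rate: float,
    max_instances: Optional[int] = None,
) -> float:
    """Sum the hourly cost over a demand forecast.

    If max_instances is given, the instance count is capped, which bounds
    the bill at the price of possibly under-serving a spike.
    """
    total = 0.0
    for demand in forecast:
        n = instances_needed(demand, capacity_per_instance)
        if max_instances is not None:
            n = min(n, max_instances)
        total += n * hourly_rate
    return total


# Hypothetical hourly forecast (requests per hour) with one sudden spike.
forecast = [100, 120, 900, 110]
uncapped = estimate_cost(forecast, capacity_per_instance=100, hourly_rate=0.5)
capped = estimate_cost(forecast, capacity_per_instance=100, hourly_rate=0.5, max_instances=4)
```

With these made-up numbers, the single spike accounts for most of the uncapped total, while the capped run trades some capacity during the spike for a lower, more predictable bill. Running this kind of estimate against a realistic forecast is one way to approach the balance between scalability and cost-effectiveness described above.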

Defining the CISO Role

CISOs are tasked with the strategic leadership of information security for their

companies. This can entail building a cybersecurity program and overseeing the

teams that execute the policies that underpin that program. The responsibilities

are many and varied. For example, Heins is responsible for incident response,

security engineering and operations, identity and access management, cloud and

application security, and governance, risk, and compliance. Effectively

implementing cybersecurity demands that CISOs spend much of their time engaging

with stakeholders throughout an organization: board members, other executives,

and people in other departments. They also spend part of their time on external

engagement. Meg Anderson, vice president and CISO of investment management and

insurance company Principal Financial Group, notes that she talks with her CISO

peers about emerging threats and best practices. That part of the job can help

CISOs think about how to structure their programs effectively and build a

pipeline of talent for the future.

Security First! Strategies for Building Safer Software

Having security involved in the initial stages of a software development process

always made sense: as with bug fixing, it is faster and cheaper to address

security issues early on. But, particularly in larger enterprises, it was rarely

done in practice. By the same token, individual development teams would tend not

to invest in security if they saw it as the role of a dedicated security team

and thus somebody else’s problem. This pushed security to the right, as one of

the things that happened between development and deploying to production, where

security becomes more difficult and often less effective. It also led to

friction between the development and security teams, since the two groups had

conflicting goals: Developers were under pressure to ship more features more

quickly, and saw security as a gatekeeper, slowing down or even halting

development to allow time to investigate issues. At its most extreme, developers

felt, security’s ideal situation would be that nothing would be deployed to

production at all — after all, if nothing is running, then nothing can get

hacked.

Quote for the day:

"One must be convinced to convince, to

have enthusiasm to stimulate the others." -- Stefan Zweig
