Data-driven policing strategies bring new look to public safety

First, there's the enhanced decision-making that becomes possible when more information comes in from multiple sources, with Next Gen 911 enabling new data sources such as a crash notification from a vehicle. Today, you get a phone call 15 minutes after an accident happens. With telematics integration, you get a notification from the vehicle that reports not only that there has been an accident, but also the location, whether the vehicle rolled over, and how many people are in it. That is a tremendous amount of information available to the call-taker and first responder, and it can result in lives saved. Analytics can also power new insights and improvements in how centers handle responses. Being able to reconstruct and analyze an incident, and to identify opportunities for improvement in how it was handled, is going to be enhanced by this growth in information coming into the center.

Putting artificial intelligence (AI) to work

While everything has the potential to be improved by AI, two notable areas are marketing, which for a long time has been the leading adopter of AI techniques, and the automotive industry, where there is a realization that self-driving vehicles are coming and will change the industry. Other industries are earlier in their AI journeys but are already seeing an impact. For example, EY has helped develop a chatbot for a national blood bank, helping to reach younger donors through a user experience combining AI technologies, social media and human curation. EY has also assisted a global bank to use Natural Language Processing technology to automate voice and text analytics for its complaints and compliance processes. ... The biggest risk is non-adoption. Every challenge in the world, and in business in particular, is an opportunity for AI. Adopting AI will require patience and a willingness to learn, and will be complex and lengthy, so firms need to start now. Many early projects will have a low return on investment (ROI) and a limited impact

What is agile methodology? Modern software development explained

Because software was developed based on the technical architecture, lower-level artifacts were developed first and dependent artifacts afterward. Tasks were assigned by skill, and it was common for database engineers to construct the tables and other database artifacts first, followed by the application developers coding the functionality and business logic, and then finally the user interface was overlaid. It took months before anyone saw the application working, and by then the stakeholders were getting antsy and were often smarter about what they really wanted. No wonder changes were so expensive! Not everything that you put in front of users worked as expected. Sometimes, users wouldn’t use a feature at all, so that feature was a wasted investment. Other times, a capability was widely successful but required reengineering to support the scalability and performance required. In the waterfall world, you only learned these things after the software was deployed, after all that effort and expense.

We Need Computers with Empathy

Using computer vision, speech analysis, and deep learning, we classify facial and vocal expressions of emotion. Quite a few open challenges remain—how do you train such multi-modal systems? And how do you collect data for less frequent emotions, like pride or inspiration? Nonetheless, the field is progressing so fast that I expect the technologies that surround us to become emotion-aware in the next five years. They will read and respond to human cognitive and emotional states, just the way humans do. Emotion AI will be ingrained in the technologies we use every day, running in the background, making our tech interactions more personalized, relevant, authentic, and interactive. It’s hard to remember now what it was like before we had touch interfaces and speech recognition. Eventually we’ll feel the same way about our emotion-aware devices.

Blockchain-Based CVs Could Change Employment Forever

APPII’s platform allows candidates to create Intelligent Profiles, recording details of professional achievements or educational certifications on the distributed ledger, where they can be verified and then permanently recorded. It then allows organizations such as businesses or educational institutions to verify the “assertions” that candidates make during applications. Once a candidate’s profile records that an assertion has been verified, there is no need for it to be checked again in the future. The platform also uses facial recognition technology to verify the identity of candidates, by asking them to take a picture using the mobile app and comparing it to a photograph on official identification documents such as passports. McKay says, “In high-risk industries, it’s imperative for employers to undertake due diligence – in financial services if you’re providing your money to someone to invest, you don’t want that person to have got their job by falsifying their CV. It’s the same if you go to see a doctor or nurse.”
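The "verify once, record permanently" idea in the excerpt above can be illustrated with a toy hash-chained ledger. This is only a sketch under stated assumptions, not APPII's implementation: the `Ledger` class, its method names, and the sample candidate data are all hypothetical, and a real distributed ledger would add replication, consensus, and signatures.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class Ledger:
    """Hypothetical append-only ledger: each entry stores the previous entry's hash."""
    entries: list = field(default_factory=list)

    def _hash(self, payload: dict, prev_hash: str) -> str:
        # Hash the payload together with the previous hash to chain the entries.
        body = json.dumps(payload, sort_keys=True) + prev_hash
        return hashlib.sha256(body.encode("utf-8")).hexdigest()

    def record_verification(self, candidate: str, assertion: str, verifier: str) -> str:
        """Append a record stating that `verifier` confirmed `assertion`."""
        payload = {"candidate": candidate, "assertion": assertion, "verifier": verifier}
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        entry_hash = self._hash(payload, prev_hash)
        self.entries.append({"payload": payload, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def is_verified(self, candidate: str, assertion: str) -> bool:
        """Later checks only look up the existing record; nothing is re-verified."""
        return any(
            e["payload"]["candidate"] == candidate
            and e["payload"]["assertion"] == assertion
            for e in self.entries
        )

    def chain_is_intact(self) -> bool:
        """Recompute every hash; any edit to an earlier entry breaks the chain."""
        prev_hash = ""
        for e in self.entries:
            if e["prev"] != prev_hash or e["hash"] != self._hash(e["payload"], prev_hash):
                return False
            prev_hash = e["hash"]
        return True
```

In this sketch an institution would call `record_verification` once, and any later employer would call `is_verified` and `chain_is_intact` instead of repeating the background check.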

Here are strategies to achieve 6 common business resolutions for 2018

It's no secret that the tech world can be a high-pressure, stressful place, especially if you're the one in charge. According to Business Insider, well-known tech leaders all have their ways of dealing with stress. Bill Gates and Warren Buffett advise reserving time for hobbies and taking that time, no matter what. Apple's CEO Tim Cook encourages leaders to maintain a focus on positive thinking, no matter how many cynics and negative thinkers surround them, and Facebook COO Sheryl Sandberg turns the phone off at night. ... Career-ending events like 2017's Equifax security breach and the shortage of cybersecurity experts in the job market might be keeping you up at night. Communicate your concerns to upper management and the board if you feel you have security exposures and you lack the skills or resources to address them. This is the time to ask for extra budget if you have to bring in security experts or consultants to fill skills gaps, or to purchase security software or monitoring solutions.

Will Google kill Chrome OS and Android in 2018?

According to this set of predictions, Fuchsia will replace Android Wear, Android and Chrome OS, but run existing apps designed for those platforms. In other words, future Android phones would ship with Fuchsia instead of Android, and Chromebooks would ship with Fuchsia instead of Chrome OS. That would spell the end of Chrome OS and Android as we know them and usher in a single-platform utopia for apps that run across all devices. The fabulous-Fuchsia faction assumes the new OS will solve whatever problems they’re currently having with either Chrome OS or Android. Fuchsia is expected to end Android fragmentation, solve the problem of slow and uneven Android updates, enable developers to build single apps that run natively on both iOS and Android and boost the performance of Chromebooks.

Entering the Next Era of Human Machine Partnerships

Over the next few years, AI will change the way we spend our time acting on data, not just curating it. Businesses will harness AI to do data-driven “thinking tasks” for them, significantly reducing the time they spend scoping, debating, scenario planning and testing every new innovation. It will mercifully release bottlenecks and liberate people to make more decisions and move faster, in the knowledge that great new ideas won’t get stuck in the mire. Some theorists claim AI will replace jobs, but these new technologies may also create new ones, unleashing new opportunities for humans. For example, we’ll see a new type of IT professional focused on AI training and fine-tuning. These practitioners will be responsible for setting the parameters for what should and shouldn’t be classified as good business outcomes, determining the rules for engagement, framing what constitutes ‘reward’ and so on.

AI Will Soon Be So Good At Hacking, We’ll Only Be Able To Stop Them With Other AI

We may soon see machine learning algorithms repurposed towards hiding a piece of malware on a network, using pattern analysis to ensure that the malicious code’s actions blend in with the daily traffic. Say a company manages to get a bit of spyware into a major rival’s network. It can then stay dormant, while the AI analyses data over time to learn crucial details: how the firewall works, what time of day the cyber security teams are active, and so on. The AI can then use this data to find a weak point in the security system, and even create cover for the spyware to siphon and transfer out data on new technology being researched, or major projects being negotiated, or even which employees are unhappy and looking to quit. And that’s just in corporate espionage. There’s no telling how much havoc AI-powered malware can wreak on regular consumer systems across the Web.

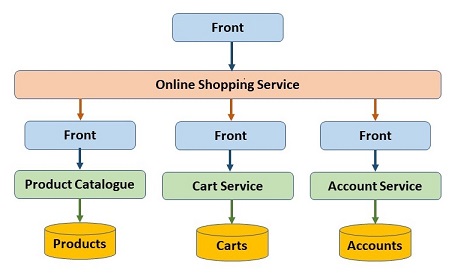

Entity Services is an Antipattern

In a microservice-based architecture, it is important to keep the different services separated. Entity services is a common pattern now applied to microservices, but it is an antipattern that works against separation, Michael Nygard claims in one of a short series of blog posts on how to work with microservices. Nygard, author of Release It! among other things, notes that entity services is a solution to a problem that is commonly rediscovered, and refers both to a microservices architecture e-book from Microsoft and a tutorial from Spring as two of many new examples of usage of this pattern. For Nygard, an antipattern is a pattern that makes things worse. In arguing that entity services really is an antipattern, he uses a large legacy monolithic application as an example. In this application there are multiple instances of the process with all features local and in-process

Quote for the day:

"Success doesn’t come to you just by wanting it—it comes to you by getting in agreement with what it takes to ACHIEVE it." -- @MattManero
