Quote for the day:

"A positive attitude will not solve all your problems. But it will annoy enough people to make it worth the effort" -- Herm Albright

Is AI Killing Sustainability?

This article examines the paradoxical relationship between the rapid growth of artificial intelligence and environmental goals. On the one hand, AI's massive computational needs are driving a surge in energy consumption, with global AI spending projected to reach $2.52 trillion this year. This expansion is fueling an exponential rise in data center power requirements, with data centers potentially consuming as much electricity as 22% of U.S. households by 2028. On the other hand, the author argues that AI also serves as a critical tool for boosting sustainability. By analyzing vast datasets, AI can optimize supply chains, automate waste management, and enhance energy efficiency in buildings by up to 30%. The piece provides six strategic tips for organizations to utilize AI for greenhouse gas reduction, including predictive environmental risk monitoring, accurate emission reporting, and improved renewable energy integration. Despite these benefits, a tension exists between corporate "green" ambitions and financial constraints, often leading to a "lite green" approach where cost-cutting takes priority over true environmental innovation. Ultimately, while AI's infrastructure poses a significant threat to climate targets, its potential to identify high-ROI decarbonization opportunities offers a path toward reconciling technological advancement with ecological preservation, provided that organizations move beyond superficial commitments toward mature, outcome-driven strategies.

PQC roadmap remains hazy as vendors race for early advantage

The transition to post-quantum cryptography (PQC) is evolving from a theoretical concern into an urgent operational risk, prompting major security vendors to race for early market advantages. As mainstream players like Palo Alto Networks, Cisco, and IBM join specialized firms, the focus has shifted toward structured readiness offerings centered on discovery, inventory, and migration planning. A significant hurdle for organizations remains the lack of visibility into cryptographic sprawl across infrastructure, making it difficult to identify vulnerabilities in legacy algorithms like RSA. The urgency is further fueled by the “harvest now, decrypt later” threat model, where adversaries collect encrypted data today for future decryption by capable quantum computers. While NIST has finalized several PQC standards, experts suggest that the expected moment of cryptographic compromise could arrive as early as 2029, making immediate preparation essential. Despite the marketing push, some observers question whether these PQC offerings represent a new category of security tools or simply a necessary enforcement of long-overdue security hygiene, such as comprehensive asset mapping and certificate tracking. Ultimately, the migration to quantum-safe environments requires a phased approach and a commitment to crypto-agility, ensuring that enterprises can adapt to evolving cryptographic standards before legacy systems become insurmountable liabilities in a post-quantum world.

Tech Debt “For Later” Crashed Production 5 Years Later

Devrim Ozcay’s article critiques the pervasive hype surrounding AI in DevOps, specifically addressing the gap between marketing promises and production realities. The author argues that while "autonomous remediation" and "predictive incident detection" are often touted as revolutionary, they frequently fail in complex, high-stakes environments. These tools often rely on simple logic or pattern matching, and general-purpose models like ChatGPT can be dangerous during active incidents by providing confident but entirely incorrect root cause hypotheses. Instead of relying on AI for critical judgment, the article suggests leveraging it for "assembly" tasks that alleviate the mechanical burden on engineers. This includes filtering log noise, reconstructing incident timelines from disparate sources, and drafting initial postmortem reports. By automating these time-consuming, repetitive processes, teams can reduce the duration of post-incident documentation from hours to minutes. Ultimately, the article advocates for a balanced approach where AI handles the data organization while human engineers retain sole responsibility for interpretation and decision-making. This shift allows practitioners to focus on high-leverage problem-solving rather than tedious transcription, ensuring that incident response remains both efficient and reliable without succumbing to the unrealistic expectations often presented at tech conferences.

What Is Sampling in LLMs and How Does It Relate to Ethics?
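
To make the sampling controls covered in this item concrete, here is a minimal sketch of how temperature, Top-K, and Top-P reshape a next-token distribution before a token is drawn. The four-token vocabulary, the logits, and the helper names (`softmax`, `top_k_filter`, `top_p_filter`, `sample`) are all hypothetical and chosen for illustration; they are not taken from the article or from any particular model's API.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn logits into probabilities; temperature < 1 sharpens, > 1 flattens."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_filter(probs, k):
    """Keep the k most likely tokens, zero out the rest, then renormalize."""
    keep = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    kept = [p if i in keep else 0.0 for i, p in enumerate(probs)]
    total = sum(kept)
    return [p / total for p in kept]


def top_p_filter(probs, p):
    """Keep the smallest set of top tokens whose cumulative mass reaches p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    keep, cumulative = set(), 0.0
    for i in order:
        keep.add(i)
        cumulative += probs[i]
        if cumulative >= p:
            break
    kept = [q if i in keep else 0.0 for i, q in enumerate(probs)]
    total = sum(kept)
    return [q / total for q in kept]


def sample(probs, rng):
    """Draw one token index according to the (filtered) probabilities."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


# Hypothetical toy vocabulary and logits, for illustration only.
vocab = ["the", "a", "cat", "zebra"]
logits = [2.0, 1.0, 0.5, -1.0]

cool = softmax(logits, temperature=0.5)  # more deterministic
warm = softmax(logits, temperature=2.0)  # more random

rng = random.Random(0)  # fixed seed so the sketch is repeatable
filtered = top_p_filter(top_k_filter(warm, k=3), p=0.9)
token = vocab[sample(filtered, rng)]
print(token)
```

Lowering the temperature concentrates probability on the top token, while Top-K and Top-P remove the unlikely tail entirely, which is the trade-off between variety and reliability that the summary below describes.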

This article explores the technical mechanisms behind how AI models choose their words and the subsequent moral responsibilities of developers. Sampling is the process by which an LLM selects the next token from a probability distribution. Techniques such as temperature, Top-K, and Top-P (nucleus sampling) are used to balance creativity with accuracy. Higher temperature settings introduce more randomness, which can foster innovation but also increases the likelihood of "hallucinations" or the generation of biased and harmful content. Conversely, lower settings make the model more deterministic and reliable for factual tasks but can lead to repetitive and uninspired responses. From an ethical standpoint, the choice of sampling strategy is never neutral. It requires a delicate balance between providing a diverse range of perspectives and ensuring the safety and truthfulness of the output. The author emphasizes that organizations must transparently define their sampling parameters to mitigate risks like misinformation. Ultimately, ethical AI development hinges on understanding these technical levers, as they directly influence how a model perceives and interacts with human values, necessitating a cautious approach to model tuning that prioritizes user safety and informational integrity.

AI Won't Fix Cybersecurity, But It Could Rebalance It

The article explores the nuanced role of artificial intelligence in cybersecurity, debunking the myth that it serves as a total panacea while highlighting its potential to rebalance the long-standing asymmetric advantage held by attackers. Traditionally, cybercriminals have enjoyed a lower barrier to entry and a higher success rate because defenders must be perfect across every surface, whereas attackers only need to succeed once. With the advent of generative AI, malicious actors are leveraging the technology to craft sophisticated phishing campaigns, automate vulnerability discovery, and democratize complex malware creation. Conversely, AI empowers defenders by automating routine monitoring, identifying anomalous patterns at machine speed, and bridging the significant talent gap within the industry. This technological shift creates a perpetual arms race where AI functions as a force multiplier for both sides. Rather than eliminating threats, AI recalibrates the battlefield, allowing security teams to process vast datasets and respond to incidents with unprecedented agility. However, the human element remains indispensable; strategic oversight and critical thinking are essential to guide AI tools. Ultimately, while AI will not "fix" the inherent vulnerabilities of digital infrastructure, it offers a vital mechanism to shift the strategic advantage back toward those safeguarding the digital frontier.

AI Is Not Here to Replace People, It’s Here to Replace Waiting

In this interview, Aliaksei Tulia, the Chief Technical Officer at CoinsPaid, argues that the true purpose of artificial intelligence in the financial sector is not to displace human judgment but to eliminate the friction of waiting. Tulia emphasizes that AI acts as a powerful catalyst for efficiency and speed within the digital payment ecosystem by automating repetitive, high-volume tasks that traditionally create operational bottlenecks. By handling routine duties such as document summarization, log scanning, and boilerplate coding, AI allows for a significant compression of cycle times while maintaining necessary human oversight. The article highlights how CoinsPaid integrates these intelligent tools to enhance consistency and visibility, ensuring that the platform remains robust without sacrificing control. Furthermore, the discussion explores the essential division of labor where technology manages data-heavy routine processes, freeing professionals to focus on high-level strategic decisions, complex problem-solving, and improving the overall customer experience. This pragmatic approach represents a shift where AI handles the disciplined "first pass," allowing people to dedicate their expertise to tasks requiring creativity and accountability. Ultimately, Tulia envisions a future where AI-driven automation defines industry standards, proving that the technology’s primary value lies in its ability to streamline operations for a global audience.

Dynamic UI for dynamic AI: Inside the emerging A2UI model

The article "Dynamic UI for Dynamic AI: Inside the Emerging A2UI Model" explores the transformative shift from traditional graphical user interfaces to Agent-to-User Interfaces. As AI agents become increasingly autonomous, the standard chat-based "command line" is no longer sufficient for managing complex workflows. A2UI represents a fundamental paradigm shift where the interface is dynamically generated by the AI to match the specific context and requirements of a task. Unlike static SaaS platforms with fixed menus, A2UI allows agents to create ephemeral, highly functional components (such as interactive charts, data tables, or specialized dashboards) on demand. This movement is powered by advancements like Vercel’s AI SDK and features like Anthropic’s Artifacts, which allow for real-time rendering of code and UI. The goal is to bridge the gap between human intent and machine execution by providing a rich, interactive medium that transcends simple text responses. By embracing generative UI, developers are enabling a more fluid collaboration where the software adapts to the user, rather than the user being forced to navigate rigid software structures. This evolution signals the end of "one-size-fits-all" application design, ushering in a future where every interaction produces a bespoke, temporary interface tailored specifically to the immediate problem.

AI Use at Work Is Causing “Brain Fry,” Researchers Find, Especially Among High Performers

The Futurism article "AI Use at Work Is Causing 'Brain Fry'" highlights a concerning trend where artificial intelligence, despite its promises of productivity, is significantly damaging employee mental health. A study of 1,500 workers conducted by Boston Consulting Group and the University of California, Riverside, introduced the term "AI brain fry" to describe the cognitive exhaustion resulting from excessive interaction with AI tools. Approximately 14 percent of employees, predominantly high performers in fields like software development and finance, reported symptoms such as mental "static," brain fog, and headaches. This fatigue is largely driven by information overload, rapid task-switching, and the constant, draining necessity of overseeing multiple AI agents. Rather than lightening the load, these tools often force users to work harder to manage the technology than to solve actual problems. The consequences are severe for both individuals and organizations; the research found a 33 percent increase in decision fatigue and a higher likelihood of employees quitting their jobs. Ultimately, the piece argues that while AI is marketed as a way to supercharge efficiency, it often acts as a "burnout machine" that compromises cognitive capacity and leads to costly errors or paralysis in professional environments.

Submarine cables move to the center of critical infrastructure security debate

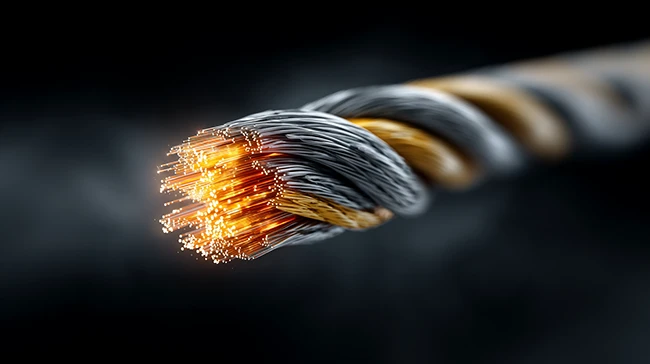

The article examines the escalating strategic significance of submarine cables, which facilitate the vast majority of international data traffic but are increasingly vulnerable to geopolitical tensions and physical threats. A new sector report highlights how high-profile incidents, such as the 2024 Baltic Sea cable severing, have transitioned these underwater assets from ignored infrastructure into critical security priorities. Beyond intentional sabotage or "grey-zone" activities, the industry faces significant resilience challenges, including an annual average of two hundred cable faults primarily caused by commercial fishing and anchoring. This vulnerability is exacerbated by a critical shortage of specialized repair vessels and experienced personnel, complicating rapid incident response. Furthermore, the shift in ownership dynamics, where cloud hyperscalers are now primary investors, creates commercial friction with traditional operators while reshaping infrastructure architecture. Technological advancements, particularly AI-driven distributed acoustic sensing, are transforming cables into active monitoring tools, yet technical solutions alone remain insufficient. The report concludes that long-term security depends on improved international coordination and unified governance frameworks between governments and private entities. Ultimately, protecting these vital conduits requires a holistic approach that integrates technical controls, organizational readiness, and cross-border cooperation to match the scale of modern digital dependency and evolving global risks.
