Top 10 Features to Look for in Automated Machine Learning

Following best practices when building machine learning models is a time-consuming yet important process. There are many things to do, ranging from preparing the data, selecting and training algorithms, and understanding how the algorithm is making decisions, all the way down to deploying models to production. I like to think of the machine learning design and maintenance process as comprising ten steps (see the diagram above). But, if I want to save time, increase accuracy, and reduce risk, I don’t manually go through the entire machine learning process in order to build my machine learning models. Instead, I turn to automated machine learning, using clever software that knows how to automate the repetitive and mundane steps, freeing me up to do what humans are best at: communication, applying common sense, and being creative. And, to get the most out of automated machine learning, I want it to automate each and every one of the 10 steps. So, here’s my guide to what to look for in an automated machine learning system. ... Look for an automated machine learning platform that can automatically engineer new features from existing numeric, categorical, and text features. You will want a system that knows which algorithms benefit from extra feature engineering and which don’t, and only generates features that make sense given the data characteristics.

What is an enterprise wide agile transformation and why CIOs should lead it

Agile practices change the nature of how teams define their customers, align on implementation strategies, debate priorities and commit to getting work done. Agile teams with a history of consistent delivery and a demonstrated strong partnership with their customers can change the culture. Instead of top-down priorities and timelines, teams align on strategic goals and produce business outcomes with incremental deliveries. CEOs are looking for smarter, faster and more innovative organizations that can propel growth, enable winning customer experiences, compete with analytics and drive efficiencies with automation. ... They want more efficient and higher quality operations, smarter sales teams closing more strategic deals and financial groups reporting and forecasting in near real time. And CEOs don’t know how to get there. They are increasingly relying on their leaders and staff to pave the journey for them. CIOs who have excelled at delivering results and culture change with agile practices in IT have the opportunity to extend the practice, culture and mindset as an enterprise-wide way of operating.

Why Enterprise Blockchain Projects Fail

For one, there is a general lack of vision and understanding that plagues many blockchain projects. Blockchain, like other technologies, does not live in a vacuum devoid of any significant linkage to organizational and societal norms, design, dysfunction and purpose. Adding in years of pent-up inertia and entrenched behaviors present in organizations and markets means that just because something new can evoke positive change does not mean it will. For this, a clear organizational vision and deep technical and strategic understanding of where blockchain is fit for purpose can go a long way. Unfortunately, many project leaders are hardly conversant in blockchain, let alone the array of other emerging technologies they must intersect with in order to extract maximum value and autonomy. Perhaps the biggest point of failure is the general lack of cyber hygiene present in many early blockchain projects. The second major point of failure, and perhaps the hardest to overcome, is blockchain’s social, organizational and market coordination issue.

Redis-Based Tomcat Session Management

Redis is an open-source, in-memory data store. In fact, it is the most popular in-memory database that is currently available. Redisson is a Java client for Redis. It is intended to make your job easier and help you develop distributed Java applications more efficiently. Redisson offers distributed Java objects and services backed by Redis. Redisson's Tomcat Session Manager allows you to store sessions of Apache Tomcat in Redis. It lets you distribute requests across a cluster of Tomcat servers. This is all done with non-sticky session management backed by Redis. Alternative options might serialize the whole session. However, with this particular Redis Tomcat Manager, each session attribute is written into Redis during each invocation. Thanks to this, Redisson Session Manager beats out other Redis-based managers in storage efficiency and optimized writes.
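To make the difference between serializing a whole session and writing individual attributes concrete, here is a minimal Python sketch. It is not Redisson's implementation and it does not talk to a real Redis server: `FakeRedis`, `save_whole_session` and `save_attribute` are hypothetical names, and an in-memory dictionary stands in for Redis so the example runs on its own.

```python
import json


class FakeRedis:
    """In-memory stand-in for Redis (assumption: no real server is involved)."""

    def __init__(self):
        self.data = {}
        self.bytes_written = 0

    def set(self, key, value):
        # Store one string value under a key, like a plain Redis string.
        self.data[key] = value
        self.bytes_written += len(value.encode("utf-8"))

    def hset(self, key, field, value):
        # Store one field inside a hash, leaving the other fields untouched.
        self.data.setdefault(key, {})[field] = value
        self.bytes_written += len(value.encode("utf-8"))


def save_whole_session(store, session_id, session):
    """Whole-session strategy: re-serialize every attribute on each save."""
    store.set(session_id, json.dumps(session))


def save_attribute(store, session_id, name, value):
    """Per-attribute strategy: write only the attribute that changed."""
    store.hset(session_id, name, json.dumps(value))


session = {"user": "alice", "cart": ["book"] * 50, "theme": "dark"}

whole = FakeRedis()
per_attr = FakeRedis()

# Initial save: both strategies have to write every attribute once.
save_whole_session(whole, "s1", session)
for name, value in session.items():
    save_attribute(per_attr, "s1", name, value)

whole_before = whole.bytes_written
attr_before = per_attr.bytes_written

# A single small attribute changes during a request.
session["theme"] = "light"
save_whole_session(whole, "s1", session)
save_attribute(per_attr, "s1", "theme", session["theme"])

whole_update_bytes = whole.bytes_written - whole_before
attr_update_bytes = per_attr.bytes_written - attr_before
print(whole_update_bytes, attr_update_bytes)
```

In this toy setup the per-attribute update writes only the new `theme` value, while the whole-session update rewrites the large `cart` list as well. That is the kind of write saving the excerpt attributes to per-attribute storage.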

Research indicates the only defense against killer AI is not developing it

If you’re thinking killer robots duking it out in our cities while civilians run screaming for shelter, you’re not wrong – but robots as a proxy for soldiers isn’t humanity’s biggest concern when it comes to AI warfare. This paper discusses what happens after we reach the point at which it becomes obvious humans are holding machines back in warfare. According to the researchers, the problem isn’t one we can frame as good and evil. Sure, it’s easy to say we shouldn’t allow robots to murder humans with autonomy, but that’s not how the decision-making process of the future is going to work. The researchers describe it as a slippery slope: If AI systems are effective, pressure to increase the level of assistance to the warfighter would be inevitable. Continued success would mean gradually pushing the human out of the loop, first to a supervisory role and then finally to the role of a “killswitch operator” monitoring an always-on LAWS.

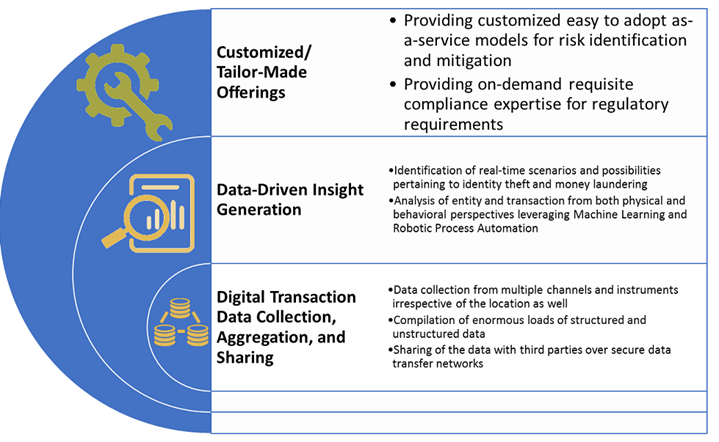

RegTech solutions can mitigate risk while aiding regulatory compliance

While regulations continuously evolve, the costs of non-compliance are skyrocketing. Therefore, to adhere to stringent mandates and norms, banks and PSPs are turning to advanced technological capabilities for support. The result? Regulatory Technology (RegTech) tools, solutions, and firms are gaining mainstream popularity among financial institutions looking to redefine and streamline compliance processes across jurisdictions, lines of business and client bases. RegTech digital solutions collect intelligence through data analytics, predictive modeling, and statistical tools. This functionality is particularly important when it comes to proactively addressing multiple regulations versus taking a one-at-a-time approach that may result in several remediation measures. It is no surprise that firms’ RegTech spending is expected to average 48% annual growth over the next five years, expanding from $10.6 billion in 2017 to $76.3 billion in 2022. RegTech drastically improves the efficiency of compliance-related processes, data aggregation, data analysis, and tailored need-based offerings.
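As a quick sanity check on the spending figures quoted above, the implied compound annual growth rate can be computed from the two endpoints. This is a minimal sketch that assumes growth is compounded once a year over the five years from 2017 to 2022; the function name is illustrative only.

```python
def compound_annual_growth_rate(start_value, end_value, years):
    """Return the constant yearly growth rate that turns start_value into end_value."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the excerpt, in billions of US dollars.
rate = compound_annual_growth_rate(10.6, 76.3, years=5)
print(f"Implied annual growth: {rate:.1%}")
```

Under that assumption the result is roughly 48%, which is consistent with the average growth figure in the excerpt.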

The Best Reason for Your City to Ban Facial Recognition

We’re not prepared as a society to ensure that facial recognition will be used responsibly and without discriminatory effects. We’re not prepared as individuals for a world in which we can be automatically tracked and identified wherever we go without our knowledge or consent. Even if we were ready, the technology itself isn’t: Experts both inside and outside the technology industry acknowledge that the artificial intelligence underlying facial recognition systems still struggles with accuracy, particularly when it comes to identifying the faces of people of color — which is to say, the people who are most likely to be affected by it. In a test last year by the ACLU, Amazon’s facial recognition software falsely matched the faces of 28 members of U.S. Congress to the mug shots of people who had been arrested. The mismatches disproportionately affected representatives of color. Perhaps most important, our governments and law enforcement agencies are not prepared to guard against abuses of the technology or the data it produces, to ensure it is kept confidential, or to constrain its use to the appropriate situations.

Building Digital-Ready Culture in Traditional Organizations

Recognizing the immense scalability of digital solutions, digital leaders typically focus on creating impact, assuming that profit will follow. At their best, these companies revolutionize how people and organizations interact, reinvent industries, and break the power of entrenched gatekeepers. The other three values support that mission. Speed helps companies stay ahead of competitors and keep up with rapidly changing customer desires. Openness encourages employees to challenge the status quo and work with anyone who can help them achieve their goals quickly. Autonomy gives people the freedom to do what’s right for the company and its customers without waiting for formal approval at every turn. Together, these values can foster an engaged, empowered workforce where employees feel a personal responsibility to constantly change the company — and often the world. The values of high-performing digital companies frame their essential practices: rapid experimentation, self-organization, data-driven decision-making, and an obsession with customers and results.

Why data governance matters – and who should own it?

CIOs say that all who own, manage and/or rely on data to make decisions should be involved in data governance. A financial services CIO said, “to use Gramm-Leach-Bliley Act (GLBA) terms, this includes data managers and regulation monitors. They must be at the table. In the end, this could include someone from just about every business area.” For many organizations, the legal department is a key stakeholder to align with and ensure the organization is meeting necessary governance requirements. Data can pose legal challenges. The longer you keep data, the more data can be used in e-discovery. While the business may want to keep data forever, there is a risk in not defining and enforcing data retention as part of a data governance program. Data governance stakeholders, for this reason, often include leaders from operations, sales, marketing, HR, and accounting/finance. The C-suite leaders need to play a role. Where they exist, information governance and records management functions need to be included.

Data Pipeline Automation: The Next Step Forward in DataOps

The emerging DataOps field borrows many concepts from DevOps techniques used in general software engineering, including a focus on agility, leanness, and continuous delivery, Eckerson Group writes. The core difference is that it’s implemented in a data analytics environment that touches many data sources, data warehouses, and analytic methodologies. “As data and analytics pipelines become more complex and development teams grow in size,” Eckerson and Ereth write, “organizations need to apply standard processes to govern the flow of data from one step of the data lifecycle to the next – from data ingestion and transformation to analysis and reporting. The goal is to increase agility and cycle times, while reducing data defects, giving developers and business users greater confidence in data analytics output.” There are a handful of vendors delivering shrink-wrapped solutions in this area, and not (yet) many open source tools. While DataOps is growing in recognition and need, the tools that support automated data pipeline flows are relatively new, Eckerson Group writes.

Quote for the day:

"Leadership is not a solo sport; if you lead alone, you are not leading." -- D.A. Blankinship
